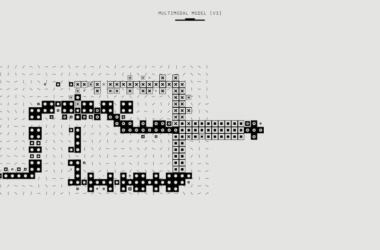

NVIDIA on Monday launched Nemotron 3 Nano Omni, an open multimodal model that unifies vision, audio, and language processing into a single system, delivering up to 9x efficiency improvements over traditional multi-model agent architectures. According to NVIDIA’s blog post, the model tops six leaderboards for complex document intelligence, video understanding, and audio processing while maintaining production-grade accuracy.

The launch comes as enterprises grapple with mounting infrastructure costs and efficiency challenges in AI deployment. Cast AI’s 2026 State of Kubernetes Optimization Report found that most companies run GPU fleets at roughly 5% utilization, six times worse than baseline expectations, while cloud providers such as AWS raised reserved H200 GPU prices by 15% in January 2026.
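To see why utilization matters as much as the sticker price, the arithmetic can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a figure from the Cast AI report: the hourly rate is a made-up placeholder, and the roughly 30% baseline is only inferred from the "six times worse" comparison above.

```python
def cost_per_useful_gpu_hour(hourly_rate: float, utilization: float) -> float:
    """Price paid for one hour of actual GPU work, given the share of paid time in use."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in the range (0, 1]")
    return hourly_rate / utilization


# Hypothetical reserved price per GPU-hour (placeholder, not a real AWS rate).
HOURLY_RATE = 10.0

# Utilization figure cited in the article.
REPORTED_UTILIZATION = 0.05

# Assumption: "six times worse than baseline" implies a baseline near 30%.
IMPLIED_BASELINE = REPORTED_UTILIZATION * 6

reported_cost = cost_per_useful_gpu_hour(HOURLY_RATE, REPORTED_UTILIZATION)
baseline_cost = cost_per_useful_gpu_hour(HOURLY_RATE, IMPLIED_BASELINE)

print(f"Effective cost at reported utilization: ${reported_cost:.2f} per useful hour")
print(f"Effective cost at implied baseline:     ${baseline_cost:.2f} per useful hour")
print(f"Ratio: {reported_cost / baseline_cost:.1f}x")
```

Whatever rate is plugged in, the ratio between the two results stays the same, which is why a fleet idling at 5% pays several times more per unit of real work than one running at the implied baseline.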

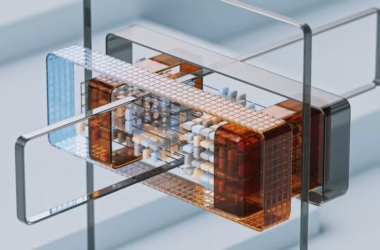

Unified Architecture Eliminates Multi-Model Overhead

Traditional AI agent systems require separate models for vision, speech, and language processing, creating bottlenecks as data passes between models. Nemotron 3 Nano Omni consolidates these capabilities into a single architecture, processing text, images, audio, video, documents, charts, and graphical interfaces through one unified system.

The model accepts multimodal input and outputs text, enabling agents to process complex scenarios without the latency and context loss associated with model-switching. This architectural approach directly addresses the cost and complexity problems that are projected to lead to the abandonment of over 40% of agentic AI projects by 2027.

NVIDIA positions the model as production-ready for enterprises requiring full deployment flexibility and control over their AI infrastructure.

Open Source Competition Intensifies Efficiency Focus

The Nemotron 3 Nano Omni release follows Xiaomi’s launch of the MiMo-V2.5 and MiMo-V2.5-Pro models under the MIT license. According to VentureBeat, Xiaomi’s Pro model leads the open-source field with a 63.8% score on ClawEval benchmarks while using fewer tokens than competing models.

This efficiency focus reflects broader industry pressure around inference costs. Reasoning models like GPT-5.5 and the o1 series achieve higher performance by spending additional compute resources on hidden reasoning tokens during generation — tokens that never appear in final responses but represent significant billable compute on enterprise invoices.

According to Towards Data Science, this “inference scaling” or “test-time compute” approach transforms model selection from a simple toggle into a high-stakes operational decision involving cost-quality-latency tradeoffs.
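A rough sketch of how hidden reasoning tokens show up on an invoice is given below. It assumes reasoning tokens are billed at the same rate as visible output tokens, and every price and token count is a hypothetical placeholder rather than a published rate for any model named here. The `Usage` class and `request_cost` function are illustrative names, not part of any vendor SDK.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Usage:
    """Token counts for one request (hypothetical structure, not a vendor API)."""
    input_tokens: int
    visible_output_tokens: int
    reasoning_tokens: int  # generated during inference but never shown to the user


def request_cost(usage: Usage, input_price_per_m: float, output_price_per_m: float) -> float:
    """Dollar cost of one request, with prices given per million tokens.

    Assumption: reasoning tokens are charged at the output-token rate.
    """
    billable_output = usage.visible_output_tokens + usage.reasoning_tokens
    total = usage.input_tokens * input_price_per_m + billable_output * output_price_per_m
    return total / 1_000_000


# Placeholder prices in dollars per million tokens.
INPUT_PRICE = 2.0
OUTPUT_PRICE = 10.0

with_reasoning = Usage(input_tokens=1_000, visible_output_tokens=500, reasoning_tokens=4_000)
without_reasoning = Usage(input_tokens=1_000, visible_output_tokens=500, reasoning_tokens=0)

cost_with = request_cost(with_reasoning, INPUT_PRICE, OUTPUT_PRICE)
cost_without = request_cost(without_reasoning, INPUT_PRICE, OUTPUT_PRICE)

print(f"Same visible answer, reasoning on:  ${cost_with:.4f}")
print(f"Same visible answer, reasoning off: ${cost_without:.4f}")
```

Both requests return the same 500 visible tokens, yet under these assumptions the one that reasons costs several times more, which is the kind of gap that turns model selection into a budgeting decision.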

Enterprise Infrastructure Challenges Drive Architectural Innovation

The efficiency gains delivered by unified architectures address critical enterprise pain points beyond raw performance metrics. Infrastructure teams face mounting pressure: memory suppliers have pushed HBM3e prices up 20% for 2026, and enterprises struggle with GPU utilization rates far below operational targets.

“Many of the neoclouds are not cloud,” Cast AI co-founder Laurent Gil told VentureBeat. “They are neo-real estate.” Gil’s observation highlights how GPU scarcity creates a cycle where enterprises hoard unused capacity rather than optimize utilization.

Mistral AI’s recent launch of Workflows, a Temporal-powered orchestration engine, reflects similar industry recognition that operational infrastructure — not model capabilities — has become the primary bottleneck for enterprise AI adoption. Mistral’s head of product Elisa Salamanca told VentureBeat that “organizations are struggling to go beyond isolated proofs of concept” due to operational gaps.

Production Deployment Considerations

Nemotron 3 Nano Omni’s open architecture enables enterprises to deploy locally or on virtual private clouds, providing data sovereignty controls that many organizations require for production workloads. The model’s unified processing eliminates the need for complex orchestration between separate vision, audio, and language models.

For organizations implementing agentic workflows, these efficiency improvements feed directly into operational costs. With usage-based billing becoming standard across services such as Microsoft’s GitHub Copilot, fewer tokens consumed means a smaller bill.

What This Means

NVIDIA’s Nemotron 3 Nano Omni represents an architectural shift toward efficiency-first design in enterprise AI systems. The claimed efficiency improvement of up to 9x over multi-model approaches addresses the fundamental cost and complexity challenges that have stalled enterprise AI adoption beyond proof-of-concept stages.

The timing aligns with broader industry pressure points: rising GPU costs, poor utilization rates, and the operational complexity of managing multiple specialized models. By consolidating multimodal capabilities into a single system, NVIDIA provides enterprises with a path to reduce both infrastructure overhead and operational complexity.

The open-source positioning also signals intensifying competition in the efficiency-focused segment, with Xiaomi, Mistral, and NVIDIA all targeting the gap between model capabilities and production deployment requirements. This competition benefits enterprises by providing multiple architectural approaches to the same fundamental challenge: making AI systems that actually work in production environments.

FAQ

What makes Nemotron 3 Nano Omni different from existing multimodal models?

Nemotron 3 Nano Omni processes vision, audio, and language in a single unified architecture rather than requiring separate models for each modality. This eliminates the latency and context loss that occur when data passes between multiple specialized models.

How does the up-to-9x efficiency improvement translate to real cost savings?

The efficiency gain reduces the computational overhead of running multimodal AI agents by consolidating processing that previously required multiple models. In production environments where enterprises pay per token or per compute hour, this directly reduces operational costs while improving response times.

Can enterprises deploy Nemotron 3 Nano Omni on their own infrastructure?

Yes, the model is available as an open release that enterprises can deploy locally or on virtual private clouds. This provides full control over data processing and deployment configurations, addressing data sovereignty requirements for production workloads.