NVIDIA Nemotron 3 Nano Omni Unifies Vision, Audio in Single Model

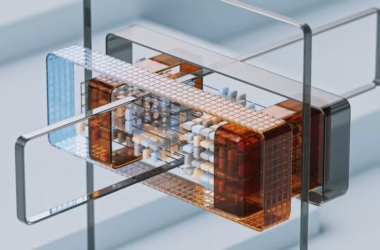

NVIDIA on Monday launched Nemotron 3 Nano Omni, an open multimodal model that processes video, audio, images, and text within a single system rather than requiring separate models for each capability. According to NVIDIA’s developer blog, the model delivers up to 9x efficiency gains over traditional multi-model approaches while topping six leaderboards for document intelligence, video understanding, and audio processing.

The unified architecture eliminates the context loss and latency that occur when AI agents pass data between separate vision, speech, and language models. Nemotron 3 Nano Omni accepts text, images, audio, video, documents, charts, and graphical interfaces as input and generates text responses.

Xiaomi Releases Competing Open Source Models for Agent Tasks

Xiaomi simultaneously released MiMo-V2.5 and MiMo-V2.5-Pro under the MIT License, targeting what the industry calls “agentic claw” tasks where AI systems complete work on users’ behalf. VentureBeat reported that both models rank near the top of efficiency charts for powering systems like OpenClaw and NanoClaw, which handle marketing content creation, email organization, and scheduling through third-party messaging apps.

The Pro model leads open-source options with a 63.8% success rate on ClawEval benchmarks while using fewer tokens than competing models. This efficiency matters as services like Microsoft’s GitHub Copilot shift to usage-based billing that charges per token rather than flat subscription rates.
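To see why token efficiency matters under per-token billing, consider a rough sketch of expected spend per completed task. Only the 63.8% success rate comes from the reporting above; the token counts, the price per million tokens, and the function name `cost_per_success` are illustrative assumptions, and the model assumes failed attempts are simply retried independently.

```python
def cost_per_success(tokens_per_task: int,
                     usd_per_million_tokens: float,
                     success_rate: float) -> float:
    """Expected spend per successfully completed task under per-token billing."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    cost_per_attempt = tokens_per_task * usd_per_million_tokens / 1_000_000
    # With independent retries, 1 / success_rate attempts are needed on average.
    return cost_per_attempt / success_rate


if __name__ == "__main__":
    # 0.638 is the reported ClawEval success rate for the Pro model.
    # The token counts and the $2.00 price are hypothetical placeholders.
    lean = cost_per_success(40_000, 2.0, 0.638)
    heavy = cost_per_success(80_000, 2.0, 0.638)
    print(f"lean agent:  ${lean:.4f} per completed task")
    print(f"heavy agent: ${heavy:.4f} per completed task")
```

At an equal success rate, a model that uses half the tokens costs half as much per completed task, which is the sense in which token efficiency feeds directly into a usage-based bill.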

https://x.com/xiaomimimo/status/2048821516079661561

Enterprise Data Infrastructure Becomes AI Bottleneck

While multimodal capabilities advance rapidly, MIT Technology Review highlighted that enterprise AI adoption faces a fundamental infrastructure challenge. Bavesh Patel, senior vice president at Databricks, told the publication that “the quality of that AI and how effective that AI is, is really dependent on information in your organization.”

Most enterprise data remains fragmented across legacy systems and disconnected formats, preventing AI systems from generating trustworthy outputs. Companies must consolidate data into open formats with unified governance before multimodal AI can deliver meaningful business value. Without proper data infrastructure, organizations risk what Patel calls “terrible AI” that fails to leverage their competitive data advantages.

Medical Applications Drive Specialized Multimodal Development

Researchers demonstrated multimodal AI’s potential in healthcare with an automated system for detecting dosing errors in clinical trial narratives. According to an arXiv paper, the system combines 3,451 features spanning traditional NLP, semantic embeddings, medical patterns, and transformer scores to achieve 0.8725 ROC-AUC on the CT-DEB benchmark dataset.
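For readers unfamiliar with the metric, ROC-AUC is the probability that a randomly chosen positive case (here, a narrative containing a dosing error) receives a higher score than a randomly chosen negative one. The sketch below computes it by direct pairwise comparison on toy data; it is not the paper's evaluation code, and the function name `roc_auc` and the sample scores are assumptions for illustration.

```python
def roc_auc(labels, scores):
    """ROC-AUC as P(score of random positive > score of random negative).

    Ties between a positive and a negative count as half a win.
    Quadratic in the number of examples, so suitable only for small inputs.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


if __name__ == "__main__":
    # Toy data: 1 = dosing error present, 0 = absent.
    labels = [0, 0, 1, 1]
    scores = [0.1, 0.4, 0.35, 0.8]
    print(roc_auc(labels, scores))  # 3 of 4 positive/negative pairs are ordered correctly: 0.75
```

A score of 0.5 corresponds to random ranking and 1.0 to perfect separation, so the reported 0.8725 means the system ranks an error case above a non-error case roughly 87% of the time.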

The research processed 42,112 clinical trial narratives with a median length of 5,400 characters each, addressing severe class imbalance: only 4.9% of cases contained dosing errors. Feature efficiency analysis showed that selecting the top 500 to 1,000 features yielded the best performance, outperforming the full feature set by reducing noise.
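The scale of that imbalance, and the idea of keeping only the highest-ranked features, can be sketched in a few lines. The figures 42,112 and 4.9% come from the paper as reported above; everything else is an assumption. The "balanced" weighting shown is a common heuristic (total samples divided by the number of classes times the class count), not necessarily what the authors used, and the feature names and importance values are invented.

```python
def balanced_class_weights(n_total: int, n_positive: int) -> dict:
    """Common 'balanced' heuristic: n_total / (n_classes * class_count)."""
    n_negative = n_total - n_positive
    if n_positive <= 0 or n_negative <= 0:
        raise ValueError("both classes must be non-empty")
    return {0: n_total / (2 * n_negative), 1: n_total / (2 * n_positive)}


def top_k_features(importances: dict, k: int) -> list:
    """Return the k feature names with the highest importance scores."""
    return sorted(importances, key=importances.get, reverse=True)[:k]


if __name__ == "__main__":
    n_total = 42_112
    n_positive = round(n_total * 0.049)  # roughly 2,063 narratives with dosing errors
    weights = balanced_class_weights(n_total, n_positive)
    print(n_positive, weights)

    # Hypothetical importance scores; the paper ranks 3,451 real features.
    importances = {"tfidf_dose": 0.31, "embed_17": 0.22, "char_len": 0.02, "regex_mg": 0.27}
    print(top_k_features(importances, k=2))
```

Under this heuristic each error case weighs roughly ten times as much as a non-error case during training, which illustrates how a minority class of about 2,000 examples can be kept from being swamped by some 40,000 negatives.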

Google Reports 1,302 Production AI Use Cases

Google documented 1,302 real-world generative AI implementations across enterprises, governments, and research organizations in an updated case study collection. The Google Cloud blog noted that the majority showcase agentic AI applications built with tools like Gemini Enterprise and Security Command Center.

The expansion from an original list of 101 use cases demonstrates what Google calls “the fastest technological transformation we’ve seen.” Production AI and agentic systems are now meaningfully deployed across thousands of organizations, with multimodal capabilities enabling more sophisticated automation across text, image, and audio workflows.

What This Means

The convergence of unified multimodal models like Nemotron 3 Nano Omni with open-source alternatives from Xiaomi signals a maturation of AI agent capabilities beyond simple chatbots. These systems can now process visual interfaces, understand context across multiple data types, and execute complex tasks with minimal human oversight.

However, the enterprise adoption gap highlighted by MIT Technology Review reveals that technical capabilities alone won’t drive business value. Organizations must first solve fundamental data infrastructure challenges before multimodal AI can access the unified, governed information needed for reliable decision-making.

The medical applications research demonstrates that specialized domains require careful feature engineering and quality control, even with advanced multimodal models. As AI agents become more autonomous, ensuring accuracy and safety through proper data preparation becomes increasingly critical.

FAQ

What makes multimodal AI models more efficient than separate models?

Multimodal models like NVIDIA’s Nemotron 3 Nano Omni eliminate the latency and context loss that occur when data is passed between separate vision, audio, and language models. According to NVIDIA, this unified approach can deliver up to 9x efficiency gains while maintaining accuracy across different input types.

Why are open-source multimodal models important for enterprises?

Open-source models like Xiaomi’s MiMo series allow enterprises to modify, deploy locally, and control their AI systems without vendor lock-in. The MIT License enables commercial use while token efficiency reduces operational costs as more services move to usage-based pricing.

What infrastructure changes do companies need for multimodal AI?

Enterprises must consolidate fragmented data across legacy systems into unified, open formats with proper governance. Without this foundation, multimodal AI cannot access the quality information needed to generate trustworthy outputs that leverage competitive data advantages.