The artificial intelligence hardware landscape continues to evolve rapidly, with NVIDIA maintaining its position as the dominant force in AI accelerator technology while facing emerging challenges from shifting market dynamics and specialized applications.

Technical Architecture Leadership

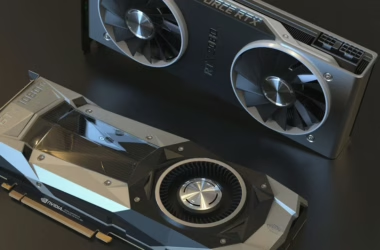

NVIDIA’s current generation of AI accelerators, the H100 and H200 built on the Hopper architecture, represents a significant leap in computational capability for large-scale machine learning workloads. The H100’s Transformer Engine is designed to accelerate transformer models such as large language models. It dynamically chooses between FP8 and 16-bit precision layer by layer. Together with faster HBM3 memory, this underpins NVIDIA’s claim of up to 9x faster training than the previous-generation A100.
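To make the FP8 idea concrete, here is a simplified Python simulation of per-tensor scaled quantization to E4M3, one of the two FP8 formats Hopper supports (3 mantissa bits, largest finite value 448). It is a sketch under stated assumptions, not Transformer Engine’s implementation or API. It uses a single scale computed from the current tensor’s absolute maximum, whereas production FP8 recipes may track scaling statistics across iterations. It also emulates E4M3 rounding with ordinary Python floats. The function names are hypothetical.

```python
import math

# E4M3 FP8 parameters: 4 exponent bits (bias 7), 3 mantissa bits, no infinities.
E4M3_MAX = 448.0          # largest finite E4M3 value: 1.75 * 2**8
E4M3_MIN_EXP = -6         # exponent of the smallest normal value (2**-6)
MANTISSA_BITS = 3


def round_to_e4m3(x: float) -> float:
    """Round a float to the nearest E4M3 value (ties to even), saturating at +/-448."""
    if x == 0.0 or math.isnan(x):
        return x
    sign = -1.0 if x < 0 else 1.0
    mag = min(abs(x), E4M3_MAX)
    # Below the smallest normal exponent the spacing stays fixed (subnormal range).
    exp = max(math.floor(math.log2(mag)), E4M3_MIN_EXP)
    quantum = 2.0 ** (exp - MANTISSA_BITS)  # gap between adjacent representable values
    # Python's round() is round-half-to-even, matching IEEE-style nearest-even rounding.
    return sign * min(round(mag / quantum) * quantum, E4M3_MAX)


def quantize_per_tensor(values: list[float]) -> tuple[list[float], float]:
    """Scale so the largest magnitude maps to E4M3_MAX, then round each value to E4M3."""
    amax = max((abs(v) for v in values), default=0.0)
    scale = E4M3_MAX / amax if amax > 0 else 1.0
    return [round_to_e4m3(v * scale) for v in values], scale


def dequantize(q: list[float], scale: float) -> list[float]:
    """Undo the scaling to recover approximate original values."""
    return [v / scale for v in q]


if __name__ == "__main__":
    activations = [0.02, -1.5, 3.0, 0.0007]
    q, scale = quantize_per_tensor(activations)
    for orig, approx in zip(activations, dequantize(q, scale)):
        print(f"{orig:>8.4f} -> {approx:.6f}")
```

The scale factor is what lets a format with only 3 mantissa bits and a narrow exponent range represent tensors whose raw values would otherwise overflow or underflow.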

The upcoming Blackwell architecture promises even greater performance improvements. Jensen Huang’s recent presentations highlighted architectural innovations, including enhanced Tensor Cores and higher memory bandwidth, that could deliver 2.5x performance gains over Hopper for inference workloads.

Specialized AI Applications Drive Market Segmentation

While NVIDIA’s GPUs excel at general-purpose AI training and inference, emerging applications are creating demand for more specialized hardware. The recent surge in AI-driven drug discovery, exemplified by companies like Chai Discovery, suggests that domain-specific AI workloads may require different computational approaches than traditional large language model training.

Chai Discovery’s rapid rise in the biotech sector illustrates the growing importance of AI in molecular simulation and protein folding prediction—workloads that benefit from NVIDIA’s CUDA ecosystem but may also drive demand for specialized accelerators optimized for scientific computing applications.

Performance Metrics and Benchmarking

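NVIDIA’s published specifications for the H100 (SXM variant) list roughly 1,000 TFLOPS (tera floating-point operations per second) of dense FP16/BF16 Tensor Core throughput, about double that at FP8. They also list 3.35 TB/s of memory bandwidth for feeding large neural network models. The chip’s 80GB of HBM3 can hold the weights of a model with tens of billions of parameters at 16-bit precision (2 bytes per parameter) without sharding the model across multiple devices. Larger models, and training runs that must also store gradients and optimizer state, still need to be split across GPUs.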
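A back-of-the-envelope calculation connects these specifications to model sizes. The sketch below, under simplifying assumptions, checks whether a model’s weights fit in 80GB. It also computes where the H100’s compute/bandwidth balance point (the roofline “ridge point”) lies. It counts weights only, with no KV cache, activations, or optimizer state. It treats 80GB as 80 × 10^9 bytes and uses the dense BF16 figure of about 989 TFLOPS for the SXM part. The function names are illustrative.

```python
# Approximate H100 SXM figures (assumptions based on the specs quoted above).
HBM_CAPACITY_BYTES = 80e9      # 80GB treated as 80 * 10**9 bytes
PEAK_BF16_FLOPS = 989e12       # dense BF16 Tensor Core throughput, roughly 1,000 TFLOPS
MEM_BANDWIDTH = 3.35e12        # HBM3 bandwidth in bytes per second

BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "fp8": 1}


def weight_bytes(num_params: float, dtype: str) -> float:
    """Memory for the weights alone (no activations, KV cache, or optimizer state)."""
    return num_params * BYTES_PER_PARAM[dtype]


def fits_on_one_gpu(num_params: float, dtype: str) -> bool:
    """True if the weights alone fit in a single GPU's HBM."""
    return weight_bytes(num_params, dtype) <= HBM_CAPACITY_BYTES


def ridge_point() -> float:
    """FLOPs per byte at which a kernel stops being bandwidth-bound (roofline model)."""
    return PEAK_BF16_FLOPS / MEM_BANDWIDTH


def attainable_flops(arithmetic_intensity: float) -> float:
    """Roofline estimate: min(peak compute, intensity * memory bandwidth)."""
    return min(PEAK_BF16_FLOPS, arithmetic_intensity * MEM_BANDWIDTH)


if __name__ == "__main__":
    for params in (7e9, 13e9, 70e9):
        print(f"{params / 1e9:.0f}B params in bf16 fits: {fits_on_one_gpu(params, 'bf16')}")
    print(f"ridge point: {ridge_point():.0f} FLOPs/byte")
    # Batch-1 decoding does ~2 FLOPs (one multiply-add) per 2-byte bf16 weight: ~1 FLOP/byte.
    print(f"batch-1 decode: {attainable_flops(1.0) / 1e12:.2f} TFLOPS attainable")
```

A ridge point of roughly 295 FLOPs per byte helps explain why small-batch inference tends to be limited by the 3.35 TB/s memory bandwidth rather than by peak compute.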

For training, the H100’s fourth-generation NVLink interconnect provides up to 900 GB/s of GPU-to-GPU bandwidth, roughly seven times that of PCIe Gen 5. This lets multiple GPUs exchange gradients and activations with far less communication overhead. That matters for training foundation models, which must be distributed across hundreds or thousands of accelerators.
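The cost of that gradient exchange can be estimated with the standard bandwidth term for a ring all-reduce. In this scheme each of N GPUs sends and receives 2(N−1)/N times the buffer size. The sketch below assumes an effective per-direction bandwidth of 450 GB/s, half of the 900 GB/s aggregate NVLink figure, since that figure counts both directions. It ignores latency, protocol overhead, and overlap with computation. The function name and the 7B-parameter example are hypothetical, and this is not how NCCL actually selects its algorithms.

```python
def ring_allreduce_seconds(buffer_bytes: float, num_gpus: int, link_bytes_per_s: float) -> float:
    """Bandwidth-only estimate of a ring all-reduce.

    Each GPU transfers 2 * (N - 1) / N * buffer_bytes: (N - 1) reduce-scatter
    steps plus (N - 1) all-gather steps, each moving 1/N of the buffer.
    """
    if num_gpus < 2:
        return 0.0  # nothing to exchange on a single GPU
    traffic = 2 * (num_gpus - 1) / num_gpus * buffer_bytes
    return traffic / link_bytes_per_s


if __name__ == "__main__":
    grad_bytes = 7e9 * 2          # hypothetical 7B-parameter model, bf16 gradients
    assumed_nvlink = 450e9        # assumed per-direction bandwidth, bytes/s
    t = ring_allreduce_seconds(grad_bytes, 8, assumed_nvlink)
    print(f"8-GPU all-reduce of {grad_bytes / 1e9:.0f} GB: ~{t * 1e3:.0f} ms")
```

Because per-GPU traffic approaches twice the buffer size regardless of N, link bandwidth rather than GPU count dominates this term. This is why interconnect speed matters so much at scale.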

Market Dynamics and Competition

Despite NVIDIA’s technical leadership, the AI hardware market is experiencing increased competition from both traditional semiconductor companies and cloud providers developing custom silicon. The growing diversity of AI workloads—from edge inference to scientific computing—is creating opportunities for specialized architectures that may challenge NVIDIA’s one-size-fits-all approach.

The company’s software ecosystem, particularly CUDA and its recently expanded suite of AI development tools, remains a significant competitive moat. However, emerging frameworks and the push for hardware-agnostic AI development could erode this advantage over time.

Future Technical Trajectories

Looking ahead, the integration of AI accelerators with emerging approaches such as compute-in-memory and neuromorphic architectures could reshape the hardware landscape. NVIDIA’s roadmap suggests a continued focus on scaling traditional GPU architectures while exploring novel computing paradigms for next-generation AI workloads.

The technical challenges of training ever-larger models while managing power consumption and heat dissipation will likely drive innovation in both chip architecture and system-level design. NVIDIA’s engineering expertise gives it significant advantages in these areas, but breakthrough innovations from competitors could still disrupt market dynamics.

Readers new to the underlying architecture can start with a primer on how large language models actually work.