Zyphra released ZAYA1-8B this week, an 8-billion-parameter reasoning model that activates only 760 million parameters per token while, according to the company, matching GPT-4-level performance on benchmarks. The open-source model was trained entirely on AMD Instinct MI300 GPUs and is available on Hugging Face under the Apache 2.0 license.

According to Zyphra’s announcement, ZAYA1-8B performs competitively against GPT-4-High and DeepSeek-V3.2 despite using a fraction of the active parameters found in the trillion-parameter models from major labs. Its mixture-of-experts (MoE) architecture lets the model maintain reasoning capabilities while requiring significantly fewer computational resources during inference.
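The idea of "active parameters" can be illustrated with a minimal top-k routing sketch in plain Python. Everything here is hypothetical: 8 toy experts, k = 2, and hand-picked gate logits. None of it reflects ZAYA1-8B's actual expert count or router design. The point is only that each token runs through k of the n experts, so most expert weights sit idle for any given token.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Expert = Callable[[Vector], Vector]

def softmax(xs: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(gate_logits: Sequence[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and renormalize their gate weights."""
    chosen = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:k]
    weights = softmax([gate_logits[i] for i in chosen])
    return list(zip(chosen, weights))

def moe_forward(x: Vector, experts: List[Expert], gate_logits: Sequence[float], k: int) -> Vector:
    """Run only the selected experts and mix their outputs; the others are never called."""
    out = [0.0] * len(x)
    for idx, w in top_k_route(gate_logits, k):
        y = experts[idx](x)
        out = [o + w * yi for o, yi in zip(out, y)]
    return out

def make_scaler(s: float) -> Expert:
    # Toy "expert" that just scales its input; real experts are feed-forward sub-networks.
    return lambda v: [s * vi for vi in v]

experts = [make_scaler(i + 1) for i in range(8)]          # 8 hypothetical experts
gate_logits = [0.1, 2.0, -1.0, 1.0, 0.0, 0.5, -0.5, 0.3]  # in practice produced by a learned router
print(top_k_route(gate_logits, k=2))                      # experts 1 and 3 are selected
print(moe_forward([1.0, 2.0], experts, gate_logits, k=2))
print(f"active fraction: {760e6 / 8e9:.1%}")              # active fraction: 9.5%
```

Using the rounded figures above, roughly 9.5% of the weights participate in each token's forward pass. That fraction is where the inference savings come from, even though all 8 billion parameters still have to be stored in memory.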

Chain-of-Thought Reasoning Shows Length-Driven Bias

New research reveals that reasoning models exhibit increased position bias as their chain-of-thought trajectories grow longer. A study published on arXiv tested thirteen reasoning configurations across models including DeepSeek-R1 and found that twelve showed a positive correlation between trajectory length and position bias scores.

The research examined multiple-choice question answering on the MMLU, ARC-Challenge, and GPQA benchmarks. Position Bias Scores (PBS) ranged from 0.11 to 0.41 across length quartiles, with longer reasoning chains consistently showing stronger bias toward specific answer positions. Even DeepSeek-R1, at 671 billion parameters, showed the effect in its longest reasoning quartile, reaching a PBS of 0.071.
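The article does not give the study's exact PBS formula, so the sketch below substitutes a simple stand-in. It uses the total variation distance between the distribution of predicted answer positions and the distribution of gold answer positions, and it buckets records into quartiles by reasoning length. Both the metric and the `(length, predicted, gold)` record format are assumptions made for illustration.

```python
from collections import Counter
from typing import List, Sequence, Tuple

Record = Tuple[int, str, str]  # (reasoning length in tokens, predicted letter, gold letter)

def position_bias(preds: Sequence[str], golds: Sequence[str], options: str = "ABCD") -> float:
    """Stand-in bias score (NOT the study's PBS): total variation distance between
    how often each position is predicted and how often it is actually correct.
    Ranges from 0 (identical distributions) to 1 (no overlap)."""
    n = len(preds)
    pred_counts, gold_counts = Counter(preds), Counter(golds)
    return 0.5 * sum(abs(pred_counts[o] - gold_counts[o]) / n for o in options)

def bias_by_length_quartile(records: Sequence[Record]) -> List[float]:
    """Sort by reasoning length, split into four buckets, and score each bucket."""
    if len(records) < 4:
        raise ValueError("need at least four records to form quartiles")
    ordered = sorted(records, key=lambda r: r[0])
    q = len(ordered) // 4
    scores = []
    for i in range(4):
        bucket = ordered[i * q:(i + 1) * q] if i < 3 else ordered[3 * q:]
        scores.append(position_bias([r[1] for r in bucket], [r[2] for r in bucket]))
    return scores

# Toy data: gold answers are evenly spread, but longer traces drift toward "A".
golds = "ABCD" * 4
preds = "ABCD" + "ABCA" + "AACA" + "AAAA"
records = list(zip(range(100, 1700, 100), preds, golds))
print(bias_by_length_quartile(records))  # [0.0, 0.25, 0.5, 0.75]
```

A rising score across quartiles is the pattern the study reports. With real data, you would then test whether length and bias are correlated rather than eyeballing four numbers.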

Truncation experiments provided causal evidence for this phenomenon. When reasoning trajectories were cut and resumed from later points, models became 16% to 32% more likely to shift toward position-preferred options. The researchers argue this challenges assumptions that reasoning-capable models are inherently order-robust.

Recursive Reasoning Systems Need Better Termination Criteria

Researchers are developing new frameworks for determining when recursive reasoning systems should stop iterating. A paper on arXiv introduces the concept of “order-gap” as a termination criterion for systems that alternate between evidence acquisition and understanding refinement.

The approach represents reasoning states as epistemic graphs encoding claims, evidence relationships, and confidence weights. The order-gap measures the distance between states reached by expand-then-consolidate versus consolidate-then-expand operations. When this gap becomes small, it suggests the two orderings agree and further iteration provides diminishing returns.

The framework applies to various AI reasoning approaches including tree-of-thought reasoning, theorem proving, and agent loops. The researchers provide necessary and sufficient conditions for when the order-gap criterion remains informative rather than becoming algebraically vacuous near convergence points.
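A toy version of the order-gap check makes the termination logic concrete. The sketch flattens the epistemic graph to a claim-to-confidence map, so evidence edges are dropped. It also invents its own `expand` (pull the next claim from a fixed hypothetical evidence pool) and `consolidate` (pull each confidence halfway toward the mean) operators and uses an L1 distance. None of these choices come from the paper. Only the criterion itself follows the description above: compare consolidate-after-expand with expand-after-consolidate, and stop when they agree.

```python
from typing import Dict, List, Sequence, Tuple

State = Dict[str, float]  # claim -> confidence (a flattened stand-in for an epistemic graph)

# Hypothetical evidence source: claims become available in this order.
EVIDENCE_POOL: Sequence[Tuple[str, float]] = [("c1", 0.9), ("c2", 0.6), ("c3", 0.3)]

def expand(state: State) -> State:
    """Evidence acquisition: add the next pool claim not yet in the state, if any."""
    new = dict(state)
    for claim, weight in EVIDENCE_POOL:
        if claim not in new:
            new[claim] = weight
            break
    return new

def consolidate(state: State) -> State:
    """Understanding refinement: pull each confidence halfway toward the state's mean."""
    mean = sum(state.values()) / len(state)
    return {c: (v + mean) / 2 for c, v in state.items()}

def distance(a: State, b: State) -> float:
    """L1 distance over the union of claims; a missing claim counts as confidence 0."""
    return sum(abs(a.get(c, 0.0) - b.get(c, 0.0)) for c in set(a) | set(b))

def order_gap(state: State) -> float:
    """Distance between expand-then-consolidate and consolidate-then-expand."""
    return distance(consolidate(expand(state)), expand(consolidate(state)))

def run(state: State, tol: float = 1e-3, max_iters: int = 20) -> Tuple[State, List[float]]:
    gaps: List[float] = []
    for _ in range(max_iters):
        gap = order_gap(state)
        gaps.append(gap)
        if gap < tol:  # the two orderings agree, so further iteration is not worth it
            break
        state = consolidate(expand(state))
    return state, gaps

final_state, gaps = run({"c0": 0.5})
print([round(g, 4) for g in gaps])  # [0.2, 0.0667, 0.275, 0.0]
```

The gap is not monotone in this toy. It jumps when an outlying claim (`c3`) arrives and drops to zero once the evidence pool is exhausted. If `consolidate` were the identity, the gap would be zero from the first step, which is a trivial case of a criterion that carries no information.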

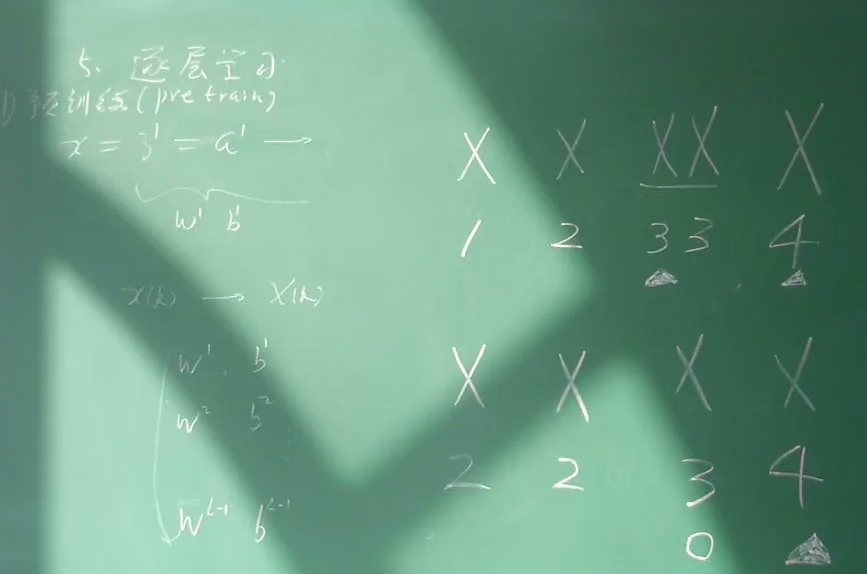

LLM Engineering Requires Understanding Multiple Components

Building production LLM systems requires mastery across tokenization, architecture design, training strategies, and evaluation methods. Towards Data Science outlines the key knowledge areas engineers need when transitioning into LLM development.

Tokenization forms the foundation, converting text into the integer token IDs that models actually process. Modern approaches use subword schemes such as Byte-Pair Encoding (BPE), often implemented with tools like SentencePiece. These schemes keep the vocabulary compact while still representing rare or unseen words as sequences of known subword pieces.
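The merge-learning loop at the heart of BPE is short enough to sketch. This follows the classic formulation: count adjacent symbol pairs, merge the most frequent pair, and repeat. The toy word counts and the `</w>` end-of-word marker are illustrative choices. Production tokenizers, SentencePiece included, add normalization, special tokens, and much faster data structures.

```python
from collections import Counter
from typing import Dict, List, Tuple

Pair = Tuple[str, str]

def merge_symbols(symbols: List[str], pair: Pair) -> List[str]:
    """Replace every adjacent occurrence of `pair` with the concatenated symbol."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return out

def learn_bpe(word_freqs: Dict[str, int], num_merges: int) -> List[Pair]:
    # Start from characters plus an end-of-word marker so merges respect word boundaries.
    vocab = [(list(w) + ["</w>"], f) for w, f in word_freqs.items()]
    merges: List[Pair] = []
    for _ in range(num_merges):
        counts: Counter = Counter()
        for symbols, freq in vocab:
            for pair in zip(symbols, symbols[1:]):
                counts[pair] += freq
        if not counts:
            break
        best = max(counts, key=counts.get)  # ties go to the pair counted first
        merges.append(best)
        vocab = [(merge_symbols(s, best), f) for s, f in vocab]
    return merges

def apply_bpe(word: str, merges: List[Pair]) -> List[str]:
    """Segment a word by replaying the learned merges in order."""
    symbols = list(word) + ["</w>"]
    for pair in merges:
        symbols = merge_symbols(symbols, pair)
    return symbols

merges = learn_bpe({"low": 5, "lower": 2, "newest": 6, "widest": 3}, num_merges=10)
print(merges[:3])                     # [('e', 's'), ('es', 't'), ('est', '</w>')]
print(apply_bpe("lowest", merges))    # ['low', 'est</w>']
```

`lowest` never appears in the toy corpus, yet it still segments into two learned pieces. This is how subword tokenizers avoid a hard out-of-vocabulary failure.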

Transformer architectures rely on attention mechanisms to process sequences in parallel rather than sequentially. Engineers must understand how attention weights are computed, how positional encodings work, and how different architectural choices affect model performance and computational requirements.
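The core computation is scaled dot-product attention, softmax(QKᵀ/√d_k)V, plus some way of injecting token order. The sketch below uses the sinusoidal encodings from the original Transformer paper; many current LLMs use alternatives such as rotary embeddings (RoPE). It also skips the learned Q/K/V projections and multiple heads, using the position-augmented embeddings directly as queries, keys, and values. Treat it as an illustration of the arithmetic, not a model layer.

```python
import math
from typing import List

Matrix = List[List[float]]

def softmax(row: List[float]) -> List[float]:
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """softmax(Q K^T / sqrt(d_k)) V, computed one query row at a time.
    Rows do not depend on each other, which is why real implementations can
    process every position at once as a single matrix multiplication."""
    d_k = len(k[0])
    out: Matrix = []
    for q_row in q:
        scores = [sum(a * b for a, b in zip(q_row, k_row)) / math.sqrt(d_k) for k_row in k]
        weights = softmax(scores)  # attention weights for this query; they sum to 1
        out.append([sum(w * v_row[c] for w, v_row in zip(weights, v)) for c in range(len(v[0]))])
    return out

def sinusoidal_positions(seq_len: int, d_model: int) -> Matrix:
    """Even dimensions use sin, odd dimensions use cos, at geometrically spaced frequencies."""
    pe: Matrix = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            angle = pos / (10000 ** ((i - i % 2) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        pe.append(row)
    return pe

x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # 3 toy token embeddings (d_model = 2)
pe = sinusoidal_positions(len(x), 2)
h = [[a + b for a, b in zip(xr, pr)] for xr, pr in zip(x, pe)]  # add position information
print(scaled_dot_product_attention(h, h, h))  # self-attention with Q = K = V = h
```

Without the positional term, permuting the rows of `x` would simply permute the output rows. Adding `pe` is what lets identical tokens at different positions attend differently.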

Training strategies include pre-training on large text corpora, fine-tuning for specific tasks, and alignment techniques like Reinforcement Learning from Human Feedback (RLHF). Each approach requires different data preparation, computational resources, and evaluation methodologies.

AMD GPUs Prove Viable for AI Model Training

ZAYA1-8B’s development on AMD Instinct MI300 GPUs suggests that credible alternatives to NVIDIA hardware for AI training are emerging. VentureBeat reports that Zyphra used a full AMD hardware stack to achieve competitive results, challenging the assumption that NVIDIA GPUs are necessary for state-of-the-art model development.

The MI300 series offers high-bandwidth memory and computational throughput suitable for large-scale model training. Zyphra’s success suggests that the AI hardware ecosystem is diversifying, potentially reducing costs and increasing competition in the GPU market.

Enterprise developers can now access ZAYA1-8B through Hugging Face or test it via Zyphra Cloud’s inference platform. The Apache 2.0 license allows commercial use and modification, making it attractive for organizations seeking reasoning capabilities without the costs associated with proprietary models.

What This Means

The convergence of efficient architectures, hardware diversification, and a better understanding of reasoning limitations marks a significant shift in AI development. ZAYA1-8B suggests that smaller, open models can achieve competitive reasoning performance while requiring fewer computational resources than massive proprietary systems.

The discovery of length-driven bias in reasoning models has immediate implications for evaluation pipelines. Organizations using chain-of-thought reasoning for critical decisions should implement bias detection and mitigation strategies, particularly for multiple-choice scenarios where position effects could skew results.
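One widely used way to detect and dampen position effects is to re-ask each question under cyclic permutations of the options and vote on option content rather than on the letter. This is a general technique, not something the study prescribes. The sketch assumes a hypothetical `ask` callable standing in for a model call. The two stub "models" exist only to show the extremes: one purely position-driven and one purely content-driven.

```python
from collections import Counter
from typing import Callable, List, Sequence, Tuple

# Hypothetical model interface: given a question and an ordered list of options,
# return the index of the option the model chose.
AskFn = Callable[[str, Sequence[str]], int]

def permutation_vote(ask: AskFn, question: str, options: Sequence[str]) -> Tuple[str, float]:
    """Ask once per cyclic rotation of the options, map each pick back to the
    option text, and return (majority answer, fraction of rotations agreeing)."""
    n = len(options)
    picks: List[str] = []
    for shift in range(n):
        rotated = list(options[shift:]) + list(options[:shift])
        picks.append(rotated[ask(question, rotated)])  # record the content, not the letter
    answer, votes = Counter(picks).most_common(1)[0]
    return answer, votes / n

# Toy stand-ins for a model.
def always_first(question: str, options: Sequence[str]) -> int:
    return 0  # pure position bias: always picks option "A"

def knows_answer(question: str, options: Sequence[str]) -> int:
    return list(options).index("4")  # pure content: finds "4" wherever it appears

opts = ["3", "4", "5", "22"]
print(permutation_vote(knows_answer, "2 + 2 = ?", opts))  # ('4', 1.0)
print(permutation_vote(always_first, "2 + 2 = ?", opts))  # ('3', 0.25): low agreement flags bias
```

The cost is one model call per option per question, which adds up quickly for long chain-of-thought models. Where to set the agreement threshold for flagging an answer is a policy choice, not a standard.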

The emergence of viable AMD GPU alternatives reduces dependency on single hardware vendors and could accelerate innovation in AI training infrastructure. As more labs demonstrate successful training on diverse hardware platforms, compute costs may decrease and availability may improve for smaller research teams and companies.

FAQ

How does ZAYA1-8B achieve competitive performance with fewer active parameters?

ZAYA1-8B uses a mixture-of-experts (MoE) architecture, which activates only 760 million of its 8 billion parameters for each token. This selective activation maintains reasoning quality while reducing computational overhead during inference.

Why do longer reasoning chains increase position bias in AI models?

Research shows that as chain-of-thought trajectories grow longer, models accumulate bias toward specific answer positions in multiple-choice questions. This suggests that extended reasoning doesn’t eliminate heuristic shortcuts but may actually amplify certain biases over time.

What makes AMD GPUs a viable alternative to NVIDIA for AI training?

AMD’s Instinct MI300 series provides sufficient memory bandwidth and computational power for large model training, as demonstrated by ZAYA1-8B’s development. This diversifies the hardware ecosystem and potentially reduces costs for AI researchers and companies.