Airbnb has joined Shopify and Google in reporting that AI now generates a large share of its code, with 60% of new code written by AI tools such as Claude Code. According to TechCrunch, Airbnb CEO Brian Chesky said that even managers now program directly with Claude Code, a notable shift in how enterprises approach software development.

The trend follows similar disclosures from Shopify, which reported 50% AI-generated code, and Google, which claimed 75% of its codebase now comes from AI assistance. These figures represent a dramatic acceleration in AI adoption across major technology companies over the past 18 months.

The Evolution from “Vibe Coding” to Agentic Engineering

The industry has moved beyond what Andrej Karpathy termed “vibe coding” in February 2025 toward more structured approaches. According to Towards Data Science, Karpathy himself acknowledged this shift just one year later, stating that “this era is ending and that we are entering the age of agentic engineering.”

The new paradigm focuses on “orchestrating agents against detailed specifications with human oversight” rather than direct code generation. Professional developers now spend most of their time managing AI agents rather than writing code directly, with the goal of “claiming the leverage from the use of agents but without any compromise on the quality of the software.”

This transition represents a fundamental change in software development workflows, where developers act as orchestrators and overseers rather than primary code authors.

Security Vulnerabilities in AI Coding Tools

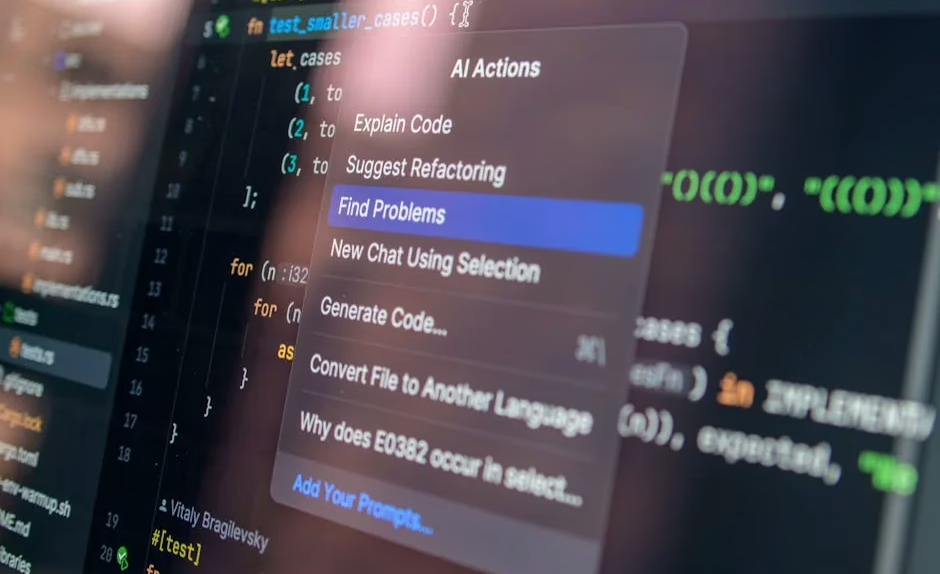

Several critical security issues have emerged in popular AI coding assistants. On May 6 and 7, 2026, security researchers published findings about vulnerabilities in Claude Code, Cursor CLI, Gemini CLI, and Copilot CLI that could allow malicious code execution with minimal user interaction.

According to Dark Reading, researchers at Adversa AI discovered that these tools inadequately warn users about malicious repositories that can “auto-approve and spawn a Model Context Protocol (MCP) server without their explicit approval or knowledge.”

The vulnerability, dubbed “TrustFall,” affects all four tools but manifests differently in each. Claude Code was identified as offering the least information in its trust dialog, while Gemini CLI provided the most detailed warnings, along with explicit choices for allowing or disallowing MCP server execution.
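One practical precaution is to inspect a freshly cloned repository for MCP server declarations before opening it in a coding tool. The sketch below is illustrative only: the file names in `CANDIDATE_CONFIGS` and the `mcpServers` key are assumptions about common conventions rather than details confirmed by the researchers, and `find_declared_mcp_servers` is a hypothetical helper name.

```python
import json
from pathlib import Path

# Assumption: places where a repository might declare MCP servers.
# Adjust this list to whatever your tools actually read.
CANDIDATE_CONFIGS = (".mcp.json", ".cursor/mcp.json", ".gemini/settings.json")


def _command_line(spec):
    """Join a server entry's command and args into one printable string."""
    args = spec.get("args")
    if not isinstance(args, list):
        args = []
    return " ".join([str(spec.get("command", ""))] + [str(a) for a in args])


def find_declared_mcp_servers(repo_root):
    """Return {config_path: {server_name: command_line}} for a checkout."""
    root = Path(repo_root)
    findings = {}
    for rel in CANDIDATE_CONFIGS:
        path = root / rel
        if not path.is_file():
            continue
        try:
            data = json.loads(path.read_text(encoding="utf-8"))
        except (OSError, ValueError):
            # Unreadable or malformed config: flag it rather than ignore it.
            findings[rel] = {"<unparseable>": ""}
            continue
        servers = data.get("mcpServers", {}) if isinstance(data, dict) else {}
        if isinstance(servers, dict) and servers:
            findings[rel] = {
                name: _command_line(spec)
                for name, spec in servers.items()
                if isinstance(spec, dict)
            }
    return findings
```

Calling `find_declared_mcp_servers("path/to/clone")` and reviewing any non-empty result by hand gives a reviewer the information a sparse trust dialog may leave out. It does not replace the tool's own approval step.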

Enterprise Authorization Challenges

The security issues stem from what experts call a “confused deputy” problem, where AI agents operate with broad permissions that don’t respect traditional user authorization boundaries. Carter Rees, VP of Artificial Intelligence at Reputation, told VentureBeat that “the flat authorization plane of an LLM fails to respect user permissions.”

Key security concerns include:

- AI agents inheriting full human permission sets without granular controls

- Automatic execution of code without proper user consent mechanisms

- Cross-platform vulnerabilities affecting Chrome extensions and OAuth tokens

- SCADA gateway targeting in industrial control systems

Kayne McGladrey, an IEEE senior member, noted that “enterprises are cloning human permission sets onto agentic systems” where “the agent does whatever it needs to do to get its job done, and sometimes that means using far more permissions than a human would.”
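The alternative to cloning a human permission set is to give the agent only the overlap between what the user holds and what the task needs. The following is a minimal sketch of that idea, not a description of any vendor's authorization model; the scope names and both function names are hypothetical.

```python
def effective_scopes(user_scopes, task_scopes):
    """Scopes an agent may use: only what the task needs AND the user holds."""
    return frozenset(user_scopes) & frozenset(task_scopes)


def authorize(action, user_scopes, task_scopes):
    """Default-deny check for a single agent action."""
    return action in effective_scopes(user_scopes, task_scopes)


# Hypothetical scope names, for illustration only.
human = {"repo:read", "repo:write", "deploy:prod", "secrets:read"}
task = {"repo:read", "repo:write", "admin:users"}  # what this one job requests

print(sorted(effective_scopes(human, task)))   # ['repo:read', 'repo:write']
print(authorize("deploy:prod", human, task))   # False: user has it, task does not
print(authorize("admin:users", human, task))   # False: task asks, user lacks it
```

Because the result is an intersection, the agent can never exceed the user's own rights, and it also loses every right the task did not ask for.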

Browser-Based Development Environments

Despite security concerns, AI-powered development continues expanding into new environments. Developers can now write, compile, and deploy WebAssembly applications entirely within web browsers using tools like GitHub Codespaces and Emscripten.

According to Towards Data Science, this approach allows developers to “grab say a piece of C code and compile it in a way that would run in the browser” without any local installation requirements.

The browser-based development workflow includes Visual Studio Code online, port forwarding capabilities, and direct integration with scientific libraries like Gemmi and FreeSASA that have been compiled to WebAssembly.

What This Means

The rapid adoption of AI coding tools brings both large productivity gains and significant security challenges for enterprise software development. While major companies report 50% to 75% AI-generated code, the vulnerabilities found in all four tools examined suggest the industry may be moving faster than its safety measures.

The shift from “vibe coding” to “agentic engineering” indicates the technology is maturing beyond simple code generation toward more sophisticated orchestration models. However, the authorization and trust boundary issues identified by security researchers highlight fundamental architectural problems that require industry-wide solutions.

Organizations adopting AI coding tools must implement granular permission controls, enhanced user consent mechanisms, and comprehensive security audits to mitigate the risks associated with automated code generation and execution.
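As one illustration of a stricter consent mechanism, the sketch below ties each approval to the exact command line, so any change to the command triggers a new prompt. `ConsentGate` and its `ask` callback are hypothetical names, and this is a simplified model under those assumptions rather than how any of the tools discussed here behave.

```python
import hashlib


class ConsentGate:
    """Remembers approvals per exact command line; any change re-prompts."""

    def __init__(self, ask):
        self._ask = ask          # callable(str) -> bool, e.g. a terminal prompt
        self._approved = set()   # fingerprints of commands already approved

    @staticmethod
    def _fingerprint(argv):
        # NUL-joined so ["a b"] and ["a", "b"] hash differently.
        joined = "\0".join(argv).encode("utf-8")
        return hashlib.sha256(joined).hexdigest()

    def allow(self, argv):
        """Return True only if this exact command was, or is now, approved."""
        key = self._fingerprint(argv)
        if key in self._approved:
            return True
        if self._ask("Run: " + " ".join(argv) + " ?"):
            self._approved.add(key)
            return True
        return False  # default deny
```

A caller would wrap every process launch in `gate.allow(argv)` and pass an `ask` function that shows the full command to the user instead of a generic trust question.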

FAQ

How much code are major tech companies generating with AI?

Airbnb reports 60% AI-generated code, Shopify reports 50%, and Google claims 75% of its codebase comes from AI assistance as of May 2026.

What is the “TrustFall” vulnerability in AI coding tools?

TrustFall is a security flaw affecting Claude Code, Cursor CLI, Gemini CLI, and Copilot CLI that lets a malicious repository spawn an MCP server, and so execute code, with minimal warning to the user and without explicit approval.

What’s the difference between “vibe coding” and “agentic engineering”?

Vibe coding involved direct AI code generation, while agentic engineering focuses on orchestrating AI agents against detailed specifications with human oversight and quality controls.

Sources

- After Shopify and Google said that 50% and 75% of their code is AI-generated, it’s now Airbnb’s turn to say that 60% of its codebase is also AI-generated. Moreover, Airbnb’s CEO says that even managers are programming with Claude Code. – Reddit Singularity

- Running Claude Code or Claude in Chrome? Here’s the audit matrix for every blind spot your security stack misses – VentureBeat

- ‘TrustFall’ Convention Exposes Claude Code Execution Risk – Dark Reading