The Duality of AI Progress: Technical Optimization Versus Public Perception Challenges

Introduction

The artificial intelligence landscape presents a striking dichotomy: researchers continue to achieve technical breakthroughs in optimization and performance, while public discourse reflects growing concern about AI’s societal impact. This analysis examines both the technical developments advancing the field and the challenges that shape public perception of AI systems.

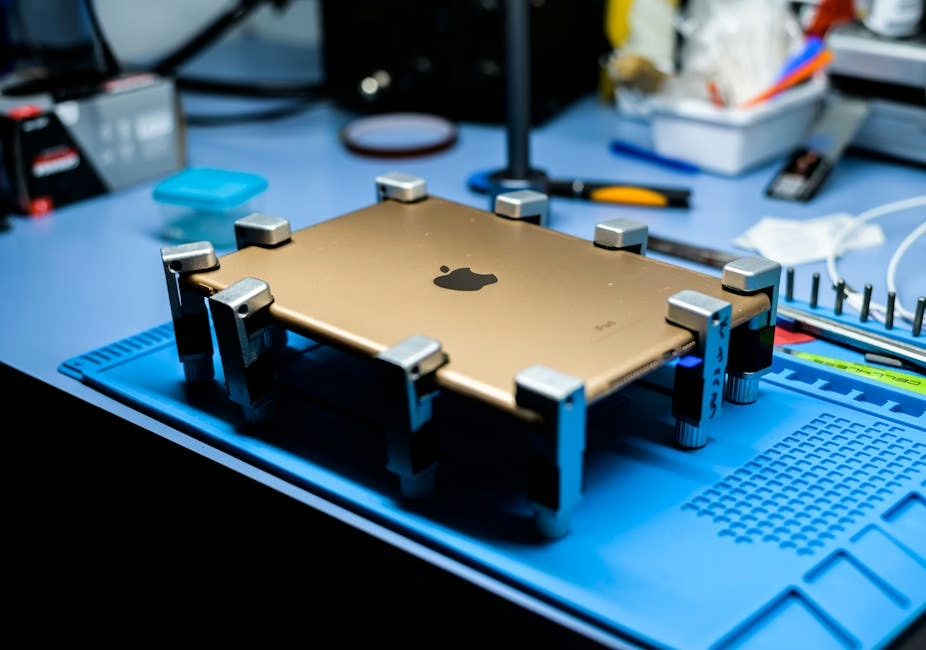

Technical Advances: Data Transfer Optimization in AI/ML Workloads

Performance Bottlenecks and Solutions

Recent research identifies data transfer bottlenecks as a critical limiting factor in AI/ML system performance. These bottlenecks occur at multiple levels of the computational hierarchy, from memory bandwidth limits within a single device to inter-node communication delays in distributed training environments.

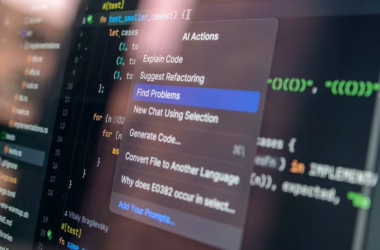

NVIDIA Nsight™ Systems, a system-wide performance profiler, makes these bottlenecks visible and gives optimization work a measured starting point. The toolkit helps researchers to:

– Identify Memory Access Patterns: Timeline-based analysis of data movement between CPU, GPU, and storage systems

– Quantify Transfer Overhead: Precise measurement of latency and throughput bottlenecks

– Optimize Pipeline Architecture: Strategic restructuring of data flows to minimize idle computational resources
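
As a rough illustration of what "quantifying transfer overhead" means, the sketch below splits the wall-clock time of a training loop into time spent waiting for the next batch and time spent in the step itself. It is a coarse host-side stand-in, not a substitute for Nsight Systems. The names `profile_steps`, `batches`, and `train_step` are hypothetical, and the timers assume the step is synchronous: work launched asynchronously on a GPU would need an explicit synchronization before the second timestamp.

```python
import time


def profile_steps(batches, train_step):
    """Split loop time into input-pipeline wait and compute (hypothetical helper)."""
    wait_s = 0.0
    compute_s = 0.0
    it = iter(batches)
    while True:
        t0 = time.perf_counter()
        try:
            batch = next(it)      # blocked here while the pipeline produces data
        except StopIteration:
            break
        t1 = time.perf_counter()
        train_step(batch)         # assumed to return only when the step is done
        t2 = time.perf_counter()
        wait_s += t1 - t0
        compute_s += t2 - t1
    total = wait_s + compute_s
    return {
        "wait_s": wait_s,
        "compute_s": compute_s,
        # Share of loop time during which compute sat idle waiting for input.
        "wait_fraction": wait_s / total if total > 0 else 0.0,
    }
```

A high `wait_fraction` suggests the loop is input-bound, which is the situation where a detailed profiler trace is most worth collecting.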

Technical Architecture Implications

Modern AI workloads require sophisticated data pipeline architectures that can handle massive datasets while maintaining training efficiency. The optimization of data transfer mechanisms directly impacts:

– Time to Convergence: Reduced I/O wait shortens each training step, so the same number of gradient updates completes in less wall-clock time

– Resource Utilization: Improved data flow keeps GPUs busy computing rather than stalled waiting for input

– Scalability Metrics: Enhanced transfer protocols enable larger distributed training configurations
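
The link between I/O wait and GPU utilization can be made concrete with a simple two-stage model. The sketch below assumes each step consists of one load phase and one compute phase, and that overlapping them hides the shorter phase entirely. Real pipelines are messier, and the function names and numbers are illustrative only.

```python
def step_time(load, compute, overlapped):
    """Per-step wall-clock time under a simplified two-stage model."""
    # Serial: load, then compute. Overlapped: the slower stage sets the pace.
    return max(load, compute) if overlapped else load + compute


def utilization(load, compute, overlapped):
    """Fraction of each step during which the accelerator is computing."""
    return compute / step_time(load, compute, overlapped)
```

With a hypothetical 30 ms load and 70 ms compute, the serial loop takes 100 ms per step at 70% utilization, while the overlapped loop takes 70 ms at full utilization. If loading instead takes 90 ms, overlap still helps, but the step becomes input-bound at 90 ms and utilization tops out near 78%.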

The Perception Challenge: AI-Generated Content Proliferation

Content Quality and Authenticity Concerns

While technical systems advance rapidly, the proliferation of AI-generated content has created new challenges for information quality and authenticity. Analysis of content distribution platforms reveals:

– Automated Content Generation: Text-to-speech synthesis combined with AI-generated video creates entirely synthetic media

– Factual Accuracy Issues: Generated content often contains technical inaccuracies or impossible scenarios

– Detection Complexity: Current AI-generated content detection systems struggle with sophisticated synthesis models

Technical Analysis of Synthetic Media

AI-generated content of this kind tends to show several technical characteristics:

– Temporal Inconsistencies: Physics violations in generated video sequences suggest limitations in the model or its training data

– Audio Synthesis Artifacts: TTS systems exhibit characteristic prosodic patterns distinguishable from human speech

– Visual Rendering Anomalies: Geometric impossibilities in generated imagery expose the limits of what the model has learned

Community Discourse Evolution

From Enthusiasm to Critical Analysis

The AI research community has evolved from primarily celebrating technical achievements to engaging in more nuanced discussions about implementation challenges and societal implications. This shift reflects:

– Maturation of the Field: Recognition that technical capability must be balanced with responsible deployment

– Real-World Performance Gaps: Acknowledgment of differences between laboratory results and production environments

– Stakeholder Impact Assessment: Increased focus on how AI systems affect various user populations

Technical Recommendations for Future Development

Optimization Strategies

1. Data Pipeline Architecture: Implement asynchronous data loading with prefetching mechanisms

2. Memory Management: Use gradient checkpointing to reduce activation memory at the cost of extra recomputation, and mixed-precision training to reduce both memory footprint and memory bandwidth demand

3. Distributed Computing: Deploy efficient all-reduce algorithms for multi-GPU training scenarios
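
The first recommendation, asynchronous loading with prefetching, can be sketched with nothing more than a background thread and a bounded queue. This is a minimal illustration under stated assumptions, not a production loader: `prefetch` and `buffer_size` are hypothetical names, and a single producer thread is assumed to be enough to keep up with the consumer.

```python
import queue
import threading

_DONE = object()  # sentinel marking the end of the stream


def prefetch(iterable, buffer_size=2):
    """Yield items from `iterable`, loading up to `buffer_size` ahead in a thread."""
    q = queue.Queue(maxsize=buffer_size)

    def producer():
        try:
            for item in iterable:
                q.put((False, item))    # blocks while the buffer is full
        except Exception as exc:
            q.put((True, exc))          # hand loader errors to the consumer
        else:
            q.put((False, _DONE))

    # Daemon thread: it will not keep the process alive if the consumer stops early.
    threading.Thread(target=producer, daemon=True).start()

    while True:
        is_error, value = q.get()
        if is_error:
            raise value
        if value is _DONE:
            return
        yield value
```

Wrapping a slow batch source as `for batch in prefetch(load_batches()):` lets the next batch load while the current step runs, where `load_batches` stands for whatever iterable produces batches. The bounded queue caps memory use, which is the trade-off behind the buffer size. In practice, frameworks provide this out of the box. For example, PyTorch's `DataLoader` exposes `num_workers` and `prefetch_factor` for the same purpose.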

Quality Assurance Frameworks

1. Content Verification Systems: Develop robust detection mechanisms for AI-generated media

2. Training Data Curation: Implement systematic approaches to dataset quality and bias assessment

3. Performance Benchmarking: Establish standardized metrics for evaluating real-world AI system performance

Conclusion

The current state of AI development exemplifies the complex relationship between technical innovation and societal integration. While breakthrough optimization techniques continue to advance system performance and efficiency, the challenge of responsible deployment requires equal attention to content quality, authenticity verification, and public trust. Future progress depends on maintaining technical excellence while addressing the legitimate concerns that arise from widespread AI adoption.

The field’s evolution toward more critical discourse represents a healthy maturation process, where technical capabilities are evaluated not only for their performance metrics but also for their broader implications on information quality and user experience.