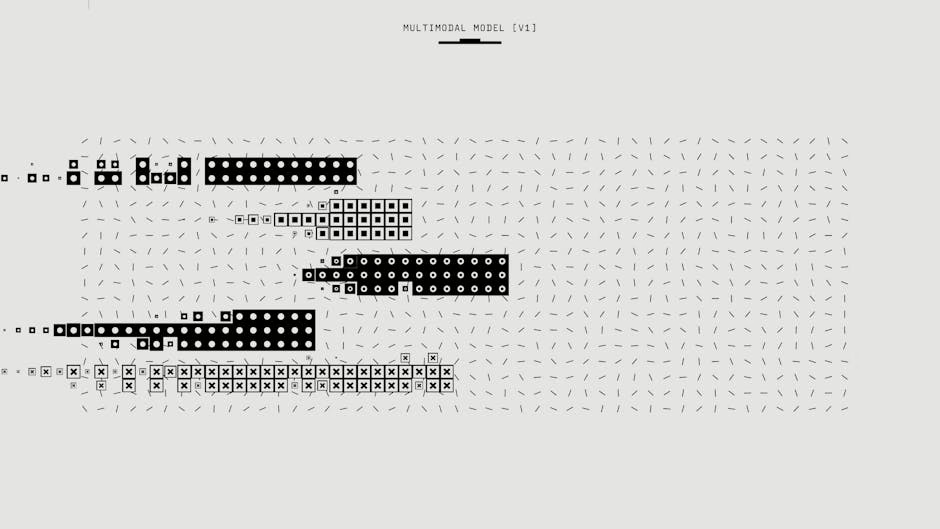

Multimodal AI systems are failing roughly one in three production attempts while expanding attack surfaces across vision, audio, and text modalities, creating unprecedented security challenges for enterprise deployments. According to Stanford HAI’s ninth annual AI Index report, this reliability gap represents the “defining operational challenge for IT leaders in 2026” as AI agents become embedded in critical enterprise workflows.

The security implications are profound. While frontier models like Anthropic’s Claude Opus 4.7 and Adobe’s new Firefly AI Assistant demonstrate impressive capabilities across multimodal tasks, their unpredictable failure patterns create exploitable vulnerabilities that threat actors are already targeting.

The Jagged Frontier Creates Exploitable Attack Vectors

Security researchers have identified what Stanford HAI calls the “jagged frontier” – the unpredictable boundary where AI excels then suddenly fails – as a critical vulnerability in multimodal systems. AI models can win gold medals at mathematical competitions but still cannot reliably tell time, creating inconsistent security postures that attackers can exploit.

This inconsistency manifests across multiple threat vectors:

- Vision-language model (VLM) spoofing: Attackers craft adversarial images that cause multimodal models to misinterpret visual content while maintaining normal text processing

- Cross-modal injection attacks: Malicious prompts embedded in image metadata or audio streams bypass text-based filtering systems

- Reliability exploitation: Threat actors target the 33% failure rate to trigger system compromises during model uncertainty

The problem is exacerbated by enterprise adoption rates reaching 88%, according to MIT Technology Review, meaning vulnerable multimodal systems are now deeply integrated into business-critical operations.

Enterprise Multimodal Deployments Expand Attack Surface

Adobe’s Firefly AI Assistant exemplifies the security challenges of enterprise multimodal deployment. The system orchestrates complex workflows across Adobe’s entire Creative Cloud suite from a single conversational interface, processing images, video, audio, and text simultaneously.

Key security concerns include:

- Privilege escalation: The assistant’s ability to execute multi-step workflows across multiple applications creates opportunities for unauthorized access escalation

- Data exfiltration: Cross-application data flows could be manipulated to extract sensitive creative assets

- Supply chain attacks: Integration with third-party AI engines like Kling 3.0 video models introduces additional attack vectors

Alexandru Costin, Vice President of AI & Innovation at Adobe, acknowledged the complexity: “We want creators to tell us the destination and let the Firefly assistant bring the tools to you.” However, this convenience comes at the cost of expanded attack surfaces that security teams struggle to monitor and protect.

Vision-Language Models Present Novel Threat Vectors

The rapid advancement in vision-language capabilities has introduced sophisticated attack methodologies that traditional security frameworks cannot address. Microsoft’s MAI-Image-2-Efficient demonstrates both the promise and peril of accessible multimodal AI.

Emerging attack patterns include:

Adversarial Visual Prompts

Attackers embed malicious instructions in images that appear benign to human reviewers but trigger unintended behaviors in VLMs. These attacks exploit the model’s attempt to interpret visual and textual information simultaneously.

Model Confusion Exploits

The 22% faster processing speed and 4x greater throughput efficiency of Microsoft’s new model, while beneficial for performance, also accelerates the potential impact of successful attacks. Rapid processing can amplify the damage from compromised inputs before security systems detect anomalies.

Cross-Modal Data Poisoning

Threat actors inject malicious training data that appears normal in individual modalities but creates vulnerabilities when processed together. This attack vector is particularly concerning given the massive datasets required for multimodal training.

Critical Infrastructure Vulnerabilities in Multimodal Systems

The infrastructure supporting multimodal AI presents additional security challenges that extend beyond individual model vulnerabilities. According to MIT Technology Review, AI data centers worldwide now consume 29.6 gigawatts of power – enough to run New York state at peak demand.

Infrastructure attack vectors include:

- Supply chain concentration: TSMC fabricates almost every leading AI chip, creating a single point of failure for global multimodal AI capabilities

- Power grid targeting: The massive energy requirements make AI data centers attractive targets for nation-state actors seeking to disrupt multimodal AI capabilities

- Water supply attacks: OpenAI’s GPT-4o alone requires water exceeding the drinking needs of 12 million people, creating environmental attack vectors

The fragility of this infrastructure means that successful attacks could cascade across multiple multimodal AI systems simultaneously, amplifying the impact of security breaches.

Advanced Persistent Threats Target Multimodal Capabilities

Anthropic’s decision to restrict its most powerful model, Mythos, to a small number of enterprise partners highlights the weaponization potential of advanced multimodal AI. The model is being used specifically for cybersecurity testing and patching vulnerabilities that it rapidly exposed in enterprise software.

This development indicates that:

- Nation-state actors are likely developing similar capabilities for offensive operations

- Advanced persistent threat (APT) groups may already be using multimodal AI to enhance social engineering and reconnaissance

- Insider threats could leverage multimodal AI to bypass traditional security controls

The competitive landscape further complicates security. With the US and China “almost neck and neck on AI model performance” according to Arena rankings, the geopolitical implications of multimodal AI security vulnerabilities extend far beyond individual organizations.

Defense Strategies for Multimodal AI Security

Security teams must adapt their strategies to address the unique challenges of multimodal AI systems. Recommended defensive measures include:

Multi-Modal Input Validation

- Implement separate validation pipelines for each input modality (vision, audio, text)

- Use ensemble detection methods that analyze cross-modal consistency

- Deploy adversarial input detection specifically trained on multimodal attack patterns

Zero-Trust Multimodal Architecture

- Segment multimodal AI systems from critical business applications

- Implement strict access controls for cross-application workflows

- Monitor and log all inter-modal data transfers

Continuous Security Assessment

- Regularly test multimodal systems against known attack vectors

- Implement red team exercises that specifically target cross-modal vulnerabilities

- Establish baseline performance metrics to detect anomalous behavior patterns

Privacy-Preserving Multimodal Processing

- Use federated learning approaches to minimize centralized data exposure

- Implement differential privacy techniques across all input modalities

- Deploy homomorphic encryption for sensitive multimodal computations

What This Means

The convergence of multimodal AI capabilities with enterprise adoption creates an unprecedented security challenge that traditional cybersecurity frameworks cannot adequately address. The 33% failure rate in production environments, combined with the “jagged frontier” of unpredictable AI behavior, provides attackers with exploitable vulnerabilities across vision, audio, and text processing systems.

Organizations deploying multimodal AI must recognize that they are not simply adding new features but fundamentally expanding their attack surface. The integration of systems like Adobe’s Firefly AI Assistant and Microsoft’s MAI-Image-2-Efficient into business-critical workflows requires a complete rethinking of security architecture and threat modeling.

The restriction of Anthropic’s Mythos model demonstrates that the most capable multimodal AI systems are already being recognized as dual-use technologies with significant security implications. As these capabilities become more accessible, the window for establishing robust defensive measures is rapidly closing.

FAQ

Q: What makes multimodal AI more vulnerable than traditional AI systems?

A: Multimodal AI processes multiple input types (vision, audio, text) simultaneously, creating cross-modal attack vectors where malicious content in one modality can exploit vulnerabilities in another. The 33% failure rate and unpredictable “jagged frontier” behavior patterns provide attackers with exploitable inconsistencies.

Q: How should organizations assess multimodal AI security risks?

A: Organizations should conduct threat modeling that accounts for cross-modal attack vectors, implement separate validation pipelines for each input modality, and establish continuous monitoring for anomalous behavior patterns. Zero-trust architecture principles should be applied specifically to multimodal AI deployments.

Q: Why are advanced multimodal AI models being restricted from public release?

A: Models like Anthropic’s Mythos have demonstrated the ability to rapidly identify and exploit vulnerabilities in enterprise software, indicating significant weaponization potential. These capabilities could be used by threat actors for automated vulnerability discovery and exploitation at scale.

Related news

- OpenAI Widens Access to Cybersecurity Model After Anthropic’s Mythos Reveal – SecurityWeek

- CIOs fret over rising security concerns amid AI adoption – Cybersecurity Dive – Google News – AI Security

- Stellantis, Microsoft unveil five-year AI and cybersecurity partnership – Yahoo Finance UK – Google News – Microsoft

For a side-by-side look at the flagship models in play, see our full 2026 AI model comparison.