New York Governor Kathy Hochul signed an executive order banning state employees from using insider information to trade on prediction markets, joining California and Illinois in a growing regulatory crackdown on government corruption in the emerging sector.

The order, signed today and viewed by WIRED, prohibits New York’s government workforce from using “any nonpublic information obtained in the course of their official duties” to participate on prediction market platforms like Kalshi and Polymarket, or to help others profit using those services.

“Getting rich by betting on inside information is corruption, plain and simple,” Hochul said in a statement. “Our actions will ensure that public servants work for the people they represent, not their own personal enrichment.”

Rapid State-Level Response

The New York order follows similar executive actions in California and Illinois, signaling coordinated state-level concern about potential corruption in prediction markets. California Governor Gavin Newsom issued a comparable executive order last month, while Illinois Governor JB Pritzker followed suit yesterday.

According to New York State Executive Chamber deputy communications director Sean Butler, the order was not triggered by specific incidents. “There are no known instances of this behavior to date,” Butler said.

The rapid succession of state orders suggests coordinated preparation for potential abuses as prediction markets gain mainstream adoption. Platforms like Polymarket saw explosive growth during the 2024 election cycle, with billions in trading volume on political outcomes.

Federal Action and Congressional Interest

Beyond state-level initiatives, members of Congress have introduced several bills targeting market manipulation and corruption in prediction markets. The legislation includes provisions barring elected officials from participating in prediction markets entirely.

CNN reported that the White House recently warned executive branch staff against trading on prediction markets. Individual politicians are also discouraging or outright barring their staff from buying event contracts on these platforms.

The federal response indicates growing recognition that prediction markets create new vectors for government corruption, particularly as these platforms expand beyond political events into economic indicators, policy outcomes, and regulatory decisions.

Surveillance and AI Security Concerns

Separately, lawmakers remain deadlocked over extending Section 702 of the Foreign Intelligence Surveillance Act (FISA), which expires April 30. The law allows U.S. intelligence agencies to collect overseas communications without individualized warrants, but also sweeps up Americans’ data.

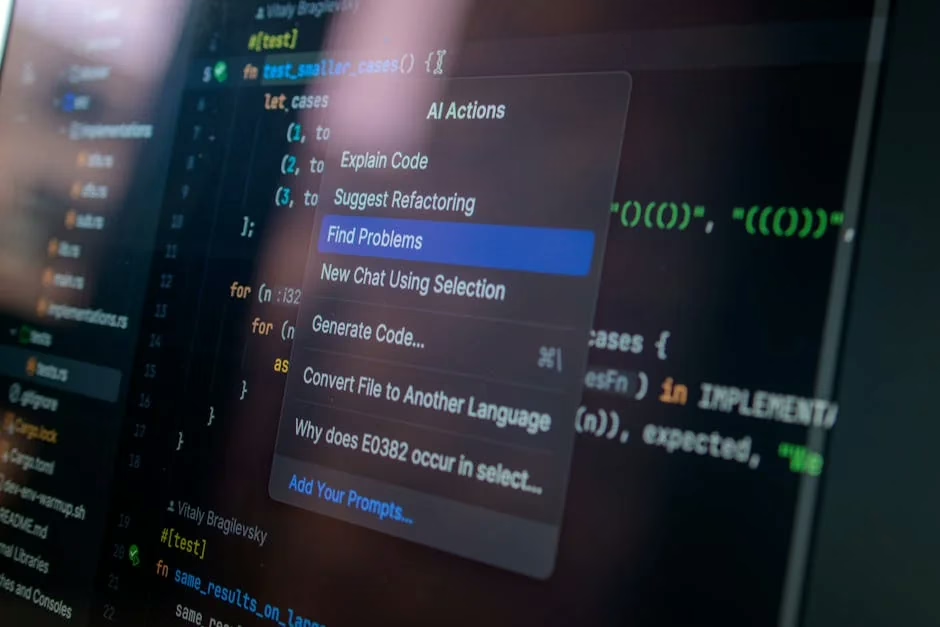

A bipartisan group introduced the Government Surveillance Reform Act in March, seeking to curtail warrantless surveillance programs. The legislation comes as AI security tools face new threats: CrowdStrike reported that adversaries compromised AI tools at more than 90 organizations in 2025.

The emergence of autonomous SOC agents with firewall access creates new attack vectors. Unlike previously compromised tools, which could only read data, these agents can rewrite infrastructure through approved API calls that appear as authorized activity.

Global Social Media Restrictions

Meanwhile, multiple countries are implementing social media restrictions for minors. Australia became the first country to ban social media for children under 16 in December 2025, blocking access to Facebook, Instagram, Snapchat, TikTok, X, and other platforms.

The Australian ban requires social media companies to implement age verification systems, with penalties of up to $49.5 million AUD ($34.4 million USD) for non-compliance. The legislation notably exempts WhatsApp and YouTube Kids, and it requires multiple verification methods beyond user-entered ages.

Other countries are preparing similar measures, citing concerns about cyberbullying, addiction, mental health impacts, and predator exposure. Critics, including Amnesty Tech, argue such bans are ineffective and ignore younger generations’ digital realities.

Enterprise AI Adoption Accelerates

As regulatory frameworks develop, enterprise AI deployment continues expanding rapidly. Google reported tracking 1,302 real-world generative AI use cases across leading organizations, up from 101 cases two years ago.

The cases predominantly feature agentic AI applications built with tools like Gemini Enterprise and Security Command Center. Google characterized this as “the fastest technological transformation we’ve seen,” with production AI and agentic systems deployed across virtually every organization attending its Next ’26 conference.

The rapid enterprise adoption creates new regulatory challenges as autonomous systems gain capabilities to modify critical infrastructure. Cisco announced AgenticOps for Security in February, featuring autonomous firewall remediation and compliance capabilities that operate without human oversight.

What This Means

The convergence of prediction market regulation, surveillance law debates, and AI security concerns reflects lawmakers struggling to keep pace with technological change. State-level bans on insider trading in prediction markets demonstrate proactive governance, but the patchwork approach may create compliance challenges for platforms operating across multiple jurisdictions.

The FISA extension deadlock highlights ongoing tension between national security needs and privacy rights, complicated by AI tools that can process surveillance data at unprecedented scale. As autonomous agents gain infrastructure access, the potential impact of both foreign surveillance and domestic corruption grows substantially.

Social media age restrictions represent another regulatory experiment, with Australia’s implementation serving as a global test case. The effectiveness of technical age verification measures will likely influence whether other countries adopt similar approaches or pursue alternative child safety strategies.

FAQ

Which states have barred government employees from insider trading on prediction markets?

New York, California, and Illinois have all issued executive orders banning state employees from using insider information to trade on prediction markets. The bans were implemented within weeks of each other, suggesting coordinated action.

What penalties do social media companies face under Australia’s youth ban?

Social media platforms that fail to prevent children under 16 from accessing their services face penalties up to $49.5 million AUD ($34.4 million USD). Companies must implement age verification systems that go beyond user-entered ages.

How do compromised AI security agents differ from previous cyber threats?

Unlike previously compromised tools that could only read data, compromised autonomous SOC agents can modify firewalls, change IAM policies, and quarantine endpoints through legitimate API calls made with their own privileged credentials. Because that activity appears authorized, it is extremely difficult to detect.