Multimodal AI Security Threats Emerge as Models Advance Rapidly

Multimodal AI systems are advancing at breakneck speed while introducing critical security vulnerabilities that organizations struggle to address. According to Stanford HAI’s 2026 AI Index report, frontier models are failing one in three production attempts despite 88% enterprise adoption rates, creating a dangerous reliability gap that threat actors can exploit.

The emergence of sophisticated vision-language models (VLMs) like Anthropic’s Claude Opus 4.7 and Adobe’s Firefly AI Assistant represents a paradigm shift in attack surface complexity. These systems process multiple data modalities simultaneously—text, images, video, and audio—creating exponentially more entry points for adversaries.

Critical Vulnerability Patterns in Multimodal Systems

The “jagged frontier” phenomenon identified by AI researcher Ethan Mollick reveals fundamental security weaknesses. Models that can solve International Mathematical Olympiad problems still fail at basic time-telling tasks, creating unpredictable failure modes that attackers can weaponize.

Key vulnerability categories include:

- Cross-modal injection attacks: Malicious prompts embedded in image metadata or audio streams

- Vision-based prompt manipulation: Adversarial images that trigger unintended model behaviors

- Multimodal data poisoning: Compromised training datasets affecting multiple input channels

- Agent workflow exploitation: Attacks targeting autonomous tool-calling capabilities

The reliability gap is particularly concerning for enterprise deployments. While leading models achieve 87% accuracy on MMLU-Pro benchmarks and 74.5% on GAIA assistant evaluations, the remaining failure rate creates consistent attack opportunities for sophisticated threat actors.

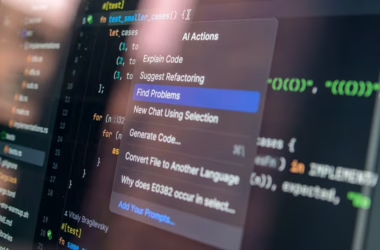

Enterprise Attack Vectors Through AI Agents

Agentic AI systems present unprecedented security challenges as they gain autonomous capabilities across enterprise workflows. Adobe’s Firefly AI Assistant exemplifies this risk—orchestrating complex multi-step operations across Creative Cloud applications from conversational interfaces.

Primary attack vectors include:

- Privilege escalation through tool access: Agents with broad system permissions become high-value targets

- Lateral movement via API chains: Compromised agents can propagate attacks across connected services

- Data exfiltration through legitimate workflows: Malicious prompts triggering authorized but unintended data access

- Supply chain vulnerabilities: Third-party AI models introducing unknown security risks

The τ-bench results showing 62.9%-70.2% success rates on real-world agent tasks indicate substantial room for exploitation. Attackers can leverage these failure modes to manipulate agent behavior in production environments.

Infrastructure Security Implications

The massive computational requirements for multimodal AI create critical infrastructure vulnerabilities. According to MIT Technology Review, AI data centers now consume 29.6 gigawatts of power—equivalent to New York state’s peak demand—while creating concerning supply chain dependencies.

Key infrastructure risks:

- Single points of failure: TSMC’s dominance in AI chip fabrication creates systemic vulnerabilities

- Resource exhaustion attacks: Computational demands enable new forms of denial-of-service

- Physical security concerns: Concentrated data center infrastructure becomes attractive targets

- Environmental monitoring gaps: Power and water usage patterns reveal operational intelligence

The fragility extends to model deployment strategies. Microsoft’s MAI-Image-2-Efficient offers 41% cost reduction and 22% speed improvements, but efficiency optimizations often sacrifice security controls for performance gains.

Geopolitical Security Considerations

The near-parity between US and Chinese AI capabilities, as measured by Arena’s ranking platform, creates complex security dynamics. Nation-state actors can leverage multimodal AI for sophisticated influence operations that traditional detection systems cannot identify.

Critical concerns include:

- Deepfake proliferation: Advanced video and image generation enabling disinformation campaigns

- Cross-border data flows: Multimodal training data creating new espionage opportunities

- Technology transfer risks: Open research enabling adversarial capability development

- Regulatory arbitrage: Different jurisdictions creating compliance and security gaps

The rapid advancement pace—with frontier models improving 30% annually on specialized benchmarks—outstrips security framework development, creating persistent vulnerability windows.

Defense Strategies and Security Controls

Organizations must implement comprehensive security frameworks addressing multimodal AI’s unique threat landscape. Traditional cybersecurity controls are insufficient for systems processing multiple data types simultaneously.

Essential defensive measures:

- Input validation across all modalities: Scanning text, images, audio, and video for malicious content

- Model behavior monitoring: Real-time analysis of output patterns to detect anomalies

- Segmented deployment architectures: Isolating AI systems from critical infrastructure

- Continuous security testing: Regular adversarial testing across all input channels

- Audit trail implementation: Comprehensive logging of multimodal interactions

The restricted release of Anthropic’s Mythos model for cybersecurity testing demonstrates the importance of controlled deployment strategies. Organizations should adopt similar phased approaches for internal multimodal AI implementations.

Privacy and Data Protection Challenges

Multimodal AI systems process vast quantities of sensitive data across multiple formats, creating complex privacy compliance requirements. Each data modality introduces distinct regulatory obligations under frameworks like GDPR, CCPA, and sector-specific regulations.

Key privacy considerations:

- Biometric data handling: Voice and image processing triggering enhanced protection requirements

- Cross-modal correlation: AI systems inferring sensitive attributes from seemingly innocuous inputs

- Data retention complexity: Different modalities requiring varying retention periods

- Consent management: Obtaining meaningful consent for multimodal processing scenarios

The global nature of AI development complicates compliance efforts, as models trained on international datasets may contain data subject to multiple jurisdictions’ privacy laws.

What This Means

Multimodal AI advancement significantly outpaces security framework development, creating a dangerous capability-protection gap. Organizations deploying these systems face unprecedented attack surfaces while lacking mature defensive tools. The combination of reliability issues, infrastructure dependencies, and geopolitical tensions demands immediate attention to security controls and risk management strategies. Success requires treating multimodal AI security as a distinct discipline requiring specialized expertise and dedicated resources.

FAQ

What are the biggest security risks with multimodal AI systems?

Cross-modal injection attacks, unpredictable failure modes, and agent workflow exploitation represent the most critical threats, as traditional security controls cannot adequately address multiple simultaneous input channels.

How can organizations protect against multimodal AI attacks?

Implement comprehensive input validation across all modalities, deploy behavior monitoring systems, use segmented architectures, conduct regular adversarial testing, and maintain detailed audit trails for all AI interactions.

Why are multimodal AI systems particularly vulnerable to nation-state actors?

These systems enable sophisticated influence operations through deepfakes and disinformation while creating new espionage opportunities through cross-border data flows and technology transfer mechanisms that traditional defenses cannot detect.

Further Reading

- In Other News: Satellite Cybersecurity Act, $90K Chrome Flaw, Teen Hacker Arrested – SecurityWeek

- Coast Guard’s New Cybersecurity Rules Offers Lessons for CISOs – Dark Reading

- OpenClaw Exposes the Real Cybersecurity Risks of Agentic AI – Infosecurity Magazine – Google News – AI Security