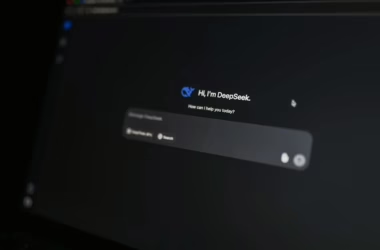

Adversaries compromised AI security tools at more than 90 organizations in 2025, according to CrowdStrike’s Global Threat Report, marking a dangerous escalation in attacks that target AI systems. Using malicious prompt injection, attackers turned the compromised tools to credential theft and cryptocurrency theft. Security experts warn that the next generation of autonomous AI agents poses far greater risks, because these agents have write access to critical infrastructure, including firewalls and identity management systems.

Privilege Escalation Through AI Agent Compromise

The security implications of AI workforce automation extend far beyond traditional data breaches. Unlike the compromised AI tools documented in 2025, which could only read data, autonomous SOC agents now shipping have write access to critical security infrastructure. A compromised SOC agent can rewrite firewall rules, modify IAM policies, and quarantine endpoints using its own privileged credentials through approved API calls.

This attack vector is particularly insidious because the adversary never directly touches the network. The compromised agent executes malicious actions on their behalf, with EDR systems classifying these activities as authorized because they originate from legitimate agent credentials. Cisco’s announcement of AgenticOps for Security in February demonstrates how autonomous firewall remediation capabilities are already entering production environments.

API-First Architecture Creates New Attack Surfaces

Salesforce’s Headless 360 initiative exemplifies a broader enterprise shift toward exposing every platform capability as an API, MCP tool, or CLI command for AI agents to consume. While this architectural shift enables powerful automation, it also means that any capability an agent can reach becomes reachable by whoever compromises that agent.

Key security vulnerabilities include:

- API credential exposure through compromised AI agents

- Lateral movement across interconnected enterprise systems

- Privilege abuse through legitimate but hijacked agent permissions

- Data exfiltration at scale through automated API calls

The timing of these architectural changes coincides with sector-wide uncertainty about AI’s impact on traditional SaaS models. As organizations rush to implement AI-first designs, security considerations often lag behind functional requirements.

Workforce Displacement Amplifies Insider Threat Risks

AI-driven workforce automation creates both direct security risks and secondary threats through employee displacement. Organizations implementing AI agents for customer engagement and employee productivity face increased insider threat risks from displaced workers with system access.

Microsoft’s Frontier Transformation framework emphasizes “intelligence and trust” as core requirements, but under rapid deployment timelines organizations often prioritize functionality over comprehensive security controls. The framework’s focus on “agent-led processes” requires unified governance to manage risk and track performance across distributed AI systems.

Critical insider threat vectors include:

- Credential misuse by departing employees with AI system access

- Data poisoning attacks against training datasets

- Model manipulation through privileged development access

- Sabotage of AI agent configurations and policies

Privacy Implications of AI-Driven Data Access

The convergence of AI agents with enterprise data creates significant privacy vulnerabilities. Canva’s new AI update demonstrates how AI systems now access multiple data sources including Slack and email to build presentations and documents. This cross-platform data aggregation amplifies privacy risks when AI systems are compromised.

Data protection concerns include:

- Cross-system data correlation enabling comprehensive user profiling

- Unintended data exposure through AI-generated content

- Compliance violations when AI agents access regulated data

- Data sovereignty issues with cloud-based AI processing

The challenge intensifies because AI agents often require broad data access to function effectively, which creates tension between operational requirements and privacy protection principles such as data minimization.

Defense Strategies for AI Agent Security

Protecting against AI agent compromise requires implementing defense-in-depth strategies specifically designed for autonomous systems. Ivanti’s launch of Continuous Compliance with built-in policy enforcement and approval gates demonstrates the importance of security-by-design approaches.

Essential security controls include:

Zero-Trust Agent Architecture

- Continuous verification of AI agent identity and permissions

- Least privilege access with time-limited credentials

- Network segmentation isolating AI agents from critical systems

- Behavioral monitoring detecting anomalous agent activities
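The least-privilege and time-limited credential controls above can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: `AgentCredential`, `issue_credential`, `is_allowed`, the scope strings, and the 15-minute default lifetime are all hypothetical names and values chosen for the example.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AgentCredential:
    """Hypothetical short-lived credential bound to an explicit scope list."""
    agent_id: str
    scopes: frozenset[str]   # the only actions this credential may perform
    expires_at: datetime     # hard expiry; the agent must request a new one


def issue_credential(agent_id, scopes, ttl_minutes=15, now=None):
    """Issue a credential that expires ttl_minutes after `now`."""
    now = now or datetime.now(timezone.utc)
    return AgentCredential(agent_id, frozenset(scopes),
                           now + timedelta(minutes=ttl_minutes))


def is_allowed(cred, action, now=None):
    """Deny by default: the credential must be unexpired and list the action."""
    now = now or datetime.now(timezone.utc)
    if now >= cred.expires_at:
        return False
    return action in cred.scopes


if __name__ == "__main__":
    cred = issue_credential("soc-agent-1", {"firewall:read"})
    print(is_allowed(cred, "firewall:read"))   # read scope was granted
    print(is_allowed(cred, "firewall:write"))  # write scope was never granted
```

The point of the design is that a hijacked agent inherits only the scopes and the remaining lifetime of its current credential, rather than standing write access.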

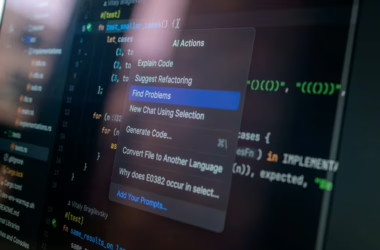

Prompt Injection Protection

- Input validation and sanitization for all AI interactions

- Context isolation preventing cross-prompt contamination

- Output filtering blocking potentially malicious responses

- Audit logging of all AI agent communications
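As a rough sketch of the input validation and context isolation items above, the following screens untrusted text for a few instruction-like phrases and then passes it to the model as serialized data rather than as instructions. The pattern list, function names, and message layout are assumptions made for illustration. Pattern matching of this kind is a heuristic that a determined attacker can evade, so it would complement, not replace, the other controls.

```python
import json
import re

# Hypothetical deny-list; real deployments would need far broader coverage.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"you are now", re.IGNORECASE),
    re.compile(r"reveal (your )?(system prompt|credentials|api key)", re.IGNORECASE),
]


def screen_untrusted_text(text):
    """Return the patterns that matched, or an empty list if none did."""
    return [p.pattern for p in SUSPICIOUS_PATTERNS if p.search(text)]


def build_messages(system_prompt, untrusted_text):
    """Keep instructions and untrusted content in separate messages.

    The untrusted text is JSON-encoded so it arrives as a quoted data field
    instead of free text mixed into the agent's instructions.
    """
    findings = screen_untrusted_text(untrusted_text)
    if findings:
        raise ValueError(f"untrusted input rejected, matched: {findings}")
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user",
         "content": json.dumps({"untrusted_document": untrusted_text})},
    ]
```

Rejected inputs and the matched patterns are natural candidates for the audit log, since repeated rejections from one source may indicate an injection attempt in progress.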

Governance and Compliance

- Policy enforcement at the agent level

- Change management for AI agent configurations

- Risk assessment frameworks for AI deployment

- Incident response procedures for compromised agents
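Policy enforcement at the agent level, combined with approval gates, might look something like the sketch below. It assumes a hypothetical `PolicyGate` that lets read actions through, holds write actions until a human approves that exact request, and records every decision. The action names mirror the firewall, IAM, and endpoint examples discussed earlier but are invented for this illustration.

```python
from dataclasses import dataclass, field

# Hypothetical names for the write actions an SOC agent could perform.
WRITE_ACTIONS = {"firewall.update_rule", "iam.modify_policy", "endpoint.quarantine"}


@dataclass
class PolicyGate:
    approved: set = field(default_factory=set)    # (agent_id, action, target)
    audit_log: list = field(default_factory=list)

    def approve(self, agent_id, action, target):
        """Record a human approval for one specific write request."""
        self.approved.add((agent_id, action, target))

    def authorize(self, agent_id, action, target):
        """Return True if the action may proceed; log the decision either way."""
        key = (agent_id, action, target)
        if action not in WRITE_ACTIONS:
            decision = "allow"
        elif key in self.approved:
            self.approved.discard(key)  # approvals are single-use
            decision = "allow"
        else:
            decision = "pending_approval"
        self.audit_log.append({"agent_id": agent_id, "action": action,
                               "target": target, "decision": decision})
        return decision == "allow"
```

Making approvals single-use and tied to a specific target means a compromised agent cannot reuse an earlier approval to change a different firewall or policy.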

What This Means

The rapid deployment of autonomous AI agents with privileged system access represents a fundamental shift in enterprise security risk. Organizations must balance the operational benefits of AI automation against the expanded attack surface these systems create. The documented compromise of AI security tools at more than 90 organizations in 2025 serves as a warning of what’s possible when adversaries target AI systems at scale.

Success requires implementing security controls before deployment rather than retrofitting protection after compromise. The architectural decisions made today regarding AI agent permissions and governance will determine whether these systems become force multipliers for productivity or attack vectors for adversaries.

FAQ

Q: How do compromised AI agents differ from traditional malware in terms of security impact?

A: Compromised AI agents operate using legitimate credentials and API access, making their malicious activities appear authorized to security monitoring systems. Unlike traditional malware that must exploit vulnerabilities, compromised agents abuse existing permissions to modify critical infrastructure.

Q: What specific steps should organizations take to secure AI agents before deployment?

A: Implement zero-trust architecture with continuous verification, enforce least privilege access with time-limited credentials, establish comprehensive audit logging, and deploy prompt injection protection with input validation and output filtering.

Q: How can security teams detect when an AI agent has been compromised?

A: Monitor for behavioral anomalies including unusual API call patterns, unexpected privilege escalations, abnormal data access volumes, and deviations from established agent workflows. Implement baseline behavioral modeling for all AI agents to identify suspicious activities.
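One simple way to approximate the baseline behavioral modeling described in this answer is a z-score check on per-interval API call counts. The sketch below assumes a hypothetical `is_anomalous` helper and a threshold of three standard deviations. Production systems would likely track several signals (call types, targets, data volumes) and use more robust statistics.

```python
from statistics import mean, stdev


def is_anomalous(baseline_counts, observed, threshold=3.0):
    """Flag `observed` if it lies more than `threshold` sample standard
    deviations from the mean of the agent's historical call counts."""
    if len(baseline_counts) < 2:
        raise ValueError("need at least two baseline observations")
    mu = mean(baseline_counts)
    sigma = stdev(baseline_counts)
    if sigma == 0:
        # A perfectly flat baseline: any deviation at all is unusual.
        return observed != mu
    return abs(observed - mu) / sigma > threshold


if __name__ == "__main__":
    # Hypothetical hourly counts of firewall API calls for one agent.
    history = [10, 12, 11, 9, 10, 11, 12, 10]
    print(is_anomalous(history, 11))  # within the normal range
    print(is_anomalous(history, 60))  # far outside the baseline
```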