Major tech companies now report generating 50-75% of their code with AI assistants: Shopify cites 50%, Airbnb 60%, and Google 75%, according to recent company disclosures. However, security researchers have identified critical vulnerabilities in popular AI coding tools, including Claude Code, Cursor CLI, Gemini CLI, and GitHub Copilot CLI, that could allow malicious code execution.

The rapid adoption marks a shift from experimental “vibe coding” to what industry leaders call “agentic engineering” — where developers orchestrate AI agents against detailed specifications rather than writing code directly.

Enterprise AI Code Generation Reaches Majority Share

Airbnb CEO Brian Chesky revealed in May 2026 that AI now writes 60% of the company’s new code, following reported figures of 75% at Google and 50% at Shopify. The trend extends beyond engineering teams, with Chesky noting that “even managers are programming with Claude Code.”

According to Towards Data Science, this represents a fundamental workflow shift. Andrej Karpathy, who coined the term “vibe coding” in February 2025, acknowledged that the vibe-coding era is ending as professionals move toward “agentic engineering”: orchestrating AI agents under human oversight rather than writing code by hand.

The adoption rate reflects AI coding tools’ integration into daily development workflows. Web applications and analytical tools have become primary use cases, with developers leveraging AI for everything from data visualization to simulation engines.

Security Vulnerabilities Expose Trust Boundary Failures

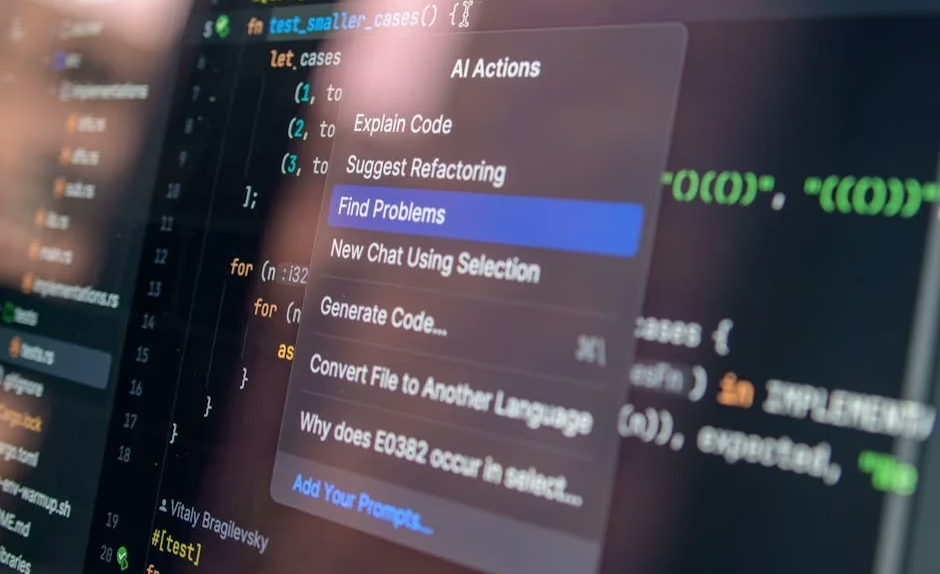

Security researchers at Adversa AI discovered what they term “TrustFall” vulnerabilities affecting Claude Code, Cursor CLI, Gemini CLI, and GitHub Copilot CLI. According to Dark Reading, malicious repositories can trigger code execution with minimal or no user interaction due to inadequate warning dialogs.

The vulnerabilities stem from a “confused deputy” problem — a trust-boundary failure where AI tools execute actions on behalf of the wrong principal. Carter Rees, VP of AI at Reputation, told VentureBeat that “the flat authorization plane of an LLM fails to respect user permissions.”
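The confused deputy pattern can be illustrated with a minimal sketch. The `Origin` labels, tool names, and `ALLOWED_TOOLS` policy below are hypothetical and do not reflect how any of the named tools work internally; the point is only that authorization is checked against the principal that actually issued the request, not against what the agent itself is able to do.

```python
from dataclasses import dataclass
from enum import Enum


class Origin(Enum):
    """Who actually asked for an action (hypothetical labels)."""
    USER = "user"              # typed by the developer
    REPOSITORY = "repository"  # read from files in a cloned repo


@dataclass(frozen=True)
class ActionRequest:
    tool: str       # illustrative tool name, e.g. "run_shell"
    origin: Origin  # principal the request is attributed to


# Illustrative policy: which tools each principal may trigger.
ALLOWED_TOOLS = {
    Origin.USER: {"read_file", "run_shell"},
    Origin.REPOSITORY: {"read_file"},
}


def is_authorized(request: ActionRequest) -> bool:
    """Authorize against the requesting principal, not the agent's own power."""
    return request.tool in ALLOWED_TOOLS[request.origin]


print(is_authorized(ActionRequest("run_shell", Origin.USER)))        # True
print(is_authorized(ActionRequest("run_shell", Origin.REPOSITORY)))  # False
```

A “flat authorization plane” corresponds to dropping the `origin` field: every request would then be evaluated with the user’s full authority, regardless of where the instruction came from.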

Key vulnerability details:

- Malicious repos can auto-approve Model Context Protocol (MCP) servers

- Trust dialogs provide insufficient detail about consent implications

- Claude Code offers the least information in trust prompts

- Gemini CLI provides the most detailed warnings but remains vulnerable
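One defensive habit these findings suggest is inspecting a cloned repository for configuration files before opening it in an AI coding tool. The sketch below is an assumption-laden illustration: the function name is invented, and the config file names and setting keys are supplied by the caller because the exact names differ per tool and are not given in the reporting.

```python
import json
import os


def find_auto_approvals(repo_root, config_names, risky_keys):
    """List (relative_path, key) pairs where a risky setting is switched on.

    config_names and risky_keys are caller-supplied placeholders; consult
    each tool's documentation for the real file and setting names.
    """
    findings = []
    for dirpath, _dirnames, filenames in os.walk(repo_root):
        for name in filenames:
            if name not in config_names:
                continue
            path = os.path.join(dirpath, name)
            try:
                with open(path, encoding="utf-8") as handle:
                    data = json.load(handle)
            except (OSError, ValueError):
                continue  # unreadable file or invalid JSON: skip it
            if not isinstance(data, dict):
                continue
            for key in sorted(risky_keys):
                if data.get(key) is True:
                    findings.append((os.path.relpath(path, repo_root), key))
    return sorted(findings)
```

A call such as `find_auto_approvals("path/to/clone", {"settings.json"}, {"autoApproveServers"})` (both names hypothetical) returns the files worth reading by hand before granting trust. It only checks top-level keys, so nested settings would need a deeper traversal.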

On May 6 and 7, 2026, four security teams published findings that showed Claude identifying a water utility’s SCADA gateway without being instructed to look for one, demonstrating the scope of unintended capability access.

Developer Workflow Evolution: From Vibe to Spec-Driven

The industry transition represents more than tool adoption — it reflects a fundamental change in software development methodology. Professional engineers are moving beyond “vibe coding” toward specification-driven development with AI agent orchestration.

Agentic engineering characteristics:

- Developers orchestrate agents instead of writing code directly, reportedly 99% of the time

- Detailed specifications guide AI agent behavior

- Human oversight maintains software quality standards

- Engineering expertise focuses on agent management rather than syntax
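The characteristics above can be sketched as a small control loop. This is a minimal illustration, not a description of any particular product: `Spec`, `run_with_oversight`, and the stub agent are hypothetical names, and a real agent call would replace the stub.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Spec:
    """A task description plus machine-checkable acceptance checks."""
    description: str
    checks: List[Callable[[str], bool]]


def run_with_oversight(
    spec: Spec,
    agent: Callable[[str], str],
    approve: Callable[[str], bool],
    max_attempts: int = 3,
) -> Optional[str]:
    """Return the first candidate that passes every check and human approval."""
    for _ in range(max_attempts):
        candidate = agent(spec.description)
        if all(check(candidate) for check in spec.checks) and approve(candidate):
            return candidate
    return None  # nothing acceptable within the attempt budget


def stub_agent(description: str) -> str:
    """Stand-in for a real agent call (hypothetical)."""
    return "def add(a, b):\n    return a + b\n"


spec = Spec("Write add(a, b) returning the sum", [lambda code: "def add" in code])
result = run_with_oversight(spec, stub_agent, approve=lambda code: True)
```

The `approve` callback is where human review plugs in: the specification filters out candidates automatically, and a person still has the final say before anything is accepted.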

Mariya Mansurova documented a 4.5-hour journey from idea to working fitness app using LLM agents, illustrating the speed advantages of agentic development. The approach maintains professional engineering standards while leveraging AI automation for routine coding tasks.

Web-based development environments are enabling this transition. Developers can now compile C code to WebAssembly entirely in browsers using tools like Emscripten and GitHub Codespaces, eliminating local installation requirements.

Enterprise Permission Models Create New Attack Vectors

Security expert Kayne McGladrey, an IEEE senior member, identified a structural problem in enterprise AI tool deployment. Companies are “cloning human permission sets onto agentic systems,” he told VentureBeat, creating scenarios where AI agents operate with excessive privileges.

The permission model mismatch creates attack opportunities:

- AI agents inherit full human permission sets

- Agents execute whatever actions are needed to complete tasks

- No privilege escalation required — permissions already granted

- Malicious actors can exploit over-privileged AI systems
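One way to picture the alternative to cloned permission sets is to grant an agent only the intersection of what the human holds and what the task requires. The permission strings and the `scope_agent_permissions` helper below are hypothetical, chosen only to illustrate the idea.

```python
def scope_agent_permissions(human_permissions, task_permissions):
    """Grant only permissions the task needs AND the human already holds."""
    return frozenset(human_permissions) & frozenset(task_permissions)


# Hypothetical permission names for illustration.
human = {"repo:read", "repo:write", "deploy:prod", "secrets:read"}
task = {"repo:read", "repo:write"}

cloned = frozenset(human)                      # the "clone the human" model
scoped = scope_agent_permissions(human, task)  # least-privilege alternative

# Permissions the agent no longer inherits under the scoped model.
print(sorted(cloned - scoped))  # ['deploy:prod', 'secrets:read']
```

Under the cloned model a compromised agent can already reach production deploys and secrets; under the scoped model an attacker would first have to obtain those permissions separately.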

Anthropic has stated that the identified vulnerabilities exist “outside its threat model” and believes current trust dialogs provide sufficient warnings. However, security researchers argue that the fundamental architecture requires redesign to address confused deputy scenarios.

Browser-Based Development Environments Gain Traction

The shift toward AI-assisted development coincides with browser-based development environment adoption. Developers can now build complete applications without local installations, using cloud-based IDEs and compilation tools.

Browser development capabilities:

- GitHub Codespaces for cloud development environments

- WebAssembly compilation from C code using Emscripten

- Real-time collaboration and deployment

- Integration with AI coding assistants

Luciano Abriata demonstrated compiling and running C code entirely in browsers, showcasing the viability of web-based development workflows. The approach supports scientific computing libraries like Gemmi and FreeSASA through WebAssembly ports.

What This Means

The convergence of high AI code generation rates and security vulnerabilities signals a critical inflection point for software development. While major companies achieve significant productivity gains through AI coding tools, the security implications require immediate attention.

The “confused deputy” vulnerabilities affect the most popular AI coding tools, suggesting systemic rather than isolated problems. Enterprise adoption of AI coding tools may need to pause until vendors address fundamental trust boundary issues.

The evolution from “vibe coding” to “agentic engineering” represents a permanent shift in developer workflows. Organizations must balance productivity gains against security risks while developing new permission models for AI-assisted development.

FAQ

How much code are major companies generating with AI?

Google reports that AI generates 75% of its code, Airbnb 60%, and Shopify 50%. By these figures, AI produces at least half of new code at each of the three companies.

What are the main security risks with AI coding tools?

The primary risk is “confused deputy” vulnerabilities where AI tools execute malicious code with minimal user consent. Malicious repositories can auto-approve dangerous operations through inadequate trust dialogs.

What is “agentic engineering” and how does it differ from traditional coding?

Agentic engineering involves orchestrating AI agents against detailed specifications rather than writing code directly. Developers act as overseers managing AI agents that handle routine coding tasks, representing a fundamental shift from hands-on programming.

Sources

- After Shopify and Google said that 50% and 75% of their code is AI-generated, it’s now Airbnb’s turn to say that 60% of its codebase is also AI-generated. Moreover, Airbnb’s CEO says that even managers are programming with Claude Code. – Reddit Singularity

- Running Claude Code or Claude in Chrome? Here’s the audit matrix for every blind spot your security stack misses – VentureBeat

- ‘TrustFall’ Convention Exposes Claude Code Execution Risk – Dark Reading