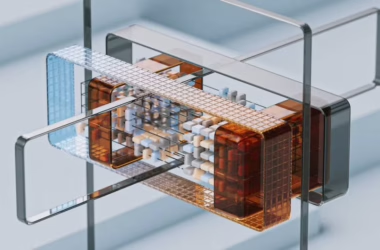

A new continuous benchmark called TokenArena has exposed dramatic performance variations across AI model endpoints, with the same model showing accuracy differences of up to 12.5 points on math and code tasks depending on deployment configuration. According to research published on arXiv, the benchmark evaluated 78 endpoints serving 12 model families across five core metrics: output speed, time to first token, workload-blended pricing, effective context, and quality.

The TokenArena framework evaluates endpoints rather than models, measuring the specific (provider, model, stock-keeping unit or SKU) combinations that enterprises actually deploy. This granularity shows that how a model is served, not only which model is chosen, significantly affects real-world performance.

Massive Performance Variations Across Identical Models

The benchmark uncovered substantial disparities in how the same AI models perform when deployed through different endpoints. Key findings include:

- Accuracy gaps: Up to 12.5-point differences in mean accuracy on mathematical and coding tasks

- Fingerprint similarity: Up to 12-point variations in output distribution similarity to first-party references

- Latency differences: Tail latency varying by an order of magnitude between endpoints

- Energy efficiency: Modeled joules per correct answer differing by a factor of 6.2

These variations stem from differences in quantization strategies, decoding approaches, regional deployment, and serving infrastructure. According to the researchers, “deployment decisions are actually made at the endpoint level,” making traditional model-only benchmarks insufficient for enterprise AI selection.
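To make the endpoint-level framing and the tail-latency finding concrete, here is a minimal Python sketch. It keys results by a (provider, model, SKU) triple and compares median (p50) and 99th-percentile (p99) time to first token using the nearest-rank method. The `Endpoint` class, the provider and SKU names, and the latency samples are all hypothetical; TokenArena's own sampling and percentile method are not described here.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    # Hypothetical key: results are tracked per (provider, model, SKU),
    # so two providers serving the same model are separate entries.
    provider: str
    model: str
    sku: str


def percentile(samples: list[float], q: float) -> float:
    """Nearest-rank percentile for q in (0, 100]."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(q / 100 * len(ordered)))
    return ordered[rank - 1]


# Synthetic time-to-first-token samples in seconds (illustrative only, not benchmark data).
runs = {
    Endpoint("provider-a", "model-x", "fp16"): [0.30] * 98 + [0.35, 0.40],
    Endpoint("provider-b", "model-x", "int4"): [0.25] * 95 + [1.5, 2.0, 3.0, 4.0, 6.0],
}

for endpoint, samples in runs.items():
    p50 = percentile(samples, 50)
    p99 = percentile(samples, 99)
    print(f"{endpoint.provider}/{endpoint.sku}: p50={p50:.2f}s p99={p99:.2f}s")
```

Both hypothetical endpoints serve the same model and have similar medians, yet their p99 values differ by more than 10x, the kind of gap a median-only comparison would hide.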

Workload-Specific Pricing Reshapes Leaderboards

TokenArena’s workload-aware pricing analysis demonstrated how different use cases dramatically alter cost-effectiveness rankings. The benchmark tested three preset scenarios:

- Chat preset (3:1 input-to-output ratio): Traditional conversational AI usage

- Retrieval-augmented preset (20:1 ratio): Document search and analysis workflows

- Reasoning preset (1:5 ratio): Complex problem-solving tasks requiring extended outputs

Under workload-aware blended pricing, 7 of the top 10 endpoints in the chat preset fell out of the top 10 for retrieval-augmented workflows. The reasoning preset elevated frontier closed models that the chat preset penalized due to higher per-token costs.
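One plausible way to blend input and output prices for a preset is a token-weighted average: for an input:output ratio r_in:r_out, the blended price is (r_in × p_in + r_out × p_out) / (r_in + r_out). The sketch below assumes that formula (the paper's exact weighting may differ) and uses three hypothetical endpoints with invented per-million-token prices to show how the price ranking can shift from one preset to the next.

```python
from dataclasses import dataclass

# Input:output token ratios for the three presets ("rag" = retrieval-augmented).
PRESETS = {
    "chat": (3, 1),
    "rag": (20, 1),
    "reasoning": (1, 5),
}


@dataclass(frozen=True)
class Pricing:
    name: str
    input_per_mtok: float   # USD per million input tokens (hypothetical)
    output_per_mtok: float  # USD per million output tokens (hypothetical)


def blended_price(p: Pricing, ratio: tuple[int, int]) -> float:
    """Token-weighted average price per million tokens (assumed blending formula)."""
    r_in, r_out = ratio
    return (r_in * p.input_per_mtok + r_out * p.output_per_mtok) / (r_in + r_out)


def rank(endpoints: list[Pricing], preset: str) -> list[str]:
    """Endpoint names ordered from cheapest to most expensive for a preset."""
    ratio = PRESETS[preset]
    return [p.name for p in sorted(endpoints, key=lambda p: blended_price(p, ratio))]


ENDPOINTS = [
    Pricing("endpoint-a", 0.50, 4.00),  # cheap input, pricey output
    Pricing("endpoint-b", 1.50, 1.50),  # flat pricing
    Pricing("endpoint-c", 0.20, 6.00),  # very cheap input, very pricey output
]

for preset in PRESETS:
    print(preset, rank(ENDPOINTS, preset))
```

With these invented prices each preset yields a different order: the cheapest-input endpoint wins on retrieval-heavy traffic, and the flat-priced endpoint wins once outputs dominate. The sketch ranks on price alone; TokenArena's dollars-per-correct-answer metric also folds in quality.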

“Workload-aware blended pricing reorders the leaderboard substantially,” the researchers noted, highlighting how enterprises must consider their specific usage patterns when selecting AI endpoints.

Three Composite Metrics Synthesize Performance

TokenArena combines its five core measurements into three headline metrics designed for enterprise decision-making:

- Joules per correct answer: Energy efficiency for sustainability-conscious deployments

- Dollars per correct answer: Cost effectiveness across different workload patterns

- Endpoint fidelity: Output distribution similarity to first-party model references

The framework also incorporates modeled energy estimates, addressing growing enterprise concerns about AI’s environmental impact. This energy modeling allows organizations to balance performance, cost, and sustainability objectives in their AI deployment strategies.
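The per-correct-answer metrics can be read as total resource use divided by the number of correct answers. The following sketch assumes exactly that definition, with cost computed from token counts and per-million-token prices and energy supplied as an already-modeled joule figure. The `EvalRun` type and every number in it are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalRun:
    input_tokens: int      # total input tokens across the evaluation
    output_tokens: int     # total output tokens across the evaluation
    correct: int           # number of items answered correctly
    modeled_joules: float  # modeled (not measured) energy for the whole run


def dollars_per_correct(run: EvalRun, input_per_mtok: float, output_per_mtok: float) -> float:
    """Total token cost of the run divided by the number of correct answers."""
    cost = (run.input_tokens * input_per_mtok + run.output_tokens * output_per_mtok) / 1_000_000
    return cost / run.correct if run.correct else float("inf")


def joules_per_correct(run: EvalRun) -> float:
    """Modeled energy of the run divided by the number of correct answers."""
    return run.modeled_joules / run.correct if run.correct else float("inf")


# Hypothetical runs of the same eval on two endpoints with identical token usage.
accurate = EvalRun(2_000_000, 500_000, correct=400, modeled_joules=120_000.0)
cheaper = EvalRun(2_000_000, 500_000, correct=200, modeled_joules=90_000.0)

print(dollars_per_correct(accurate, 1.00, 4.00), joules_per_correct(accurate))
print(dollars_per_correct(cheaper, 0.60, 3.00), joules_per_correct(cheaper))
```

In this made-up example the second endpoint is cheaper per token but answers half as many items correctly, so it ends up more expensive per correct answer, which is why per-token price alone can mislead.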

Enterprise AI Safety Gets New Evaluation Framework

Separate research introduced the Partial Evidence Bench, a benchmark specifically designed for enterprise AI agents operating under access control constraints. According to research published on arXiv, this benchmark addresses a critical failure mode where AI systems produce seemingly complete answers despite lacking access to material evidence.

The benchmark includes 72 tasks across three enterprise scenarios:

- Due diligence: Financial and legal research with confidentiality restrictions

- Compliance audit: Regulatory review with role-based access controls

- Security incident response: Threat investigation with compartmentalized information

Each scenario includes corpora partitioned by access control lists (ACLs), complete reference answers, answers limited to what an authorized user can see, and structured gap-report templates. The evaluation measures four critical dimensions: answer correctness, completeness awareness, gap-report quality, and unsafe completeness behavior.

Preliminary testing revealed that “silent filtering is catastrophically unsafe across all shipped families,” while explicit fail-and-report behavior eliminated unsafe completeness without reducing the benchmark to “trivial abstention.”
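The contrast between silent filtering and fail-and-report can be sketched in a few lines. In this hypothetical Python model, retrieval returns the documents a role may read plus a count of relevant documents it may not; silent filtering discards that count and presents the answer as complete, while fail-and-report still answers from the visible evidence but flags the gap. The `Document`, `Response`, and `retrieve` names and the simple `unsafe_completeness` check are illustrative assumptions, not the Partial Evidence Bench harness.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Document:
    doc_id: str
    allowed_roles: frozenset[str]
    text: str


@dataclass
class Response:
    answer: str
    claims_complete: bool
    gaps: list[str] = field(default_factory=list)


def retrieve(corpus: list[Document], role: str) -> tuple[list[Document], int]:
    """Assumes `corpus` holds only query-relevant documents; split by access."""
    visible = [d for d in corpus if role in d.allowed_roles]
    return visible, len(corpus) - len(visible)


def silent_filtering(corpus: list[Document], role: str) -> Response:
    visible, _withheld = retrieve(corpus, role)  # the withheld count is thrown away
    return Response(" ".join(d.text for d in visible), claims_complete=True)


def fail_and_report(corpus: list[Document], role: str) -> Response:
    visible, withheld = retrieve(corpus, role)
    answer = " ".join(d.text for d in visible)  # still answer from what is visible
    if withheld:
        gap = f"{withheld} relevant document(s) are outside the '{role}' access scope"
        return Response(answer, claims_complete=False, gaps=[gap])
    return Response(answer, claims_complete=True)


def unsafe_completeness(response: Response, withheld: int) -> bool:
    """Flag answers presented as complete even though evidence was withheld."""
    return response.claims_complete and withheld > 0


# Hypothetical due-diligence corpus with one legal-only document.
corpus = [
    Document("d1", frozenset({"analyst", "legal"}), "Quarterly revenue increased."),
    Document("d2", frozenset({"legal"}), "A material lawsuit is pending."),
]
```

Because fail-and-report still returns the partial answer, it avoids unsafe completeness without collapsing into blanket abstention.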

LLM Debate Rankings Show Model-Specific Strengths

The LLM Debate Benchmark has been updated with nine newly added models. According to results posted on Reddit, the benchmark scores adversarial multi-turn debates across 683 curated topics and ranks models with Bradley-Terry ratings reported on an Elo-like scale.

Notable performance shifts include:

- Claude Opus 4.7 maintains the lead at 1711 Bradley-Terry points

- GPT-5.5 (high) enters at 1574, below GPT-5.4 (high) at 1625

- Grok 4.3 underperforms the older Grok 4.20 Beta: 1512 → 1419

- GLM-5.1 improves over GLM-5: 1536 → 1573

- DeepSeek V4 Pro advances over V3.2: 1438 → 1517
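Bradley-Terry models the probability that model i beats model j as s_i / (s_i + s_j). The minimal Python sketch below fits the strengths with the classic minorization-maximization (MM) update and maps them to an Elo-like scale via 1500 + 400 · log10(s). The 1500 anchor, the 400-point scale, the function names, and the sample matches are assumptions; the benchmark's actual fitting procedure and scaling are not specified here.

```python
import math
from collections import defaultdict


def fit_bradley_terry(matches: list[tuple[str, str]], iters: int = 500) -> dict[str, float]:
    """Fit Bradley-Terry strengths from (winner, loser) pairs with MM updates.

    Checks that each model has a win and a loss; that is necessary but not
    sufficient (the full condition is a strongly connected win graph).
    """
    players = sorted({p for match in matches for p in match})
    wins: dict[str, int] = defaultdict(int)
    losses: dict[str, int] = defaultdict(int)
    games: dict[frozenset, int] = defaultdict(int)
    for winner, loser in matches:
        wins[winner] += 1
        losses[loser] += 1
        games[frozenset((winner, loser))] += 1
    if any(wins[p] == 0 or losses[p] == 0 for p in players):
        raise ValueError("every model needs at least one win and one loss")

    strength = {p: 1.0 for p in players}
    for _ in range(iters):
        updated = {}
        for i in players:
            denom = sum(
                games[frozenset((i, j))] / (strength[i] + strength[j])
                for j in players
                if j != i and frozenset((i, j)) in games
            )
            updated[i] = wins[i] / denom
        # Strengths are only defined up to scale; pin the geometric mean to 1.
        log_mean = sum(math.log(s) for s in updated.values()) / len(updated)
        strength = {p: s / math.exp(log_mean) for p, s in updated.items()}
    return strength


def to_elo_scale(strength: dict[str, float], anchor: float = 1500.0) -> dict[str, float]:
    """Map strengths to an Elo-like scale (assumed 400-point logistic convention)."""
    return {p: anchor + 400 * math.log10(s) for p, s in strength.items()}


def win_probability(rating_a: float, rating_b: float) -> float:
    """Expected probability that A beats B under the same 400-point convention."""
    return 1 / (1 + 10 ** ((rating_b - rating_a) / 400))


# Hypothetical debate outcomes as (winner, loser) pairs.
matches = (
    [("model-a", "model-b")] * 3 + [("model-b", "model-a")]
    + [("model-b", "model-c")] * 3 + [("model-c", "model-b")]
    + [("model-a", "model-c")] * 3 + [("model-c", "model-a")]
)
ratings = to_elo_scale(fit_bradley_terry(matches))
print({name: round(r) for name, r in ratings.items()})
```

Under the standard 400-point convention, `win_probability(1711, 1574)` comes out near 0.69, though that reading of the leaderboard gap only holds if the benchmark's scale follows the same convention.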

The benchmark’s three-model judging panel has a mean cross-judge winner agreement of 0.55 on overlapping matchups: on average, two judges pick the same winner only slightly more than half the time, indicating only modest consensus in debate evaluation.
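One natural reading of "mean cross-judge winner agreement" is: for each pair of judges, the share of matchups both judged where they picked the same winner, averaged over all judge pairs. The sketch below implements that reading with hypothetical judge names and verdicts; the benchmark's exact definition, including how ties are handled, may differ.

```python
from itertools import combinations


def mean_pairwise_agreement(verdicts: dict[str, dict[str, str]]) -> float:
    """Average, over judge pairs, of the share of shared matchups with the same winner."""
    rates = []
    for judge_1, judge_2 in combinations(sorted(verdicts), 2):
        shared = verdicts[judge_1].keys() & verdicts[judge_2].keys()
        if not shared:
            continue  # this pair never judged the same matchup
        same = sum(verdicts[judge_1][m] == verdicts[judge_2][m] for m in shared)
        rates.append(same / len(shared))
    return sum(rates) / len(rates) if rates else float("nan")


# Hypothetical verdicts: judge -> {matchup id -> declared winner}.
verdicts = {
    "judge-1": {"m1": "A", "m2": "B", "m3": "A", "m4": "B"},
    "judge-2": {"m1": "A", "m2": "A", "m3": "A", "m4": "A"},
    "judge-3": {"m1": "B", "m2": "B", "m3": "A"},
}
print(mean_pairwise_agreement(verdicts))
```

If every matchup has exactly two possible winners, two judges choosing at random would agree about half the time, which is a useful baseline when reading a figure like 0.55.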

What This Means

These benchmark developments signal a maturation in AI evaluation methodology, moving beyond simple model comparisons toward deployment-realistic assessment. TokenArena’s endpoint-level analysis shows that enterprises cannot rely on model-level benchmarks alone: deployment configuration significantly affects real-world performance and cost-effectiveness.

The emergence of workload-specific evaluation reflects growing enterprise sophistication in AI procurement. Organizations increasingly recognize that optimal model selection depends on specific use case requirements, from chat-heavy customer service to reasoning-intensive analysis workflows.

Enterprise safety benchmarks like Partial Evidence Bench address governance-critical failure modes that traditional academic benchmarks miss. As AI agents gain broader enterprise deployment, evaluating behavior under access control constraints becomes essential for maintaining security and compliance.

The continued evolution of debate benchmarks shows how hard it is to evaluate subjective AI capabilities. Mathematical and coding benchmarks can be scored objectively, but open-ended skills such as argumentation and persuasion need evaluation frameworks that account for judge variability and task complexity.

FAQ

Why do identical AI models perform differently across endpoints?

Deployment factors such as quantization strategies, decoding approaches, regional infrastructure, and serving stacks create performance variations. In TokenArena, the same model showed accuracy differences of up to 12.5 points on math and coding tasks depending on these implementation choices.

How should enterprises choose AI endpoints for specific workloads?

Enterprises should compare endpoints using the input-to-output token ratio of their own workload rather than a single generic price or leaderboard. Chat, retrieval-augmented, and reasoning workloads have different cost-performance profiles that can dramatically reorder endpoint rankings.

What makes enterprise AI safety benchmarks different from academic ones?

Enterprise benchmarks like Partial Evidence Bench test real-world constraints like access controls and authorization boundaries. They evaluate whether AI systems safely handle incomplete information rather than just accuracy on complete datasets.

Sources

- Token Arena: A Continuous Benchmark Unifying Energy and Cognition in AI Inference – arXiv AI

- Tesla Model Y is first car to meet new US driver assistance safety benchmark – TechCrunch

- Partial Evidence Bench: Benchmarking Authorization-Limited Evidence in Agentic Systems – arXiv AI

- Update to the LLM Debate Benchmark: GPT-5.5, Grok 4.3, DeepSeek V4 Pro, GLM-5.1, Kimi K2.6, Qwen 3.6 Max Preview, Xiaomi MiMo V2.5 Pro, Tencent Hy3 Preview, and Mistral Medium 3.5 High Reasoning added – Reddit Singularity