Open-source AI models such as Meta’s Llama and Mistral AI’s models have fundamentally shifted how enterprises approach artificial intelligence deployment, with new research revealing that smaller, efficiently trained models can outperform larger counterparts when optimized for real-world inference. Recent advances in fine-tuning methods and local inference are enabling organizations to deploy sophisticated AI systems without relying on expensive cloud-based APIs, according to multiple industry analyses.

Revolutionary Training Methodologies Reshape Model Development

The traditional approach to large language model development has been challenged by research from the University of Wisconsin-Madison and Stanford University. Their Train-to-Test (T²) scaling framework shows that training substantially smaller models on far more data, then spending the compute saved at deployment on multiple inference samples per query, can outperform conventional scaling approaches.
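To make the trade-off concrete, here is a back-of-the-envelope sketch. It assumes the widely used rules of thumb that training costs about 6 FLOPs per parameter per training token and inference about 2 FLOPs per parameter per generated token. These approximations, the model sizes, and the budgets are illustrative assumptions, not the T² paper's own cost model.

```python
# Rule-of-thumb compute accounting (an assumption, not the T² paper's cost model):
#   training  ~ 6 FLOPs per parameter per training token
#   inference ~ 2 FLOPs per parameter per generated token


def train_flops(params: int, tokens: int) -> int:
    """Approximate training compute for a model with `params` parameters."""
    return 6 * params * tokens


def infer_flops(params: int, tokens_out: int, samples: int = 1) -> int:
    """Approximate compute for one query that draws `samples` independent outputs."""
    return 2 * params * tokens_out * samples


def tokens_for_budget(params: int, budget: int) -> int:
    """Training tokens a model of this size can consume within a fixed budget."""
    return budget // (6 * params)


def samples_for_budget(params: int, tokens_out: int, budget: int) -> int:
    """Samples per query a model of this size can afford within a fixed budget."""
    return budget // (2 * params * tokens_out)


# Hypothetical sizes and budgets, chosen only to make the arithmetic easy to follow.
LARGE, SMALL = 70_000_000_000, 7_000_000_000
ANSWER_TOKENS = 500
TRAIN_BUDGET = train_flops(LARGE, 1_400_000_000_000)  # 70B model on 1.4T tokens
QUERY_BUDGET = infer_flops(LARGE, ANSWER_TOKENS)       # one answer from the 70B model

small_tokens = tokens_for_budget(SMALL, TRAIN_BUDGET)
small_samples = samples_for_budget(SMALL, ANSWER_TOKENS, QUERY_BUDGET)
print(f"Same training budget: 7B model sees {small_tokens:.2e} tokens")
print(f"Same per-query budget: 7B model can draw {small_samples} samples")
```

Under these assumptions, a 7B model trained with the 70B model's budget sees ten times as many tokens (1.40e+13) and can draw ten samples per query for the cost of one 70B answer. Whether those ten samples actually beat the single larger-model answer depends on the task and on how the samples are combined; the arithmetic only shows what the budget buys.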

This challenges the established assumption that bigger models automatically yield better results. The research shows that inference-time scaling techniques, such as generating multiple reasoning samples per query during deployment, can substantially improve accuracy while keeping per-query costs manageable, because each sample comes from a cheaper model. For enterprise developers training custom models, this offers a practical blueprint for maximizing return on investment without frontier-scale computational resources.
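One common way to turn multiple samples into a single answer is majority voting (often called self-consistency). The sketch below is a minimal illustration under stated assumptions: `make_noisy_model` is a hypothetical stand-in for a sampled LLM, answers are compared as exact strings, and nothing here is claimed to be the aggregation method used in the T² research.

```python
import random
from collections import Counter
from typing import Callable, List


def majority_vote(answers: List[str]) -> str:
    """Return the most frequent answer; ties go to the answer that appeared first."""
    if not answers:
        raise ValueError("need at least one answer")
    counts = Counter(answers)
    top = max(counts.values())
    return next(a for a in answers if counts[a] == top)


def sample_and_vote(model: Callable[[str], str], prompt: str, k: int) -> str:
    """Draw k independent samples for one prompt and aggregate them by majority vote."""
    return majority_vote([model(prompt) for _ in range(k)])


def make_noisy_model(p_correct: float, seed: int) -> Callable[[str], str]:
    """Hypothetical stand-in for a sampled LLM: right with probability p_correct,
    otherwise a scattered wrong guess (which can occasionally match by chance)."""
    rng = random.Random(seed)

    def model(prompt: str) -> str:
        if rng.random() < p_correct:
            return "42"
        return str(rng.randint(0, 99))

    return model


def accuracy(k: int, trials: int = 500, seed: int = 0) -> float:
    """Fraction of trials in which the k-sample vote returns the correct answer."""
    model = make_noisy_model(p_correct=0.6, seed=seed)
    hits = sum(sample_and_vote(model, "What is 6 * 7?", k) == "42" for _ in range(trials))
    return hits / trials


print("1 sample  :", accuracy(k=1))
print("15 samples:", accuracy(k=15))
```

With a model that is right 60% of the time and scatters its wrong answers, the 15-sample vote should be correct far more often than a single sample. Voting helps only when errors disagree with one another; if a model is consistently wrong in the same way, extra samples just repeat the mistake.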

The implications extend beyond cost optimization. Organizations can now achieve strong performance on complex reasoning tasks using models that fit within practical deployment budgets, making sophisticated AI capabilities accessible to a broader range of enterprises.

Fine-Tuning Democratizes Advanced AI Capabilities

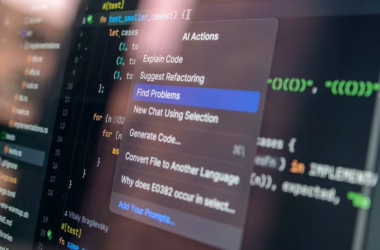

The democratization of fine-tuning through platforms like Hugging Face has made advanced AI customization accessible to organizations without extensive machine learning infrastructure. Modern fine-tuning workflows built on PyTorch and the Hugging Face Transformers library let developers adapt pre-trained models to specific use cases with a fraction of the compute needed to train from scratch.

Key technical advances in fine-tuning include:

- Parameter-efficient methods like LoRA (Low-Rank Adaptation) that freeze the pre-trained weights and train only small low-rank update matrices added to them

- Quantization techniques that compress models for deployment on consumer-grade hardware

- Domain-specific adaptation strategies that preserve general capabilities while enhancing task-specific performance
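A minimal sketch of the LoRA idea in plain Python, assuming a single d × k weight matrix W: the pre-trained W stays frozen, and training updates only B (d × r) and A (r × k), so the effective weight is W + (α/r)·B·A. Real libraries such as Hugging Face PEFT apply this to tensors inside the model; the list-based helpers and the 4096 × 4096, rank-8 example here are illustrative assumptions.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python matrix product a @ b."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_weight(w: Matrix, b: Matrix, a: Matrix, alpha: float) -> Matrix:
    """Effective weight W + (alpha / r) * B @ A.

    W (d x k) stays frozen; only B (d x r) and A (r x k) are trained.
    The LoRA paper initializes B to zeros, so training starts from W exactly.
    """
    r = len(a)  # adapter rank = number of rows in A
    scale = alpha / r
    delta = matmul(b, a)
    return [[w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]


def trainable_params(d: int, k: int, r: int) -> Tuple[int, int]:
    """(full fine-tuning, LoRA) trainable parameter counts for one d x k matrix."""
    return d * k, r * (d + k)


# Toy check: a rank-1 adapter nudges a single entry of a 2 x 3 weight.
W = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
B = [[1.0], [0.0]]     # d x r = 2 x 1
A = [[0.0, 0.0, 2.0]]  # r x k = 1 x 3
print(lora_weight(W, B, A, alpha=1.0))  # [[1.0, 0.0, 2.0], [0.0, 1.0, 0.0]]

# Parameter savings for a hypothetical 4096 x 4096 projection at rank 8.
full, lora = trainable_params(4096, 4096, r=8)
print(full, lora, f"{lora / full:.2%}")  # 16777216 65536 0.39%
```

For that hypothetical layer, the adapter trains 65,536 parameters instead of about 16.8 million, under 0.4% of the full matrix, which is why LoRA fine-tuning fits on much smaller hardware.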

These developments have particularly benefited organizations working in specialized domains where off-the-shelf models may lack the necessary context or terminology. Financial institutions, healthcare organizations, and law firms can now create highly specialized AI assistants without training from scratch or sharing sensitive data with external providers.

Local Inference Challenges Traditional Security Models

A significant shift toward local AI inference is creating new security considerations for enterprise IT departments. According to a VentureBeat analysis, the traditional CISO playbook of controlling browser-based AI access is becoming inadequate as employees increasingly run capable models locally on their laptops.

This trend, dubbed “Shadow AI 2.0” or the “bring your own model” era, stems from three converging factors:

- Consumer-grade accelerators now support serious AI workloads: MacBook Pros with 64GB of unified memory can run quantized 70B-class models

- Mainstream quantization has made model compression straightforward and accessible

- Improved model efficiency allows practical inference on standard enterprise hardware
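The memory claim can be checked with simple arithmetic, and the core of quantization fits in a few lines. The sketch assumes symmetric per-tensor "absmax" quantization, one simple scheme among many; production formats typically store a scale per small block of weights, which adds a little overhead beyond the nominal bit width. The memory estimate covers weights only, not the KV cache or runtime overhead.

```python
from typing import List, Tuple


def quantize_absmax(values: List[float], bits: int = 8) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map [-max|x|, max|x|] onto signed integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak > 0 else 1.0
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Recover approximate float values from integers and the shared scale."""
    return [x * scale for x in q]


def weight_memory_gb(params: int, bits_per_weight: float) -> float:
    """Memory for the weights alone, in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


weights = [0.12, -0.5, 0.33, 0.01, -0.27]
q, scale = quantize_absmax(weights, bits=4)
restored = dequantize(q, scale)
print(q, max(abs(a - b) for a, b in zip(weights, restored)))

# Prints 140, 70 and 35 GB for the three bit widths.
for bits in (16, 8, 4):
    print(f"70B parameters at {bits:>2}-bit: {weight_memory_gb(70_000_000_000, bits):.0f} GB")
```

At 16 bits a 70B model needs about 140 GB for its weights alone, while at 4 bits it needs about 35 GB, which leaves headroom on a 64GB machine. That is the arithmetic behind running 70B-class models on a laptop.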

The security implications are profound. When inference happens locally, traditional data loss prevention (DLP) systems, which typically inspect traffic bound for cloud AI services, cannot observe the interaction. This creates a blind spot where sensitive data is processed without organizational oversight or governance controls.

Enterprise AI Security Faces Critical Gaps

Recent survey data reveals alarming disconnects between executive confidence and actual AI security posture. The Gravitee State of AI Agent Security 2026 survey of 919 executives and practitioners found that 82% of executives believe their policies protect against unauthorized agent actions, yet 88% reported AI agent security incidents in the past twelve months.

More concerning, only 21% have runtime visibility into agent activities, while Arkose Labs research indicates 97% of enterprise security leaders expect material AI-agent-driven incidents within 12 months. Despite this risk, only 6% of security budgets address AI-specific threats.

These statistics highlight a critical infrastructure gap: monitoring without enforcement, enforcement without isolation. Organizations are investing heavily in observation capabilities while lacking the runtime controls necessary to prevent unauthorized AI actions from causing damage.
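Closing the gap between monitoring and enforcement means that a policy check must be able to stop an action, not just record it. The following is a hypothetical sketch, not any vendor's product or API: a deny-by-default allowlist sits in front of every tool call an agent makes, logs each decision, and raises an exception when a call is not permitted. Tool names and the agent ID are invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Set, Tuple


class PolicyViolation(Exception):
    """Raised when an agent attempts a tool call the policy does not allow."""


@dataclass
class ToolPolicy:
    """Hypothetical deny-by-default allowlist of tools, per agent."""
    allowed: Dict[str, Set[str]]
    audit_log: List[Tuple[str, str, bool]] = field(default_factory=list)

    def authorize(self, agent_id: str, tool: str) -> bool:
        ok = tool in self.allowed.get(agent_id, set())
        self.audit_log.append((agent_id, tool, ok))  # monitoring: every decision is recorded
        return ok


def guarded_call(policy: ToolPolicy, registry: Dict[str, Callable[..., Any]],
                 agent_id: str, tool: str, **kwargs: Any) -> Any:
    """Enforcement: the tool runs only if the policy allows it at call time."""
    if not policy.authorize(agent_id, tool):
        raise PolicyViolation(f"{agent_id} is not allowed to call {tool}")
    return registry[tool](**kwargs)


# Hypothetical tools and agent, for illustration only.
registry = {
    "search_docs": lambda query: f"results for {query!r}",
    "delete_records": lambda table: f"deleted {table}",
}
policy = ToolPolicy(allowed={"support-agent": {"search_docs"}})

print(guarded_call(policy, registry, "support-agent", "search_docs", query="refund policy"))
try:
    guarded_call(policy, registry, "support-agent", "delete_records", table="customers")
except PolicyViolation as err:
    print("blocked:", err)
print(policy.audit_log)
```

The audit log provides the monitoring; the exception provides enforcement. Isolation, such as running tools in a sandbox with narrowly scoped credentials, would be a separate layer that this sketch does not cover.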

Technical Architecture Advances Enable Broader Adoption

The technical foundations supporting open-source AI deployment have matured significantly. Modern model architectures incorporate several key innovations:

Transformer Architecture Optimizations: Improvements in attention mechanisms (for example, grouped-query attention, in which several query heads share one key/value head to shrink the inference-time cache) and in normalization layers have enhanced both training efficiency and inference speed. These optimizations enable smaller models to reach performance levels that previously required significantly more parameters.
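One attention optimization with a directly measurable effect on inference is grouped-query attention (GQA), in which several query heads share a single key/value head. The sketch below estimates the key/value cache for a hypothetical 70B-class configuration (80 layers, 64 query heads of dimension 128, a 4,096-token context, 16-bit values) under standard multi-head attention and with 8 shared KV heads; the shape is an illustrative assumption.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int, seq_len: int,
                   batch: int = 1, bytes_per_elem: int = 2) -> int:
    """Keys and values cached for every layer, KV head, and token (16-bit by default)."""
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_elem  # 2 = K and V


GIB = 2 ** 30
# Hypothetical 70B-class shape: 80 layers, 64 query heads of dimension 128, 4,096-token context.
mha = kv_cache_bytes(layers=80, kv_heads=64, head_dim=128, seq_len=4096)  # one KV head per query head
gqa = kv_cache_bytes(layers=80, kv_heads=8, head_dim=128, seq_len=4096)   # 8 query heads share each KV head
print(f"MHA: {mha / GIB:.2f} GiB, GQA: {gqa / GIB:.2f} GiB")  # MHA: 10.00 GiB, GQA: 1.25 GiB
```

Cutting the cache by 8x per sequence frees memory for longer contexts or larger batches, which is one reason inference speed and hardware requirements have improved without adding parameters.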

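Memory-Efficient Training: Techniques like gradient checkpointing (recomputing intermediate activations during the backward pass instead of storing them all) and mixed-precision training (computing in 16-bit formats while keeping 32-bit copies where precision matters) have reduced memory requirements, making it feasible to fine-tune large models on more modest hardware.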
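A rough memory budget shows why these techniques matter. The sketch assumes one commonly cited accounting for mixed-precision training with Adam, about 16 bytes per trainable parameter (16-bit weights and gradients plus 32-bit master weights and two optimizer moments), and a simplified model of gradient checkpointing that keeps activations only at segment boundaries and recomputes one segment at a time. The 40M adapter size is hypothetical, and exact figures vary by framework and optimizer.

```python
import math
from typing import Optional

# Commonly cited per-parameter accounting for mixed-precision training with Adam:
# 16-bit weights (2) + 16-bit gradients (2) + 32-bit master weights, momentum, variance (4 + 4 + 4).
BYTES_TRAINABLE = 2 + 2 + 4 + 4 + 4  # = 16 bytes per trainable parameter
BYTES_FROZEN = 2                     # frozen 16-bit weights need no gradients or optimizer state


def training_state_gb(frozen_params: int, trainable_params: int) -> float:
    """Weights, gradients, and optimizer state in decimal GB; activations are excluded."""
    return (frozen_params * BYTES_FROZEN + trainable_params * BYTES_TRAINABLE) / 1e9


def peak_layer_activations(layers: int, segment: Optional[int] = None) -> int:
    """Rough count of layer activations held at once during the backward pass.

    Without checkpointing, every layer's activations are kept. With checkpointing,
    only segment boundaries are kept and one segment is recomputed at a time.
    """
    if segment is None:
        return layers
    return math.ceil(layers / segment) + segment


SEVEN_B = 7_000_000_000
print(f"Full fine-tune of 7B: {training_state_gb(0, SEVEN_B):.0f} GB")  # 112 GB
print(f"LoRA, 40M adapter params: {training_state_gb(SEVEN_B, 40_000_000):.1f} GB")
print(peak_layer_activations(64), peak_layer_activations(64, segment=8))  # 64 16
```

Under these assumptions, fully fine-tuning a 7B model needs roughly 112 GB before counting activations, whereas a LoRA run that freezes the base weights needs under 15 GB, and checkpointing in segments of 8 cuts the activations held for a 64-layer model from 64 layers' worth to about 16.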

Deployment Flexibility: Container-based deployment systems and model serving frameworks have simplified the process of moving from development to production, reducing the technical expertise required for enterprise adoption.

These architectural advances, combined with comprehensive model hubs like Hugging Face, have created an ecosystem where organizations can rapidly prototype, customize, and deploy AI solutions tailored to their specific requirements.

What This Means

The convergence of efficient training methodologies, accessible fine-tuning tools, and capable local hardware represents a fundamental shift in enterprise AI strategy. Organizations no longer need to choose between customization and cost-effectiveness, as demonstrated by the Train-to-Test framework showing that smaller, well-trained models can outperform larger alternatives when properly optimized for inference scenarios.

However, this democratization introduces new challenges. The rise of local inference capabilities creates security blind spots that traditional IT governance models cannot address. Organizations must evolve their security frameworks to account for on-device AI processing while maintaining the benefits of customized, locally controlled AI systems.

The technical implications suggest that successful enterprise AI strategies will increasingly focus on model efficiency rather than raw scale, leveraging open-source foundations with targeted fine-tuning to achieve domain-specific excellence. This approach offers the dual benefits of cost optimization and data sovereignty while requiring new approaches to security and governance.

FAQ

Q: How do open-source models like Llama compare to proprietary alternatives for enterprise use?

A: Open-source models can offer performance comparable to proprietary alternatives on many tasks while providing greater customization flexibility, data sovereignty, and cost predictability. The Train-to-Test research indicates that properly optimized smaller models can outperform larger models for specific use cases.

Q: What security risks does local AI inference introduce to enterprise environments?

A: Local inference creates visibility gaps where traditional DLP systems cannot monitor AI interactions with sensitive data. This “Shadow AI 2.0” phenomenon requires new security architectures that can observe and control on-device AI processing without relying solely on network-based monitoring.

Q: What technical requirements are necessary for effective AI model fine-tuning?

A: Parameter-efficient methods like LoRA, combined with quantization, keep the computational requirements for fine-tuning modest. A high-end laptop with sufficient memory can handle many fine-tuning tasks on smaller models, though GPU acceleration significantly improves training speed, especially for larger models.