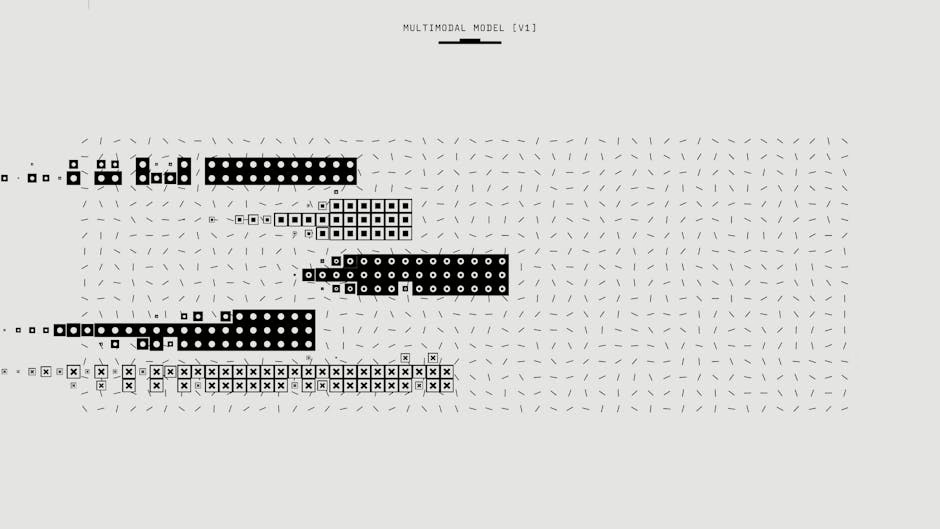

Multimodal AI advanced on several fronts in May 2026. Thinking Machines unveiled real-time interaction models that process voice and video simultaneously, and new research described how vision-language models are converging toward unified representations of reality. These developments mark a shift from turn-based AI interactions toward more natural, continuous human-AI communication.

Thinking Machines Debuts Real-Time Interaction Models

Thinking Machines, the startup founded by former OpenAI CTO Mira Murati and researcher John Schulman, announced a research preview of “interaction models” — multimodal systems designed for continuous, real-time conversation across voice and video channels. According to VentureBeat, these models treat interactivity as a core architectural feature rather than an external software layer.

The new approach addresses a fundamental limitation in current AI systems, which operate in “turn-based” mode where users provide input, wait for processing, and receive output. Thinking Machines’ interaction models can process and respond to inputs while simultaneously handling new incoming data streams, enabling more fluid conversations.
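The difference between the two modes can be illustrated with a toy sketch. This is not Thinking Machines' architecture, which the company has not detailed here; the function names and the chunked "stream" are hypothetical stand-ins. It only shows the scheduling idea: a responder that reacts while input is still arriving, compared with one that waits for the full turn.

```python
import asyncio


async def stream_input(chunks, queue, log):
    """Hypothetical input stream: pushes chunks (e.g. audio frames) as they arrive."""
    for chunk in chunks:
        log.append(f"in:{chunk}")
        await queue.put(chunk)
        await asyncio.sleep(0)  # yield control so the responder can run between chunks
    await queue.put(None)  # sentinel marking the end of the stream


async def respond_continuously(queue, log):
    """Reacts to each chunk as soon as it is available, without waiting for the stream to end."""
    while True:
        chunk = await queue.get()
        if chunk is None:
            break
        log.append(f"out:{chunk}")


async def interactive(chunks):
    """Runs input and response concurrently and returns the order of events."""
    log = []
    queue = asyncio.Queue()
    await asyncio.gather(
        stream_input(chunks, queue, log),
        respond_continuously(queue, log),
    )
    return log


def turn_based(chunks):
    """Baseline: consume the whole input first, then produce all output."""
    log = [f"in:{c}" for c in chunks]
    log += [f"out:{c}" for c in chunks]
    return log


if __name__ == "__main__":
    print("turn-based: ", turn_based(["a", "b", "c"]))
    print("interactive:", asyncio.run(interactive(["a", "b", "c"])))
```

In the interactive log, responses to early chunks appear before the last input chunk has arrived, which is the property the article describes as continuous rather than turn-based conversation.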

The company reported “impressive gains on third-party benchmarks and reduced latency” compared to traditional multimodal systems. However, the models remain in limited research preview, with broader access planned for the coming months as the company collects feedback from early users.

Auto-Rubric Framework Improves Multimodal Training

Researchers introduced Auto-Rubric as Reward (ARR), a new framework for training multimodal generative models that addresses key weaknesses in current reward modeling approaches. Published on arXiv, the research tackles how existing reinforcement learning from human feedback (RLHF) methods collapse complex human preferences into simple scalar values.

ARR externalizes a vision-language model’s internal preference knowledge as explicit, prompt-specific rubrics before any pairwise comparison. This converts implicit preference structures into “independently verifiable quality dimensions,” reducing evaluation biases, such as positional bias, that affect current training methods.

The framework includes Rubric Policy Optimization (RPO), which distills structured multi-dimensional evaluation into binary rewards for generative training. On text-to-image generation and image editing benchmarks, ARR-RPO outperformed both pairwise reward models and VLM judges, demonstrating more reliable and data-efficient multimodal alignment.
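A rough sketch of the idea follows. It is not the paper's implementation: the `Criterion` type, the simple keyword checks (stand-ins for a vision-language model's yes/no judgments), and the "more criteria passed wins" rule are all assumptions made for illustration. Text captions stand in for generated images.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Criterion:
    """One rubric dimension. `check` is a hypothetical stand-in for a VLM judgment."""
    name: str
    check: Callable[[str], bool]


def rubric_verdicts(candidate: str, rubric: List[Criterion]) -> Dict[str, bool]:
    """Evaluate one candidate against each criterion independently of any other candidate."""
    return {c.name: c.check(candidate) for c in rubric}


def binary_rewards(a: str, b: str, rubric: List[Criterion]) -> Tuple[int, int]:
    """Collapse per-criterion verdicts into one binary reward per candidate.

    Assumption: the candidate passing more criteria gets reward 1 and the
    other gets 0; a tie gives both 0. The paper's actual rule may differ.
    """
    passed_a = sum(rubric_verdicts(a, rubric).values())
    passed_b = sum(rubric_verdicts(b, rubric).values())
    if passed_a == passed_b:
        return (0, 0)
    return (1, 0) if passed_a > passed_b else (0, 1)


if __name__ == "__main__":
    # Hypothetical rubric for the prompt "a red car in the rain".
    rubric = [
        Criterion("shows a red car", lambda text: "red car" in text),
        Criterion("shows rain", lambda text: "rain" in text),
    ]
    print(binary_rewards("a red car in the rain", "a blue car in the sun", rubric))
```

Because each candidate is scored against the rubric on its own, swapping the order of the two candidates only swaps the rewards. That is one simple way to see how explicit criteria can remove dependence on presentation order.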

Enterprise Voice AI Scales with GPT-5.4 Integration

Parloa, a Berlin-based startup, deployed its AI Agent Management Platform (AMP) using OpenAI’s GPT-5.4 models to handle enterprise customer service interactions at scale. According to OpenAI’s blog, the platform enables businesses to design and deploy voice-driven customer service systems without traditional rule-based programming.

The AMP platform allows teams to define agent behavior in natural language rather than mapping rigid intents and conversation flows. Parloa’s system handles everything from simple call routing to complex, multi-step customer requests, with continuous testing against real scenarios before deployment.

Key capabilities include:

- Natural language behavior definition for voice agents

- Built-in simulation and evaluation tools

- Integration with internal enterprise systems

- Real-time conversation handling with performance monitoring

Ciaran O’Reilly Ibañez, Engineering Manager at Parloa, told OpenAI that “the models only matter if they work in production,” emphasizing the company’s focus on reliability and latency for real-time conversations.

AI Models Converge Toward Unified Reality Representation

Research published in Towards Data Science revealed that major reasoning models, regardless of their training data or architecture, are converging toward the same internal representation of reality as they improve in capability. This phenomenon, building on 2024 MIT research, suggests that effective AI systems naturally develop similar “thinking cores” when accurately modeling the world.

The convergence occurs across models trained on different modalities — text, images, audio, and video. As these systems scale and achieve better performance, they arrive at increasingly similar conclusions about how reality is structured, supporting what researchers call the “Platonic Representation Hypothesis.”
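One way to make "similar representations" measurable is to check whether two models place the same items near each other. The sketch below computes a nearest-neighbor overlap score between two embedding spaces. The function names and toy data are illustrative assumptions, and the article does not state which metric the researchers used.

```python
import math
from typing import List, Sequence, Set

Vector = Sequence[float]


def knn_indices(embeddings: List[Vector], i: int, k: int) -> Set[int]:
    """Indices of the k nearest neighbors of item i (Euclidean distance), excluding i."""
    dists = [
        (math.dist(embeddings[i], other), j)
        for j, other in enumerate(embeddings)
        if j != i
    ]
    return {j for _, j in sorted(dists)[:k]}


def mutual_knn_alignment(emb_a: List[Vector], emb_b: List[Vector], k: int) -> float:
    """Average fraction of k-nearest neighbors shared between two embedding spaces.

    Row i of emb_a and row i of emb_b must describe the same input (for
    example, an image and its caption). The two spaces may have different
    dimensionality because neighbors are computed within each space.
    """
    if len(emb_a) != len(emb_b):
        raise ValueError("both models must embed the same number of items")
    n = len(emb_a)
    shared = sum(
        len(knn_indices(emb_a, i, k) & knn_indices(emb_b, i, k)) for i in range(n)
    )
    return shared / (n * k)


if __name__ == "__main__":
    # Toy data: model B is a stretched, higher-dimensional copy of model A.
    model_a = [(0.0, 0.0), (1.0, 0.0), (10.0, 0.0), (11.0, 0.0)]
    model_b = [(0.0, 0.0, 0.0), (0.0, 2.0, 0.0), (0.0, 20.0, 0.0), (0.0, 22.0, 0.0)]
    print(mutual_knn_alignment(model_a, model_b, k=1))
```

A score near 1 means the two models agree on which items are neighbors even though their coordinates differ; a score near 0 means their neighborhood structure is unrelated.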

This finding has implications for multimodal AI development, suggesting that the most effective vision-language models will naturally develop compatible internal representations. The research indicates that successful modeling of reality constrains AI systems toward a single optimal representational structure, regardless of their initial training approach.

Chinese Firm Bets on Cost-Competitive Multimodal Models

SenseTime, a U.S.-sanctioned Chinese AI company, is pursuing a strategy focused on lower-cost multimodal models to compete globally despite quality gaps with leading systems. Co-founder Lin Dahua told CNBC that cheaper models could win market share even when trailing in performance benchmarks.

The Hong Kong-listed firm continues to expand internationally and is keeping its Middle East plans in place despite the sanctions. SenseTime’s approach reflects broader competitive dynamics in which platform advantages and cost efficiency may outweigh pure technical performance in multimodal AI adoption.

This strategy contrasts with the high-cost, high-performance approach of companies like OpenAI and Google, suggesting multiple viable paths in the evolving multimodal AI market.

What This Means

These developments signal a maturation phase for multimodal AI, moving beyond proof-of-concept demonstrations toward practical deployment at scale. The shift from turn-based to real-time interaction represents a fundamental architectural evolution that could enable AI systems to handle more natural, human-like conversations across multiple modalities simultaneously.

The convergence research suggests that competition among multimodal models may eventually center on implementation efficiency and specialized capabilities rather than fundamental representational differences. As models converge on similar representations of reality, differentiation will likely occur in deployment speed, cost, and domain-specific fine-tuning.

For enterprises, the combination of improved training methods (ARR), real-time interaction capabilities, and cost-competitive options creates more viable paths to multimodal AI adoption. However, the technology remains in early deployment phases, with most advanced systems still in limited preview or research stages.

FAQ

What makes interaction models different from current AI systems?

Interaction models process and respond to inputs while simultaneously handling new incoming data, enabling continuous conversation rather than turn-based exchanges. This architectural change allows for more natural, real-time communication across voice and video channels.

How does the Auto-Rubric framework improve AI training?

ARR turns a vision-language model’s implicit preference knowledge into explicit, prompt-specific rubrics before any pairwise comparison, which reduces biases such as positional bias and supports more reliable multimodal alignment. Combined with Rubric Policy Optimization, it outperformed pairwise reward models and VLM judges on text-to-image generation and image editing benchmarks.

Why are different AI models converging to similar representations?

Research suggests that as models become more accurate at modeling reality, they naturally develop similar internal representations because they are all modeling the same underlying reality. This convergence occurs regardless of training data type or initial architecture differences.