OpenAI ChatGPT Images 2.0 Launches With Text Generation Inside Images

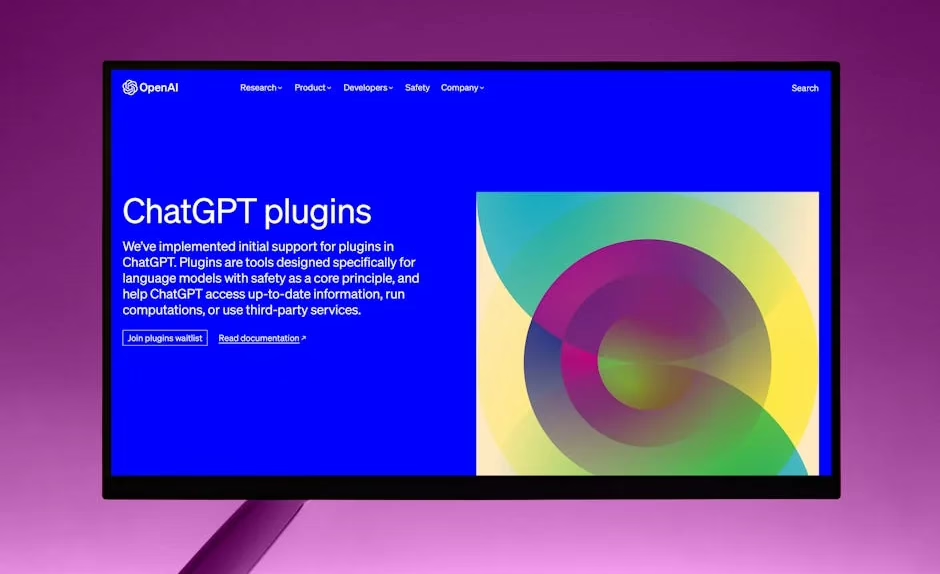

OpenAI on Monday officially launched ChatGPT Images 2.0, a multimodal AI system that generates images containing accurate multilingual text, full infographics, slides, maps, and manga-style illustrations. According to OpenAI’s announcement, the new `gpt-image-2` model represents “a fundamental shift in how the company views visual media.”

The system had been quietly tested for weeks on LM Arena under the codename “duct tape,” where it impressed users with its ability to generate long text blocks within images, realistic user interface screenshots, and research-backed visual content. ChatGPT Images 2.0 is now rolling out to all ChatGPT tiers and is available through OpenAI’s API.
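For developers who want to try text-in-image prompts through the API, the following is a minimal sketch rather than OpenAI's documented recipe. It assumes `gpt-image-2` is served through the same `images.generate` call that the `openai` Python SDK exposes for earlier image models. The helper names `build_text_image_prompt` and `generate_image`, the prompt wording, and the sample strings are all hypothetical.

```python
def build_text_image_prompt(title, lines, language="English"):
    """Compose a prompt that spells out the exact text the image should contain."""
    # Quote each line so the model can tell literal text from instructions.
    quoted = "\n".join('- "' + line + '"' for line in lines)
    return (
        'A clean infographic titled "' + title + '". '
        "Render the following " + language + " text exactly as written, "
        "one item per row:\n" + quoted
    )


def generate_image(prompt, model="gpt-image-2"):
    """Send the prompt to the Images API.

    Requires the `openai` package and an OPENAI_API_KEY environment variable.
    Assumption: the model name from the announcement works with this endpoint.
    """
    from openai import OpenAI  # imported lazily so the prompt helper runs without the SDK

    client = OpenAI()
    return client.images.generate(model=model, prompt=prompt)


# Placeholder multilingual strings, chosen only to exercise the prompt builder.
prompt = build_text_image_prompt(
    "Greetings", ["Hello", "Bonjour", "こんにちは"], language="multilingual"
)
print(prompt)
```

Calling `generate_image(prompt)` would send the request. It is left out of the script body so the prompt builder can be run without credentials.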

Advanced Text-in-Image Capabilities

ChatGPT Images 2.0’s standout feature is its ability to embed complex text directly into generated images without the typical AI artifacts that plague text generation in visual models. The system can produce:

- Multilingual text blocks within single images

- Complete infographics with data visualization

- Presentation slides with proper formatting

- Maps with labels and legends

- Manga and comic panels with speech bubbles

According to early user reports from LM Arena, the model can also generate realistic screenshots of popular websites and platforms, reproduce recognizable public figures like OpenAI CEO Sam Altman, and perform web research to incorporate real-time data into visual outputs.

Google Counters With Deep Research Agents

Google responded to OpenAI’s multimodal advances by launching Deep Research and Deep Research Max agents, built on the Gemini 3.1 Pro model. Google’s blog post details how these agents can “fuse open web data with proprietary enterprise information through a single API call.”

The new Google agents feature:

- Model Context Protocol (MCP) support for third-party data integration

- Native chart and infographics generation within research reports

- Enterprise data fusion combining public and private information sources

- Autonomous research workflows for finance, life sciences, and market intelligence

“We are launching two powerful updates to Deep Research in the Gemini API, now with better quality, MCP support, and native chart/infographics generation,” Google CEO Sundar Pichai wrote on X.

https://x.com/sundarpichai/status/2046627545333080316

Xiaomi Releases Efficient Open-Source Multimodal Models

Xiaomi entered the multimodal AI race with the release of MiMo-V2.5 and MiMo-V2.5-Pro under the MIT License. VentureBeat reported that these models excel at “agentic claw tasks” — systems where users communicate through third-party messaging apps to have agents complete tasks like content creation, email organization, and scheduling.

According to Xiaomi’s ClawEval benchmarks, the Pro model achieved a 63.8% success rate while using fewer tokens than competing models. The models are available for download from Hugging Face and can be modified for commercial applications.

Key advantages of Xiaomi’s approach:

- Token efficiency that lowers costs under usage-based billing

- Enterprise-friendly licensing under the MIT License

- Local deployment options for privacy-conscious organizations

- High performance on agent-based task completion
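To see why token efficiency and success rate both matter under usage-based billing, here is a rough sketch of the arithmetic. Only the 63.8% success rate comes from the reported benchmark. The token counts, the price, and the comparison model are hypothetical placeholders, and the formula assumes failed tasks are simply retried until one succeeds.

```python
def cost_per_success(tokens_per_task, price_per_million_tokens, success_rate):
    """Expected spend to obtain one successful task completion.

    Assumes each attempt succeeds independently with probability `success_rate`,
    so the expected number of attempts is 1 / success_rate.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    cost_per_attempt = tokens_per_task / 1_000_000 * price_per_million_tokens
    return cost_per_attempt / success_rate


# Hypothetical figures except for the 0.638 success rate reported for the Pro model.
efficient = cost_per_success(tokens_per_task=40_000,
                             price_per_million_tokens=2.0,
                             success_rate=0.638)
baseline = cost_per_success(tokens_per_task=100_000,
                            price_per_million_tokens=2.0,
                            success_rate=0.70)

print(f"efficient model: ${efficient:.3f} per successful task")
print(f"baseline model:  ${baseline:.3f} per successful task")
```

Under these made-up inputs, the model that uses fewer tokens comes out cheaper per completed task even though its success rate is lower. Real comparisons would need measured token counts and actual pricing.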

Clinical AI Applications Advance

Multimodal techniques are also reaching clinical text analysis. Researchers published a paper on arXiv describing an automated system that detects dosing errors in clinical trial narratives using gradient boosting with comprehensive multi-modal feature engineering.

The system combines 3,451 features spanning traditional NLP, dense semantic embeddings, domain-specific medical patterns, and transformer-based scores to train a LightGBM model. On the CT-DEB benchmark dataset with severe class imbalance (4.9% positive rate), the system achieved 0.8725 test ROC-AUC through 5-fold ensemble averaging.
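The evaluation idea in that paragraph, averaging the scores of models trained on different folds and then measuring ROC-AUC, can be sketched in plain Python. This is not the authors' pipeline: no LightGBM model is trained here, the two "folds" and their scores are invented toy values, and `roc_auc` and `average_folds` are illustrative helper names.

```python
def roc_auc(labels, scores):
    """ROC-AUC as the probability that a random positive outranks a random negative.

    Ties count as half a win. Because the metric depends only on ranking,
    it remains meaningful when positives are rare.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative label")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def average_folds(fold_scores):
    """Average per-example scores across models trained on different folds."""
    return [sum(column) / len(column) for column in zip(*fold_scores)]


# Toy test set: two positives among five examples (invented values).
labels = [0, 0, 1, 0, 1]
fold_a = [0.1, 0.6, 0.5, 0.2, 0.9]  # scores from a model trained on one fold split
fold_b = [0.3, 0.2, 0.7, 0.8, 0.7]  # scores from a model trained on another split

ensemble = average_folds([fold_a, fold_b])

print("fold A AUC:  ", round(roc_auc(labels, fold_a), 4))
print("fold B AUC:  ", round(roc_auc(labels, fold_b), 4))
print("ensemble AUC:", round(roc_auc(labels, ensemble), 4))
```

On this toy data each fold model misranks a different negative example, while the averaged scores place both positives above every negative. That is the kind of error cancellation fold averaging is meant to exploit.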

The research highlights the critical role of sentence embeddings in clinical text classification, with their removal causing the largest performance degradation (2.39%) despite contributing only 37.07% of total feature importance.

Enterprise Adoption Accelerates

Google’s internal data reveals the rapid enterprise adoption of multimodal AI systems. The company documented 1,302 real-world generative AI use cases from leading organizations, representing what Google calls “the fastest technological transformation we’ve seen.”

The majority of these deployments showcase agentic AI applications built with:

- Gemini Enterprise for multimodal reasoning

- Gemini CLI for developer integration

- Security Command Center for enterprise security

- AI Hypercomputer infrastructure for scalable deployment

Google notes that “production AI and agentic systems are now deployed in meaningful ways across virtually every one of the thousands of organizations” attending its Next ’26 conference in Las Vegas.

What This Means

The simultaneous launches from OpenAI, Google, and Xiaomi signal that multimodal AI has reached a critical inflection point. OpenAI’s ChatGPT Images 2.0 addresses the longstanding challenge of accurate text generation within images — a capability that opens new possibilities for automated content creation, data visualization, and educational materials.

Google’s emphasis on enterprise research workflows through Deep Research agents indicates the technology is moving beyond consumer applications toward mission-critical business intelligence. The ability to combine proprietary enterprise data with web research through a single API represents a significant step toward autonomous business analysis.

Xiaomi’s open-source approach with efficient token usage directly challenges the closed, expensive models from larger tech companies. Its focus on agentic tasks suggests a future where AI assistants can handle complex, multi-step workflows with minimal human oversight.

The rapid enterprise adoption documented by Google — over 1,300 production use cases — demonstrates that multimodal AI has moved from experimental to operational across industries. This transition suggests we’re entering what Google terms “the era of the agentic enterprise,” where AI systems don’t just assist but autonomously execute business processes.

FAQ

What makes ChatGPT Images 2.0 different from previous image generation models?

ChatGPT Images 2.0 can generate accurate multilingual text directly within images, create complete infographics and slides, and incorporate real-time research data into visual outputs. Previous models struggled with text accuracy and complex visual layouts.

How do Google’s Deep Research agents compare to ChatGPT’s capabilities?

Google’s Deep Research agents focus on autonomous research workflows that combine public web data with private enterprise information through a single API. They’re designed for business intelligence rather than general image generation, with native chart creation and MCP support.

Are Xiaomi’s open-source multimodal models competitive with closed models?

Xiaomi’s MiMo-V2.5-Pro achieved a 63.8% success rate on Xiaomi’s ClawEval benchmarks while using fewer tokens than competing models. The MIT License allows commercial modification and local deployment, making the models attractive for cost-conscious enterprises despite potentially lower absolute performance than closed models.