AI-powered coding assistants like GitHub Copilot and Cursor are generating unprecedented volumes of code, with initial acceptance rates reaching 80-90%. New research, however, reveals a troubling disconnect between these adoption metrics and real-world productivity gains. According to Waydev, a developer analytics firm tracking more than 10,000 software engineers across 50 companies, the long-term acceptance rate of AI-generated code drops to just 10-30% once subsequent revisions and debugging cycles are taken into account.

This emerging data challenges the prevailing narrative around AI coding productivity and highlights critical gaps in how organizations measure and optimize their AI development workflows. As enterprises invest heavily in coding agents and expand token budgets, understanding the true technical implications becomes essential for sustainable AI-assisted development practices.

The Token Budget Paradox in Developer Productivity

The rise of a “tokenmaxxing” culture among Silicon Valley developers has created a peculiar productivity metric. Large token budgets, the allowance of model input and output tokens an engineer is permitted to consume, have become status symbols. But token spend measures input, and this input-focused approach is fundamentally misaligned with output-oriented productivity goals.

According to TechCrunch, Alex Circei, CEO of Waydev, has seen engineering managers celebrate high code acceptance rates without accounting for downstream maintenance costs. The initial 80-90% acceptance rates mask significant technical debt, because developers frequently have to revise AI-generated code within weeks of first implementing it.

This phenomenon reflects a deeper architectural challenge: current large language models excel at pattern matching and syntax generation but struggle with complex system design and long-term maintainability considerations. The models lack sufficient context about existing codebases, architectural constraints, and business logic to generate truly production-ready code consistently.
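The gap between initial and long-term acceptance can be made concrete with a small calculation. The sketch below is not Waydev’s methodology, which the source does not describe; it assumes a hypothetical `Suggestion` record and counts a suggestion as durably accepted only if its lines are not rewritten within a 21-day window, an arbitrary stand-in for "within weeks".

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Suggestion:
    """One AI suggestion as a hypothetical analytics pipeline might record it."""
    accepted: bool                               # accepted in the editor?
    accepted_at: Optional[datetime] = None       # required whenever accepted is True
    first_revised_at: Optional[datetime] = None  # when its lines were first rewritten, if ever


def acceptance_rates(suggestions: list[Suggestion],
                     window: timedelta = timedelta(days=21)) -> tuple[float, float]:
    """Return (initial_rate, durable_rate) as fractions of all suggestions.

    A suggestion is durable if it was accepted and not rewritten within `window`
    (the 21-day default is an assumption, not a published threshold).
    """
    if not suggestions:
        return 0.0, 0.0
    accepted = [s for s in suggestions if s.accepted]
    durable = [s for s in accepted
               if s.first_revised_at is None or s.first_revised_at - s.accepted_at > window]
    return len(accepted) / len(suggestions), len(durable) / len(suggestions)


t0 = datetime(2025, 1, 6)
sample = ([Suggestion(True, t0, t0 + timedelta(days=5))] * 6   # accepted, rewritten after 5 days
          + [Suggestion(True, t0)] * 3                         # accepted, never revised
          + [Suggestion(False)])                               # rejected outright
print(acceptance_rates(sample))  # (0.9, 0.3)
```

With this made-up sample, 90% of suggestions are accepted in the editor but only 30% survive the window, mirroring the shape (not the data) of the figures above. Changing `window` changes the durable rate, so any real dashboard would need to state which window it uses.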

Spec-Driven Development Emerges as Enterprise Solution

To address these quality concerns, forward-thinking enterprises are adopting spec-driven development methodologies that fundamentally change how AI agents approach code generation. VentureBeat highlights how this approach requires AI agents to work from structured, context-rich specifications before writing any code.

Key technical advantages of spec-driven development include:

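- Formal verification capabilities: Specifications state correctness criteria explicitly, giving a basis for checking generated code against them and, where the specification is written formally, for mathematical verification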

- Context preservation: Agents maintain system-wide understanding throughout development cycles

- Quality gates: Automated validation against predefined specifications reduces debugging overhead

- Scalable architecture: Consistent specification formats enable better agent coordination
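A minimal sketch of the quality-gate idea, assuming a specification can be reduced to example-based acceptance criteria written before any code is generated. `Spec`, `quality_gate`, and the `slugify` example are hypothetical illustrations, not Kiro’s specification format, and checking examples is a much weaker guarantee than formal verification.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Spec:
    """Hypothetical minimal spec: a name plus (args, expected) acceptance cases."""
    name: str
    cases: list[tuple[tuple[Any, ...], Any]] = field(default_factory=list)


def quality_gate(spec: Spec, candidate: Callable[..., Any]) -> list[str]:
    """Run a generated candidate against every case and return the failures."""
    failures = []
    for args, expected in spec.cases:
        try:
            actual = candidate(*args)
        except Exception as exc:  # a crash counts as a failed case, not a gate error
            failures.append(f"{spec.name}{args}: raised {exc!r}")
            continue
        if actual != expected:
            failures.append(f"{spec.name}{args}: expected {expected!r}, got {actual!r}")
    return failures


# Spec written before any code is generated.
slugify_spec = Spec("slugify", [
    (("Hello World",), "hello-world"),
    (("  Trim me  ",), "trim-me"),
    (("",), ""),
])


# A plausible generated candidate that ignores the trimming requirement.
def generated_slugify(text: str) -> str:
    return text.lower().replace(" ", "-")


print(quality_gate(slugify_spec, generated_slugify))
```

Here the gate reports one failure, because the candidate turns surrounding whitespace into hyphens instead of trimming it. In a CI pipeline, a non-empty failure list would block the change before a human reviewer sees it.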

The Kiro IDE team demonstrated the approach’s effectiveness by using their own agentic coding environment to build Kiro IDE itself, cutting feature development cycles from two weeks to two days. Similarly, an AWS engineering team completed a rearchitecture project originally scoped at 18 months for 30 developers in 76 days, with just six people.
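A rough back-of-the-envelope comparison of the AWS figures, assuming the original scope meant 30 developers working for the full 18 months and that the 76 days are calendar days (neither detail is stated):

```latex
\begin{aligned}
\text{scoped effort} &= 30 \times 18 = 540 \ \text{developer-months} \\
\text{actual effort} &\approx 6 \times \frac{76\ \text{days}}{30.4\ \text{days/month}} = 6 \times 2.5 = 15 \ \text{developer-months} \\
\frac{\text{scoped effort}}{\text{actual effort}} &\approx \frac{540}{15} = 36
\end{aligned}
```

Staffing effort is only a proxy; it says nothing about how the delivered scope compared with the original plan.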

Local Inference Creates New Security Challenges

The shift toward on-device AI inference is creating new security blind spots for enterprise IT teams. VentureBeat’s security analysis finds that traditional cloud access security broker (CASB) policies and data loss prevention (DLP) systems cannot monitor locally executed AI models, because these controls largely work by inspecting traffic to cloud services, and a model running on a laptop generates no such traffic.

Three converging factors enable practical local inference:

- Consumer-grade accelerators: A MacBook Pro with 64GB of unified memory can run quantized 70B-parameter models at usable speeds

- Mainstream quantization: Compression techniques such as 4-bit quantization now routinely cut weight memory roughly fourfold relative to 16-bit weights while largely preserving output quality

- Optimized frameworks: Specialized inference engines like llama.cpp enable efficient local execution
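The 64GB figure can be sanity-checked with simple arithmetic: weight memory is roughly parameter count × bits per weight ÷ 8. The sketch below covers weights only; the KV cache, activations, and runtime overhead come on top, and real 4-bit formats also store per-block scaling data, so actual model files run somewhat larger than the estimate.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory to hold the weights alone, in GB (1 GB = 10**9 bytes).

    Billions of parameters times bytes per parameter gives gigabytes directly.
    Excludes the KV cache, activations, and runtime overhead.
    """
    return params_billions * bits_per_weight / 8


for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"70B @ {label}: ~{weight_memory_gb(70, bits):.0f} GB")
```

Only the 4-bit estimate (about 35 GB) leaves headroom in 64GB of unified memory; at 8-bit, the weights alone (about 70 GB) already exceed it.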

This “Shadow AI 2.0” phenomenon means employees can run sophisticated coding assistants entirely offline, processing potentially sensitive code without any network signatures that security teams can detect or monitor.
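Because local inference leaves no network trail, one hedged alternative is an endpoint inventory that looks for model files on disk. The sketch below assumes models are stored as GGUF, the file format used by llama.cpp, whose files begin with the four ASCII bytes `GGUF`. `find_local_models` and its 1 GB size threshold are hypothetical; the check misses other model formats and directories it is not pointed at, and finding a file says nothing about whether it is actually being used.

```python
import os
from pathlib import Path

GGUF_MAGIC = b"GGUF"  # GGUF model files (llama.cpp's format) start with these four bytes


def find_local_models(root: str, min_size_bytes: int = 1_000_000_000) -> list[Path]:
    """Hypothetical inventory check: list large files under `root` that look like GGUF models.

    Matches on the file header rather than the extension, since files can be renamed.
    """
    hits = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = Path(dirpath) / name
            try:
                if path.stat().st_size < min_size_bytes:
                    continue  # too small to be a useful model; skip the read
                with path.open("rb") as f:
                    if f.read(4) == GGUF_MAGIC:
                        hits.append(path)
            except OSError:
                continue  # unreadable or vanished file; skip it
    return hits
```

Calling `find_local_models(str(Path.home()))` would list candidate model files under the current user’s home directory, which a management agent could report centrally.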

Enterprise Platform Transformation for AI Agents

Salesforce’s recent “Headless 360” announcement is one of the most significant architectural shifts in enterprise software aimed at AI agent integration. The initiative exposes every platform capability as an API, a Model Context Protocol (MCP) tool, or a CLI command, so AI agents can operate without traditional user interfaces.

VentureBeat reports that the release ships more than 100 new tools that are immediately available to developers. It addresses the existential question facing enterprise software: whether companies still need graphical interfaces when AI agents can reason, plan, and execute tasks autonomously.

Jayesh Govindarajan, EVP at Salesforce and a key architect of Headless 360, emphasized that this represents a fundamental shift from “burying capabilities behind a UI” to exposing them for programmatic access. The approach enables more sophisticated agent workflows while still meeting enterprise security and governance requirements.
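At the wire level, exposing a capability as an MCP tool means an agent can discover it with a `tools/list` request and invoke it with a JSON-RPC 2.0 `tools/call` request carrying the tool `name` and its `arguments`. The sketch below builds such a request. The `update_opportunity_stage` tool and its fields are hypothetical, not taken from Headless 360; only the schema’s `required` keys are checked rather than full JSON Schema validation, and the transport (stdio or HTTP) is left out.

```python
import json
from itertools import count

_ids = count(1)  # JSON-RPC request ids must not be reused within a session

# Hypothetical tool definition, shaped like an entry in an MCP `tools/list` result.
UPDATE_STAGE_TOOL = {
    "name": "update_opportunity_stage",
    "description": "Move a sales opportunity to a new pipeline stage.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "opportunity_id": {"type": "string"},
            "stage": {"type": "string"},
        },
        "required": ["opportunity_id", "stage"],
    },
}


def tools_call_request(tool: dict, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 `tools/call` request after checking required arguments."""
    missing = [k for k in tool["inputSchema"].get("required", []) if k not in arguments]
    if missing:
        raise ValueError(f"missing required arguments: {missing}")
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool["name"], "arguments": arguments},
    })


# "opp-123" is a made-up identifier for illustration.
print(tools_call_request(UPDATE_STAGE_TOOL, {"opportunity_id": "opp-123", "stage": "Closed Won"}))
```

The point of the design is that the same capability a UI button would trigger becomes a named, schema-described operation an agent can plan around.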

Technical Architecture Considerations for AI Coding Integration

Successful enterprise AI coding implementations require careful attention to several technical architecture principles:

Model Selection and Deployment:

- Context window optimization: Larger context windows (32K tokens and up) let the model see more of the surrounding codebase, which significantly improves code understanding

- Fine-tuning strategies: Domain-specific training on internal codebases improves relevance

- Hybrid deployment: Combining cloud-based large models with local specialized models
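A minimal sketch of a hybrid-deployment router, assuming token counts and a sensitivity flag are computed upstream. The model names, the 32K and 200K context sizes, and the routing policy (sensitive code stays local; other prompts go to the cloud only when they do not fit locally) are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTarget:
    name: str            # hypothetical model label
    context_tokens: int  # maximum prompt size the model accepts
    runs_locally: bool


# Hypothetical deployment: a small on-device model plus a larger hosted one.
LOCAL = ModelTarget("local-code-model", context_tokens=32_000, runs_locally=True)
CLOUD = ModelTarget("cloud-large-model", context_tokens=200_000, runs_locally=False)


def route(prompt_tokens: int, contains_sensitive_code: bool) -> ModelTarget:
    """Choose a model under a simple, hypothetical policy.

    Sensitive code never leaves the machine. Everything else prefers the local
    model and falls back to the cloud model only when the prompt will not fit.
    """
    if contains_sensitive_code:
        if prompt_tokens > LOCAL.context_tokens:
            raise ValueError("sensitive prompt exceeds the local context window; trim or split it")
        return LOCAL
    if prompt_tokens <= LOCAL.context_tokens:
        return LOCAL
    if prompt_tokens <= CLOUD.context_tokens:
        return CLOUD
    raise ValueError("prompt exceeds every available context window")


print(route(8_000, contains_sensitive_code=True).name)     # local-code-model
print(route(120_000, contains_sensitive_code=False).name)  # cloud-large-model
```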

Integration Patterns:

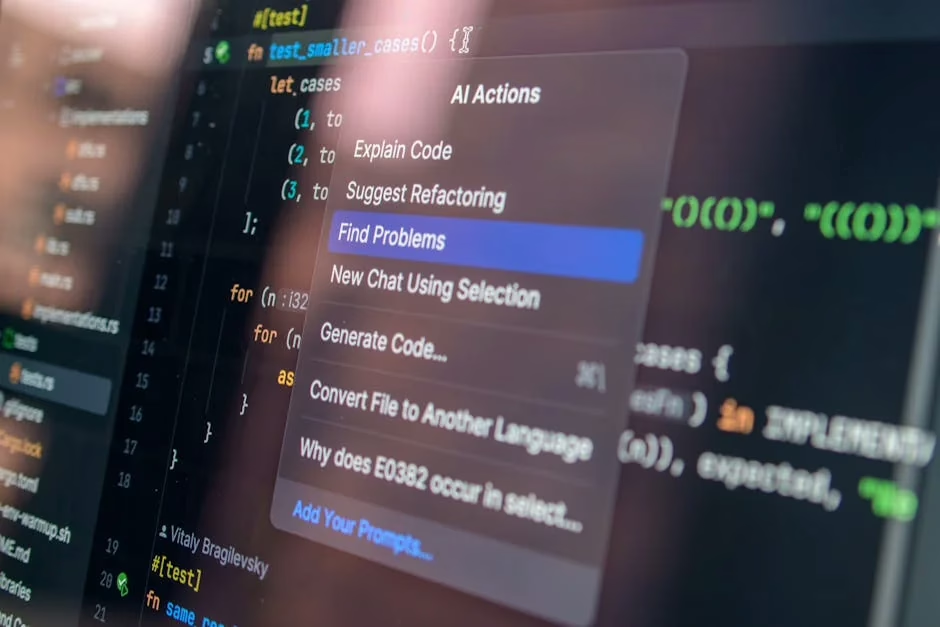

- IDE-native integration: Deep embedding within development environments rather than external tools

- Version control integration: Automatic tracking of AI contributions for audit and rollback capabilities

- Continuous integration pipelines: Automated testing and validation of AI-generated code
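One lightweight way to track AI contributions in version control is a commit-message trailer, the `Key: value` lines git reads from a message’s final paragraph (see `git interpret-trailers`). The `AI-Assisted:` trailer below is a hypothetical team convention, not a git or vendor standard; the sketch extracts it and computes the share of flagged commits for an audit report.

```python
import re
from typing import Optional

# Hypothetical team convention: commits that include AI-generated code end with a trailer like
#   AI-Assisted: copilot
TRAILER = re.compile(r"^AI-Assisted:[ \t]*(\S+)[ \t]*$", re.MULTILINE)


def ai_tool_for_commit(message: str) -> Optional[str]:
    """Return the tool named in the AI-Assisted trailer, or None if the commit has none."""
    final_paragraph = message.strip().split("\n\n")[-1]  # trailers live in the last paragraph
    match = TRAILER.search(final_paragraph)
    return match.group(1) if match else None


def ai_share(messages: list[str]) -> float:
    """Fraction of commits flagged as AI-assisted."""
    if not messages:
        return 0.0
    return sum(ai_tool_for_commit(m) is not None for m in messages) / len(messages)


commits = [
    "Add retry to payment client\n\nAI-Assisted: copilot",
    "Fix typo in README",
    "Refactor auth middleware\n\nSplit token parsing out.\n\nAI-Assisted: cursor\nReviewed-by: Dana",
]
print([ai_tool_for_commit(m) for m in commits])  # ['copilot', None, 'cursor']
print(ai_share(commits))
```

In a real repository, messages could be collected with `git log --format=%B%x00` and split on the NUL byte; because the flag lives in history, it also supports the rollback use case by identifying which commits to revert.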

Performance Monitoring:

- Real-time acceptance tracking: Acceptance metrics that go beyond initial approval rates to include revision cycles

- Code quality metrics: Cyclomatic complexity, maintainability indices, and technical debt measurement

- Developer velocity analysis: Time-to-completion for features rather than lines of code generated
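Cyclomatic complexity can be approximated as one plus the number of decision points in a function. The sketch below applies that rule with Python’s standard `ast` module; which node types count is an assumption (dedicated tools such as radon make their own, slightly different choices), and nested functions are folded into their parent’s score.

```python
import ast

# Each of these node types adds one independent path through the function.
_BRANCH_NODES = (ast.If, ast.IfExp, ast.For, ast.AsyncFor, ast.While, ast.ExceptHandler)


def cyclomatic_complexity(source: str) -> dict[str, int]:
    """Approximate cyclomatic complexity (1 + decision points) of each top-level function."""
    scores = {}
    for node in ast.parse(source).body:
        if not isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            continue
        score = 1
        for child in ast.walk(node):  # also walks nested functions, folding them into this score
            if isinstance(child, _BRANCH_NODES):
                score += 1
            elif isinstance(child, ast.BoolOp):
                score += len(child.values) - 1  # `a and b and c` adds two paths
            elif isinstance(child, ast.comprehension):
                score += 1 + len(child.ifs)  # the comprehension loop plus each `if` filter
        scores[node.name] = score
    return scores


sample = '''
def grade(score, curved):
    if score >= 90 or (curved and score >= 85):
        return "A"
    elif score >= 80:
        return "B"
    return "C"

def identity(x):
    return x
'''
print(cyclomatic_complexity(sample))  # {'grade': 5, 'identity': 1}
```

Tracking this score on AI-generated changes over time, alongside revision counts, gives a quality signal that raw acceptance rates cannot.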

What This Means

The AI coding tool landscape is experiencing a critical maturation phase where initial enthusiasm meets practical implementation challenges. While tools like Copilot and Cursor demonstrate impressive code generation capabilities, sustainable productivity gains require more sophisticated approaches to quality assurance, security governance, and architectural integration.

Enterprise organizations must move beyond simple adoption metrics to comprehensive productivity measurement frameworks that account for long-term maintenance costs and code quality. The emergence of spec-driven development and headless platform architectures suggests that successful AI coding integration demands fundamental changes to development methodologies rather than simple tool substitution.

Security teams face new challenges as local inference capabilities democratize AI access while creating monitoring blind spots. Organizations must develop new governance frameworks that balance developer productivity with security requirements in an increasingly distributed AI landscape.

FAQ

Q: What is the actual productivity impact of AI coding tools?

A: While initial code acceptance rates reach 80-90%, real-world productivity gains are limited by revision cycles that reduce effective acceptance to 10-30% of generated code, according to Waydev’s analysis of 10,000+ developers.

Q: How does spec-driven development improve AI code quality?

A: Spec-driven development requires AI agents to work from structured specifications that define system requirements and correctness criteria before generating code. Generated code can then be validated against those criteria (and formally verified where the specification is formal), which reduces debugging overhead.

Q: What security risks do local AI coding tools create?

A: Local inference bypasses traditional network monitoring, creating “Shadow AI 2.0” scenarios where employees can process sensitive code with AI models that security teams cannot detect or govern through conventional DLP systems.