Zyphra released ZAYA1-8B this week, a mixture-of-experts reasoning model with just 760 million active parameters that matches GPT-5-High and DeepSeek-V3.2 performance on third-party benchmarks. According to Zyphra’s announcement, the model was trained entirely on AMD Instinct MI300 GPUs, demonstrating a viable alternative to NVIDIA’s dominant position in AI training infrastructure.

The model is available on Hugging Face under the Apache 2.0 license, which permits commercial use. Where frontier models from OpenAI and Anthropic are widely assumed to rely on far larger parameter counts, ZAYA1-8B achieves competitive results through what Zyphra calls “intelligence density”: maximizing performance per parameter through architectural innovations.

Subquadratic Claims 1,000x Efficiency Breakthrough

Miami startup Subquadratic emerged from stealth Tuesday claiming that its SubQ 1M-Preview model is the first large language model built on a fully subquadratic architecture. According to the company’s announcement, the approach reduces attention compute by nearly 1,000 times compared to frontier models at 12 million tokens, which, if validated, would be the largest efficiency gain in transformer history.

Subquadratic raised $29 million in seed funding at a $500 million valuation, with investors including Tinder co-founder Justin Mateen and former SoftBank Vision Fund partner Javier Villamizar. The company launched three products into private beta: an API with full context window access, SubQ Code for command-line development, and SubQ Search.

AI researchers have responded with skepticism to the extraordinary efficiency claims. VentureBeat reported that critics questioned what they described as cherry-picked benchmarks, along with the gated access model, and that some compared the situation to previous overhyped technology announcements.

Multi-Token Prediction Accelerates Gemma 4 Inference

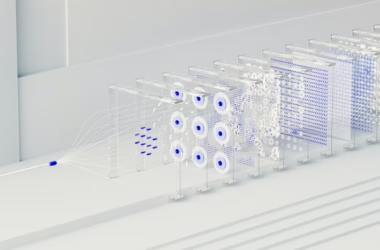

Google released Multi-Token Prediction (MTP) drafters for Gemma 4 models, achieving up to 3x speedup through speculative decoding without quality degradation. According to Google’s blog post, the technique pairs heavy target models with lightweight drafters to predict multiple future tokens simultaneously.

Standard LLM inference is bottlenecked by memory bandwidth: processors spend most of their time moving parameters from VRAM to compute units to generate a single token. The MTP approach puts that idle compute to use. The drafter proposes several tokens in the time the target model would process one, and the target model then verifies all of the proposed tokens in parallel.
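The draft-then-verify loop can be sketched in a few lines of Python. This is a minimal illustration of greedy speculative decoding under stated assumptions, not Google's MTP implementation: `target_next` and `draft_next` are hypothetical toy functions standing in for the heavy target model and the lightweight drafter, and the draft length `k` is an arbitrary choice.

```python
from typing import Callable, List, Tuple

Token = int
NextToken = Callable[[List[Token]], Token]  # greedy next-token function


def target_next(context: List[Token]) -> Token:
    """Hypothetical stand-in for the heavy target model."""
    return (context[-1] * 3 + 1) % 17


def draft_next(context: List[Token]) -> Token:
    """Hypothetical stand-in for the drafter: it agrees with the target
    except when the last token is a multiple of 5."""
    guess = target_next(context)
    return (guess + 1) % 17 if context[-1] % 5 == 0 else guess


def greedy_decode(prompt: List[Token], target: NextToken, num_new: int) -> List[Token]:
    """Baseline: one target call per generated token."""
    tokens = list(prompt)
    for _ in range(num_new):
        tokens.append(target(tokens))
    return tokens


def speculative_decode(
    prompt: List[Token], target: NextToken, drafter: NextToken, num_new: int, k: int = 4
) -> Tuple[List[Token], int]:
    """Return the generated sequence and the number of target verification passes."""
    tokens = list(prompt)
    target_passes = 0
    while len(tokens) - len(prompt) < num_new:
        # 1. The drafter proposes k tokens autoregressively (cheap).
        draft: List[Token] = []
        for _ in range(k):
            draft.append(drafter(tokens + draft))
        # 2. The target checks all k + 1 positions. A real system does this
        #    in a single batched forward pass; here it is a plain loop.
        target_passes += 1
        verified = [target(tokens + draft[:i]) for i in range(k + 1)]
        # 3. Accept the longest draft prefix that matches the target's choices.
        accepted = 0
        while accepted < k and draft[accepted] == verified[accepted]:
            accepted += 1
        # 4. Keep the accepted tokens plus one token from the target itself
        #    (a correction on mismatch, or a bonus token if all k matched).
        tokens.extend(draft[:accepted])
        tokens.append(verified[accepted])
    return tokens[: len(prompt) + num_new], target_passes


if __name__ == "__main__":
    baseline = greedy_decode([1], target_next, 12)
    fast, passes = speculative_decode([1], target_next, draft_next, 12)
    print(fast == baseline, passes)
```

Because every kept token is one the target would have chosen anyway, the output is identical to target-only greedy decoding. The saving comes from needing fewer target passes than generated tokens whenever the drafter guesses well.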

Google tested the acceleration across LiteRT-LM, MLX, Hugging Face Transformers, and vLLM frameworks. The company reported that Gemma 4 has achieved over 60 million downloads since its recent release, making it one of the most adopted open models to date.

Training Efficiency Advances Beyond Parameter Count

While major AI labs pursue ever-larger models, efficiency-focused approaches are gaining traction through architectural innovations rather than scale. Towards Data Science outlined the fundamental components LLM engineers need to understand, from tokenization and attention mechanisms to fine-tuning and evaluation frameworks.

Zyphra’s “full-stack innovation” approach spans architecture design, training techniques, and hardware optimization. The company’s previous Zamba model mimicked cortex-hippocampus interactions to share information across sequential layers, demonstrating how neuroscience principles can inform AI architecture design.

The shift toward efficiency reflects practical deployment constraints. Organizations need models that deliver strong performance within compute budgets, leading to innovations in mixture-of-experts architectures, speculative decoding, and parameter-efficient training methods.

Inference Scaling Changes Cost-Performance Trade-offs

Reasoning models like GPT-5.5 and o1 achieve higher performance by spending additional compute during inference rather than by increasing parameter count. According to Towards Data Science analysis, this “test-time compute” approach generates hidden reasoning tokens that never appear in responses but dramatically increase billable compute costs.
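A rough cost calculation shows why hidden reasoning tokens matter for billing. The sketch below assumes reasoning tokens are billed at the same rate as visible output tokens; the function name, token counts, and per-million-token prices are all hypothetical and chosen only for illustration.

```python
def request_cost_usd(
    input_tokens: int,
    visible_output_tokens: int,
    reasoning_tokens: int,
    input_price_per_m: float,
    output_price_per_m: float,
) -> float:
    """Estimate the cost of one request in USD.

    Assumption: hidden reasoning tokens are billed like output tokens.
    """
    billable_output = visible_output_tokens + reasoning_tokens
    total = input_tokens * input_price_per_m + billable_output * output_price_per_m
    return total / 1_000_000


if __name__ == "__main__":
    # Hypothetical prices: $1 per million input tokens, $10 per million output tokens.
    plain = request_cost_usd(1_000, 500, 0, 1.0, 10.0)
    reasoning = request_cost_usd(1_000, 500, 4_500, 1.0, 10.0)
    print(f"plain: ${plain:.4f}  with reasoning: ${reasoning:.4f}")
```

In this made-up example the visible answer is the same length in both cases, yet the request with 4,500 hidden reasoning tokens costs several times more.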

This creates new operational challenges for product teams balancing the Cost-Quality-Latency triangle. Finance teams monitor shrinking margins from high token costs, infrastructure engineers manage p95 latency to prevent timeouts, and product managers decide whether better answers justify thirty-second delays.

Organizations are developing task taxonomies to route simple queries to efficient models while reserving compute budgets for high-stakes reasoning. This strategy optimizes resource allocation across different use cases, from basic customer service to complex analytical tasks requiring extended deliberation.
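A task taxonomy of this kind can be as simple as a lookup table. The following is a minimal sketch, assuming a hand-maintained mapping from task type to model tier; the task names, the `Tier` enum, and the `high_stakes` override are hypothetical and not drawn from any specific product.

```python
from enum import Enum


class Tier(Enum):
    EFFICIENT = "efficient"   # fast, cheap model for routine queries
    REASONING = "reasoning"   # slower model with extended deliberation


# Hypothetical taxonomy: each known task type maps to a default tier.
TAXONOMY = {
    "faq": Tier.EFFICIENT,
    "order_status": Tier.EFFICIENT,
    "financial_analysis": Tier.REASONING,
    "contract_review": Tier.REASONING,
}


def route(task_type: str, high_stakes: bool = False) -> Tier:
    """Pick a model tier for a request.

    High-stakes requests always go to the reasoning tier; unknown task
    types fall back to the efficient tier to protect the compute budget.
    """
    if high_stakes:
        return Tier.REASONING
    return TAXONOMY.get(task_type, Tier.EFFICIENT)


if __name__ == "__main__":
    print(route("faq"))                    # routine customer-service query
    print(route("financial_analysis"))     # complex analytical task
    print(route("faq", high_stakes=True))  # manual escalation
```

Defaulting unknown task types to the efficient tier is one possible design choice; a team that prioritizes answer quality over cost might default to the reasoning tier instead.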

What This Means

The AI industry is fragmenting into two distinct optimization paths: scaling up through massive parameter counts versus scaling smart through architectural efficiency. ZAYA1-8B’s competitive performance at 760 million active parameters demonstrates that intelligence density can match raw scale, potentially democratizing AI capabilities for organizations with limited compute budgets.

Subquadratic’s claims, if validated, would fundamentally alter the economics of long-context AI applications. However, the skeptical research community response highlights the importance of independent verification for extraordinary efficiency claims. The gated access model contradicts typical patterns for genuinely breakthrough technologies.

Google’s MTP drafters represent incremental but practical improvements that developers can implement immediately. Speedups of up to 3x compound across applications and, for many use cases, can make the difference between a viable and an unviable deployment.

The emergence of inference scaling as a performance strategy creates new cost management challenges but also opportunities for more targeted AI deployment. Organizations can now choose between fast-and-cheap models for routine tasks and slow-and-smart models for complex reasoning, optimizing both performance and economics.

FAQ

How does ZAYA1-8B achieve GPT-5 performance with fewer parameters?

ZAYA1-8B uses a mixture-of-experts architecture with only 760 million active parameters out of 8 billion total, combined with efficiency optimizations aimed at what Zyphra calls “intelligence density.” The model was trained on AMD MI300 GPUs using architectural innovations that maximize performance per parameter rather than relying on scale.
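The gap between active and total parameters comes from routing: for each token, a router selects a few experts, and only those experts' weights take part in the computation. The sketch below shows generic top-k routing and the resulting parameter arithmetic. It is not Zyphra's architecture, and the expert counts and parameter sizes are hypothetical toy numbers.

```python
import math
from typing import List, Tuple


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over router scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits: List[float], k: int) -> List[Tuple[int, float]]:
    """Return (expert_index, renormalized_weight) for the k highest-scoring experts."""
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def active_parameters(shared: int, per_expert: int, k: int) -> int:
    """Parameters used for one token: shared layers plus the k chosen experts."""
    return shared + k * per_expert


def total_parameters(shared: int, per_expert: int, num_experts: int) -> int:
    """Parameters stored in the model: shared layers plus every expert."""
    return shared + num_experts * per_expert


if __name__ == "__main__":
    # Hypothetical toy model: 16 experts, 2 of them active per token.
    chosen = route_top_k([0.1, 2.0, -1.0, 1.5] + [0.0] * 12, k=2)
    active = active_parameters(shared=100, per_expert=50, k=2)
    total = total_parameters(shared=100, per_expert=50, num_experts=16)
    print(chosen, f"{active}/{total} parameters active")
```

With the figures quoted for ZAYA1-8B, the same ratio works out to roughly 9.5% of parameters active per token (760 million out of 8 billion).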

What makes Subquadratic’s efficiency claims controversial?

Subquadratic claims a nearly 1,000x reduction in attention compute and fully subquadratic scaling, which would be the largest breakthrough in transformer architecture since 2017. Researchers are skeptical because of what they see as cherry-picked benchmarks, gated access despite claimed low costs, and the extraordinary nature of the claims in the absence of independent verification.

How do Multi-Token Prediction drafters improve inference speed?

MTP drafters are lightweight models paired with heavy target models. The drafter predicts multiple future tokens in the time the target model processes one, and the target model then verifies those predictions in parallel. This speculative decoding technique uses otherwise idle compute to achieve up to 3x speedups without quality loss.