DeepSeek released its V4 model on Tuesday, delivering performance that matches or exceeds GPT-5.5 and Claude Opus 4.7 at approximately one-sixth the API cost. The 1.6-trillion-parameter Mixture-of-Experts model is available under the MIT License on Hugging Face and through DeepSeek’s API, marking what industry observers call the “second DeepSeek moment.”

According to VentureBeat, DeepSeek AI researcher Deli Chen described the release as a “labor of love” 484 days after the V3 launch, emphasizing that “AGI belongs to everyone.” The Chinese AI startup, an offshoot of High-Flyer Capital Management, previously disrupted the industry in January 2025 with its open-source R1 model that matched proprietary U.S. systems.

https://x.com/deepseek_ai/status/2047516922263285776

Performance Benchmarks and Cost Analysis

DeepSeek-V4 achieves frontier-class performance across multiple evaluation metrics while maintaining significantly lower operational costs than closed-source alternatives. The model demonstrates competitive reasoning capabilities on standard benchmarks, with some tests showing performance that surpasses current market leaders.

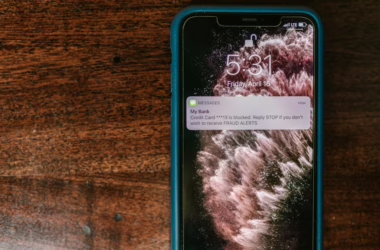

The cost advantage represents a fundamental shift in AI economics. Claude Opus 4.6 costs $5 per million input tokens and $25 per million output tokens through its API; DeepSeek-V4 is priced at roughly one-sixth of that. This cost differential has immediate implications for enterprise adoption and development workflows.
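To make the arithmetic concrete, here is a minimal sketch that applies the quoted Opus 4.6 rates and the article’s “roughly one-sixth” ratio to a workload. The workload size is hypothetical, and the one-sixth figure is an approximation rather than DeepSeek’s published price list, so treat the result as an estimate only.

```python
# Prices per million tokens, as quoted in the article for Claude Opus 4.6.
OPUS_INPUT_USD = 5.00
OPUS_OUTPUT_USD = 25.00

# Assumption: the article says "roughly one-sixth"; the exact V4 price list
# is not given there, so this ratio is only an approximation.
COST_RATIO = 1 / 6


def job_cost(input_tokens, output_tokens, input_price, output_price):
    """Return the USD cost of a job given per-million-token prices."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


def compare(input_tokens, output_tokens):
    """Return (reference_cost, estimated_one_sixth_cost) in USD."""
    reference = job_cost(input_tokens, output_tokens, OPUS_INPUT_USD, OPUS_OUTPUT_USD)
    return reference, reference * COST_RATIO


if __name__ == "__main__":
    # Hypothetical monthly workload: 200M input tokens, 40M output tokens.
    ref, est = compare(200_000_000, 40_000_000)
    print(f"reference: ${ref:,.2f}  one-sixth estimate: ${est:,.2f}")
```

Because output tokens cost five times as much as input tokens at the quoted rates, output-heavy workloads dominate the bill, and any real comparison should use the actual input/output mix.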

For context, Towards Data Science reports that developers have been seeking alternatives to Claude Code subscriptions specifically due to Anthropic’s recent policy changes and high API pricing. The publication notes that Claude Opus 4.6’s current rates “quickly rack up the cost” for intensive applications.

Open Source Infrastructure and Licensing

The MIT License release removes the commercial restrictions found in many other openly released AI models. Unlike custom licenses that limit commercial use, the MIT License permits modification, distribution, and commercial deployment; its only condition is that the copyright and license notice be retained in copies.

DeepSeek-V4’s open-source nature extends beyond just model weights. The release includes comprehensive documentation, training methodologies, and implementation guides that enable developers to fine-tune and deploy the model independently. This contrasts with “open-weight” releases that provide model parameters without training code or detailed methodologies.

The Mixture-of-Experts architecture improves computational efficiency by activating only a subset of expert sub-networks for each input rather than the full model. This design choice reduces inference costs while maintaining performance across diverse applications, making the model particularly attractive for resource-conscious deployments.
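The idea of selective activation can be illustrated with a generic top-k router. This is a toy sketch of the general Mixture-of-Experts technique, not DeepSeek’s actual routing code; the function names, the four scalar “experts,” and the gate scores are all hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights.

    Returns a list of (expert_index, weight) pairs whose weights sum to 1.
    """
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    mass = sum(probs[i] for i in top)
    return [(i, probs[i] / mass) for i in top]


def moe_forward(x, experts, gate_scores, k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_scores, k))


if __name__ == "__main__":
    # Four toy "experts"; a real expert is a feed-forward sub-network.
    experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3, lambda x: x ** 2]
    # Hypothetical gate scores for one token; a learned router would produce these.
    scores = [0.1, 2.0, -1.0, 2.0]
    print(route_top_k(scores, k=2))
    print(moe_forward(3.0, experts, scores, k=2))
```

With k=2 out of four experts, only half of the expert functions are evaluated for this input, which is the source of the compute savings described above.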

Industry Response and Competitive Pressure

The release places immediate pressure on closed-source providers to justify their premium pricing models. OpenAI, Anthropic, and Google now face a scenario where comparable performance is available at dramatically lower costs through open-source alternatives.

Community reactions on Hugging Face indicate significant enthusiasm for the cost-performance ratio. Industry analysts note that DeepSeek-V4 effectively “resets the developmental trajectory of the entire field,” forcing proprietary providers to reconsider their pricing strategies and value propositions.

The timing coincides with broader industry trends toward open-source AI infrastructure. OpenAI recently released its Privacy Filter model under Apache 2.0 license, while other major players have increased their open-source contributions. This suggests a potential industry shift toward more accessible AI development tools.

Technical Architecture and Implementation

DeepSeek-V4’s Mixture-of-Experts design balances computational efficiency with scalability. The 1.6-trillion-parameter model activates only a subset of its parameters for each token, which reduces the compute required during inference while keeping the full parameter space available across inputs.

The model supports extended context lengths up to 1 million tokens, enabling complex document analysis and multi-turn conversations without performance degradation. This capability positions it competitively against models like Claude that emphasize long-context reasoning.

Implementation options include local deployment for privacy-sensitive applications and API access for scalable production environments. The model’s hardware requirements remain accessible to organizations with standard GPU infrastructure, avoiding the specialized hardware dependencies of some competing systems.
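For teams weighing local deployment, a back-of-the-envelope estimate of weight storage can help with sizing. The sketch below multiplies the quoted 1.6 trillion parameters by a storage width; the precisions and the 80 GB per-GPU figure are assumptions for illustration, since the article does not state which formats or hardware the release targets. In a Mixture-of-Experts model, all experts typically need to be stored even though only some are active per token, so this is a weights-only lower bound that ignores the KV cache and activations.

```python
TOTAL_PARAMS = 1_600_000_000_000  # parameter count quoted in the article

# Assumption: illustrative storage widths in bits per parameter; the article
# does not say which precisions the released weights use.
BITS_PER_PARAM = {"16-bit": 16, "8-bit": 8, "4-bit": 4}


def weight_bytes(params, bits_per_param):
    """Return the weights-only size in bytes."""
    return params * bits_per_param // 8


def min_gpus(total_bytes, gpu_memory_gb):
    """Lower bound on GPUs needed to hold the weights alone (1 GB = 1e9 bytes)."""
    per_gpu = gpu_memory_gb * 10**9
    return -(-total_bytes // per_gpu)  # ceiling division on integers


if __name__ == "__main__":
    for name, bits in BITS_PER_PARAM.items():
        size = weight_bytes(TOTAL_PARAMS, bits)
        # Hypothetical accelerator with 80 GB of memory.
        print(f"{name}: {size / 1e12:.1f} TB, at least {min_gpus(size, 80)} GPUs")
```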

Enterprise Adoption Implications

The cost-performance combination addresses a critical barrier to enterprise AI adoption. Organizations previously constrained by API pricing for high-volume applications now have access to comparable capabilities at sustainable cost structures.

Enterprise use cases particularly benefit from the open-source licensing model. Companies can modify the model for domain-specific applications, integrate it into existing workflows without vendor lock-in, and maintain data privacy through local deployment options.

The release also impacts AI development workflows. Teams building applications with intensive language model requirements can now prototype and scale without the budget constraints imposed by premium API pricing. This democratization effect could accelerate innovation across smaller organizations and research institutions.

What This Means

DeepSeek-V4 represents a significant market disruption that challenges the economic foundations of closed-source AI providers. The combination of frontier performance with open-source accessibility and dramatically lower costs creates new competitive dynamics that will likely accelerate industry-wide changes.

For enterprises, this release provides immediate cost optimization opportunities while maintaining access to state-of-the-art capabilities. The open-source nature enables customization and privacy controls that many organizations require but cannot achieve with proprietary solutions.

The broader implications suggest a potential shift toward open-source AI infrastructure as the default choice for many applications. If DeepSeek can maintain this performance-cost advantage through future releases, it may establish open-source models as the primary development platform for AI applications.

FAQ

How does DeepSeek-V4 compare to GPT-5.5 and Claude Opus 4.7 in performance?

DeepSeek-V4 matches or exceeds these models on most benchmarks while operating at approximately one-sixth the API cost. The model demonstrates competitive reasoning capabilities and supports extended context lengths up to 1 million tokens.

What are the licensing terms for DeepSeek-V4?

The model is released under the MIT License, which allows commercial use, modification, and distribution, on the sole condition that the copyright and license notice are preserved in copies. This is more permissive than many other open-source AI models that use custom licenses with commercial restrictions.

Can DeepSeek-V4 run on standard enterprise hardware?

Yes, the Mixture-of-Experts architecture optimizes resource usage to run on standard GPU infrastructure. Organizations can deploy it locally for privacy-sensitive applications or use DeepSeek’s API for scalable production environments.

Sources

- DeepSeek-V4 arrives with near state-of-the-art intelligence at 1/6th the cost of Opus 4.7, GPT-5.5 – VentureBeat

- How to Run OpenClaw with Open-Source Models – Towards Data Science