Enterprise AI agent deployments are hitting a security wall: 85% of companies are stuck in the pilot phase while only 5% reach production, according to Cisco President Jeetu Patel at RSAC 2026. The gap stems from identity governance failures that leave autonomous agents operating without proper access controls or accountability frameworks.

According to IANS Research, most businesses lack role-based access control mature enough for human identities, and agents compound this problem exponentially. The 2026 IBM X-Force Threat Intelligence Index reported a 44% increase in attacks exploiting public-facing applications, driven by missing authentication controls and AI-enabled vulnerability discovery.

The Four-Surface Attack Model

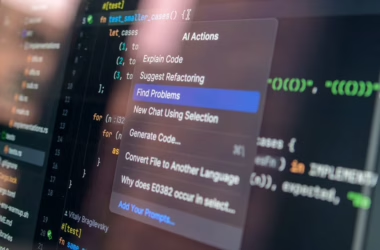

Traditional AI security focused on prompt injection attacks against standalone language models. Agents expand this to four distinct attack surfaces, according to Gravitee’s 2026 State of AI Agent Security report, which surveyed over 900 executives and practitioners.

The prompt surface handles external inputs, similar to traditional LLMs. The tool surface executes backend actions like database queries or API calls. The memory surface stores information across sessions, creating persistent attack vectors. The orchestration surface coordinates multi-agent workflows, multiplying potential failure points.
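
The four surfaces can be read as a checklist applied to each deployed agent. The following is a minimal sketch of that idea, not anything taken from the Gravitee report: `AgentProfile`, `exposed_surfaces`, and the example agent and tool names are all hypothetical, and the mapping from capability to surface is an assumption based on the descriptions above.

```python
from dataclasses import dataclass
from enum import Enum


class Surface(Enum):
    PROMPT = "prompt"                # external inputs
    TOOL = "tool"                    # backend actions (database queries, API calls)
    MEMORY = "memory"                # information stored across sessions
    ORCHESTRATION = "orchestration"  # multi-agent coordination


@dataclass(frozen=True)
class AgentProfile:
    """Hypothetical description of what a deployed agent can do."""
    name: str
    tools: tuple[str, ...] = ()
    persistent_memory: bool = False
    peer_agents: tuple[str, ...] = ()


def exposed_surfaces(agent: AgentProfile) -> set[Surface]:
    """Return the attack surfaces an agent exposes, given its capabilities."""
    surfaces = {Surface.PROMPT}  # assumption: every agent accepts some input
    if agent.tools:
        surfaces.add(Surface.TOOL)
    if agent.persistent_memory:
        surfaces.add(Surface.MEMORY)
    if agent.peer_agents:
        surfaces.add(Surface.ORCHESTRATION)
    return surfaces


# A standalone model exposes only the prompt surface.
chatbot = AgentProfile(name="standalone-llm")

# An agent with tools, memory, and peers exposes all four.
ehr_agent = AgentProfile(
    name="transcription-agent",
    tools=("ehr.update_record",),
    persistent_memory=True,
    peer_agents=("billing-agent",),
)
```

Calling `exposed_surfaces(chatbot)` yields only the prompt surface, while `exposed_surfaces(ehr_agent)` yields all four, which is the jump in exposure the model describes.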

Key findings from the Gravitee survey:

- 88% of organizations reported confirmed or suspected AI agent security incidents

- Only 14.4% of agentic systems launched with full security approval

- 98% of cybersecurity leaders report friction between AI adoption speed and security requirements

Medical transcription agents updating electronic health records and computer vision agents running factory quality control exemplify the stakes. Both generate non-human identities that most enterprises cannot inventory, scope, or revoke at machine speed.

Anthropic Addresses Agent Reliability

Anthropic on Tuesday introduced “dreaming” for its Claude Managed Agents platform, allowing AI agents to learn from past sessions and self-correct over time. The feature addresses enterprise demands for self-improving AI systems before trusting agents with production workloads.

Early results show significant improvements. Legal AI company Harvey saw task completion rates increase 6x after implementing dreaming. Medical document review company Wisedocs cut review time by 50% using Anthropic’s outcomes feature. Netflix now processes logs from hundreds of builds simultaneously using multi-agent orchestration.

The company also moved outcomes and multi-agent orchestration from research preview to public beta. CEO Dario Amodei disclosed that Anthropic’s Q1 2026 growth outpaced internal projections, though specific revenue figures were not provided.

Subscription Model Tensions Surface

Anthropic simultaneously announced changes to its Claude subscription tiers, introducing separate “Agent SDK” credits for programmatic uses, including third-party agents like OpenClaw. The move reverses an April 2026 policy that prohibited subscriptions from powering external agents due to capacity issues.

https://x.com/ClaudeDevs/status/2054610152817619388

The original problem: Claude subscribers paying $20 to $200 a month were consuming hundreds or thousands of dollars' worth of compute through autonomous agents. The new system allocates specific credits for agent usage while preserving standard subscription limits for direct Claude interactions.

Developer reaction has been mixed. Popular AI YouTuber Theo Browne warned users about “disguised” free credits, while solo builder Kun Chen questioned the practical utility of the credit allocation. The backlash highlights tensions between subscription value and infrastructure costs as agent usage scales.

Enterprise Identity Architecture Gaps

Michael Dickman, SVP of Cisco’s Campus Networking business, outlined the core trust framework challenge in an interview with VentureBeat. Before agents can operate in production, enterprises need to answer: which agents have access to sensitive systems, and who is accountable when one acts outside scope?

Current identity and access management (IAM) systems were designed for human users with predictable access patterns. Agents operate at machine speed across multiple systems, often coordinating with other agents in ways that traditional role-based access control cannot accommodate.
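
To make the inventory, scope, and revoke requirements concrete, here is a minimal in-memory sketch of a registry for non-human identities. It is an illustration under stated assumptions, not a description of any vendor's IAM product: `AgentIdentity`, `AgentRegistry`, the scope strings, and the owner names are all hypothetical, and a real system would add authentication, expiry, and durable audit storage.

```python
from dataclasses import dataclass


@dataclass
class AgentIdentity:
    agent_id: str
    owner: str               # accountable human or team
    scopes: frozenset[str]   # hypothetical scope strings, e.g. "ehr:write"
    revoked: bool = False


class AgentRegistry:
    """Hypothetical registry: inventory, scope checks, revocation, audit trail."""

    def __init__(self) -> None:
        self._agents: dict[str, AgentIdentity] = {}
        # Each entry: (agent_id, requested scope, whether it was allowed)
        self.audit_log: list[tuple[str, str, bool]] = []

    def register(self, agent_id: str, owner: str, scopes: set[str]) -> None:
        self._agents[agent_id] = AgentIdentity(agent_id, owner, frozenset(scopes))

    def is_allowed(self, agent_id: str, scope: str) -> bool:
        """Deny by default: unknown or revoked agents get nothing."""
        agent = self._agents.get(agent_id)
        allowed = (
            agent is not None and not agent.revoked and scope in agent.scopes
        )
        self.audit_log.append((agent_id, scope, allowed))
        return allowed

    def revoke(self, agent_id: str) -> None:
        """Cut off an agent immediately; raises KeyError if it is unknown."""
        self._agents[agent_id].revoked = True

    def agents_with_scope(self, scope: str) -> list[str]:
        """Inventory: which active agents can touch a given system?"""
        return sorted(
            a.agent_id
            for a in self._agents.values()
            if not a.revoked and scope in a.scopes
        )

    def owner_of(self, agent_id: str) -> str:
        """Accountability: who answers for this agent?"""
        return self._agents[agent_id].owner
```

In this sketch, `agents_with_scope` answers which agents have access to a sensitive system, and `owner_of` answers who is accountable when one acts outside scope. Every check is logged whether or not it succeeds, so out-of-scope attempts leave a trace.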

The architectural problem extends beyond tooling. Agents need persistent memory, tool access, and orchestration capabilities, each of which creates new attack vectors. A navigation app that suggests routes differs fundamentally from an autopilot system that controls steering and throttle: the first only advises, the second acts, and their risk models are not comparable.

Automation’s Labor Market Impact

Outplacement firm Challenger, Gray and Christmas reported automation as the top reason for layoffs in both March and April 2026. U.S. employers eliminated 83,387 jobs in April, up 38% from March, with technology companies leading layoff announcements while citing AI spend and innovation.

“Regardless of whether individual jobs are being replaced by AI, the money for those roles is,” explained Andy Challenger, the firm’s chief revenue officer. The distinction matters: companies may not replace jobs one-to-one with agents, but budget reallocation toward AI infrastructure reduces overall headcount needs.

Meta exemplifies this trend, with Mark Zuckerberg announcing ambitious automation plans for many operations. The pattern suggests agent adoption will accelerate despite security concerns, potentially creating larger attack surfaces as enterprises rush deployment.

What This Means

The AI agent security crisis represents a classic enterprise technology adoption pattern: promising capabilities outpacing security infrastructure. Unlike previous waves, agents operate autonomously with persistent memory and tool access, creating attack surfaces that traditional IAM cannot address.

The 80-point gap between pilot and production adoption indicates fundamental architectural limitations, not just tooling gaps. Enterprises face a choice between accepting security risks for competitive advantage or waiting for mature identity governance frameworks.

Anthropic’s dreaming feature and subscription model changes suggest vendors recognize the production readiness challenge. However, solving agent reliability differs from solving agent security — self-improving systems may actually increase attack complexity.

The automation-driven layoffs provide economic pressure for faster agent deployment, potentially overwhelming security considerations. This creates a dangerous feedback loop where competitive pressure accelerates adoption while security infrastructure lags further behind.

FAQ

Why are enterprises stuck in the AI agent pilot phase?

Identity governance systems cannot handle autonomous agents operating at machine speed across multiple systems. Current IAM frameworks were designed for human users with predictable access patterns, not self-directing agents with persistent memory and tool access.

What makes agent security different from traditional AI security?

Standalone LLMs have one attack surface (the prompt), while agents expose four: prompt inputs, tool execution, persistent memory, and multi-agent orchestration. Each surface creates new vulnerability vectors that traditional prompt injection defenses cannot address.

How are companies balancing agent adoption with security concerns?

Most are choosing competitive advantage over security maturity. Only 14.4% of agentic systems launch with full security approval, while 88% of organizations report confirmed or suspected agent security incidents. Economic pressure from automation-driven cost savings accelerates this trend.

Sources

- AI agents are running hospital records and factory inspections. Enterprise IAM was never built for them. – VentureBeat

- The AI Agent Security Surface: What Gets Exposed When You Add Tools and Memory – Towards Data Science

- Anthropic introduces “dreaming,” a system that lets AI agents learn from their own mistakes – VentureBeat