Neural network architectures continue to evolve rapidly as researchers push the boundaries of computational efficiency and model performance. Recent work on transformer architectures, parameter-efficient training, and inference acceleration is reshaping how large language models are designed and deployed.

Revolutionary Transformer Architecture Innovations

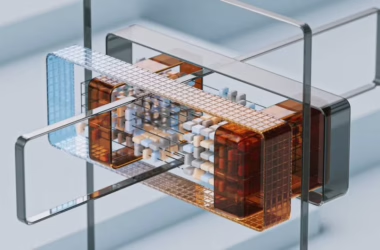

The transformer architecture, first introduced in the seminal “Attention Is All You Need” paper (Vaswani et al., 2017), has undergone refinements that address its computational limitations while improving performance. Many modern transformer variants incorporate mixture-of-experts (MoE) layers, which route each token to only a few expert sub-networks. This reduces the number of parameters active per token during inference while keeping the model's total capacity.
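
To make the gap between total and active parameters concrete, here is a minimal sketch of the arithmetic for a top-k routed MoE model. The function name and all sizes are hypothetical, chosen only for illustration; real models also differ in how many layers use experts.

```python
def moe_param_counts(num_experts, top_k, expert_params, shared_params):
    """Return (total, active-per-token) parameter counts for a top-k routed MoE.

    shared_params covers everything every token uses (attention, embeddings);
    expert_params is the size of one expert, summed over all MoE layers.
    """
    total = shared_params + num_experts * expert_params
    active = shared_params + top_k * expert_params
    return total, active


# Hypothetical model: 8 experts of 5B parameters each, 2 active per token,
# plus 2B parameters shared by all tokens.
total, active = moe_param_counts(
    num_experts=8, top_k=2,
    expert_params=5_000_000_000, shared_params=2_000_000_000,
)
print(f"total={total / 1e9:.0f}B active={active / 1e9:.0f}B "
      f"({active / total:.0%} of total)")
# prints: total=42B active=12B (29% of total)
```

Under these assumptions the model stores 42B parameters but uses only 12B of them for any single token, which is where the inference savings come from.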

Key architectural innovations include:

- Sparse attention mechanisms that reduce quadratic complexity to near-linear scaling

- Rotary positional embeddings (RoPE) for improved sequence length extrapolation

- Multi-query attention (MQA) and grouped-query attention (GQA) for faster inference

- Layer normalization variants like RMSNorm for training stability

These advances enable models to process longer sequences with significantly reduced computational overhead. For instance, Longformer and BigBird show how sparse attention patterns can handle sequences of several thousand tokens, far beyond the 512-token limit of BERT-style models, while maintaining performance on downstream tasks.
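
The saving from sparse attention patterns can be illustrated by counting query-key pairs. The sketch below covers only a local sliding window, which is one component of architectures such as Longformer and BigBird (they add global and, in BigBird's case, random attention on top). The function names and the window size are assumptions made for illustration.

```python
def full_attention_pairs(n):
    """Every token attends to every token: n * n query-key pairs."""
    return n * n


def sliding_window_pairs(n, window):
    """Count pairs when each token attends only to keys within `window`
    positions on either side of itself (clipped at the sequence edges)."""
    return sum(
        min(n - 1, i + window) - max(0, i - window) + 1
        for i in range(n)
    )


for n in (1_024, 4_096, 16_384):
    dense = full_attention_pairs(n)
    sparse = sliding_window_pairs(n, window=256)
    print(f"n={n:>6}: dense={dense:>12,} sparse={sparse:>12,} "
          f"ratio={dense / sparse:.1f}x")
```

With a fixed window, the sparse count grows roughly as n * (2 * window + 1), so doubling the sequence length doubles the work instead of quadrupling it.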

Advanced Training Methodologies and Optimization

Contemporary training techniques extend beyond traditional supervised learning. Reinforcement learning from human feedback (RLHF) and Constitutional AI training change how models are aligned with human preferences after pretraining, while a parallel line of work focuses on optimization strategies that maximize parameter efficiency.

Parameter-Efficient Training Approaches

Modern training methodologies focus on maximizing model capabilities while minimizing computational requirements:

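- Low-Rank Adaptation (LoRA) enables fine-tuning by training small low-rank update matrices, often fewer than 1% as many parameters as the original model

- Quantization-aware training preserves most model quality at INT8/INT4 precision

- Gradient checkpointing reduces activation memory during backpropagation at the cost of recomputing some activations

- Mixed-precision training speeds up each training step and reduces memory use, with techniques such as loss scaling preserving numerical stability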

Prefix tuning and prompt tuning show how minimal parameter updates can approach the task-specific performance of full fine-tuning, particularly for larger models. These approaches can cut the number of trainable parameters by orders of magnitude, lowering training memory and storage costs and enabling rapid adaptation across diverse domains.
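
The LoRA figure above follows from simple arithmetic: instead of updating a d_out x d_in weight matrix, LoRA trains two factors of shape d_out x r and r x d_in. The sketch below assumes a hypothetical 4096 x 4096 projection and rank 8. The overall fraction for a whole model depends on which matrices are adapted.

```python
def lora_trainable_fraction(d_out, d_in, rank):
    """Compare trainable parameters of a LoRA update (B @ A) with the
    full weight matrix it adapts.

    B has shape (d_out, rank) and A has shape (rank, d_in).
    """
    full = d_out * d_in
    lora = rank * (d_out + d_in)
    return lora, full, lora / full


# Hypothetical layer size and rank, for illustration only.
lora, full, fraction = lora_trainable_fraction(d_out=4096, d_in=4096, rank=8)
print(f"LoRA params: {lora:,} of {full:,} ({fraction:.2%})")
# prints: LoRA params: 65,536 of 16,777,216 (0.39%)
```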

Distributed Training Innovations

Scaling training across multiple GPUs and nodes requires sophisticated parallelization strategies. 3D parallelism, which combines data, tensor (model), and pipeline parallelism, enables training of trillion-parameter models. ZeRO (Zero Redundancy Optimizer) partitions optimizer states, and in its later stages gradients and parameters, across data-parallel devices, enabling larger models on existing hardware.
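
As a rough illustration of why partitioning helps, the sketch below estimates per-device memory for model states under mixed-precision Adam. It assumes 2 bytes per parameter for FP16 weights, 2 bytes for FP16 gradients, and 12 bytes for FP32 optimizer state (master weights, momentum, variance). Activations and temporary buffers are ignored, and the function name is hypothetical.

```python
def zero_memory_gb(params, num_devices, stage):
    """Approximate per-device model-state memory in GB.

    stage 0: no partitioning (plain data parallelism)
    stage 1: optimizer states partitioned
    stage 2: optimizer states and gradients partitioned
    stage 3: optimizer states, gradients, and parameters partitioned
    """
    fp16_params = 2 * params
    fp16_grads = 2 * params
    optimizer = 12 * params
    if stage >= 1:
        optimizer /= num_devices
    if stage >= 2:
        fp16_grads /= num_devices
    if stage >= 3:
        fp16_params /= num_devices
    return (fp16_params + fp16_grads + optimizer) / 1e9


# Hypothetical 7.5B-parameter model on 64 devices.
for stage in range(4):
    print(f"stage {stage}: {zero_memory_gb(7.5e9, 64, stage):.1f} GB per device")
```

Under these assumptions the model states shrink from 120 GB per device with no partitioning to under 2 GB when everything is partitioned.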

Inference Efficiency and Acceleration Techniques

Inference optimization represents a critical frontier for deploying large language models in production environments. Speculative decoding and parallel sampling techniques reduce latency while maintaining output quality.
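
The idea behind speculative decoding can be sketched with toy models. In this simplified greedy variant, a cheap draft model proposes a few tokens and the target model verifies them, keeping the longest matching prefix. The toy models and every name below are assumptions for illustration. Production systems verify all proposed positions in one batched forward pass and usually use a probabilistic acceptance rule for sampling.

```python
from typing import Callable, List

# A "model" here maps a token prefix to its greedy next token.
NextToken = Callable[[List[int]], int]


def speculative_greedy(target: NextToken, draft: NextToken,
                       prompt: List[int], max_new: int, k: int = 4) -> List[int]:
    tokens = list(prompt)
    goal = len(prompt) + max_new
    while len(tokens) < goal:
        # 1. The draft model proposes up to k tokens autoregressively.
        proposal: List[int] = []
        for _ in range(min(k, goal - len(tokens))):
            proposal.append(draft(tokens + proposal))
        # 2. The target model checks each proposed position in order.
        for i, guess in enumerate(proposal):
            correct = target(tokens + proposal[:i])
            if guess != correct:
                # First mismatch: keep the target's token, drop the rest.
                tokens.extend(proposal[:i] + [correct])
                break
        else:
            tokens.extend(proposal)  # every proposed token was accepted
    return tokens


def plain_greedy(model: NextToken, prompt: List[int], max_new: int) -> List[int]:
    tokens = list(prompt)
    for _ in range(max_new):
        tokens.append(model(tokens))
    return tokens


def toy_target(prefix: List[int]) -> int:
    return (sum(prefix) * 31 + len(prefix)) % 50


def toy_draft(prefix: List[int]) -> int:
    # Agrees with the target except when the prefix length is a multiple of 3.
    guess = toy_target(prefix)
    return guess if len(prefix) % 3 else (guess + 1) % 50


out = speculative_greedy(toy_target, toy_draft, [1, 2, 3], max_new=10)
print(out == plain_greedy(toy_target, [1, 2, 3], max_new=10))
```

Because every kept token is one the target model would have chosen itself, the output matches ordinary greedy decoding. The latency benefit comes from verifying several draft tokens per target-model pass.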

Hardware-Software Co-optimization

Modern inference acceleration leverages both algorithmic improvements and specialized hardware:

- Tensor parallelism distributes computation across multiple accelerators

- KV-cache optimization reduces memory bandwidth requirements

- Dynamic batching maximizes throughput for variable-length sequences

- Quantization techniques enable deployment on resource-constrained devices
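
To see why the KV cache matters for memory, the sketch below estimates its size for a hypothetical decoder configuration. It also shows the effect of sharing key/value heads across query heads, as grouped-query attention does. All configuration values are assumptions for illustration.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len,
                   batch=1, bytes_per_value=2):
    """Bytes needed to cache keys and values for every layer and position.

    The leading factor of 2 accounts for storing both K and V.
    """
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_value


# Hypothetical model: 32 layers, head_dim 128, 4096-token context, FP16 cache.
mha = kv_cache_bytes(layers=32, kv_heads=32, head_dim=128, seq_len=4096)
gqa = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, seq_len=4096)
print(f"32 KV heads: {mha / 2**30:.1f} GiB per sequence")
print(f" 8 KV heads: {gqa / 2**30:.1f} GiB per sequence")
# prints: 32 KV heads: 2.0 GiB per sequence
# prints:  8 KV heads: 0.5 GiB per sequence
```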

FlashAttention shows how memory-efficient, IO-aware attention computation can achieve reported speedups of 2-4x over standard attention implementations without approximation. Techniques like this help make real-time inference feasible for applications requiring sub-second response times.
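
The "without approximation" property rests on a running softmax that processes keys and values block by block and rescales partial results as it goes. The single-query sketch below shows only that numerical idea in plain Python. It does not model the GPU memory tiling that produces the actual speedup, it omits the usual 1/sqrt(d) score scaling, and the function names are illustrative.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def attention_reference(q, keys, values):
    """Standard softmax attention for one query, materializing all scores."""
    scores = [dot(q, k) for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    z = sum(weights)
    return [sum(w * v[j] for w, v in zip(weights, values)) / z
            for j in range(len(values[0]))]


def attention_blockwise(q, keys, values, block=2):
    """Same result, but sees only `block` keys/values at a time."""
    running_max = float("-inf")
    running_sum = 0.0
    acc = [0.0] * len(values[0])
    for start in range(0, len(keys), block):
        scores = [dot(q, k) for k in keys[start:start + block]]
        new_max = max(running_max, max(scores))
        # Rescale what was accumulated under the old maximum.
        scale = math.exp(running_max - new_max)
        running_sum *= scale
        acc = [a * scale for a in acc]
        for s, v in zip(scores, values[start:start + block]):
            w = math.exp(s - new_max)
            running_sum += w
            acc = [a + w * x for a, x in zip(acc, v)]
        running_max = new_max
    return [a / running_sum for a in acc]


q = [0.5, -1.0]
keys = [[1.0, 0.0], [0.0, 1.0], [2.0, 1.0], [-1.0, 3.0], [0.5, 0.5]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]
ref = attention_reference(q, keys, values)
blk = attention_blockwise(q, keys, values, block=2)
print(all(abs(a - b) < 1e-9 for a, b in zip(ref, blk)))
```

The two functions agree up to floating-point rounding, which is why this style of computation counts as exact attention and not as an approximation.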

Model Compression and Distillation

Knowledge distillation transfers capabilities from large teacher models to compact student architectures, typically by training the student to match the teacher's output distribution. Structured pruning removes entire attention heads or feed-forward layers while preserving most of the original performance. These approaches enable deployment on edge devices with limited computational resources.
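
One common way to express the distillation objective is a KL divergence between temperature-softened teacher and student distributions. The sketch below is a minimal version for a single example. The temperature value and logits are assumptions, and in practice this term is usually combined with a standard cross-entropy loss on the true labels.

```python
import math


def softmax(logits, temperature=1.0):
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    z = sum(exps)
    return [e / z for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2
    so gradient magnitudes stay comparable across temperatures."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl


teacher = [4.0, 1.0, 0.5]
print(distillation_loss([4.0, 1.0, 0.5], teacher))  # identical logits
print(distillation_loss([1.0, 3.0, 0.5], teacher))  # student disagrees
```

The loss is zero when the student reproduces the teacher's distribution and grows as the two diverge.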

Emerging Architecture Paradigms

Next-generation architectures explore alternatives to pure transformer designs. State Space Models (SSMs) like Mamba offer linear scaling with sequence length while maintaining competitive performance. Retrieval-augmented generation (RAG) systems retrieve passages from external knowledge bases at inference time to improve factual accuracy.
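
The linear scaling of state space models comes from their recurrent form: each step updates a fixed-size state, so the cost per token does not depend on how many tokens came before. The sketch below uses a scalar, time-invariant recurrence purely for illustration. Mamba itself uses input-dependent (selective) parameters and a hardware-aware scan, neither of which is shown here.

```python
def ssm_scan(xs, a=0.9, b=1.0, c=1.0):
    """Run h_t = a * h_{t-1} + b * x_t and emit y_t = c * h_t.

    One constant-cost update per input, so total cost is linear in len(xs).
    """
    h = 0.0
    ys = []
    for x in xs:
        h = a * h + b * x
        ys.append(c * h)
    return ys


# An impulse at the first step decays geometrically through the state.
print(ssm_scan([1.0, 0.0, 0.0], a=0.5))
# prints: [1.0, 0.5, 0.25]
```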

Multimodal Integration

Vision-language transformers demonstrate how unified architectures can process diverse input modalities. Cross-attention mechanisms enable sophisticated reasoning across text, images, and structured data. These developments point toward more general artificial intelligence systems.

Mixture-of-modalities approaches allow models to dynamically allocate computational resources based on input complexity. This adaptive processing enables efficient handling of multimodal tasks without fixed computational overhead.

Performance Metrics and Benchmarking

Evaluating architectural advances requires comprehensive benchmarking across diverse tasks and efficiency metrics. FLOPs-per-token measurements (floating-point operations, not operations per second) provide hardware-agnostic comparisons of compute cost. Memory bandwidth utilization quantifies inference efficiency on modern accelerators.
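
Both efficiency metrics can be estimated with back-of-the-envelope arithmetic. The sketch below uses the common approximation of about 2 floating-point operations per parameter per token for a dense forward pass (which ignores attention over the context), and a bandwidth-bound lower limit on decode latency that assumes every weight is read once per generated token. The model size and bandwidth figure are hypothetical.

```python
def forward_flops_per_token(num_params):
    """Approximate forward-pass cost: one multiply and one add per weight."""
    return 2 * num_params


def min_decode_latency_ms(num_params, bytes_per_param, bandwidth_gb_s):
    """Lower bound on per-token latency at batch size 1, assuming decoding
    is limited by reading all weights from memory once per token."""
    weight_bytes = num_params * bytes_per_param
    return weight_bytes / (bandwidth_gb_s * 1e9) * 1e3


# Hypothetical 7B-parameter dense model in FP16 on a 1000 GB/s accelerator.
params = 7e9
print(f"{forward_flops_per_token(params) / 1e9:.0f} GFLOPs per token")
print(f"{min_decode_latency_ms(params, 2, 1000):.1f} ms per token (lower bound)")
```

Comparing the measured latency against this bound gives a rough sense of how close an implementation is to saturating memory bandwidth.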

Standardized benchmarks like HELM and BIG-bench enable systematic comparison of architectural innovations. Evaluations that report both task performance and computational efficiency provide a more holistic assessment of model capabilities.

What This Means

These architectural advances collectively represent a maturation of deep learning methodology, moving beyond raw parameter scaling toward sophisticated efficiency optimizations. The convergence of improved architectures, training techniques, and inference acceleration enables deployment of increasingly capable AI systems across diverse applications.

For researchers, these developments provide powerful tools for exploring novel applications while managing computational constraints. For practitioners, efficiency improvements enable real-world deployment of advanced AI capabilities in resource-constrained environments.

The trajectory toward more efficient, capable architectures suggests that future AI systems may achieve better performance with fewer computational resources, broadening access to advanced AI capabilities across industries and applications.

FAQ

What makes modern transformer architectures more efficient than original designs?

Modern transformers incorporate sparse attention mechanisms, mixture-of-experts architectures, and optimized normalization techniques that reduce computational complexity while maintaining or improving performance capabilities.

How do parameter-efficient training methods compare to traditional fine-tuning?

Techniques like LoRA and prefix tuning can achieve performance comparable to full fine-tuning while updating a small fraction of model parameters, often less than 1%, which substantially reduces training costs and memory requirements.

What are the key factors driving inference acceleration improvements?

Inference acceleration results from algorithmic innovations like Flash Attention, hardware optimization through quantization and parallelism, and architectural improvements that reduce computational overhead per token generated.