Google DeepMind has made a strategic hire that signals the tech giant’s growing focus on artificial intelligence ethics and consciousness research. The company has brought on philosopher Henry Shevlin to study human-AI relationships and machine consciousness, marking a significant shift toward addressing the philosophical implications of advanced AI systems in enterprise environments.

The move comes as Google CEO Sundar Pichai reports that more than 25% of new code at Google is now AI-generated, underscoring the need for ethical frameworks and consciousness studies as AI becomes deeply embedded in enterprise operations.

Strategic Implications for Enterprise AI Governance

The hiring of a philosopher specializing in AI consciousness represents a fundamental shift in how technology companies approach AI development. For enterprise IT leaders, this development signals several critical considerations:

Governance Framework Evolution: Organizations implementing AI systems must now consider not just technical performance metrics but also ethical implications and potential consciousness-related concerns. This philosophical approach to AI development suggests that future enterprise AI solutions will require more sophisticated governance frameworks.

Risk Management: As AI systems become more sophisticated, enterprises need to evaluate potential risks beyond traditional security and compliance concerns. The study of machine consciousness introduces new dimensions of risk assessment that IT decision-makers must incorporate into their evaluation processes.

Regulatory Preparation: With philosophers now embedded in major AI development teams, enterprises should anticipate more nuanced regulatory requirements that address ethical and consciousness-related aspects of AI deployment.

Production Reliability Challenges in AI-Generated Code

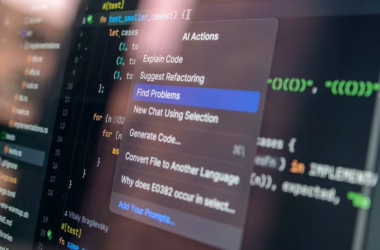

While Google advances AI consciousness research, enterprise implementation faces immediate practical challenges. According to Lightrun’s 2026 State of AI-Powered Engineering Report, 43% of AI-generated code changes require manual debugging in production environments even after passing quality assurance and staging tests.

These findings reveal critical enterprise considerations:

- Zero percent of engineering leaders reported successful AI-generated fixes with just one redeploy cycle

- 88% of organizations require two to three debugging cycles for AI-generated code

- 11% need four to six cycles to achieve production stability

For enterprise architects, this data underscores the need for robust testing frameworks and extended quality assurance processes when implementing AI-generated code in mission-critical systems.
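
As a rough planning aid, the cycle figures above can be turned into an expected number of redeploy cycles per AI-generated fix. The sketch below is illustrative only: the bucket shares and ranges are the ones quoted from the report, while the function name, the renormalization over the reported 99%, and the idea of bounding the average by each bucket's endpoints are assumptions made for this example.

```python
# Shares and cycle ranges as quoted from the report figures above.
# Each tuple: (share of organizations, fewest cycles, most cycles).
REPORTED_BUCKETS = [
    (0.88, 2, 3),
    (0.11, 4, 6),
]


def expected_cycle_bounds(buckets):
    """Return (low, high, covered) for the expected number of redeploy cycles.

    Assumption: shares are renormalized over the reported total,
    because the quoted figures leave 1% of organizations unaccounted for.
    """
    covered = sum(share for share, _, _ in buckets)
    # Lower bound: every organization sits at the bottom of its bucket.
    low = sum(share * fewest for share, fewest, _ in buckets) / covered
    # Upper bound: every organization sits at the top of its bucket.
    high = sum(share * most for share, _, most in buckets) / covered
    return low, high, covered


if __name__ == "__main__":
    low, high, covered = expected_cycle_bounds(REPORTED_BUCKETS)
    print(f"Covered share of organizations: {covered:.0%}")
    print(f"Expected redeploy cycles per fix: {low:.1f} to {high:.1f}")
```

Under these assumptions the average lands between roughly 2.2 and 3.3 cycles, which gives a starting point for how many debugging passes to budget into a release plan.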

Enterprise Architecture Considerations for Ethical AI

The integration of philosophical perspectives into AI development creates new architectural requirements for enterprise systems. Organizations must now design AI implementations that can accommodate ethical oversight and consciousness evaluation mechanisms.

Technical Infrastructure Requirements:

- Audit trails for AI decision-making processes

- Interpretability frameworks that support ethical review

- Monitoring systems that can detect potentially problematic AI behaviors

- Governance APIs that enable philosophical and ethical oversight integration
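
The first and third items above, audit trails and monitoring for problematic behavior, can be illustrated with a small sketch. Everything here is hypothetical: the `AuditRecord` fields, the `AuditTrail` class, and the hash-chaining design are assumptions chosen for illustration, not a description of any Google or vendor API. It uses only the Python standard library.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class AuditRecord:
    """One AI decision captured for later review (hypothetical schema)."""
    model_id: str
    prompt_sha256: str  # hash of the prompt, so the log avoids storing raw input
    decision: str
    rationale: str
    flagged: bool       # set by whatever monitoring rule the organization defines
    timestamp: str
    prev_hash: str      # digest of the previous record, forming a chain

    def digest(self) -> str:
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


class AuditTrail:
    """Append-only, hash-chained log of AI decisions."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self._records: List[AuditRecord] = []

    def record(self, model_id: str, prompt: str, decision: str,
               rationale: str, flagged: bool = False) -> AuditRecord:
        prev = self._records[-1].digest() if self._records else self.GENESIS
        entry = AuditRecord(
            model_id=model_id,
            prompt_sha256=hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
            decision=decision,
            rationale=rationale,
            flagged=flagged,
            timestamp=datetime.now(timezone.utc).isoformat(),
            prev_hash=prev,
        )
        self._records.append(entry)
        return entry

    def verify(self) -> bool:
        """Check the chain. Detects edits to any record that has a successor;
        protecting the newest record would need its digest stored elsewhere."""
        prev = self.GENESIS
        for rec in self._records:
            if rec.prev_hash != prev:
                return False
            prev = rec.digest()
        return True

    def flagged(self) -> List[AuditRecord]:
        """Return records that a reviewer should look at first."""
        return [rec for rec in self._records if rec.flagged]
```

Hash-chaining is one design choice among several. It makes after-the-fact edits to earlier entries detectable without a database, which is the property an ethics or compliance reviewer would typically want from an audit trail.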

Organizational Structure Impact: IT departments will need to collaborate more closely with ethics committees, legal teams, and potentially philosophical advisors to ensure AI implementations meet emerging consciousness and ethics standards.

Cost and Compliance Implications

The shift toward consciousness-aware AI development introduces new cost structures for enterprise AI adoption. Organizations must budget for:

Extended Development Cycles: Philosophical review processes will likely extend AI development timelines, requiring adjusted project planning and resource allocation.

Specialized Expertise: Companies may need to hire or contract with ethicists and philosophers, adding to AI implementation costs.

Enhanced Testing Protocols: The 43% debugging rate for AI-generated code, combined with ethical review requirements, necessitates more comprehensive testing infrastructure investments.

Compliance Monitoring: Organizations will need systems to continuously monitor AI behavior for ethical compliance, requiring ongoing operational expenses.

Integration with Google’s AI Ecosystem

Google’s philosophical approach to AI consciousness research will likely influence its enterprise AI offerings, including Gemini (formerly Bard) and other AI services. Enterprise customers should prepare for:

Enhanced AI Transparency: Future Google AI services may include consciousness and ethics scoring mechanisms that help enterprises evaluate AI decision-making processes.

Philosophical APIs: Google may develop APIs that allow enterprise applications to query AI systems about their decision-making rationale and ethical considerations.

Compliance Frameworks: Integration with Google’s consciousness research could provide enterprises with built-in compliance tools for emerging AI ethics regulations.

What This Means

Google DeepMind’s hire of a philosopher to study AI consciousness represents a maturation of the AI industry toward addressing fundamental questions about machine intelligence and ethics. For enterprise IT leaders, this development signals the need to evolve AI governance frameworks beyond traditional technical and security concerns.

The immediate challenge lies in balancing the promised efficiency of AI-generated code against the reality that 43% of AI-generated code changes require manual debugging in production. Organizations must invest in robust testing and monitoring infrastructure while preparing for more sophisticated ethical oversight requirements.

Long-term, this philosophical approach to AI development will likely result in more transparent, accountable AI systems that better align with enterprise governance requirements. However, it will also introduce new complexity and cost considerations that IT decision-makers must factor into their AI adoption strategies.

FAQ

Q: How will philosophical AI research impact enterprise AI costs?

A: Organizations should expect increased development timelines and the need for specialized ethical oversight expertise, potentially adding 15-25% to AI implementation budgets while providing better long-term governance and compliance capabilities.

Q: What immediate steps should enterprises take regarding AI-generated code reliability?

A: Build two to three debugging cycles into deployment pipelines as a baseline, given that 43% of AI-generated code changes require manual debugging in production even after passing QA and staging tests.

Q: How does Google’s consciousness research affect enterprise AI vendor selection?

A: Enterprises should evaluate AI vendors based on their ethical frameworks and transparency capabilities, as consciousness-aware AI development will likely become a competitive differentiator and regulatory requirement.

Further Reading

- The philosopher trying to teach ethics to AI developers – NPR

- Could Microsoft Win The War For Enterprise AI? – Josh Bersin

Sources

- Who is Henry Shevlin? Google DeepMind Hires Philosopher to Study AI Ethics & Consciousness – outlookbusiness.com

- Meet Henry Shevlin: A ‘philosopher’ hired by Google DeepMind to study human-AI ties – Storyboard18

- Google DeepMind hires a philosopher, he will work on machine consciousness – The Indian Panorama

- Why Google DeepMind Just Abandoned Single-Score AI Testing – Geeky Gadgets