The Open Source AI Revolution Accelerates

Open source AI models are changing how organizations deploy artificial intelligence, with Meta’s Llama, Mistral, and other community-driven models gaining broad adoption across enterprises. According to Google Cloud’s latest report, 1,302 real-world generative AI use cases are now deployed across leading organizations, a pace that some observers describe as the fastest technological transformation in recent history.

The shift toward open source AI represents more than a technical preference. It signals an inflection point in how society approaches AI governance, transparency, and democratic access to powerful technologies. OpenAI’s recent launch of Privacy Filter on Hugging Face under an Apache 2.0 license suggests that even traditionally closed-source companies are recognizing the ethical case for open development.

Democratizing AI Access Through Open Weights

The availability of open weights through platforms like Hugging Face has fundamentally altered the AI landscape’s power dynamics. Unlike proprietary models that concentrate control within a few tech giants, open source alternatives like Llama and Mistral enable organizations of all sizes to access, modify, and deploy sophisticated AI capabilities.

This democratization carries profound ethical implications. Smaller organizations, academic institutions, and developing nations can now leverage state-of-the-art AI without being subject to the commercial interests or geopolitical constraints of major tech companies. However, this accessibility also raises concerns about potential misuse and the need for responsible deployment frameworks.

The fine-tuning capabilities offered by open source models present both opportunities and challenges. While organizations can customize models for specific use cases and cultural contexts, the same flexibility that enables beneficial applications also facilitates potentially harmful adaptations.

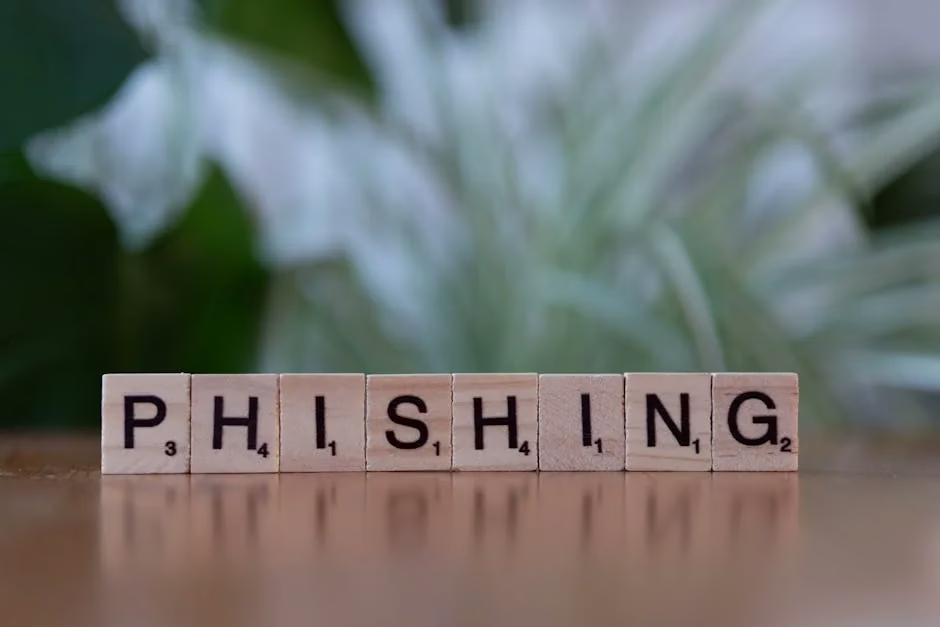

Transparency Versus Security Trade-offs

Open source AI models embody a fundamental tension between transparency and security. The availability of model weights and training methodologies enables close scrutiny of AI systems, allowing researchers to identify biases, study failure modes, and develop mitigation strategies. This transparency is essential for building trustworthy AI systems that can be held accountable to the public interest.

However, recent security incidents highlight the complexity of this trade-off. VentureBeat’s enterprise survey reports that 88% of organizations experienced AI agent security incidents in the past year, while only 21% maintain runtime visibility into agent actions. Openly available models can amplify these risks when proper governance frameworks are not in place.
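To make "runtime visibility into agent actions" concrete, here is a minimal sketch of one way to record every tool call an agent makes. It is an illustration under stated assumptions, not a description of any surveyed organization's tooling: `audited`, `AUDIT_LOG`, and `delete_file` are hypothetical names, and the log is kept in memory purely for brevity.

```python
import functools
import time
from typing import Any, Callable

# Hypothetical in-memory audit trail; a real system would likely ship these
# records to durable, tamper-resistant storage instead.
AUDIT_LOG: list[dict[str, Any]] = []


def audited(tool: Callable[..., Any]) -> Callable[..., Any]:
    """Wrap a tool so that every invocation is recorded, including failures."""

    @functools.wraps(tool)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        entry = {
            "tool": tool.__name__,
            "args": args,
            "kwargs": kwargs,
            "ts": time.time(),
        }
        try:
            result = tool(*args, **kwargs)
            entry["status"] = "ok"
            return result
        except Exception as exc:
            entry["status"] = f"error: {exc}"
            raise
        finally:
            # Runs on both success and failure, so no call goes unlogged.
            AUDIT_LOG.append(entry)

    return wrapper


@audited
def delete_file(path: str) -> str:
    # Hypothetical agent tool; it only pretends to delete anything.
    return f"deleted {path}"


if __name__ == "__main__":
    delete_file("/tmp/report.csv")
    for record in AUDIT_LOG:
        print(record["tool"], record["status"], record["args"])
```

Wrapping tools at the call boundary keeps the logging independent of which model drives the agent, so the same approach would apply to open and proprietary models alike.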

OpenAI’s Privacy Filter release demonstrates one approach to addressing these concerns: open source tools that enable privacy-preserving AI deployment. The 1.5-billion-parameter model can run locally to sanitize data before it reaches cloud servers, showing how open source development can enhance rather than compromise security.
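The "sanitize locally, then send" pattern can be sketched in a few lines. This example does not use Privacy Filter or reproduce its interface. It swaps in two simple regular expressions as a stand-in for a trained model, and `PATTERNS`, `redact`, and `send_to_cloud` are hypothetical names chosen for illustration.

```python
import re

# Illustrative patterns only (assumption): a trained model would catch far
# more kinds of personal data than these two regular expressions.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each match with a bracketed label, entirely on the local machine."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def send_to_cloud(payload: str) -> str:
    # Stand-in for a remote API call (assumption); it returns what would be sent.
    return payload


if __name__ == "__main__":
    raw = "Contact Jane at jane.doe@example.com or 555-123-4567."
    # Only the redacted text ever crosses the network boundary.
    print(send_to_cloud(redact(raw)))
```

The design point is the ordering: redaction happens before any network call, so the remote service never receives the original identifiers.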

Governance Challenges in the Agentic Era

As we enter what Google describes as the “agentic enterprise” era, the governance challenges surrounding open source AI models become increasingly complex. Unlike traditional software, AI agents can make autonomous decisions that directly impact human lives and organizational operations.

The distributed nature of open source development complicates traditional regulatory approaches. Who bears responsibility when an open source model causes harm? How can policymakers ensure compliance with emerging AI regulations when the development process involves hundreds of contributors across multiple jurisdictions?

Recent reporting on agentic AI security indicates that 97% of enterprise security leaders expect a material AI-agent-driven incident within 12 months, yet only 6% of security budgets address this risk. This disconnect suggests that current governance frameworks are inadequate for the realities of open source AI deployment.

Bias, Fairness, and Representational Concerns

Open source AI models present unique opportunities and challenges regarding bias and fairness. The transparency of open development enables community-driven bias detection and mitigation efforts that would be impossible with proprietary models. Diverse global contributors can identify cultural biases and develop more inclusive training approaches.

However, the democratization of AI development doesn’t automatically ensure equitable outcomes. Open source projects often reflect the demographics and perspectives of their contributor communities, which may not represent the full diversity of end users. Without intentional efforts to include underrepresented voices in development processes, open source models risk perpetuating existing inequalities.

The fine-tuning capabilities of models like Llama and Mistral offer potential solutions, allowing organizations to adapt models for specific cultural contexts and use cases. Yet this same flexibility raises questions about consistency in ethical standards across different deployments of the same base model.

Economic and Social Impact Considerations

The rise of open source AI models has significant implications for economic equity and social development. By reducing barriers to AI adoption, these models enable smaller companies and developing regions to compete more effectively in the global digital economy.

Educational institutions particularly benefit from open source AI access. Hugging Face’s recent ML Intern launch demonstrates how open platforms can accelerate AI education and research capabilities.

However, the economic disruption caused by AI automation affects all deployment models. Open source accessibility may accelerate job displacement in certain sectors while creating new opportunities in others. Policymakers must consider how to ensure that the benefits of open AI development are distributed equitably across society.

What This Means

The proliferation of open source AI models like Llama and Mistral represents a critical juncture in AI development that demands thoughtful ethical consideration and proactive governance. While these models democratize access to powerful AI capabilities and enhance transparency, they also introduce new challenges around security, accountability, and equitable deployment.

The key to realizing the benefits of open source AI while mitigating risks lies in developing robust governance frameworks that can adapt to the distributed nature of open development. This requires collaboration between technologists, policymakers, ethicists, and affected communities to establish standards that promote responsible innovation without stifling beneficial progress.

As organizations increasingly deploy agentic AI systems built on open source foundations, the need for comprehensive risk management and ethical oversight becomes paramount. The future of AI governance will likely depend on our ability to balance the democratic ideals of open source development with the practical requirements of safety, security, and accountability.

FAQ

What are the main ethical advantages of open source AI models?

Open source AI models enhance transparency, enable community-driven bias detection, democratize access to AI capabilities, and allow for independent security audits. This openness facilitates more accountable and inclusive AI development compared to proprietary alternatives.

How do open source models like Llama and Mistral address bias concerns?

These models enable diverse global communities to identify and address biases through collaborative development. Organizations can also fine-tune models for specific cultural contexts and use cases, though this requires careful attention to maintaining ethical standards across different deployments.

What governance challenges do open source AI models present?

Key challenges include determining accountability when distributed development leads to harmful outcomes, ensuring compliance with AI regulations across multiple jurisdictions, and managing security risks while maintaining the benefits of transparency and accessibility.

Sources

- Fine-Tuning Your First Large Language Model (LLM) with PyTorch and Hugging Face – HuggingFace Blog

- OpenAI launches Privacy Filter, an open source, on-device data sanitization model that removes personal information from enterprise datasets – VentureBeat

- Hugging Face launches ML Intern, AI agent that beats Claude Code on reasoning – EdTech Innovation Hub