Open-source artificial intelligence models are reshaping enterprise computing architectures. New frameworks and platforms such as NanoClaw 2.0 and Salesforce’s Headless 360, together with research on Train-to-Test scaling laws, give organizations more control over AI deployment costs and security. Collectively, these developments address three of the most pressing challenges in enterprise AI adoption: inference optimization, agent security, and cost-effective model training.

Fine-Tuning Democratizes Custom AI Model Development

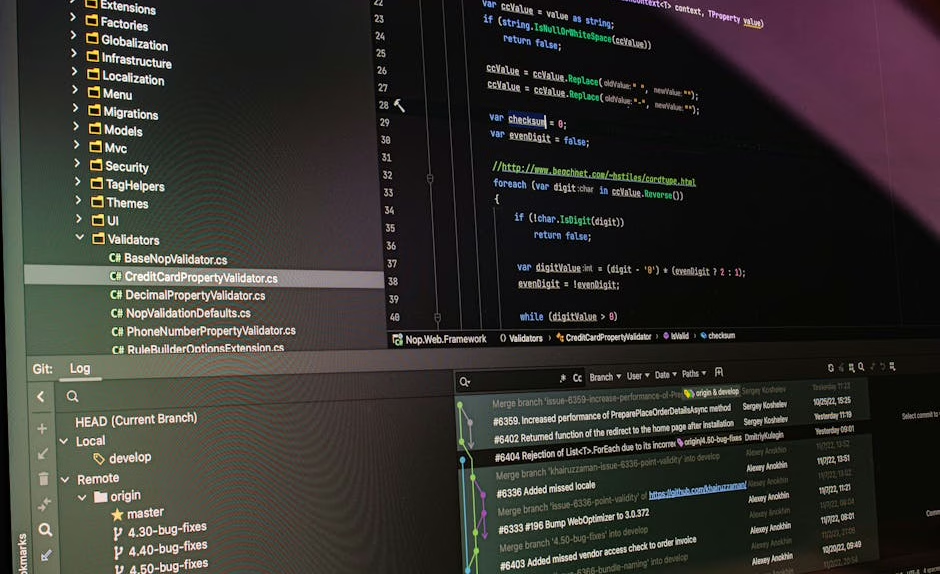

Fine-tuning large language models has become far more accessible, in part because Hugging Face’s educational resources now document complete PyTorch-based fine-tuning workflows. These resources let organizations adapt open-source models such as Llama and Mistral to specific enterprise use cases.

Fine-tuning is a shift away from relying solely on general-purpose foundation models. Parameter-efficient fine-tuning (PEFT) techniques such as Low-Rank Adaptation (LoRA) freeze the pretrained weights and train only a small number of added parameters, so organizations can achieve domain-specific performance improvements while keeping memory and storage requirements low.
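To make the LoRA idea concrete, here is a dependency-free sketch of a LoRA-adapted linear layer. The pretrained weight W stays frozen, and only two small matrices, A (rank x d_in) and B (d_out x rank), are trained, so the effective weight becomes W + (alpha / rank) * BA. The matrix sizes, rank, and `alpha` values are illustrative assumptions; real fine-tuning code would use PyTorch tensors and a library such as Hugging Face’s `peft` rather than nested lists.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a matrix (a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters in A (rank x d_in) plus B (d_out x rank)."""
    return rank * (d_in + d_out)


class LoRALinear:
    """Frozen linear layer plus a trainable low-rank update:
    y = W x + (alpha / rank) * B (A x)."""

    def __init__(self, weight: Matrix, rank: int, alpha: float) -> None:
        self.weight = weight  # pretrained d_out x d_in weight, kept frozen
        self.d_out, self.d_in = len(weight), len(weight[0])
        self.rank = rank
        self.scale = alpha / rank
        # A would normally be randomly initialized; small deterministic values
        # keep this sketch reproducible. B starts at zero, so the adapted layer
        # initially behaves exactly like the pretrained one.
        self.a = [[0.01 * (i + j + 1) for j in range(self.d_in)] for i in range(rank)]
        self.b = [[0.0] * rank for _ in range(self.d_out)]

    def forward(self, x: Vector) -> Vector:
        base = matvec(self.weight, x)                # frozen path
        update = matvec(self.b, matvec(self.a, x))   # low-rank trainable path
        return [y + self.scale * u for y, u in zip(base, update)]

    def trainable_params(self) -> int:
        return lora_params(self.d_out, self.d_in, self.rank)
```

For a hypothetical 4096 x 4096 projection, `lora_params(4096, 4096, 8)` gives 65,536 trainable parameters, compared with 16,777,216 for fully fine-tuning that matrix, or roughly 0.4%.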

These fine-tuning workflows typically rely on gradient accumulation, which accumulates gradients over several small micro-batches before each optimizer step to emulate a larger batch, and on mixed-precision training, which performs most computation in 16-bit floating point. Both reduce peak GPU memory during adaptation, so fine-tuning can run on more modest hardware, lowering the barrier to entry for organizations seeking to build custom AI solutions.
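Gradient accumulation is easy to check numerically. The sketch below uses a toy one-parameter linear model with mean-squared-error loss (an assumption chosen for clarity, not taken from any specific framework) to show that averaging gradients over equal-sized micro-batches before a single update produces the same gradient as one large batch, while only one micro-batch has to be processed at a time.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (x, y)


def grad_mse(w: float, batch: List[Sample]) -> float:
    """Gradient of mean((w * x - y) ** 2) with respect to w."""
    return sum(2.0 * (w * x - y) * x for x, y in batch) / len(batch)


def accumulated_grad(w: float, data: List[Sample], micro_batch: int) -> float:
    """Average per-micro-batch gradients, as gradient accumulation does
    before the optimizer step."""
    if len(data) % micro_batch != 0:
        raise ValueError("this sketch assumes equal-sized micro-batches")
    steps = len(data) // micro_batch
    total = 0.0
    for i in range(steps):
        chunk = data[i * micro_batch:(i + 1) * micro_batch]
        # Dividing by `steps` mirrors scaling each micro-batch loss by 1 / steps.
        total += grad_mse(w, chunk) / steps
    return total


def sgd_step(w: float, grad: float, lr: float) -> float:
    """One plain gradient-descent update, applied once per accumulated group."""
    return w - lr * grad
```

In a PyTorch training loop the same effect comes from calling `loss.backward()` on each micro-batch (with the loss divided by the number of accumulation steps) and calling `optimizer.step()` and `optimizer.zero_grad()` only once per group of micro-batches.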

Train-to-Test Scaling Laws Optimize AI Compute Economics

Researchers at the University of Wisconsin-Madison and Stanford University have introduced Train-to-Test (T²) scaling laws that challenge conventional wisdom about optimal model size and training data allocation. According to VentureBeat’s analysis, the framework shows that training smaller models on far more data, and spending the resulting compute savings on inference-time scaling, yields better performance per dollar.

The T² methodology addresses a gap in existing scaling laws, which optimize only for training compute and ignore inference costs. Training-only recipes favor larger models trained on comparatively less data, but the new research finds that smaller, extensively trained models combined with multiple inference samples achieve better results on complex reasoning tasks.
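The accounting gap is easiest to see with common rule-of-thumb FLOP estimates: roughly 6 * N * D to train a model with N parameters on D tokens, and roughly 2 * N per token processed at inference. The sketch below applies those approximations to hypothetical model sizes, token counts, query volumes, and sample counts. It illustrates joint train-plus-inference cost accounting only; it is not the fitted T² law from the paper, and it says nothing about accuracy.

```python
from dataclasses import dataclass


@dataclass
class Config:
    name: str
    params: float           # N, model parameters
    train_tokens: float     # D, training tokens
    samples_per_query: int  # inference-time samples drawn per query


def train_flops(c: Config) -> float:
    # Rule of thumb: ~6 FLOPs per parameter per training token.
    return 6.0 * c.params * c.train_tokens


def inference_flops(c: Config, queries: float, tokens_per_sample: float) -> float:
    # Rule of thumb: ~2 FLOPs per parameter per token processed at inference.
    return 2.0 * c.params * tokens_per_sample * c.samples_per_query * queries


def total_flops(c: Config, queries: float, tokens_per_sample: float) -> float:
    """Training plus lifetime inference compute, the quantity training-only
    scaling laws leave out."""
    return train_flops(c) + inference_flops(c, queries, tokens_per_sample)


# Hypothetical comparison: one large model sampled once per query versus a
# smaller model trained on more tokens and sampled four times per query.
large = Config("large", params=70e9, train_tokens=1.4e12, samples_per_query=1)
small = Config("small", params=8e9, train_tokens=10e12, samples_per_query=4)
for cfg in (large, small):
    print(cfg.name, f"{total_flops(cfg, queries=1e9, tokens_per_sample=1000):.3g}")
```

Whether the smaller configuration also matches the larger one on accuracy is exactly what a Train-to-Test law is fitted to predict; this arithmetic only covers the cost side.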

Key findings from the research include:

- Compute-optimal models are substantially smaller than previously recommended

- Training data volume should be increased proportionally to the parameter reduction

- Inference-time scaling through multiple reasoning samples provides cost-effective accuracy improvements

- Total cost of ownership decreases significantly when training and inference costs are jointly optimized
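Why extra inference samples can buy accuracy follows from simple probability. If each sample independently solves a problem with probability p, the chance that at least one of k samples is correct is 1 - (1 - p)^k, which matters when a verifier can recognize the correct answer. Without a verifier, majority voting over sampled answers is a common way to choose a single final answer. The independence assumption and the voting scheme are simplifications for illustration, not necessarily the inference strategy used in the T² study.

```python
from collections import Counter
from typing import List, Optional


def coverage(p: float, k: int) -> float:
    """Probability that at least one of k independent samples is correct."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be a probability")
    return 1.0 - (1.0 - p) ** k


def majority_vote(answers: List[str]) -> Optional[str]:
    """Most common sampled answer; ties go to the answer seen first."""
    if not answers:
        return None
    return Counter(answers).most_common(1)[0][0]


# Hypothetical per-sample success rate of 30%: four samples raise coverage
# from 0.3 to 1 - 0.7**4, about 0.76.
print(coverage(0.3, 1), coverage(0.3, 4))
print(majority_vote(["42", "41", "42", "40"]))
```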

This approach particularly benefits enterprise applications that require complex reasoning, such as financial analysis, legal document review, and technical troubleshooting.

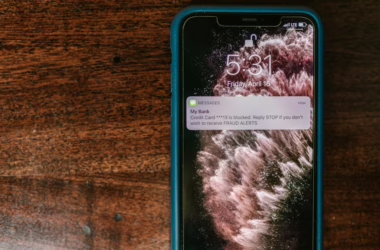

Agent Security Architecture Addresses Enterprise Deployment Gaps

Enterprise AI agent security has emerged as a critical bottleneck, with recent incidents at Meta and other organizations highlighting fundamental architectural vulnerabilities. According to VentureBeat’s three-wave survey of 108 qualified enterprises, 82% of executives believe their policies protect against unauthorized agent actions, yet 88% reported AI agent security incidents within the past twelve months.

The disconnect between perceived and actual security stems from a structural gap that security professionals describe as “monitoring without enforcement, enforcement without isolation.” Many current enterprise architectures observe and log agent behavior but cannot intervene in real time, for example by blocking an action before it executes.
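The difference between monitoring and enforcement fits in a few lines. In this hypothetical sketch (the `Action` type, the `ALLOWED_WRITES` policy, and both wrapper functions are invented for illustration), the monitoring path records a policy violation only after the action has already run, while the enforcing path checks the same policy first and refuses to execute.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Action:
    name: str
    is_write: bool


# Hypothetical policy: the only write actions this agent may perform.
ALLOWED_WRITES = {"create_ticket"}


def violates_policy(action: Action) -> bool:
    return action.is_write and action.name not in ALLOWED_WRITES


def run_monitored(action: Action, execute: Callable[[Action], None],
                  alerts: List[str]) -> None:
    """Monitoring only: the action runs, then any violation is recorded."""
    execute(action)
    if violates_policy(action):
        alerts.append(f"violation after the fact: {action.name}")


def run_enforced(action: Action, execute: Callable[[Action], None],
                 alerts: List[str]) -> bool:
    """Enforcement: the policy is checked first and violations never execute."""
    if violates_policy(action):
        alerts.append(f"blocked: {action.name}")
        return False
    execute(action)
    return True
```

Both paths produce an alert, which is why dashboards can look equally reassuring; only the enforcing path prevents the damage.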

NanoClaw 2.0 addresses these vulnerabilities through infrastructure-level enforcement mechanisms. The framework introduces standardized approval workflows that integrate with existing enterprise messaging platforms, ensuring that high-consequence “write” actions require explicit human authorization before execution.

The technical implementation leverages:

- Sandboxed execution environments that isolate agent operations

- API-level permission controls that prevent unauthorized system access

- Real-time approval workflows integrated with Slack, WhatsApp, and other messaging platforms

- Cryptographic verification of human consent before sensitive operations
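A human-approval gate with verifiable consent might look roughly like the following. This is a hypothetical sketch, not NanoClaw’s actual API: the approver’s decision is bound to one specific action by an HMAC-SHA256 tag over the action’s canonical JSON, and the gate executes a write action only if that tag verifies. How the approval request reaches Slack or WhatsApp, and how the approval service holds the signing key, are outside the scope of the sketch.

```python
import hashlib
import hmac
import json
from typing import Any, Callable, Dict, Optional


def canonical(action: Dict[str, Any]) -> bytes:
    """Stable byte encoding of an action so approval tags are reproducible."""
    return json.dumps(action, sort_keys=True, separators=(",", ":")).encode()


def sign_approval(key: bytes, action: Dict[str, Any]) -> str:
    """Hypothetical: called by the approval service after a human approves."""
    return hmac.new(key, canonical(action), hashlib.sha256).hexdigest()


def execute_if_approved(key: bytes, action: Dict[str, Any],
                        approval: Optional[str],
                        execute: Callable[[Dict[str, Any]], Any]) -> bool:
    """Run read actions directly; run write actions only with a valid approval."""
    if action.get("kind") == "write":
        if approval is None:
            return False  # no human decision yet: do not execute
        expected = sign_approval(key, action)
        if not hmac.compare_digest(expected, approval):
            return False  # approval covers a different action, or is forged
    execute(action)
    return True
```

Because the tag covers every field of the action, an approval for a $100 refund cannot be replayed to authorize a $10,000 one.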

Salesforce Headless 360 Redefines Platform Architecture

Salesforce’s announcement of Headless 360 represents the most significant architectural transformation in the company’s 27-year history. The initiative exposes every platform capability as an API, a Model Context Protocol (MCP) tool, or a CLI command, enabling AI agents to operate the entire system programmatically.

This architectural shift addresses a fundamental question facing enterprise software providers: whether traditional graphical user interfaces remain relevant in an agent-driven computing environment. Salesforce’s answer is to rebuild its platform to prioritize programmatic access over visual interfaces.

The technical implementation includes:

- Over 100 new tools and skills immediately available to developers

- Universal API exposure for all platform capabilities

- MCP tool integration for standardized agent interactions

- CLI command interfaces for scriptable operations
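For readers unfamiliar with MCP, the protocol exchanges JSON-RPC 2.0 messages: a server advertises tools (each with a `name`, a `description`, and a JSON Schema `inputSchema`) in response to `tools/list`, and a client invokes one with a `tools/call` request carrying the tool name and its arguments. The sketch below builds a tool descriptor and a call request with Python’s standard library. The `update_opportunity_stage` tool and its fields are hypothetical and do not describe any actual Headless 360 tool, and the `required`-field check is a stand-in for full JSON Schema validation.

```python
import json
from typing import Any, Dict, List

# Hypothetical tool, shaped like one entry in an MCP `tools/list` result.
UPDATE_STAGE_TOOL: Dict[str, Any] = {
    "name": "update_opportunity_stage",
    "description": "Move a sales opportunity to a new pipeline stage.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "opportunity_id": {"type": "string"},
            "stage": {"type": "string",
                      "enum": ["Prospecting", "Negotiation", "Closed Won"]},
        },
        "required": ["opportunity_id", "stage"],
    },
}


def missing_required(tool_def: Dict[str, Any], arguments: Dict[str, Any]) -> List[str]:
    """Minimal check of the schema's `required` list (not full validation)."""
    return [k for k in tool_def["inputSchema"].get("required", []) if k not in arguments]


def tools_call_request(request_id: int, tool_def: Dict[str, Any],
                       arguments: Dict[str, Any]) -> str:
    """Serialize a JSON-RPC 2.0 `tools/call` request as an MCP client would send it."""
    missing = missing_required(tool_def, arguments)
    if missing:
        raise ValueError(f"missing required arguments: {missing}")
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_def["name"], "arguments": arguments},
    }
    return json.dumps(message)
```

The design point is that the schema, not a screen layout, tells the agent what it may send, which is why an API-first platform can be driven entirely by agents.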

Jayesh Govindarajan, an EVP at Salesforce and a key architect of the initiative, said the transformation stems from recognizing that AI agents require fundamentally different interaction patterns than human users do.

Performance Metrics and Benchmarking Standards

Evaluating open-source AI models requires benchmarks that account for both training efficiency and inference performance. Recent evaluation frameworks assess several dimensions at once, including computational cost, accuracy, and deployment scalability.

Hugging Face’s evaluation infrastructure provides standardized benchmarks for comparing fine-tuned models across different domains and use cases. These benchmarks incorporate metrics such as:

- BLEU scores, which measure n-gram overlap with reference texts, for text generation quality

- Perplexity measurements for language modeling performance

- Task-specific accuracy for domain applications

- Inference latency and throughput measurements

- Memory utilization during training and deployment
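Perplexity, one of the metrics listed above, is the exponential of the average negative log-likelihood a model assigns to the tokens that actually occur. Lower is better, and a model that spreads probability uniformly over V choices scores exactly V. A minimal sketch computed from per-token probabilities (in practice these come from the model’s softmax outputs over an evaluation set):

```python
import math
from typing import Sequence


def perplexity(token_probs: Sequence[float]) -> float:
    """exp(mean negative log-likelihood) over the probabilities the model
    assigned to each actual next token."""
    if not token_probs:
        raise ValueError("need at least one token")
    nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(nll)


# A model that always assigns 0.25 to the correct token is as uncertain as a
# uniform choice among 4 options, so its perplexity is 4.
print(perplexity([0.25] * 8))
```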

The integration of cost-aware metrics represents a significant advancement in model evaluation, enabling organizations to make informed decisions about the trade-offs between model capability and operational expenses.
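One simple cost-aware metric is cost per correct answer: the average cost of a query divided by accuracy. The sketch below uses hypothetical per-token prices, token counts, and accuracies (not benchmark results) to show how a cheaper model that draws several samples per query can be compared with a larger single-sample model on this metric.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    name: str
    usd_per_million_tokens: float
    tokens_per_query: float  # prompt + generated tokens, summed over all samples
    accuracy: float          # fraction of queries answered correctly


def cost_per_query(d: Deployment) -> float:
    return d.usd_per_million_tokens * d.tokens_per_query / 1_000_000


def cost_per_correct(d: Deployment) -> float:
    """Average spend needed to obtain one correct answer."""
    if d.accuracy <= 0:
        raise ValueError("accuracy must be positive")
    return cost_per_query(d) / d.accuracy


# Hypothetical numbers only.
large = Deployment("large", usd_per_million_tokens=10.0, tokens_per_query=2000, accuracy=0.80)
small = Deployment("small-x4", usd_per_million_tokens=1.0, tokens_per_query=8000, accuracy=0.76)
for d in (large, small):
    print(d.name, round(cost_per_correct(d), 4))
```

Accuracy alone would favor the larger deployment here; dividing by cost reverses the ranking, which is the kind of trade-off cost-aware metrics are meant to surface.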

What This Means

These developments collectively signal a maturation of the open-source AI ecosystem, with enterprise-grade security, cost optimization, and deployment frameworks now available to organizations of all sizes. The convergence of more accessible fine-tuning, new scaling laws, and robust security architectures removes many traditional barriers to AI adoption.

For enterprise decision-makers, the implications are significant. Organizations have clearer paths to deploying custom AI solutions: security vulnerabilities can be addressed systematically through enforcement rather than monitoring alone, and operational costs can be optimized through evidence-based scaling approaches. The shift toward headless, API-first architectures also suggests that the next generation of enterprise software will differ fundamentally from current GUI-based systems.

The technical community benefits from standardized frameworks that enable reproducible research and development. The availability of comprehensive fine-tuning resources, combined with rigorous evaluation methodologies, accelerates innovation while maintaining scientific rigor.

FAQ

What are the key advantages of open-source AI models over proprietary alternatives?

Open-source models make their architecture and weights available for inspection (although how much of the training data and methodology is disclosed varies by model), enable custom fine-tuning for specific use cases, reduce vendor lock-in, and can lower costs through optimized deployment strategies. Organizations that self-host them retain full control over their AI infrastructure and can modify models to meet specific regulatory or performance requirements.

How do Train-to-Test scaling laws change AI development strategies?

T² scaling laws suggest that training smaller models on larger datasets and spending the computational savings on inference-time scaling delivers better performance per dollar than traditional large-model approaches. In practice, organizations should prioritize extensive training data over parameter count and budget for multiple inference samples rather than maximizing model size.

What security measures are essential for enterprise AI agent deployment?

Enterprise AI agents require infrastructure-level security with sandboxed execution environments, explicit human approval workflows for sensitive operations, real-time monitoring with enforcement capabilities, and cryptographic verification of authorization. Traditional monitoring-only approaches are insufficient for production deployments handling critical business operations.