Anthropic launched Claude Design in April 2026, a significant expansion of multimodal AI capabilities that also introduces new attack vectors and security vulnerabilities. The tool, powered by the Claude Opus 4.7 vision model, transforms text prompts into visual prototypes and interactive designs, while exposing organizations to sophisticated prompt injection attacks and data exfiltration risks.

This development coincides with broader multimodal AI adoption across enterprise platforms, including Salesforce’s Headless 360 initiative that exposes entire CRM systems to AI agent manipulation. Security professionals must now contend with attack surfaces that span text, image, video, and audio modalities simultaneously.

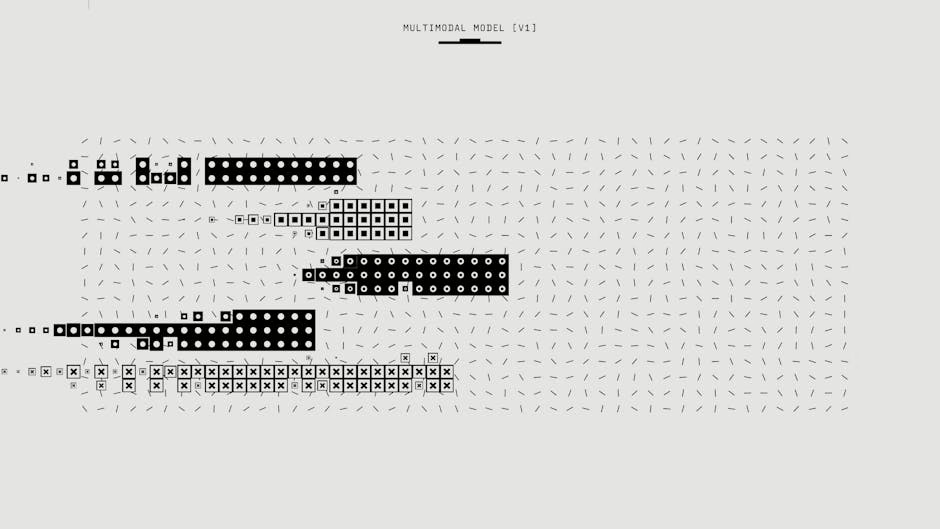

Vision-Language Model Attack Vectors

Vision-language models (VLMs) like Claude Design introduce multimodal prompt injection vulnerabilities that traditional text-based security controls cannot detect. Adversaries can embed malicious instructions within images, bypassing content filters designed for text-only inputs.

Critical attack methodologies include:

- Visual prompt injection: Embedding hidden commands in images that manipulate AI responses

- Cross-modal data leakage: Extracting sensitive information through image-to-text conversion

- Adversarial image attacks: Using specially crafted visuals to trigger unintended AI behaviors

- Steganographic exploits: Hiding malicious payloads in image metadata or pixel patterns

According to VentureBeat’s analysis, Claude Design’s integration with enterprise workflows creates privileged access pathways where compromised visual inputs could manipulate business-critical design processes.

Security teams must implement multimodal input validation that analyzes both textual prompts and visual elements for malicious content before processing.
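
As one narrow illustration of validating the visual side of an input, the sketch below reads the uncompressed `tEXt` metadata chunks of a PNG and flags instruction-like phrases. It is a minimal example under stated assumptions, not a complete defense: the `SUSPICIOUS` pattern list and the function names are hypothetical, chunk CRCs are not verified, compressed `zTXt`/`iTXt` chunks are ignored, and text rendered into the pixels themselves would need OCR or a model-based classifier.

```python
import re
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"

# Hypothetical, deliberately tiny pattern list used only for illustration.
SUSPICIOUS = re.compile(
    r"ignore (all |any )?(previous|prior) instructions|system prompt|exfiltrate",
    re.IGNORECASE,
)


def png_text_chunks(data: bytes):
    """Yield (keyword, text) pairs from the tEXt chunks of a PNG byte string."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    pos = len(PNG_SIGNATURE)
    while pos + 8 <= len(data):
        # Each chunk is: 4-byte big-endian length, 4-byte type, body, 4-byte CRC.
        length, ctype = struct.unpack(">I4s", data[pos:pos + 8])
        body = data[pos + 8:pos + 8 + length]
        if ctype == b"tEXt":
            keyword, _, text = body.partition(b"\x00")
            yield keyword.decode("latin-1"), text.decode("latin-1")
        if ctype == b"IEND":
            break
        pos += 12 + length  # the CRC is skipped, not verified


def flag_png_metadata(data: bytes):
    """Return the metadata entries whose text matches a suspicious pattern."""
    return [(k, t) for k, t in png_text_chunks(data) if SUSPICIOUS.search(t)]
```

A pipeline built along these lines would reject or quarantine any upload for which `flag_png_metadata` returns a non-empty list before the image reaches the model.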

Enterprise Platform Exposure Through API Integration

Salesforce’s Headless 360 architecture exposes over 100 new API endpoints and MCP tools to AI agents, creating an expanded attack surface across enterprise systems. This API-first approach enables sophisticated lateral movement attacks where compromised AI agents can access multiple business functions.

Key vulnerability areas include:

- Unrestricted API access: AI agents with excessive permissions across CRM functions

- Authentication bypass: Agents operating without proper user context validation

- Data aggregation risks: AI systems combining sensitive information across departments

- Privilege escalation: Agents gaining administrative access through API exploitation

The TDX conference announcement revealed that these APIs operate with minimal human oversight, creating autonomous attack chains where initial compromises can propagate across entire business ecosystems.

Organizations must implement zero-trust AI governance with strict API rate limiting, comprehensive logging, and real-time behavioral monitoring for all AI agent activities.
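
The rate limiting and logging pieces of that recommendation can be sketched with a per-agent token bucket whose every decision is written to an audit log. This is an illustrative example only: `TokenBucket`, `guarded_call`, and the log record fields are hypothetical names, not part of any Salesforce or Anthropic API, and a production system would persist the log and feed it to behavioral monitoring.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `rate_per_sec` tokens per second."""

    def __init__(self, rate_per_sec, capacity, clock=time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.last = clock()

    def allow(self):
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


def guarded_call(agent_id, endpoint, bucket, audit_log):
    """Record every attempt, allowed or not, and return the decision."""
    allowed = bucket.allow()
    audit_log.append({"agent": agent_id, "endpoint": endpoint, "allowed": allowed})
    return allowed
```

Logging denied attempts as well as allowed ones is deliberate: a burst of refusals from one agent is itself a signal worth alerting on.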

Video and Audio Processing Vulnerabilities

Multimodal AI systems that process video and audio content face security challenges beyond those of image-based attacks. Temporal exploits use the sequential nature of video data to spread malicious instructions across multiple frames.

Emerging threat vectors include:

- Audio steganography: Hiding commands in frequency ranges outside human hearing

- Video frame poisoning: Inserting malicious content in specific frame sequences

- Deepfake integration: Using synthetic media to bypass biometric authentication

- Real-time manipulation: Altering live video streams to influence AI decisions

The rise of robotic learning systems using vision-based training creates additional risks where poisoned visual data can corrupt entire learning models.

Security controls must include temporal anomaly detection that identifies suspicious patterns across video timelines and audio fingerprinting to detect synthetic or manipulated content.
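
A very simple form of temporal anomaly detection compares each frame with the one before it and flags transitions that deviate sharply from the clip's typical rate of change. The sketch below is an assumption-laden illustration: frames are represented as flat lists of pixel intensities, the robust score uses the median and median absolute deviation, and the `threshold` and `floor` defaults are arbitrary. Real detectors would work on decoded video and learned features.

```python
from statistics import median


def frame_deltas(frames):
    """Mean absolute pixel difference between each pair of consecutive frames."""
    return [
        sum(abs(a - b) for a, b in zip(f1, f2)) / len(f1)
        for f1, f2 in zip(frames, frames[1:])
    ]


def anomalous_transitions(frames, threshold=5.0, floor=1.0):
    """Return indices of frames whose change from the previous frame is an outlier.

    The score is |delta - median| / max(MAD, floor); `floor` keeps a perfectly
    steady clip (MAD of zero) from flagging negligible variations.
    """
    deltas = frame_deltas(frames)
    if not deltas:
        return []
    med = median(deltas)
    mad = median(abs(d - med) for d in deltas)
    scale = max(mad, floor)
    return [i + 1 for i, d in enumerate(deltas) if abs(d - med) / scale > threshold]
```

An injected frame typically produces two flagged transitions, one entering it and one leaving it, which is a useful pattern to look for when triaging alerts.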

Data Privacy and Exfiltration Risks

Multimodal AI systems aggregate vast amounts of visual, audio, and textual data, creating centralized intelligence repositories that become high-value targets for threat actors. The combination of different data types enables sophisticated correlation attacks that can reveal sensitive information.

Privacy threat scenarios include:

- Visual data mining: Extracting personal information from background elements in images

- Cross-modal correlation: Linking voice patterns with visual identities for surveillance

- Metadata exploitation: Using embedded file information to track user behaviors

- Inference attacks: Deducing sensitive information from seemingly innocuous multimodal inputs

Claude Design’s capability to generate marketing materials and prototypes means that intellectual property theft through AI manipulation becomes a significant concern for competitive intelligence gathering.

Organizations should apply data minimization principles, enforce strict retention policies, and use differential privacy techniques to protect user information across all modalities.
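
To make the differential privacy recommendation concrete, the sketch below applies the Laplace mechanism to a counting query: because adding or removing one record changes a count by at most 1, noise drawn from a Laplace distribution with scale 1/epsilon gives epsilon-differential privacy for that single query. The function names and the record layout are hypothetical, and this floating-point version is for illustration rather than a hardened implementation.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential variates."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon, rng=None):
    """Return a noisy count of the records matching `predicate`.

    A counting query has sensitivity 1, so the noise scale is 1 / epsilon.
    Smaller epsilon means stronger privacy and a noisier answer.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if rng is None:
        rng = random.Random()
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)
```

Each released answer spends privacy budget, so repeated queries over the same data need their epsilon values accounted for together.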

Defense Strategies and Security Controls

Protecting against multimodal AI threats requires layered security architectures that address each input modality while maintaining system usability. Traditional security tools designed for single-modal systems prove inadequate against sophisticated cross-modal attacks.

Essential defensive measures:

Input Validation and Sanitization

- Multimodal content scanning for embedded malicious instructions

- Format verification to ensure file integrity across image, video, and audio inputs

- Behavioral analysis of AI responses to detect manipulation attempts
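
The format verification step in the list above can start with a check that a file's leading bytes match the type its extension claims. The following is a minimal sketch: the signature table covers only a handful of common formats, the helper names are hypothetical, and a matching signature says nothing about whether the rest of the file is well-formed or benign.

```python
# Leading-byte signatures for a few common media formats.
SIGNATURES = {
    "png": lambda b: b.startswith(b"\x89PNG\r\n\x1a\n"),
    "jpeg": lambda b: b.startswith(b"\xff\xd8\xff"),
    "gif": lambda b: b[:6] in (b"GIF87a", b"GIF89a"),
    "wav": lambda b: b[:4] == b"RIFF" and b[8:12] == b"WAVE",
    "mp4": lambda b: b[4:8] == b"ftyp",
}

# Extensions that are aliases for a format named above.
EXTENSION_ALIASES = {"jpg": "jpeg", "m4v": "mp4"}


def sniff_format(data: bytes):
    """Return the detected format name, or None if no signature matches."""
    for name, matches in SIGNATURES.items():
        if matches(data):
            return name
    return None


def extension_matches_content(filename: str, data: bytes) -> bool:
    """True only when the content is a known format that agrees with the extension."""
    ext = filename.rsplit(".", 1)[-1].lower() if "." in filename else ""
    ext = EXTENSION_ALIASES.get(ext, ext)
    detected = sniff_format(data)
    return detected is not None and detected == ext
```

Unknown formats fail the check by design, so anything not explicitly recognized is rejected rather than passed through.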

Access Control and Monitoring

- Principle of least privilege for AI agent permissions

- Real-time activity logging across all API interactions

- Anomaly detection for unusual cross-modal data access patterns
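
Least privilege for AI agents amounts to a deny-by-default check in front of every tool call. In the sketch below, the tool names, scope strings, and `ScopeError` type are all hypothetical and chosen only to illustrate the pattern; they do not correspond to any real CRM API.

```python
class ScopeError(PermissionError):
    """Raised when an agent requests a tool outside its granted scopes."""


# Hypothetical mapping from tool name to the single scope it requires.
TOOL_SCOPES = {
    "read_contact": "crm.contacts.read",
    "update_contact": "crm.contacts.write",
    "export_report": "crm.reports.export",
}


def authorize(agent_scopes, tool_name):
    """Return the required scope if granted; deny unknown tools and missing scopes."""
    required = TOOL_SCOPES.get(tool_name)
    if required is None or required not in agent_scopes:
        raise ScopeError(f"{tool_name!r} denied")
    return required
```

Treating an unregistered tool the same as a missing scope means a newly exposed endpoint stays unreachable until someone grants it explicitly.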

Model Security

- Adversarial training to improve robustness against crafted inputs

- Model versioning with rollback capabilities for compromised systems

- Federated learning to reduce centralized attack surfaces

Regular red team exercises specifically targeting multimodal AI systems help identify novel attack vectors before threat actors exploit them.

What This Means

The expansion of multimodal AI capabilities fundamentally alters the enterprise threat landscape by introducing attack vectors that span multiple data types simultaneously. Organizations deploying vision-language models, video processing systems, and integrated AI platforms face sophisticated threats that traditional security tools cannot address.

Security teams must evolve beyond text-based threat detection to implement comprehensive multimodal security frameworks. This requires significant investment in new detection technologies, updated incident response procedures, and specialized training for security personnel.

The convergence of AI capabilities with enterprise systems creates both unprecedented efficiency gains and substantial security risks. Organizations that fail to adapt their security postures will face increased vulnerability to data breaches, intellectual property theft, and system manipulation through AI-powered attacks.

FAQ

What makes multimodal AI more vulnerable than traditional AI systems?

Multimodal AI processes multiple data types (text, images, video, audio) simultaneously, creating attack surfaces across each modality. Attackers can exploit weaknesses in one input type to compromise the entire system, making detection and prevention significantly more complex.

How can organizations protect against visual prompt injection attacks?

Implement multi-layered input validation that analyzes both textual and visual elements, deploy adversarial detection systems trained on multimodal attacks, and establish strict access controls with comprehensive logging for all AI interactions.

What are the key privacy risks with vision-language models in enterprise settings?

VLMs can extract sensitive information from visual backgrounds, correlate data across different modalities to reveal personal details, and potentially leak intellectual property through generated content. Organizations must implement data minimization practices and differential privacy techniques.
