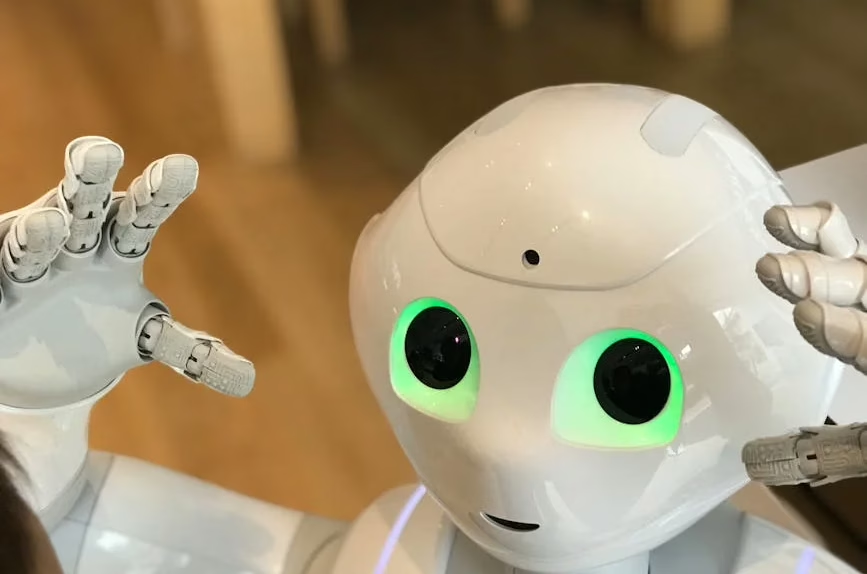

Hugging Face launched an App Store for its $299 Reachy Mini robot on Thursday, featuring over 200 community-built applications available for free download. The New York City startup, known for hosting open-source AI models, has sold approximately 10,000 Reachy Mini units since the robot’s July 2024 debut.

The Reachy Mini App Store represents the first dedicated application marketplace for physical robots, breaking away from smartphone-centered app ecosystems. According to Hugging Face’s announcement, users can download applications without monetization requirements for developers — at least initially.

Open Source Development Platform

The App Store integrates with Hugging Face’s existing AI-powered agent called “ML Intern,” enabling users without engineering or coding backgrounds to build custom robotics applications. Clément Delangue, CEO and co-founder of Hugging Face, emphasized the removal of traditional “roboticist” barriers in developing functional robotics software.

The Reachy Mini robot features built-in camera eyes, speaker, and microphone capabilities within a stationary desktop form factor. Users can create applications that leverage these hardware components through Hugging Face’s development tools.

Hugging Face acquired Pollen Robotics in April 2024, leading to the Reachy Mini’s development as an accessible entry point into robotics development. The acquisition strategy focused on democratizing robotics through open-source tools and affordable hardware.

Security Challenges in Open Source AI

Security researchers have identified significant vulnerabilities in open-source AI platforms, including Hugging Face. Acronis reported that threat actors are distributing malware through trojanized files on AI distribution platforms, exploiting user trust in these repositories.

On ClawHub, researchers discovered nearly 600 malicious skills across 13 developer accounts designed to distribute trojans, cryptominers, and information stealers. Two accounts contained the majority of malicious content: hightower6eu with 334 malicious skills and sakaen736jih with 199.

The attacks rely on social engineering rather than compromising AI agents directly. Threat actors embed hidden instructions through indirect prompt injection, causing AI systems to execute malicious code without user awareness.

Supply Chain Security Concerns

Cisco released an open-source Model Provenance Kit on Thursday to address security issues with third-party AI models from repositories like Hugging Face. The tool helps organizations track model changes and verify developer claims about model sources, vulnerabilities, and training biases.

Cisco’s announcement highlighted that enterprises often lack visibility into AI model lineage, making incident response and remediation difficult. Organizations face licensing and regulatory risks when they cannot document AI system usage for government compliance requirements.

The University of Hong Kong’s CLI-Anything tool, which has gained over 30,000 GitHub stars since March, demonstrates how legitimate open-source tools can create new attack vectors. The tool generates SKILL.md files that researchers found can be weaponized for agent-level poisoning attacks.

Enterprise Adoption Challenges

Traditional security tools struggle to detect malicious instructions embedded in AI agent skill definitions. Cisco’s engineering team noted that static application security testing (SAST) scanners analyze source code syntax while software composition analysis (SCA) tools focus on known vulnerabilities — neither addresses instruction-layer threats.

Organizations using models from repositories face potential deployment of poisoned or biased models without verification mechanisms. These vulnerabilities can propagate through internal chatbots, agent applications, and customer-facing tools.

The lack of model provenance tracking creates compliance risks as governments increase AI system documentation requirements. Supply chain integrity becomes compromised when organizations cannot verify model developer claims.

What This Means

Hugging Face’s robot App Store represents a significant expansion of open-source AI beyond software into physical robotics, potentially accelerating robotics adoption through accessible development tools. However, the security challenges highlighted by recent research underscore the need for enhanced verification and provenance tracking in open-source AI ecosystems.

The emergence of instruction-layer attacks targeting AI agents reveals gaps in traditional security tooling that organizations must address as they adopt open-source AI models. Success in the open-source AI space will increasingly depend on balancing accessibility with robust security frameworks.

FAQ

What makes the Hugging Face robot App Store different from smartphone app stores?

The Reachy Mini App Store focuses on physical robot applications rather than smartphone software, with no current monetization for developers and integration with AI development tools for non-technical users.

How do security threats affect open-source AI platforms like Hugging Face?

Threat actors exploit user trust by uploading malicious files disguised as legitimate AI models or applications, using social engineering and indirect prompt injection to execute malware on user systems.

Why are traditional security tools inadequate for AI model repositories?

Existing SAST and SCA tools analyze source code and known vulnerabilities but cannot detect malicious instructions embedded in AI agent skill definitions or model metadata, creating new attack vectors that require specialized detection methods.

Related news

Sources

- The app store for robots has arrived: Hugging Face launches open-source Reachy Mini App Store with 200+ apps – VentureBeat

- Fine-Tuning Your First Large Language Model (LLM) with PyTorch and Hugging Face – HuggingFace Blog

- Hugging Face, ClawHub Abused for Malware Distribution – SecurityWeek

- Cisco Releases Open Source Tool for AI Model Provenance – SecurityWeek