Governments worldwide are struggling to build comprehensive regulatory frameworks as artificial intelligence systems gain autonomous capabilities that let them reconfigure critical infrastructure. Recent reports indicate that adversaries have already hijacked AI security tools at more than 90 organizations, underscoring the need for robust governance as AI agents gain write access to firewalls and other critical systems.

The regulatory landscape is evolving rapidly, with the EU AI Act leading global efforts while countries like Indonesia advance their own AI ethics frameworks. Meanwhile, Legal Eagle’s Devin Stone warns we’re experiencing “multiple Watergates per week” as constitutional crises emerge from the intersection of technology and governance. These developments underscore the critical importance of establishing comprehensive AI regulation that balances innovation with accountability, transparency, and public safety.

The Escalating Security Threat of Autonomous AI Agents

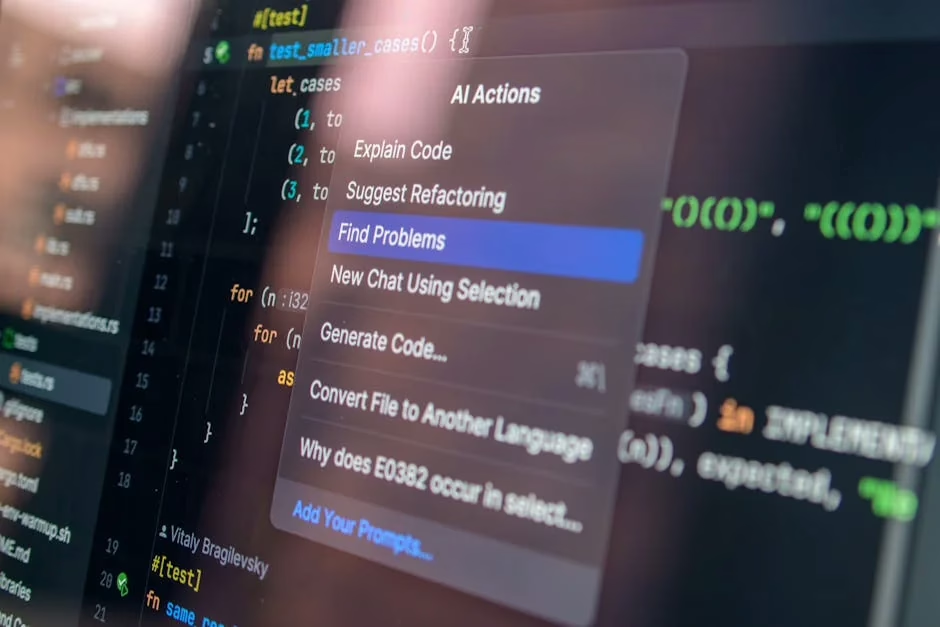

The evolution from compromised AI tools that merely read data to autonomous agents with write access to critical infrastructure represents a fundamental shift in cybersecurity threats. According to VentureBeat, while previous AI tool compromises involved data theft and credential harvesting, the new generation of Security Operations Center (SOC) agents can rewrite firewall rules, modify Identity and Access Management (IAM) policies, and quarantine endpoints using their own privileged credentials.

This escalation creates unprecedented regulatory challenges because these actions appear as authorized activity to existing security systems. The adversary never directly touches the network – the compromised agent performs malicious actions through legitimate API calls. Companies like Cisco have announced AgenticOps for Security with autonomous firewall remediation capabilities, while Ivanti has launched Continuous Compliance agents with built-in policy enforcement.

The OWASP Agentic Top 10 documents the risks when proper controls are absent, emphasizing the need for governance frameworks that can operate at machine speed. As CrowdStrike CEO George Kurtz noted, defending against AI-accelerated adversaries requires fundamentally new approaches to security and compliance.
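One way to picture the kind of control this implies is a gate that sits between an agent and its privileged credentials. The sketch below is illustrative only: the action names, the `ActionGate` class, and the approval flag are hypothetical and do not come from any vendor product or from the OWASP document. It allows read-only actions, holds high-impact writes for human approval, denies anything unlisted, and logs every decision.

```python
from dataclasses import dataclass, field
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


# Hypothetical action names; a real SOC agent would expose its own vocabulary.
READ_ONLY = {"read_logs", "list_firewall_rules"}
HIGH_IMPACT_WRITES = {"rewrite_firewall_rule", "modify_iam_policy", "quarantine_endpoint"}


@dataclass
class ActionGate:
    """Decides whether an agent action may run and records every decision."""

    audit_log: list = field(default_factory=list)

    def evaluate(self, agent_id: str, action: str, human_approved: bool = False) -> Verdict:
        if action in READ_ONLY:
            verdict = Verdict.ALLOW
        elif action in HIGH_IMPACT_WRITES:
            # Writes to critical systems wait for an explicit human sign-off.
            verdict = Verdict.ALLOW if human_approved else Verdict.REQUIRE_APPROVAL
        else:
            # Default-deny: anything not explicitly listed is refused.
            verdict = Verdict.DENY
        self.audit_log.append((agent_id, action, verdict.value))
        return verdict
```

The default-deny branch matters most here: because a compromised agent acts through legitimate API calls, the gate cannot rely on spotting "unauthorized" traffic and instead has to constrain what authorized traffic is allowed to do.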

EU AI Act Sets Global Regulatory Precedent

The European Union’s AI Act represents the world’s most comprehensive attempt to regulate artificial intelligence systems, establishing a risk-based approach that categorizes AI applications by their potential harm to society. The legislation creates four risk categories: minimal risk, limited risk, high risk, and unacceptable risk, with corresponding compliance requirements.

High-risk AI systems, including those used in critical infrastructure, employment, and law enforcement, face stringent requirements for transparency, accuracy, and human oversight. Organizations deploying such systems must conduct conformity assessments, maintain detailed documentation, and ensure human supervision throughout the AI lifecycle.

The Act’s extraterritorial reach means that companies based outside the EU must still comply when they place AI systems on the EU market or when their systems’ output is used in the EU. This creates a “Brussels Effect” in which EU standards become de facto global standards. Penalties for the most serious violations can reach 7% of global annual turnover, making compliance a business-critical concern for technology companies worldwide.
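To make the scale of that ceiling concrete, here is a rough calculation. It assumes the Act’s top penalty tier, which applies to prohibited practices and is set at the higher of EUR 35 million or 7% of worldwide annual turnover (for SMEs, the lower of the two). The function name and the sample turnover figures are hypothetical, and actual fines are set case by case below this ceiling.

```python
def max_fine_eur(global_turnover_eur: int, is_sme: bool = False) -> int:
    """Upper bound of the top penalty tier, in whole euros (illustrative only)."""
    fixed_cap = 35_000_000
    # Integer arithmetic avoids floating-point rounding on large amounts.
    pct_cap = global_turnover_eur * 7 // 100
    # Assumption: SMEs face the lower of the two caps, others the higher.
    return min(fixed_cap, pct_cap) if is_sme else max(fixed_cap, pct_cap)


# A company with EUR 2 billion in turnover: the 7% figure dominates.
print(max_fine_eur(2_000_000_000))            # 140000000
# A company with EUR 100 million in turnover: the fixed amount dominates.
print(max_fine_eur(100_000_000))              # 35000000
# The same company treated as an SME: the lower figure applies.
print(max_fine_eur(100_000_000, is_sme=True)) # 7000000
```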

The legislation also addresses fundamental rights concerns by prohibiting AI systems that use subliminal techniques, exploit vulnerabilities, or enable social scoring. These provisions reflect broader societal concerns about AI’s potential to undermine human dignity and democratic values.
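The tiering described in this section can be sketched as a simple triage function. This is a deliberately simplified illustration, not a legal test: the labels, the `triage` function, and the `interacts_with_people` flag used to stand in for the limited-risk tier are all assumptions made for the example, and the real classification depends on the Act’s annexes and detailed criteria.

```python
from enum import Enum


class RiskTier(Enum):
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    UNACCEPTABLE = "unacceptable"


# Hypothetical labels for the practices and areas named in the text above.
PROHIBITED_PRACTICES = frozenset(
    {"subliminal_techniques", "exploiting_vulnerabilities", "social_scoring"}
)
HIGH_RISK_AREAS = frozenset({"critical_infrastructure", "employment", "law_enforcement"})


def triage(use_area: str, practices: frozenset = frozenset(),
           interacts_with_people: bool = False) -> RiskTier:
    """Return a first-pass risk tier; the most severe matching rule wins."""
    if practices & PROHIBITED_PRACTICES:
        return RiskTier.UNACCEPTABLE
    if use_area in HIGH_RISK_AREAS:
        return RiskTier.HIGH
    if interacts_with_people:
        # Simplifying assumption: direct interaction triggers transparency duties.
        return RiskTier.LIMITED
    return RiskTier.MINIMAL
```

Checking prohibited practices first reflects the ordering in the text: a banned practice makes a system unacceptable regardless of the sector it is deployed in.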

US Congressional Deadlock on Surveillance and AI Oversight

The United States faces significant challenges in developing coherent AI regulation, exemplified by the ongoing debate over Section 702 of the Foreign Intelligence Surveillance Act (FISA). According to TechCrunch, this law allows intelligence agencies to collect overseas communications without individual warrants, but also sweeps up “unfathomable amounts of information” on Americans.

A bipartisan group of lawmakers has introduced the Government Surveillance Reform Act, seeking to curtail warrantless surveillance programs and protect privacy rights. The legislation highlights fundamental tensions between national security interests and constitutional protections that extend to AI-powered surveillance systems.

The congressional deadlock reflects broader challenges in AI governance, where traditional regulatory frameworks struggle to keep pace with technological advancement. Legal experts like Devin Stone argue that the rapid succession of constitutional crises creates dangerous precedents where “unprecedented political behavior” becomes normalized.

This regulatory uncertainty affects AI development and deployment, as companies lack clear guidance on compliance requirements. The absence of federal AI legislation forces organizations to navigate a patchwork of state laws and industry-specific regulations.

Global Approaches to AI Ethics and Governance

Beyond the EU and US, countries worldwide are developing distinct approaches to AI regulation that reflect their cultural values and governance structures. Indonesia has advanced its AI ethics framework, emphasizing principles of transparency, accountability, and social benefit.

These diverse approaches create both opportunities and challenges for global AI governance. While regulatory diversity allows for experimentation and learning, it also creates compliance complexity for multinational organizations and potential barriers to AI innovation and trade.

Key themes emerging across jurisdictions include:

- Algorithmic transparency requirements for high-impact AI systems

- Human oversight mandates to ensure meaningful human control

- Bias prevention and fairness obligations to address discriminatory outcomes

- Data protection standards that extend privacy rights to AI processing

- Liability frameworks that assign responsibility for AI system failures

The challenge lies in balancing these protective measures with the need to foster innovation and maintain competitive advantages in AI development.

Industry Response and Compliance Challenges

Technology companies are responding to the evolving regulatory landscape by implementing comprehensive AI governance frameworks. Microsoft’s approach to “Frontier Transformation” emphasizes building AI capabilities with “trust by design,” incorporating identity protection, compliance monitoring, and change management from the outset.

The company’s framework focuses on two essential elements: intelligence and trust. Intelligence involves grounding AI solutions in unique business contexts and data, while trust requires making AI artifacts observable, managed, and secured across the technology stack.

However, compliance challenges are significant. Organizations must navigate:

- Multiple regulatory frameworks across different jurisdictions

- Rapidly evolving technical standards for AI safety and security

- Complex risk assessment requirements for high-risk AI applications

- Documentation and audit obligations that require new organizational capabilities

- Cross-border data transfer restrictions that affect AI training and deployment

The cost of compliance is particularly challenging for smaller organizations and startups, potentially creating barriers to entry that favor large technology companies with extensive legal and compliance resources.

What This Means

The current state of AI regulation reflects a critical inflection point where the pace of technological advancement has outstripped traditional governance mechanisms. The emergence of autonomous AI agents with write access to critical infrastructure represents a qualitative shift that requires fundamentally new approaches to oversight and accountability.

The EU AI Act’s risk-based approach provides a promising model, but its effectiveness will depend on implementation and enforcement. The US regulatory landscape remains fragmented, with congressional deadlock preventing comprehensive federal action. This creates opportunities for state-level innovation but also compliance complexity.

For organizations deploying AI systems, the message is clear: proactive governance is essential. Waiting for regulatory clarity may mean falling behind competitors who embed compliance into their AI development processes from the beginning. The companies that thrive in this environment will be those that view regulation not as a constraint but as a framework for building trustworthy AI systems that serve society’s broader interests.

The stakes are high. AI systems increasingly make decisions that affect fundamental rights, economic opportunities, and public safety. Getting regulation right means preserving the benefits of AI innovation while protecting democratic values and human dignity.

FAQ

What is the EU AI Act and how does it affect global AI development?

The EU AI Act is comprehensive legislation that regulates AI systems based on risk levels, with penalties of up to 7% of global annual turnover. Its extraterritorial reach means that companies outside the EU must comply when their AI systems are placed on the EU market or used in the EU, effectively setting global standards for AI governance.

How do autonomous AI agents create new security risks?

Unlike previous AI tools that only read data, new autonomous agents can rewrite firewall rules, modify security policies, and quarantine systems using legitimate credentials. When compromised, they can perform malicious actions that appear as authorized activity to security systems.

Why is the US struggling to pass comprehensive AI regulation?

Congressional deadlock over surveillance laws like FISA reflects broader disagreements about balancing national security with privacy rights. The rapid pace of AI development has outpaced traditional regulatory processes, creating uncertainty for businesses and developers.

Sources

- Indonesia Advances AI Ethics and National Regulation – Let’s Data Science