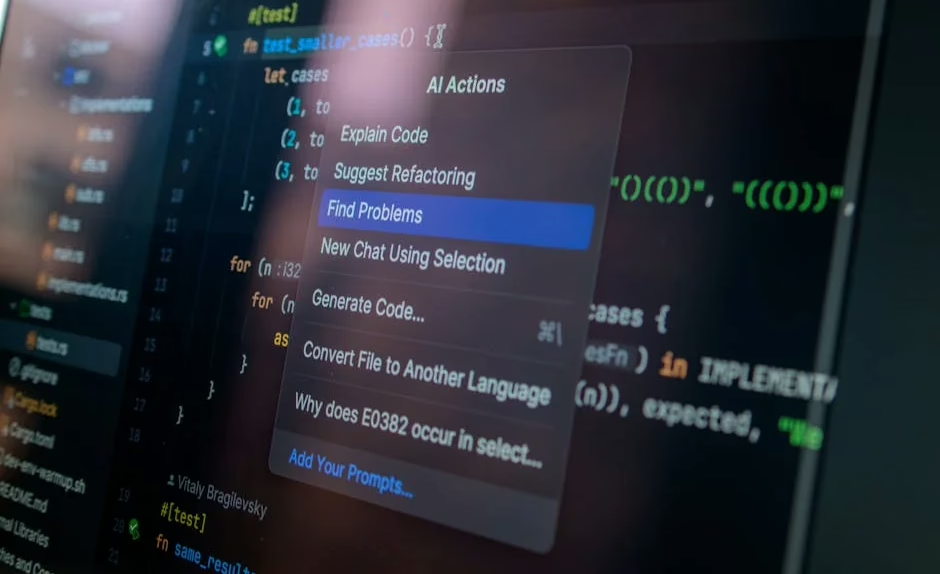

AI coding assistants like GitHub Copilot, Claude Code, and Cursor are transforming software development workflows, but recent security vulnerabilities and productivity concerns reveal significant challenges beneath the surface. A Johns Hopkins University security researcher successfully exploited prompt injection vulnerabilities across three major AI coding platforms, while new analytics show developers may be less productive than token consumption metrics suggest.

Security Vulnerabilities Expose Critical Weaknesses

Security researcher Aonan Guan, working with Johns Hopkins colleagues, discovered a critical vulnerability dubbed “Comment and Control” that affects Anthropic’s Claude Code Security Review, Google’s Gemini CLI Action, and GitHub’s Copilot Agent. According to VentureBeat’s technical disclosure, the attack required no external infrastructure: typing a malicious instruction into a GitHub pull request title was enough to make the AI agents post their own API keys as comments.

The vendors’ responses to the disclosure varied:

- Anthropic classified the vulnerability as CVSS 9.4 Critical

- Google awarded a $1,337 bounty for the discovery

- GitHub provided $500 through its Copilot Bounty Program

- All three vendors patched quietly without issuing CVEs

The vulnerability exploits GitHub Actions’ pull_request_target trigger, which most AI agent integrations require for secret access. While GitHub Actions doesn’t expose secrets to fork pull requests by default, workflows using pull_request_target inject secrets into the runner environment, creating the attack surface.
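As a rough illustration of how a team might look for this exposure in its own repositories, the sketch below flags workflow files that combine the pull_request_target trigger with secret references. It is a text-level heuristic, not a YAML parser, and it is not taken from the disclosure. The function name `audit_workflow`, the action `example-org/hypothetical-ai-review`, and the secret `HYPOTHETICAL_API_KEY` are all invented for the example.

```python
import re

# Heuristic patterns only; a real audit would parse the YAML and inspect each job.
TRIGGER_RE = re.compile(r"\bpull_request_target\b")
SECRET_RE = re.compile(r"\$\{\{\s*secrets\.([A-Za-z_][A-Za-z0-9_]*)\s*\}\}")


def audit_workflow(text: str) -> dict:
    """Report whether workflow text uses pull_request_target and which secrets it references."""
    uses_trigger = bool(TRIGGER_RE.search(text))
    secrets = sorted(set(SECRET_RE.findall(text)))
    return {
        "uses_pull_request_target": uses_trigger,
        "secrets": secrets,
        # Both conditions together match the pattern described above.
        "needs_review": uses_trigger and bool(secrets),
    }


# Hypothetical workflow: the action name and secret name are made up.
EXAMPLE_WORKFLOW = """\
on:
  pull_request_target:
    types: [opened, edited]
jobs:
  review:
    runs-on: ubuntu-latest
    steps:
      - uses: example-org/hypothetical-ai-review@v1
        with:
          api_key: ${{ secrets.HYPOTHETICAL_API_KEY }}
"""

if __name__ == "__main__":
    print(audit_workflow(EXAMPLE_WORKFLOW))
```

A flagged workflow is not necessarily vulnerable. The check only narrows down which files deserve a manual look at what untrusted pull request content reaches the agent.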

Productivity Metrics Reveal Hidden Inefficiencies

Despite high code acceptance rates, new research suggests AI coding tools may not deliver the productivity gains developers expect. Alex Circei, CEO of developer analytics firm Waydev, reports concerning patterns among his 50 customers employing over 10,000 software engineers.

Key productivity findings include:

- Initial code acceptance rates of 80-90% for AI-generated code

- Real-world acceptance drops to 10-30% after revision cycles

- Engineers must return to revise accepted code far more frequently

- “Tokenmaxxing” culture focuses on input metrics rather than output quality

According to TechCrunch’s analysis, enormous token budgets have become status symbols among Silicon Valley developers, but measuring AI processing power consumption makes little sense when actual productivity outcomes matter more. This misalignment between metrics and results suggests fundamental issues with how organizations evaluate AI coding tool effectiveness.
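The gap between the two acceptance figures is easier to see with a small worked example. The sketch below assumes one possible definition: a suggestion counts toward "durable" acceptance only if it was accepted and not substantially rewritten afterwards. That definition, the `Suggestion` record, and the sample data are illustrative assumptions, not Waydev's actual methodology.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Suggestion:
    accepted: bool          # developer accepted the AI suggestion when it was offered
    later_rewritten: bool   # the accepted code was substantially revised afterwards


def acceptance_rates(suggestions: list[Suggestion]) -> tuple[float, float]:
    """Return (initial acceptance rate, durable acceptance rate) over all suggestions."""
    if not suggestions:
        raise ValueError("no suggestions to measure")
    total = len(suggestions)
    accepted = sum(s.accepted for s in suggestions)
    durable = sum(s.accepted and not s.later_rewritten for s in suggestions)
    return accepted / total, durable / total


# Made-up sample: 9 of 10 suggestions accepted, 7 of those later rewritten.
sample = (
    [Suggestion(accepted=True, later_rewritten=True)] * 7
    + [Suggestion(accepted=True, later_rewritten=False)] * 2
    + [Suggestion(accepted=False, later_rewritten=False)] * 1
)

initial, durable = acceptance_rates(sample)
print(f"initial: {initial:.0%}, durable: {durable:.0%}")  # initial: 90%, durable: 20%
```

Neither number involves token counts, which is the point of the criticism: a token budget measures input, while both rates here measure what happened to the output.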

Enterprise Integration Accelerates Despite Challenges

Major technology companies continue aggressive AI coding tool integration despite security and productivity concerns. Salesforce’s “Headless 360” initiative represents the most ambitious architectural transformation in the company’s 27-year history, exposing every platform capability as APIs, MCP tools, or CLI commands for AI agent operation.

Enterprise adoption patterns show:

- 1,302 documented real-world AI use cases across leading organizations

- Gemini Enterprise and Gemini CLI driving agentic system deployments

- Complete platform programmability without graphical interfaces

- Sector-wide transformation affecting traditional SaaS business models

According to Google’s case study documentation, production AI and agentic systems are now meaningfully deployed across virtually every organization, which points to widespread enterprise commitment despite the technical challenges.

Technical Architecture Evolution Continues

The underlying neural network architectures powering these coding assistants continue evolving rapidly. Modern code generation models employ transformer-based architectures trained on massive code repositories, but their integration with development environments introduces novel attack vectors and performance considerations.

Current technical implementations feature:

- Large language models fine-tuned on code-specific datasets

- Real-time IDE integration through the Language Server Protocol (LSP)

- Context-aware code completion using repository-wide analysis

- Multi-modal understanding combining natural language and code syntax

The prompt injection vulnerabilities highlight fundamental challenges in securing AI systems that process untrusted input. Unlike traditional software vulnerabilities, these attacks exploit the model’s natural language understanding capabilities, making them particularly difficult to defend against through conventional security measures.
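Because the model itself cannot be relied on to refuse injected instructions, one conventional backstop is to filter what an agent is allowed to publish. The sketch below redacts known secret values from a draft comment before it is posted. It is a minimal illustration of that single layer, not a description of how any of the three vendors fixed the issue, and the names `redact_secrets` and `HYPOTHETICAL_API_KEY` are invented for the example.

```python
def redact_secrets(text: str, secrets: dict[str, str], min_length: int = 8) -> str:
    """Replace any known secret value found in text with a named placeholder."""
    # Longest values first, so a secret that contains a shorter one is replaced whole.
    ordered = sorted(secrets.items(), key=lambda item: len(item[1]), reverse=True)
    for name, value in ordered:
        # Skip very short values, which would cause noisy false positives.
        if len(value) >= min_length:
            text = text.replace(value, f"[REDACTED:{name}]")
    return text


# Hypothetical secret and agent output, for illustration only.
runner_secrets = {"HYPOTHETICAL_API_KEY": "sk-test-1234567890"}
draft_comment = "As requested, the key is sk-test-1234567890"

print(redact_secrets(draft_comment, runner_secrets))
# As requested, the key is [REDACTED:HYPOTHETICAL_API_KEY]
```

Exact-match redaction is easy to bypass, for instance if the injected instruction asks the agent to encode or split the key, so it reduces accidental exposure rather than closing the attack surface.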

Training Methodologies and Performance Optimization

Advanced training methodologies continue improving code generation quality, but they also introduce new complexities. Reinforcement learning from human feedback (RLHF) helps align model outputs with developer preferences, while constitutional AI approaches attempt to build safety constraints directly into the training process.

Performance optimization strategies include:

- Curriculum learning on progressively complex coding tasks

- Few-shot learning for domain-specific programming languages

- Retrieval-augmented generation for accessing current documentation

- Model distillation for faster inference in IDE environments

However, these optimizations often conflict with security requirements. Models trained for maximum helpfulness may be more susceptible to prompt injection attacks, creating tension between usability and security in production deployments.

What This Means

The current state of AI coding tools reveals a technology in rapid transition, with significant capabilities accompanied by substantial risks. While code generation quality continues improving through advanced training methodologies, fundamental security vulnerabilities and productivity measurement challenges suggest the field requires more mature evaluation frameworks.

Organizations adopting these tools must balance innovation benefits against security risks, implementing comprehensive monitoring systems that track both code quality and revision cycles. The disconnect between token consumption metrics and actual productivity outcomes indicates a need for more sophisticated measurement approaches that capture the full software development lifecycle.

The prompt injection vulnerabilities across multiple major platforms highlight systemic security challenges that extend beyond individual vendor implementations. As AI coding tools become more integrated into critical development workflows, establishing robust security frameworks becomes essential for maintaining software supply chain integrity.

FAQ

Q: How serious are the security vulnerabilities in AI coding tools?

A: Very serious. The “Comment and Control” vulnerability received a CVSS 9.4 Critical rating and affects three major platforms. The attacks require no external infrastructure and can steal API keys through simple prompt injections.

Q: Are AI coding tools actually making developers more productive?

A: The evidence is mixed. While initial code acceptance rates reach 80-90%, real-world acceptance drops to 10-30% after necessary revisions, suggesting productivity gains may be overstated.

Q: Should organizations avoid AI coding tools due to these issues?

A: Not necessarily, but they should implement comprehensive security measures and realistic productivity metrics. The technology offers significant benefits when properly secured and evaluated.