AI coding assistants including GitHub Copilot, Claude Code, and OpenAI Codex suffered a string of critical security breaches over a nine-month span, with attackers consistently targeting authentication credentials rather than the AI models themselves. According to VentureBeat, six research teams disclosed the exploits, revealing a systemic weakness in how AI coding tools handle access to production systems.

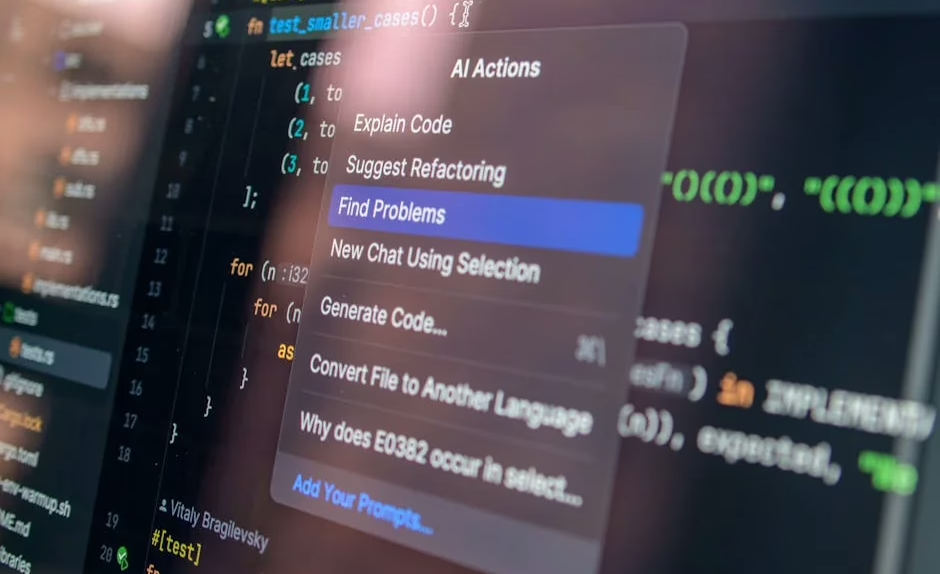

The pattern emerged clearly: attackers exploited AI agents that held credentials, executed actions, and authenticated to production systems without human session anchoring. BeyondTrust demonstrated this on March 30 when researchers proved a crafted GitHub branch name could steal Codex’s OAuth token in cleartext — a vulnerability OpenAI classified as Critical P1.
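The report does not describe the exploit's mechanics. Assuming it belongs to the familiar class in which an untrusted ref name reaches a shell or similar interpreter, a minimal defensive sketch might look like the following. The function names and the allowlist are hypothetical, and the allowlist is deliberately stricter than what Git itself permits.

```python
import re

# Conservative allowlist (an assumption, stricter than Git's own ref rules):
# letters, digits, dot, underscore, slash, hyphen.
_SAFE_REF = re.compile(r"[A-Za-z0-9._/-]{1,200}")


def is_safe_branch_name(name: str) -> bool:
    """Return True only for names built from allowlisted characters."""
    if not _SAFE_REF.fullmatch(name):
        return False
    # Reject option-like names and path tricks.
    if name.startswith(("-", "/", ".")) or ".." in name or name.endswith("/"):
        return False
    return True


def build_checkout_argv(name: str) -> list[str]:
    """Build an argument vector for subprocess use; never a shell string."""
    if not is_safe_branch_name(name):
        raise ValueError(f"refusing untrusted branch name: {name!r}")
    # Intended for subprocess.run(argv) WITHOUT shell=True, so the name is
    # never parsed by a shell even if validation were to miss something.
    return ["git", "checkout", name]
```

The two layers are independent on purpose: validation narrows what is accepted, and the argument vector keeps whatever is accepted away from a shell parser.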

The Credential Attack Surface Expands

The problem first drew wide attention at Black Hat USA 2025, where Zenity CTO Michael Bargury demonstrated zero-click credential hijacking across ChatGPT, Microsoft Copilot Studio, Google Gemini, Salesforce Einstein, and Cursor. Nine months later, these same credential pathways had become the primary attack vector.

“Enterprises believe they’ve ‘approved’ AI vendors, but what they’ve actually approved is an interface, not the underlying system,” Merritt Baer, CSO at Enkrypt AI and former Deputy CISO at AWS, told VentureBeat in an exclusive interview. “The credentials underneath the interface are the breach.”

Two days after the Codex disclosure, Anthropic’s Claude Code source code leaked onto the public npm registry. Adversa quickly discovered that Claude Code silently ignored its own security deny rules once a command exceeded 50 subcommands, creating another credential exposure pathway.
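The reported behaviour is a fail-open pattern: once a command grows past a size limit, the checks stop applying. A fail-closed alternative can be sketched as below. This is not Claude Code's implementation; `MAX_SUBCOMMANDS`, `DENY_PREFIXES`, `split_subcommands`, and `evaluate` are hypothetical names, and the splitter is deliberately naive (it ignores shell quoting).

```python
import re
from typing import Literal

MAX_SUBCOMMANDS = 50  # cap mirrors the number in the report
DENY_PREFIXES = ("curl ", "rm -rf", "cat ~/.ssh")  # hypothetical deny rules

Decision = Literal["allow", "deny"]


def split_subcommands(command: str) -> list[str]:
    """Naive split on ;, &&, || and |. Ignores quoting; illustration only."""
    parts = re.split(r"\s*(?:;|&&|\|\||\|)\s*", command)
    return [p for p in parts if p]


def evaluate(command: str) -> Decision:
    subs = split_subcommands(command)
    # Fail closed: a command too long to check in full is denied,
    # rather than passed through unchecked.
    if len(subs) > MAX_SUBCOMMANDS:
        return "deny"
    for sub in subs:
        if sub.startswith(DENY_PREFIXES):
            return "deny"
    return "allow"
```

The design choice that matters is the branch taken when analysis is incomplete: denying may inconvenience a legitimate long command, but it cannot be used to smuggle a denied subcommand past the rules.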

Student Usage Patterns Reveal Deeper Issues

Beyond security vulnerabilities, research into student-AI interactions reveals concerning patterns in how learners use coding assistants. An arXiv study analyzing 19,418 interaction turns from 110 undergraduate students found significant differences between high- and low-performing programmers.

Top-performing students engaged in “instrumental help-seeking” — using AI for inquiry and exploration that elicited tutor-like responses. Low performers relied on “executive help-seeking,” frequently delegating entire tasks and prompting AI to provide ready-made solutions without understanding.

The findings suggest current AI coding tools mirror student intent rather than optimizing for learning. “To evolve from tools to teammates, AI systems must move beyond passive compliance,” the researchers concluded. They argued for pedagogically aligned design that detects unproductive delegation and steers interactions toward inquiry-based learning.
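As a rough illustration of what detecting delegation could mean in practice, the toy sketch below labels a prompt by counting cue phrases and picks a response mode accordingly. The cue lists and function names are invented for this example and are not taken from the study; a real system would need a validated classifier rather than keyword matching.

```python
# Hypothetical cue phrases, chosen only for illustration.
EXECUTIVE_CUES = (
    "write the code",
    "solve this",
    "give me the answer",
    "do it for me",
    "complete this assignment",
)
INSTRUMENTAL_CUES = ("why", "how does", "explain", "what is the difference", "hint")


def classify_request(prompt: str) -> str:
    """Label a prompt as 'executive', 'instrumental', or 'unclear'."""
    text = prompt.lower()
    executive = sum(cue in text for cue in EXECUTIVE_CUES)
    instrumental = sum(cue in text for cue in INSTRUMENTAL_CUES)
    if executive > instrumental:
        return "executive"
    if instrumental > executive:
        return "instrumental"
    return "unclear"


def respond_mode(prompt: str) -> str:
    """Steer delegation toward inquiry instead of complying outright."""
    if classify_request(prompt) == "executive":
        return "ask_guiding_question"
    return "answer"
```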

Infrastructure Solutions Emerge

As security concerns mount, new infrastructure approaches aim to address development workflow vulnerabilities. Runpod launched Flash, an open-source Python tool designed to eliminate Docker containerization barriers in serverless GPU development.

The MIT-licensed tool specifically targets AI coding assistants like Claude Code, Cursor, and Cline, enabling them to orchestrate remote hardware with reduced attack surface. “We make it as easy as possible to bring together the cosmos of different AI tooling in a function call,” Runpod CTO Brennen Smith told VentureBeat.

Flash supports “polyglot” pipelines that route data preprocessing to CPU workers before transferring workloads to high-end GPUs, reducing credential exposure points. The platform includes low-latency load-balanced HTTP APIs and queue-based batch processing designed for production environments.
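The staged routing described here can be pictured with a generic sketch that uses only the Python standard library. It does not use Flash's API, which the article does not show; `preprocess_on_cpu` and `infer_on_gpu` are stand-in functions, and the two thread pools merely model two classes of worker.

```python
from concurrent.futures import ThreadPoolExecutor


def preprocess_on_cpu(text: str) -> list[str]:
    """Stand-in for a CPU stage: normalise and tokenise."""
    return text.lower().split()


def infer_on_gpu(tokens: list[str]) -> int:
    """Stand-in for a GPU stage: here it only counts tokens."""
    return len(tokens)


def run_pipeline(batch: list[str]) -> list[int]:
    # Separate pools model separate worker classes; map() keeps input order.
    with ThreadPoolExecutor(max_workers=4) as cpu_pool, \
         ThreadPoolExecutor(max_workers=1) as gpu_pool:
        prepared = list(cpu_pool.map(preprocess_on_cpu, batch))
        return list(gpu_pool.map(infer_on_gpu, prepared))
```

Calling `run_pipeline(["Hello World", "a b c"])` sends each string through the CPU stage first and only then hands the prepared tokens to the scarcer second-stage worker.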

Autonomous Research Capabilities Advance

Despite security challenges, AI coding capabilities continue expanding into autonomous research territory. Andrej Karpathy’s autoresearch framework demonstrates LLMs operating in continuous experimental loops, measuring impact and iterating independently.

A Towards Data Science analysis of autoresearch applied to marketing budget optimization showed promising results. The system ran dozens of experiments autonomously, discarding unsuccessful approaches and iterating on effective strategies without human intervention.
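The loop described above (propose a change, measure it, keep it only if it helps) can be sketched in a few lines. This is not the autoresearch code or the analysis from the article: the channels, response coefficients, and square-root return curve are made up for illustration, and the "experiment" is a simulated function rather than a real campaign.

```python
import math
import random

CHANNELS = ("search", "social", "email")  # hypothetical channels
RESPONSE = {"search": 3.0, "social": 2.0, "email": 1.0}  # made-up coefficients


def simulated_return(budget: dict[str, float]) -> float:
    """Toy objective with diminishing returns per channel."""
    return sum(RESPONSE[c] * math.sqrt(budget[c]) for c in CHANNELS)


def propose(budget: dict[str, float], rng: random.Random, step: float = 5.0):
    """Move a small amount of spend from one channel to another."""
    src, dst = rng.sample(CHANNELS, 2)
    shift = min(step, budget[src])  # never push a channel below zero
    candidate = dict(budget)
    candidate[src] -= shift
    candidate[dst] += shift
    return candidate


def run_experiments(start: dict[str, float], rounds: int = 200, seed: int = 0):
    rng = random.Random(seed)
    best, best_score, kept = dict(start), simulated_return(start), 0
    for _ in range(rounds):
        candidate = propose(best, rng)
        score = simulated_return(candidate)
        if score > best_score:  # keep improvements, discard everything else
            best, best_score, kept = candidate, score, kept + 1
    return best, best_score, kept
```

Because a candidate is accepted only when it scores strictly higher, the returned score can never fall below the starting point, and total spend stays constant throughout.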

“Research is now entirely the domain of autonomous swarms of AI agents running across compute cluster megastructures,” Karpathy wrote in his repository description, positioning autoresearch as the beginning of fully autonomous AI development cycles.

What This Means

The credential-focused attack pattern across major AI coding platforms exposes a fundamental architectural flaw: these tools authenticate to production systems without proper session anchoring or human oversight. Unlike traditional exploits that target application logic, these attacks bypass the AI model entirely and steal the keys to enterprise systems.

For enterprises deploying AI coding tools, the security model requires rethinking. Current “vendor approval” processes evaluate interfaces rather than underlying credential management systems. Organizations need credential isolation, session-based authentication, and human-in-the-loop verification for production system access.
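One way to read those three requirements together is a broker that keeps the long-lived secret to itself and hands agents only short-lived tokens bound to a human session and a single scope. The sketch below is a minimal in-memory illustration under that assumption; `CredentialBroker`, `SessionToken`, and the `prod:` scope prefix are hypothetical names, not any vendor's API.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class SessionToken:
    value: str
    session_id: str   # the human session this token is anchored to
    scope: str        # one narrow scope, e.g. "prod:deploy"
    expires_at: float


class CredentialBroker:
    """Issues short-lived, session-bound tokens; agents never see a long-lived secret."""

    def __init__(self, ttl_seconds: float = 300.0):
        self._ttl = ttl_seconds
        self._issued: dict[str, SessionToken] = {}

    def issue(self, session_id: str, scope: str, approved_by_human: bool) -> SessionToken:
        # Human-in-the-loop gate: production scopes need explicit approval.
        if scope.startswith("prod:") and not approved_by_human:
            raise PermissionError("production access requires human approval")
        token = SessionToken(
            value=secrets.token_urlsafe(32),
            session_id=session_id,
            scope=scope,
            expires_at=time.monotonic() + self._ttl,
        )
        self._issued[token.value] = token
        return token

    def authorize(self, value: str, session_id: str, scope: str) -> bool:
        """A token is valid only for its own session and scope, and only until expiry."""
        token = self._issued.get(value)
        return (
            token is not None
            and token.session_id == session_id
            and token.scope == scope
            and time.monotonic() < token.expires_at
        )
```

A stolen token in this model is useful only inside the session and scope it was issued for, and only until it expires, which limits the damage compared with a leaked long-lived OAuth token or API key.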

The student usage research suggests similar architectural changes for educational contexts. AI coding tools that passively comply with any request may actually harm learning outcomes by enabling cognitive offloading rather than skill development.

FAQ

What made the AI coding tool attacks so effective?

Attackers consistently targeted stored credentials rather than the AI models themselves. Tools like Codex and Claude Code held OAuth tokens and API keys that could authenticate to production systems without human session verification, creating a direct pathway to enterprise infrastructure.

How do top-performing programmers use AI coding assistants differently?

High-performing students engage in “instrumental help-seeking” — using AI for exploration and learning that produces tutor-like interactions. Low performers rely on “executive help-seeking,” delegating entire tasks to AI without understanding the underlying code or concepts.

What security measures should enterprises implement for AI coding tools?

Enterprises should implement credential isolation, session-based authentication, and human-in-the-loop verification for any AI tool that accesses production systems. Current vendor approval processes that focus on interfaces rather than underlying credential management are insufficient for enterprise security.
