The FDA has accelerated approvals for AI-powered diagnostic tools while major hospital systems expand automation deployments, marking a shift toward algorithmic decision-making in clinical care. According to CNBC Tech, OpenAI and Amazon launched consumer healthcare tools in January, while biotechnology companies report both promising results and implementation challenges.

The regulatory momentum reflects growing confidence in AI’s clinical accuracy, though experts warn of risks when healthcare providers lack sufficient training on new platforms.

Clinical AI Tools Gain FDA Clearance

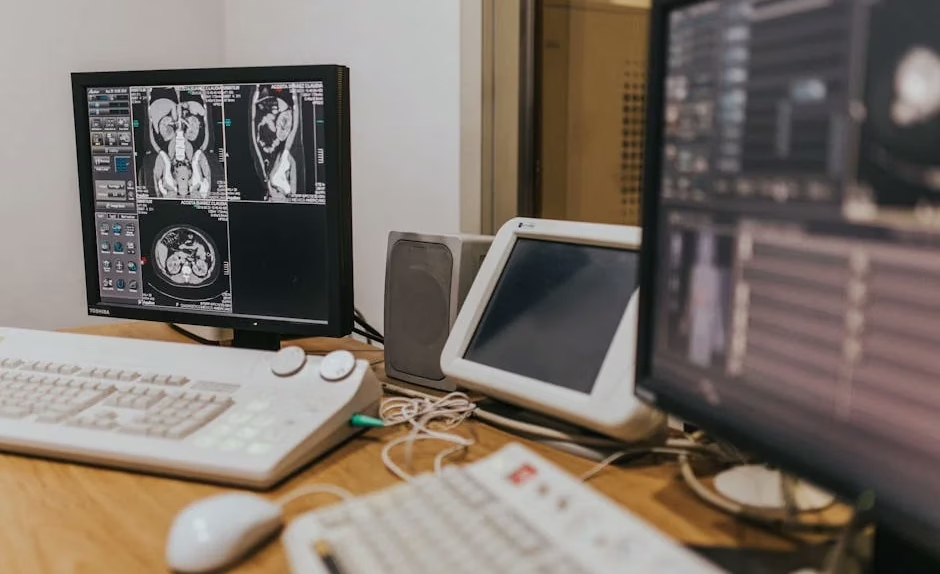

The FDA has streamlined its approval process for AI diagnostic tools, particularly in radiology and pathology applications. Healthcare facilities report deploying AI systems for image analysis, patient triage, and preliminary diagnosis support.

Alex Zhavoronkov, CEO of Insilico Medicine, told CNBC that AI can handle basic health questions from consumers, potentially reducing time spent with physicians on routine inquiries. Such a shift would let doctors focus on complex cases that require human expertise.

However, Shreehas Tambe, CEO of biotechnology company Biocon, warned of potential errors when healthcare providers are “still getting a hang of” AI platforms. The learning curve for medical professionals remains a significant implementation barrier.

Hospital Systems Expand AI Deployments

Major hospital networks have accelerated AI adoption across multiple departments. According to Healthcare Asia Magazine, technology integration now extends beyond hospital walls into everyday patient care, creating more patient-centric healthcare delivery models.

Deployments focus on three primary areas:

- Diagnostic imaging: AI systems analyze X-rays, MRIs, and CT scans for early disease detection

- Electronic health records: Automated data analysis identifies patient risk factors and treatment recommendations

- Drug discovery: AI platforms accelerate pharmaceutical research and clinical trial design

The technology aims to reduce diagnostic errors while improving treatment speed and accuracy. Early implementations show promising results in detecting conditions that human physicians might miss during initial screenings.

Security Challenges in Medical AI Systems

Healthcare AI deployments face significant cybersecurity risks, as demonstrated by recent breaches in medical software platforms. TechCrunch reported that Practice by Numbers, which serves over 5,000 dental practices, fixed a security flaw that exposed private patient health records.

The vulnerability allowed any user to access other patients’ documents by simply changing document numbers in web addresses. Patient Joseph R. Cox discovered he could view medical histories, personal information, and photo identification of other patients through the portal.

Data Protection Concerns

The incident highlights broader security challenges that apply equally to healthcare AI systems:

- Sequential numbering: Predictable document identifiers make unauthorized access easier

- Limited reporting channels: Companies often lack clear security vulnerability disclosure processes

- Patient portal vulnerabilities: Web-based systems require robust authentication and access controls

Healthcare organizations implementing AI tools must prioritize cybersecurity measures to protect sensitive patient data from both external threats and internal system flaws.
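
The two controls implied by the incident, non-guessable document identifiers and a per-request ownership check, can be sketched as follows. This is a minimal illustration under stated assumptions, not the vendor's actual fix: `DocumentStore`, `Document`, and the patient IDs are hypothetical names, and a real portal would sit behind a database and an authenticated session.

```python
import secrets
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str    # opaque, randomly generated identifier
    owner_id: str  # patient who owns the record
    content: str


class DocumentStore:
    """Hypothetical in-memory stand-in for a patient-portal backend."""

    def __init__(self) -> None:
        self._docs: dict[str, Document] = {}

    def add(self, owner_id: str, content: str) -> str:
        # Random token instead of a sequential number, so IDs cannot be
        # guessed by incrementing a value in the URL.
        doc_id = secrets.token_urlsafe(16)
        self._docs[doc_id] = Document(doc_id, owner_id, content)
        return doc_id

    def fetch(self, requester_id: str, doc_id: str) -> Document:
        doc = self._docs.get(doc_id)
        # Ownership is checked on every request. Missing and forbidden
        # documents raise the same error, so responses do not reveal
        # which identifiers exist.
        if doc is None or doc.owner_id != requester_id:
            raise PermissionError("document not available")
        return doc
```

Random identifiers alone would not be sufficient, since URLs can leak through logs or shared links. The ownership check in `fetch` is what actually prevents one patient from reading another's records.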

AI Agents Transform Healthcare Operations

Advanced AI agent systems are reshaping healthcare administration and patient care coordination. According to NVIDIA’s AI Blog, OpenClaw agents have gained significant traction, with the open-source project reaching 250,000 GitHub stars by March 2026.

These persistent AI assistants run locally or on private servers, eliminating dependence on cloud infrastructure. Healthcare organizations can deploy specialized agents for:

- Patient scheduling optimization: Automated appointment management and resource allocation

- Clinical documentation: Real-time transcription and medical record updates

- Treatment protocol recommendations: Evidence-based care pathway suggestions

The self-hosted approach addresses healthcare’s strict data privacy requirements while providing continuous AI assistance without external API dependencies.

Patient Support and Communication

AI tools increasingly support patient communication and emotional care alongside clinical applications. According to Forbes, 89% of cancer patients wish the people in their lives were better educated about how to offer support.

Dr. Ihuoma Njoku, medical director of psychiatric oncology at the Sidney Kimmel Comprehensive Cancer Center, emphasizes that many cancer patients fight both their disease and social isolation. AI-powered communication tools help bridge this gap through:

- Personalized patient education: Tailored information delivery based on individual conditions

- Symptom tracking: Automated monitoring and alert systems for care teams

- Support network coordination: Platforms connecting patients with appropriate resources

Jessica Walker, founder of Five Dot Post, develops specialized communication tools for cancer patients, demonstrating how technology can address the social aspects of healthcare beyond pure medical treatment.

What This Means

The convergence of FDA approvals, hospital deployments, and advanced AI agents signals a fundamental shift in healthcare delivery. While AI tools show promise for improving diagnostic accuracy and operational efficiency, implementation challenges around training, security, and patient privacy remain significant hurdles.

Healthcare organizations must balance innovation with safety, ensuring proper staff training and robust cybersecurity measures. The success of AI in healthcare will depend on addressing both technical capabilities and human factors, from physician adoption to patient trust.

The expansion beyond pure medical applications into patient communication and support suggests AI’s role in healthcare will encompass both clinical and social dimensions of care.

FAQ

How does the FDA approve AI diagnostic tools for healthcare use?

The FDA has streamlined its approval process for AI diagnostic tools, particularly in radiology and pathology. Companies must demonstrate clinical accuracy and safety through trials, with accelerated pathways for certain low-risk applications.

What security risks do AI healthcare systems face?

Major risks include data breaches through vulnerable patient portals, unauthorized access to medical records, and inadequate authentication systems. Healthcare organizations must implement robust cybersecurity measures and regular security audits.

Can AI agents replace human doctors in clinical decision-making?

AI agents serve as diagnostic and administrative assistants rather than replacements for physicians. They excel at data analysis, routine tasks, and preliminary assessments, but complex medical decisions still require human expertise and patient interaction.