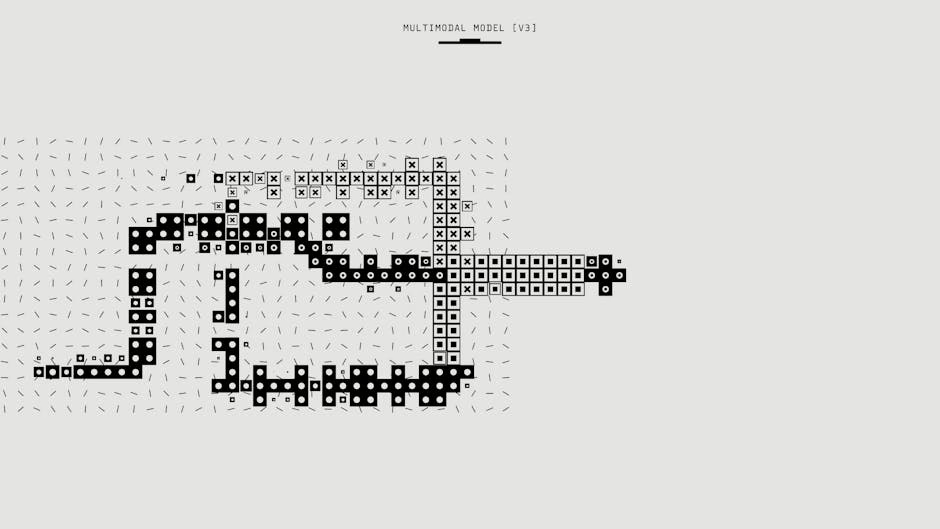

NVIDIA on Monday unveiled Nemotron 3 Nano Omni, an open multimodal model that unifies vision, audio, and language processing in a single system. NVIDIA says the model makes AI agents up to 9x more efficient than traditional multi-model architectures, and that it tops six leaderboards for complex document intelligence, video understanding, and audio processing while maintaining production-grade accuracy.

The release addresses a critical bottleneck in current AI agent systems, which typically juggle separate models for different modalities, losing time and context as data passes between systems. Nemotron 3 Nano Omni processes text, images, audio, video, documents, charts, and graphical interfaces through a unified architecture, outputting text responses with advanced reasoning capabilities.

Inference Scaling Drives New Architecture Demands

The efficiency gains come as enterprises grapple with skyrocketing inference costs from reasoning models. Towards Data Science reported that flagship models like GPT-5.5 and the o1 series achieve higher performance by spending significantly more compute resources on every response through “test-time compute” or inference scaling.

This approach generates hidden reasoning tokens that never appear in final outputs but represent massive surges in billable compute. For enterprises, enabling reasoning mode becomes “an adaptive resource commitment rather than a casual toggle,” creating a Cost-Quality-Latency triangle that product teams must navigate carefully.
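A rough sketch of why hidden reasoning tokens matter for the bill. It assumes reasoning tokens are charged at the output-token rate; the prices and token counts are hypothetical placeholders, not any provider's actual pricing.

```python
def billable_cost(prompt_tokens, visible_tokens, reasoning_tokens,
                  input_price_per_m, output_price_per_m):
    """Return the cost of one request in dollars.

    Assumption: hidden reasoning tokens are billed like visible output tokens.
    Prices are expressed in dollars per one million tokens.
    """
    input_cost = prompt_tokens * input_price_per_m / 1_000_000
    output_cost = (visible_tokens + reasoning_tokens) * output_price_per_m / 1_000_000
    return input_cost + output_cost


# Hypothetical numbers: same prompt and same visible answer, with and without reasoning.
plain = billable_cost(1_000, 500, 0, input_price_per_m=2.0, output_price_per_m=10.0)
reasoning = billable_cost(1_000, 500, 8_000, input_price_per_m=2.0, output_price_per_m=10.0)

print(f"without reasoning: ${plain:.4f}")
print(f"with reasoning:    ${reasoning:.4f}")
print(f"cost multiplier:   {reasoning / plain:.1f}x")
```

The visible answer is identical in both calls; only the hidden token count changes, which is what makes the cost hard to spot from the output alone.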

The infrastructure strain is evident in enterprise GPU utilization rates. Cast AI’s 2026 State of Kubernetes Optimization Report found that most companies run their GPU fleets at roughly 5% utilization — about six times worse than a no-effort baseline. Cast AI co-founder Laurent Gil told VentureBeat that “many of the neoclouds are not cloud — they are neo-real estate.”
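To put the utilization figure in cost terms, the sketch below divides an hourly GPU price by the fraction of time the GPU does useful work. The hourly price is a hypothetical placeholder, and the 30% comparison point is only an inference from reading "six times worse" as a ratio of utilization rates, not a figure from the report.

```python
def cost_per_useful_gpu_hour(hourly_price, utilization):
    """Return the effective price paid per hour of actual GPU work.

    utilization is a fraction in (0, 1], e.g. 0.05 for 5%.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")
    return hourly_price / utilization


HOURLY_PRICE = 4.0  # hypothetical dollars per reserved GPU-hour

at_reported = cost_per_useful_gpu_hour(HOURLY_PRICE, 0.05)   # 5% figure from the report
at_inferred = cost_per_useful_gpu_hour(HOURLY_PRICE, 0.30)   # assumed baseline (6 x 5%)

print(f"effective cost at 5% utilization:  ${at_reported:.2f} per useful hour")
print(f"effective cost at 30% utilization: ${at_inferred:.2f} per useful hour")
```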

Open Source Models Challenge Efficiency Standards

Xiaomi’s simultaneous release of MiMo-V2.5 and MiMo-V2.5-Pro under MIT License demonstrates how open source alternatives are pushing efficiency boundaries. According to VentureBeat, both models rank among the most efficient available for agentic “claw” tasks — systems where agents complete tasks on behalf of users through third-party messaging apps.

https://x.com/xiaomimimo/status/2048821516079661561

Xiaomi’s ClawEval benchmark chart shows the Pro model leading the open-source field with 63.8% performance while using fewer tokens than competing models. This efficiency matters increasingly as services like Microsoft’s GitHub Copilot move to usage-based billing, charging users for each token consumed rather than imposing rate limits or offering unlimited subscriptions.
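Under per-token billing, token efficiency and task success combine into a single figure: the expected spend per completed task. The sketch below is illustrative only. It assumes a benchmark score can be treated as a per-attempt success probability and that failed attempts are retried independently; the token counts and price are hypothetical and do not come from ClawEval.

```python
def expected_cost_per_success(tokens_per_attempt, price_per_m_tokens, success_rate):
    """Return the expected dollars spent per successfully completed task.

    Assumption: attempts are independent and retried until one succeeds,
    so the expected number of attempts is 1 / success_rate.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in the range (0, 1]")
    cost_per_attempt = tokens_per_attempt * price_per_m_tokens / 1_000_000
    return cost_per_attempt / success_rate


# Two hypothetical agents with equal success rates but different token appetites.
lean_agent = expected_cost_per_success(200_000, price_per_m_tokens=5.0, success_rate=0.5)
hungry_agent = expected_cost_per_success(400_000, price_per_m_tokens=5.0, success_rate=0.5)

print(f"lean agent:   ${lean_agent:.2f} per completed task")
print(f"hungry agent: ${hungry_agent:.2f} per completed task")
```

With flat-rate or rate-limited plans the two agents would cost the same; with usage-based billing the token-hungry one costs twice as much for the same result.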

The open source approach provides enterprises with full deployment flexibility and control, allowing local or virtual private cloud deployment without vendor lock-in concerns.

Enterprise Orchestration Emerges as Critical Layer

Mistral AI’s launch of Workflows in public preview highlights how the industry bottleneck has shifted from model capabilities to operational infrastructure. Elisa Salamanca, head of product at Mistral AI, told VentureBeat that “organizations are struggling to go beyond isolated proofs of concept. The gap is operational.”

Workflows, powered by Temporal’s orchestration engine, is already processing millions of daily executions for enterprise customers. The product separates execution from control to maintain data privacy while providing production-grade reliability for business-critical AI processes.

The timing proves critical as the dedicated agentic AI market, valued at $10.9 billion in 2026, faces a harsh reality check. Industry research indicates that over 40% of agentic AI projects will be abandoned by 2027 due to high costs, unclear value, and operational complexity.

Infrastructure Costs Break Historical Patterns

The efficiency push gains urgency as cloud compute pricing breaks its 20-year pattern of consistent price reductions. AWS quietly raised reserved H200 GPU prices by roughly 15% in January without formal announcement, marking the first time since EC2’s 2006 launch that a hyperscaler has meaningfully increased reserved GPU pricing.

Memory suppliers compounded the pressure by pushing HBM3e prices up 20% for 2026 as demand for NVIDIA H200 GPUs and custom ASICs rises. The combination challenges the fundamental assumption underlying most enterprise AI budgets: that cloud compute costs decrease annually.
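A back-of-the-envelope sketch of what a broken pricing assumption does to a budget. The 15% increase is the figure reported above; the planned 10% annual decline is a hypothetical stand-in for whatever a given budget assumed.

```python
def budget_overrun(planned_change, actual_change):
    """Return the fractional overrun of actual spend versus planned spend.

    Both arguments are year-over-year price changes as fractions,
    e.g. -0.10 for a planned 10% decline and 0.15 for a 15% increase.
    Assumes usage stays constant, so spend scales directly with price.
    """
    return (1 + actual_change) / (1 + planned_change) - 1


# Hypothetical plan: prices fall 10%. Reported outcome: prices rise roughly 15%.
overrun = budget_overrun(planned_change=-0.10, actual_change=0.15)
print(f"spend exceeds plan by {overrun:.1%}")
```

The gap is larger than the headline increase because the budget was built on a price that was expected to fall.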

Enterprises face a paradox where releasing idle GPU capacity would improve utilization, but the same shortage driving prices up prevents teams from surrendering reserved capacity. This creates a self-reinforcing cycle where fleets sit largely unused while costs continue climbing.

What This Means

The convergence of unified architectures like Nemotron 3 Nano Omni, efficient open source alternatives, and enterprise orchestration platforms signals a maturation phase for AI infrastructure. Organizations can no longer rely solely on model improvements to drive ROI — operational efficiency and cost management have become primary competitive factors.

The efficiency improvement of up to 9x that NVIDIA claims for its unified multimodal architecture addresses real pain points as inference costs spiral upward. Combined with open source licensing and production-grade orchestration, these advances provide enterprises with viable paths to scale AI systems without proportional cost increases.

However, the infrastructure cost crisis and low GPU utilization rates suggest that technology improvements alone won’t solve enterprise AI economics. Organizations need comprehensive strategies encompassing architecture selection, resource management, and operational frameworks to avoid joining the more than 40% of agentic AI projects expected to be abandoned by 2027.

FAQ

What makes Nemotron 3 Nano Omni different from existing multimodal models?

Nemotron 3 Nano Omni processes text, images, audio, video, documents, charts, and graphical interfaces in a single unified system rather than passing data between separate models. This avoids the time and context lost in handoffs between models. According to NVIDIA, the result is up to 9x more efficient agents, with the model topping six leaderboards while maintaining production-grade accuracy.

Why are enterprises running GPU fleets at only 5% utilization?

Enterprises hold on to reserved GPU capacity because of the same shortage that is driving prices up. Releasing idle capacity would improve utilization, but it would also make that capacity hard to get back. The result is fleets running at roughly 5% utilization, about six times worse than a no-effort baseline, according to Cast AI.

How do inference scaling and test-time compute affect AI costs?

Reasoning models like GPT-5.5 and o1 generate hidden reasoning tokens during inference that never appear in final outputs but consume significant compute resources. This “test-time compute” can dramatically increase token usage and latency, turning model selection into a high-stakes operational decision balancing cost, quality, and response time.