AI productivity applications are showing measurable but incremental efficiency gains as enterprise adoption gradually expands beyond early adopters. According to Federal Reserve Bank of St. Louis research, workers actively using generative AI save an average of 5.4% of their working hours, though this drops to 1.4% when averaged across entire workforces including non-users.

Gallup data shows daily AI usage among U.S. employees increased modestly from 10% to 12% between 2023 and late 2025, reflecting gradual rather than transformational adoption. The gap between executive expectations and ground-level reality remains significant: many implementations stay “surface-level” instead of fundamentally changing workflows.

Enterprise AI Usage Patterns Reveal Growing Sophistication

Frontier enterprises—those at the 95th percentile of AI usage—now deploy 3.5x as much AI intelligence per worker as typical firms, up from 2x a year ago, according to OpenAI’s B2B Signals research. This advantage stems from depth of implementation rather than just activity volume, with message volume explaining only 36% of the performance gap.

Advanced agentic workflows are becoming a key differentiator. Frontier firms send 16x as many Codex messages per worker as typical organizations, indicating a shift from simple chat-based assistance toward delegated, autonomous AI work. These leading organizations focus on measuring usage depth, building governance frameworks for production deployment, and scaling successful implementations across departments.

Token Economics Reshape Business Cost Models

Tokens, the fundamental units of AI processing, have emerged as a critical business expense and performance metric. Companies are now assessing employee productivity through token usage patterns, and both unusually high and unusually low usage raise management concerns.

A token is a small chunk of text that an AI model processes: typically a word, part of a word, or a punctuation mark. Business leaders report that “tokenmaxxing,” the practice of getting the most value out of every token spent, has become a widespread corporate behavior extending beyond Silicon Valley. AI services bill by token consumption, with pricing varying based on service levels and usage patterns.

For practical context, revising a typical business email might consume 200-500 tokens, while generating a comprehensive report could require 2,000-5,000 tokens. Understanding token economics helps organizations budget AI costs and optimize employee workflows.
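To make the budgeting point concrete, the sketch below turns the token ranges above into a rough monthly cost estimate. The price per million tokens and the monthly task counts are hypothetical placeholders, not any vendor's actual rates or any company's real usage; only the 200-500 and 2,000-5,000 token ranges come from the figures above.

```python
from dataclasses import dataclass

# HYPOTHETICAL price, not a real vendor rate. Substitute your provider's
# published per-token pricing before using this for budgeting.
PRICE_PER_MILLION_TOKENS_USD = 10.00


@dataclass(frozen=True)
class Task:
    name: str
    min_tokens: int       # low end of tokens per run
    max_tokens: int       # high end of tokens per run
    runs_per_month: int   # ASSUMED usage volume, for illustration only


def monthly_cost_range(
    task: Task, price_per_million: float = PRICE_PER_MILLION_TOKENS_USD
) -> tuple[float, float]:
    """Return the (low, high) estimated monthly cost in USD for one task type."""
    low = task.min_tokens * task.runs_per_month * price_per_million / 1_000_000
    high = task.max_tokens * task.runs_per_month * price_per_million / 1_000_000
    return low, high


# Token ranges are from the article; run counts are made up.
TASKS = [
    Task("email revision", 200, 500, runs_per_month=400),
    Task("comprehensive report", 2_000, 5_000, runs_per_month=20),
]

if __name__ == "__main__":
    for task in TASKS:
        low, high = monthly_cost_range(task)
        print(f"{task.name}: ${low:.2f} to ${high:.2f} per month")
```

Under these assumed numbers, per-task costs are small; the budgeting question becomes one of volume across many employees and many task types.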

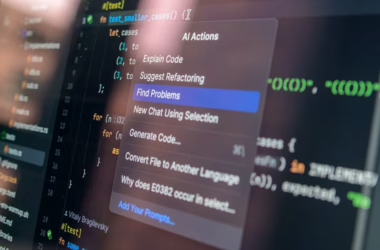

Technical Implementation Challenges Persist in Production

Production AI systems face significant technical hurdles, particularly in retrieval-augmented generation (RAG) applications. Enterprise teams report that semantic search alone often fails to surface relevant documents, requiring hybrid search approaches combining dense vectors with traditional keyword matching.

One infrastructure team discovered their internal knowledge assistant was confidently providing incorrect answers about retry policies because embedding models incorrectly associated “exponential backoff” with “dead-letter queue threshold.” The correct document ranked 11th in search results, just outside the top 10 passed to the language model.

Solutions include implementing cross-encoder re-ranking systems, metadata filtering, and hybrid search architectures that combine multiple retrieval methods. These technical improvements are essential for moving AI productivity tools from experimental to mission-critical status.
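As an illustration of how hybrid search can rescue a document that dense retrieval ranks too low, here is a minimal sketch using reciprocal rank fusion (RRF), one common way to merge ranked lists. It is not necessarily what the team described above used. The document IDs and both result lists are hypothetical, and `k=60` is a conventional default rather than a tuned value.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one fused ranking.

    Each document scores 1 / (k + rank) in every list where it appears;
    documents are returned by descending total score (ties broken by ID).
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# HYPOTHETICAL results mirroring the scenario above: the correct document
# sits at position 11 in dense (semantic) search, but keyword search finds
# it first because it contains the literal phrase being asked about.
dense_results = [f"doc-{i}" for i in range(1, 11)] + ["retry-policy"]
keyword_results = ["retry-policy", "doc-3", "doc-7"]

fused = reciprocal_rank_fusion([dense_results, keyword_results])
top_10 = fused[:10]  # the slice that would be passed to the language model

if __name__ == "__main__":
    print("retry-policy in top 10:", "retry-policy" in top_10)
```

Because the keyword list contributes a second score to `retry-policy`, the fused ranking lifts it into the top 10 even though dense search alone left it just outside. A cross-encoder re-ranker would then typically reorder this fused shortlist.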

Enterprise Governance Frameworks Emerge for AI Tools

SAP’s unified API policy exemplifies how enterprise software vendors are implementing governance frameworks for AI connectivity. The policy establishes rate limits, usage controls, and restrictions on undocumented interfaces—measures that mirror existing controls across CRM platforms, collaboration suites, and hyperscale infrastructure.

These governance frameworks address multi-tenant cloud infrastructure challenges as AI workloads scale. Rate limiting prevents individual users from overwhelming shared resources, while API restrictions ensure stable performance across enterprise deployments. Organizations implementing AI productivity tools must balance accessibility with system stability and security requirements.
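The rate-limiting idea can be sketched with a token bucket, a widely used technique for capping request rates on shared infrastructure. This is a generic illustration, not SAP's implementation; the class name, capacity, and refill rate are assumptions. Note that the "tokens" here are request permits and have nothing to do with the model tokens discussed earlier.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow bursts up to `capacity` requests, refilled at a steady rate."""

    def __init__(
        self,
        capacity: float,
        refill_per_second: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self._clock = clock          # injectable so tests can control time
        self._tokens = capacity      # start with a full bucket
        self._last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        """Return True and spend `cost` permits if available, else False."""
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Refill for the time that has passed, never exceeding capacity.
        self._tokens = min(
            self.capacity, self._tokens + elapsed * self.refill_per_second
        )
        if self._tokens >= cost:
            self._tokens -= cost
            return True
        return False


if __name__ == "__main__":
    # ASSUMED limits: bursts of 5 requests, refilling 1 permit per second.
    bucket = TokenBucket(capacity=5, refill_per_second=1)
    results = [bucket.allow() for _ in range(7)]
    print(results)  # the first 5 pass; later calls are rejected until refill
```

In a multi-tenant setting, one bucket per tenant or per API key keeps a single heavy user from exhausting capacity shared with everyone else.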

What This Means

AI productivity applications are transitioning from experimental tools to measurable business assets, but the transformation remains incremental rather than revolutionary. The 5.4% time savings for active users represents real value, yet the technology hasn’t fundamentally restructured work processes for most organizations.

The growing gap between frontier and typical enterprises suggests competitive advantages will compound over time. Organizations that invest in deep implementation, governance frameworks, and technical sophistication are positioning themselves for sustained benefits as AI capabilities mature.

Token economics introduce new cost management challenges requiring careful monitoring and optimization. Companies must develop internal expertise in AI resource management while building governance frameworks that enable productive use without compromising system stability.

FAQ

How much time can AI productivity tools actually save?

Active users of generative AI save an average of 5.4% of their working hours, according to Federal Reserve Bank of St. Louis research. However, when averaged across entire workforces including non-users, the savings drop to 1.4% of total work hours.
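For a sense of scale, the short calculation below converts those percentages into hours. The 40-hour week is an illustrative assumption, and the implied share of active users holds only if non-users save no time at all and both figures are measured on the same base; it is a back-of-the-envelope inference, not a number reported by the research.

```python
# Percentages are from the article; everything else is an assumption.
ACTIVE_USER_SAVINGS = 0.054   # share of work hours saved by active users
WORKFORCE_SAVINGS = 0.014     # share saved when averaged over all workers
HOURS_PER_WEEK = 40           # ASSUMED full-time week


def weekly_hours_saved(
    savings_rate: float, hours_per_week: float = HOURS_PER_WEEK
) -> float:
    """Convert a fractional time saving into hours per week."""
    return savings_rate * hours_per_week


def implied_user_share(workforce_rate: float, user_rate: float) -> float:
    """Share of workers who would be active users, ASSUMING non-users save nothing."""
    return workforce_rate / user_rate


if __name__ == "__main__":
    print(f"Active user: {weekly_hours_saved(ACTIVE_USER_SAVINGS):.2f} hours/week")
    print(f"Workforce average: {weekly_hours_saved(WORKFORCE_SAVINGS):.2f} hours/week")
    share = implied_user_share(WORKFORCE_SAVINGS, ACTIVE_USER_SAVINGS)
    print(f"Implied share of active users: {share:.1%}")
```

On a 40-hour week this works out to roughly two hours saved per active user, against a little over half an hour per worker across the whole workforce.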

What are tokens and why do they matter for businesses?

Tokens are the fundamental units of AI processing, representing words or parts of words that AI models consume. They’ve become a critical business expense and performance metric, with companies now assessing employee productivity through token usage patterns and implementing “tokenmaxxing” strategies to optimize costs.

What separates leading AI adopters from typical organizations?

Frontier enterprises use 3.5x as much AI intelligence per worker as typical firms, focusing on depth rather than just activity volume. They implement advanced agentic workflows, measure usage depth, build production governance frameworks, and move beyond simple chat assistance toward delegated AI work.