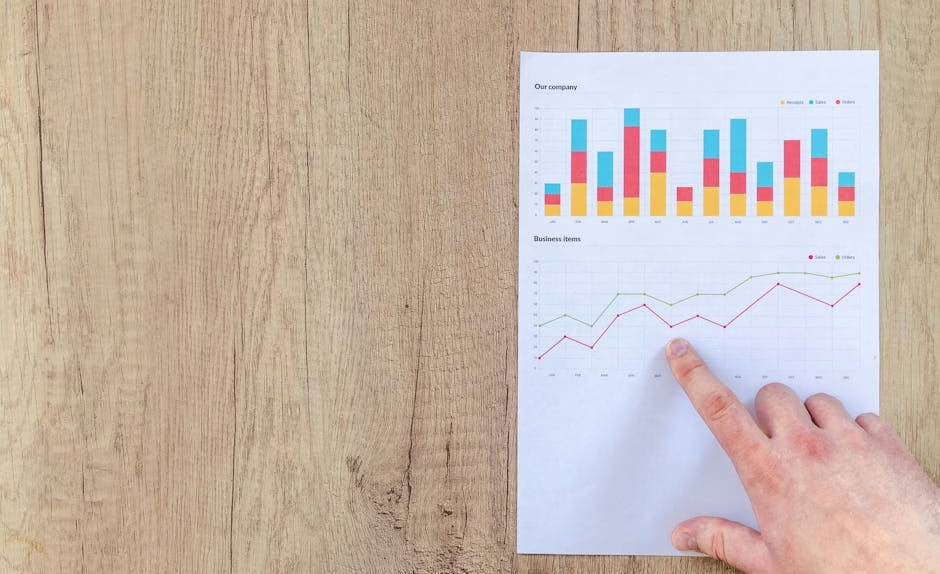

AI evaluation has reached a cost threshold that changes who can afford comprehensive model testing. According to Hugging Face’s analysis, the Holistic Agent Leaderboard (HAL) recently spent approximately $40,000 to run 21,730 agent rollouts across 9 models and 9 benchmarks, a sign of a new era in which evaluation expenses rival training costs.
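To put the HAL figures on a per-unit basis, the short calculation below divides the quoted total by the quoted rollout count. It is a rough sketch: the $40,000 total is approximate, and the per-pair averages assume every model was run on every benchmark with rollouts spread evenly, which the source does not state.

```python
# Figures quoted in the article; the dollar total is approximate.
total_cost_usd = 40_000
rollouts = 21_730
models = 9
benchmarks = 9

# Assumption: a full 9 x 9 grid of model-benchmark pairs, evenly loaded.
pairs = models * benchmarks

cost_per_rollout = total_cost_usd / rollouts
cost_per_pair = total_cost_usd / pairs
rollouts_per_pair = rollouts / pairs

print(f"about ${cost_per_rollout:.2f} per rollout")          # about $1.84
print(f"about ${cost_per_pair:.0f} per model-benchmark pair")  # about $494
print(f"about {rollouts_per_pair:.0f} rollouts per pair")      # about 268
```

These are averages only. Actual per-rollout costs vary widely by model, benchmark, and scaffold.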

The financial barrier extends beyond academic benchmarks. A single GAIA run on a frontier model now costs $2,829 before caching optimizations, while Exgentic’s recent study required $22,000 to sweep across agent configurations. That study found a 33× cost spread on identical tasks, with scaffold choice emerging as a primary cost driver.

Enterprise Testing Faces New Economic Reality

The evaluation bottleneck has created distinct winners and losers in AI development. UK-AISI recently scaled agentic steps into the millions to study inference-time compute, demonstrating the resources required for comprehensive testing. Meanwhile, smaller organizations find themselves priced out of thorough evaluation protocols.

Thinking Machines, the AI startup founded by former OpenAI CTO Mira Murati, announced a research preview of “interaction models” that treat interactivity as a first-class architectural component rather than external software. The company claims impressive gains on third-party benchmarks and reduced latency, though the models remain in limited preview.

In scientific machine learning, The Well benchmark requires approximately 960 H100-hours to evaluate a single new architecture and 3,840 H100-hours for a complete four-baseline comparison. These compute requirements have pushed evaluation costs beyond the reach of many research teams.
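The two figures for The Well are consistent with roughly 960 H100-hours per architecture across four runs. The sketch below checks that ratio and converts GPU-hours into ideal wall-clock time. The 8-GPU node size and the perfect-scaling assumption are illustrative choices, not details from the benchmark.

```python
def wall_clock_days(gpu_hours: float, num_gpus: int) -> float:
    """Ideal wall-clock days, assuming the work scales perfectly across GPUs."""
    return gpu_hours / num_gpus / 24


single_architecture_hours = 960   # quoted: one new architecture
full_comparison_hours = 3_840     # quoted: four-baseline comparison
node_gpus = 8                     # assumption: one 8-GPU node

runs_implied = full_comparison_hours / single_architecture_hours
days_single = wall_clock_days(single_architecture_hours, node_gpus)
days_full = wall_clock_days(full_comparison_hours, node_gpus)

print(f"{runs_implied:.0f} runs at 960 H100-hours each")
print(f"{days_single:.0f} days for one architecture on {node_gpus} GPUs")
print(f"{days_full:.0f} days for the full comparison on {node_gpus} GPUs")
```

Under these assumptions, a team with a single 8-GPU node would spend about 5 days evaluating one architecture and about 20 days on the full comparison.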

Specialized Benchmarks Address Real-World Gaps

New benchmark development continues despite cost pressures, with researchers targeting specific failure modes in production systems. The Partial Evidence Bench introduces a deterministic benchmark for measuring how enterprise agents handle evidence they are only partly authorized to see.

The benchmark ships three scenario families (due diligence, compliance audit, and security incident response) with 72 tasks total and ACL-partitioned corpora. According to the research, checked-in baselines show that silent filtering creates catastrophic safety risks across all scenario families, while explicit fail-and-report behavior eliminates unsafe claims of completeness without reducing tasks to trivial abstention.

Preliminary real-model runs reveal model-dependent and scenario-sensitive differences in whether systems overclaim completeness, conservatively underclaim, or report incompleteness in enterprise-usable formats.
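The difference between silent filtering and fail-and-report behavior can be illustrated with a toy retrieval layer. This is a minimal sketch under stated assumptions, not the benchmark's code: the `Document` and `RetrievalResult` types, the function names, and the sample corpus are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    allowed_groups: frozenset  # groups permitted to read this document


@dataclass(frozen=True)
class RetrievalResult:
    visible_ids: tuple   # documents the caller may read
    withheld_count: int  # documents hidden by the access check

    @property
    def is_complete(self) -> bool:
        return self.withheld_count == 0


def retrieve_silently(corpus, user_groups):
    """Silent filtering: hidden documents disappear without a trace."""
    return tuple(d.doc_id for d in corpus if d.allowed_groups & user_groups)


def retrieve_and_report(corpus, user_groups):
    """Fail-and-report: same filter, but the caller learns what is missing."""
    visible = retrieve_silently(corpus, user_groups)
    return RetrievalResult(visible, len(corpus) - len(visible))


# Hypothetical three-document corpus and a user in one group.
corpus = [
    Document("contract-01", frozenset({"legal", "finance"})),
    Document("audit-02", frozenset({"finance"})),
    Document("incident-03", frozenset({"security"})),
]
analyst_groups = frozenset({"finance"})

silent = retrieve_silently(corpus, analyst_groups)
reported = retrieve_and_report(corpus, analyst_groups)

print(f"silent filter returned {len(silent)} documents, no warning")
print(f"reported: {reported.withheld_count} withheld, "
      f"complete={reported.is_complete}")
```

With the silent variant, an agent has no signal that a third document exists and may present its answer as complete. The reporting variant returns the same readable documents plus a withheld count the agent can surface to the user.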

Automotive Safety Sets New Standards

Beyond AI-specific benchmarks, traditional safety testing has evolved to accommodate advanced systems. The National Highway Traffic Safety Administration (NHTSA) announced that the 2026 Tesla Model Y became the first vehicle to meet new driver assistance safety benchmarks.

The updated criteria include four pass-fail tests: automatic emergency braking for pedestrians, blind-spot warning, blind-spot intervention, and lane assist functionality. These tests address the gap between marketing claims and measurable performance in advanced driver assistance systems.

The new benchmark rating applies specifically to 2026 Tesla Model Y vehicles assembled on or after November 12, 2025, as part of NHTSA’s New Car Assessment Program (NCAP) updates implemented in 2024.

Cost Competition Reshapes Global AI Strategy

Chinese firms are betting on cost efficiency over absolute performance. SenseTime cofounder Lin Dahua told CNBC that lower-cost models could win market share despite quality gaps, representing a strategic shift in the global AI race.

The U.S.-sanctioned, Hong Kong-listed firm continues expanding globally with unchanged Middle East plans. This approach reflects broader industry recognition that AI competition has shifted beyond pure technical capabilities to include platform advantages, user acquisition, and financial sustainability.

Analysts note that evaluation costs create additional competitive moats, as comprehensive testing becomes accessible only to well-funded organizations. The roughly $40,000 HAL run may be just the beginning: training-in-the-loop benchmarks are expensive by design, and reliability requirements multiply costs through repeated runs.
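The multiplying effect of repeated runs is simple to estimate. The sketch below scales the $2,829 single-run GAIA figure quoted earlier by a repeat count. The repeat counts of 3 and 5 are illustrative assumptions, not numbers from any of the cited studies.

```python
def evaluation_budget(cost_per_run_usd: float, repeats: int,
                      configurations: int = 1) -> float:
    """Total cost when each configuration is run `repeats` times."""
    return cost_per_run_usd * repeats * configurations


gaia_single_run_usd = 2_829  # quoted: one GAIA run on a frontier model

# Hypothetical repeat counts for reliability estimates.
budgets = {repeats: evaluation_budget(gaia_single_run_usd, repeats)
           for repeats in (1, 3, 5)}

for repeats, total in budgets.items():
    print(f"{repeats} run(s): ${total:,.0f}")
```

Under these assumptions, five repeats of a single configuration come to $14,145, and the total grows linearly again with each additional model or scaffold compared.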

What This Means

The emergence of evaluation as a computational bottleneck fundamentally alters AI development economics. Organizations must now budget significant resources for testing alongside training and inference costs. This shift advantages large technology companies with substantial compute budgets while potentially stifling innovation from smaller research teams.

The trend toward specialized benchmarks like Partial Evidence Bench reflects growing recognition that general-purpose evaluations miss critical failure modes in production deployments. As AI systems handle more sensitive enterprise tasks, targeted testing for specific risk scenarios becomes essential.

Cost-conscious strategies from companies like SenseTime suggest the industry may bifurcate between premium, thoroughly tested models and lower-cost alternatives that accept evaluation trade-offs. This dynamic could reshape competitive positioning as organizations balance performance requirements against testing expenses.

FAQ

How much does it cost to run comprehensive AI benchmarks?

The Holistic Agent Leaderboard spent approximately $40,000 for 21,730 agent rollouts across 9 models and 9 benchmarks. A single GAIA run on a frontier model costs $2,829 before caching optimizations, and Exgentic’s sweep across agent configurations required $22,000.

What makes AI evaluation so expensive now?

Agent benchmarks require multiple rollouts for reliability, scaffold choices create 33× cost variations on identical tasks, and training-in-the-loop benchmarks are computationally expensive by design. Compression techniques work for static benchmarks but are less effective for noisy, scaffold-sensitive agent evaluations.

Are there alternatives to expensive comprehensive testing?

Researchers are developing targeted benchmarks like Partial Evidence Bench for specific failure modes, and some companies are betting on lower-cost models despite quality gaps. However, safety-critical applications still require thorough evaluation regardless of expense.