OpenAI on Monday began giving tenfold increases in Codex rate limits to the more than 8,000 developers who applied for its invite-only GPT-5.5 party, effective immediately and running through June 5. The company emailed all applicants, whether accepted, waitlisted, or rejected, offering the enhanced access to make up for the event’s limited capacity.

“We had over 8,000 people express interest in just 24 hours, and while we wish our office was big enough to welcome everyone, we weren’t able to make space for every person who applied,” OpenAI wrote in the email obtained by VentureBeat. CEO Sam Altman telegraphed the move on X, writing “We are gonna do something nice for everyone who applied for the GPT-5.5 party and that we didn’t have space for.”

https://x.com/sama/status/2051318922805436896

What the Codex Rate Limit Boost Actually Means

For developers, the practical effect is more headroom. Codex, OpenAI’s AI-powered coding agent, normally operates under daily usage caps that vary by subscription tier. A tenfold increase gives developers far more capacity to prototype, debug, and ship code using GPT-5.5, which OpenAI says matches GPT-5.4’s per-token latency while delivering a higher level of intelligence.

The enhanced limits apply to personal ChatGPT accounts, not enterprise or team subscriptions. According to multiple recipients who confirmed the change on social media, the boost covers all coding-related interactions, including code generation, debugging assistance, and technical documentation. Standard Codex limits typically allow 50-100 complex coding requests per day for individual users, so a tenfold boost could enable 500-1,000 daily requests.

Developers responded with enthusiasm across social platforms. “I’m literally not taking my Codex hat off for the month,” one developer declared on X. Others expressed regret for not applying, with several noting they skipped the application assuming geographic limitations would exclude them.

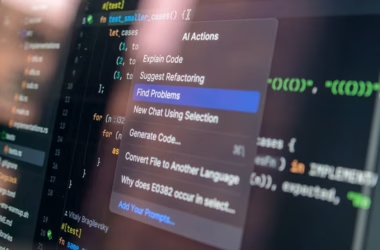

Growing Security Concerns Around AI Coding Tools

The Codex giveaway comes as disclosed security vulnerabilities in AI coding assistants mount. Between March and December 2025, six research teams disclosed exploits against major coding tools including Codex, Claude Code, GitHub Copilot, and Google Vertex AI. According to VentureBeat’s investigation, every successful attack followed the same pattern: targeting credentials rather than the AI models themselves.

In March, BeyondTrust researchers demonstrated that a crafted GitHub branch name could steal Codex’s OAuth token in cleartext — a vulnerability OpenAI classified as Critical P1. Days later, Claude Code’s source code leaked onto the public npm registry, revealing that the system silently ignored its own security deny rules once commands exceeded 50 subcommands.
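The deny-rule failure described above is a "fail open" problem: once a command became too complex to check, it was allowed instead of blocked. The Python sketch below shows the opposite, fail-closed behavior. It is a minimal illustration and does not reflect how Claude Code or any other tool actually works: the function names, the denied commands, and the naive separator-based splitter are all hypothetical, and only the 50-subcommand figure comes from the reporting.

```python
import re

# Hypothetical cap, mirroring the 50-subcommand figure reported above.
MAX_SUBCOMMANDS = 50

# Hypothetical deny list of command names, for illustration only.
DENIED_COMMANDS = {"curl", "wget"}

# Naive splitter: breaks on &&, ||, ; and |.
# A real tool would need a proper shell parser (quoting, subshells, etc.).
_SEPARATORS = re.compile(r"&&|\|\||;|\|")


def split_subcommands(command: str) -> list[str]:
    """Split a compound shell command into its non-empty parts."""
    return [part.strip() for part in _SEPARATORS.split(command) if part.strip()]


def is_allowed(command: str) -> bool:
    """Return True only if every subcommand was checked and none is denied."""
    parts = split_subcommands(command)
    if len(parts) > MAX_SUBCOMMANDS:
        # Fail closed: a command too long to check fully is rejected,
        # never waved through unchecked.
        return False
    return all(part.split()[0] not in DENIED_COMMANDS for part in parts)
```

Under these assumptions, `is_allowed("ls -la && echo done")` returns `True`, while a chain of 51 harmless subcommands is rejected outright because it exceeds what the checker is willing to inspect.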

“Enterprises believe they’ve ‘approved’ AI vendors, but what they’ve actually approved is an interface, not the underlying system,” Merritt Baer, CSO at Enkrypt AI and former Deputy CISO at AWS, told VentureBeat. “The credentials underneath the interface are the breach.”

Supply Chain Attacks Target Developer Credentials

Security researchers have identified a sophisticated Linux backdoor called Quasar Linux (QLNX) specifically designed to steal developer credentials across software supply chains. Trend Micro’s analysis reveals the malware targets AWS credentials, Kubernetes tokens, Docker Hub access, Git tokens, npm authentication tokens, and PyPI API keys.

“An attacker who successfully deploys QLNX against a package maintainer gains access to that maintainer’s publishing pipeline,” Trend Micro warned. “A single compromise can be silently leveraged to trojanize packages, inject backdoors into build artifacts, or pivot into cloud environments where production infrastructure lives.”

The remote access trojan (RAT) executes in memory, spoofs process names, and deploys Pluggable Authentication Module (PAM) backdoors to harvest credentials. It specifically targets developer workflows, gathering SSH keys, browser profiles, and clipboard contents that often contain sensitive authentication tokens. The malware’s modular architecture includes rootkit capabilities and multiple persistence mechanisms designed to evade detection in development environments.
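To see how exposed a single workstation is, a developer can check which of these credential stores exist on disk and whether their permissions are looser than owner-only. The Python sketch below is a minimal example, not a detection tool for QLNX. The file paths are conventional default locations for each tool and are an assumption of this example, not a list taken from Trend Micro’s report, and `audit_credentials` is a hypothetical helper name.

```python
from pathlib import Path
import stat

# Conventional default locations (assumed here, not taken from the report).
CANDIDATES = {
    "AWS credentials": ".aws/credentials",
    "Kubernetes config": ".kube/config",
    "Docker config": ".docker/config.json",
    "Git credential store": ".git-credentials",
    "npm config": ".npmrc",
    "PyPI config": ".pypirc",
    "SSH directory": ".ssh",
}


def audit_credentials(home: Path) -> list[tuple[str, str, bool]]:
    """List credential stores found under `home`.

    Each entry is (label, path, loose), where `loose` is True when the
    group or other permission bits are set on the file or directory.
    """
    findings = []
    for label, relative in CANDIDATES.items():
        path = home / relative
        if not path.exists():
            continue
        mode = path.stat().st_mode
        loose = bool(mode & (stat.S_IRWXG | stat.S_IRWXO))
        findings.append((label, str(path), loose))
    return findings
```

Calling `audit_credentials(Path.home())` returns one entry per store that exists. Tight permissions would not stop malware running as the same user, so the output is better read as an inventory of what a single compromise could reach.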

Student Learning Patterns with AI Code Assistants

Research into how students interact with AI coding tools reveals significant differences between high and low performers. A study published on arXiv analyzed 19,418 interaction turns from 110 undergraduate students using AI coding assistants, identifying two distinct help-seeking patterns.

Top-performing students engaged in “instrumental help-seeking” — asking questions and exploring concepts that elicited tutor-like responses from AI systems. These students used AI tools to understand programming concepts and debug their thinking process. In contrast, low-performing students relied on “executive help-seeking,” frequently delegating entire tasks and prompting AI to provide ready-made solutions without explanation.

The research suggests current generative AI systems mirror student intent rather than optimizing for learning outcomes. “To evolve from tools to teammates, AI systems must move beyond passive compliance,” the researchers concluded. They argue for pedagogically aligned design that detects unproductive delegation and steers interactions toward inquiry-based learning.

Enterprise Adoption and Integration Challenges

Despite security concerns, enterprise adoption of AI coding tools continues to accelerate. GitHub Copilot has expanded beyond individual developers to enterprise teams, while newer entrants like Cursor and Claude Code compete for market share in professional development environments. The tools promise significant productivity gains: GitHub reports Copilot users complete tasks 55% faster than without assistance.

However, integration challenges persist beyond security vulnerabilities. Development teams must establish governance frameworks for AI-generated code, including review processes, testing protocols, and intellectual property considerations. Many organizations struggle to balance developer productivity gains against code quality and security requirements.

The credential-focused attack patterns identified by security researchers highlight a fundamental challenge: AI coding tools require extensive permissions to function effectively, creating attractive targets for attackers seeking supply chain access. As these tools become more deeply integrated into development workflows, the attack surface continues to expand.

What This Means

OpenAI’s generous Codex rate limit increase demonstrates the competitive pressure in AI coding tools, where user engagement drives market position. However, the timing coincides with growing security awareness around AI development tools, creating a tension between accessibility and safety.

The pattern of credential-focused attacks against major coding assistants suggests the industry needs fundamental architectural changes, not just security patches. As AI tools gain deeper access to development environments, the traditional security perimeter dissolves — making every developer workstation a potential supply chain entry point.

For enterprises evaluating AI coding tools, the research on student learning patterns offers important insights: the most effective implementations may require active intervention to prevent over-reliance and maintain skill development. The challenge isn’t just deploying AI assistants, but ensuring they enhance rather than replace human expertise.

FAQ

Who received the enhanced Codex limits from OpenAI?

All 8,000+ developers who applied for OpenAI’s invite-only GPT-5.5 party received 10x rate limit increases on their personal ChatGPT accounts, regardless of whether they were accepted, waitlisted, or rejected for the event.

What security vulnerabilities have been found in AI coding tools?

Six research teams disclosed exploits against major coding assistants in 2025, with every successful attack targeting credentials rather than AI models. Notable examples include the theft of Codex’s OAuth token through a crafted GitHub branch name and Claude Code silently ignoring its security deny rules once commands exceeded 50 subcommands.

How do top-performing students use AI coding assistants differently?

Research shows high performers engage in “instrumental help-seeking” — asking questions to understand concepts and elicit tutor-like responses. Low performers rely on “executive help-seeking,” delegating tasks for ready-made solutions without learning the underlying concepts.