Recent breakthroughs in artificial general intelligence (AGI) research are emerging from new approaches to logical reasoning and planning. One significant development is new research on attention-aware intervention, a technique that could fundamentally change how large language models (LLMs) handle complex reasoning tasks.

End-to-End Reasoning Without External Dependencies

Logical reasoning systems for LLMs have traditionally relied on interactive frameworks that decompose problems into subtasks through carefully crafted prompts or external symbolic solvers. New research posted on arXiv (arXiv:2601.09805v1) instead introduces a non-interactive, end-to-end framework in which reasoning emerges within the model itself.

This is an important technical advance because it avoids the overhead of multi-step interactive systems and removes dependencies on external components, such as symbolic solvers, that limit scalability. The method works by introducing structural information into the model's attention mechanism, enabling more sophisticated logical processing without external tools or additional rounds of interaction.
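The paper's exact mechanism is not described here, so the sketch below is not the authors' method. It illustrates one generic, widely used way to inject structural information into attention: adding a bias matrix to the scaled dot-product logits before the softmax, where a value of negative infinity forbids a link between two tokens and a positive value encourages it. The function name `structured_attention`, the token roles, and the bias values are hypothetical, chosen only for illustration.

```python
import math


def softmax(logits):
    # Subtract the row maximum for numerical stability; exp(-inf) is 0.0,
    # so masked positions receive exactly zero weight.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def structured_attention(queries, keys, values, bias):
    """Scaled dot-product attention with an additive structural bias.

    Hypothetical illustration, not the method from arXiv:2601.09805v1.
    queries, keys, values: lists of equal-length float vectors, one per token.
    bias[i][j]: structural prior added to the logit for token i attending to
    token j. Use 0.0 for no preference, a positive value to encourage a link,
    and -math.inf to forbid it. Each row must keep at least one finite entry.
    Returns (outputs, weights).
    """
    d = len(queries[0])
    scale = 1.0 / math.sqrt(d)
    outputs, weights = [], []
    for i, q in enumerate(queries):
        logits = [
            scale * sum(qa * ka for qa, ka in zip(q, k)) + bias[i][j]
            for j, k in enumerate(keys)
        ]
        w = softmax(logits)
        # Output for token i is the attention-weighted sum of the value vectors.
        out = [
            sum(w[j] * values[j][c] for j in range(len(values)))
            for c in range(len(values[0]))
        ]
        outputs.append(out)
        weights.append(w)
    return outputs, weights


if __name__ == "__main__":
    NEG_INF = -math.inf
    # Hypothetical toy sequence: token 0 = premise "A", token 1 = rule "A -> B",
    # token 2 = conclusion "B". The assumed structure lets the conclusion
    # attend only to its two premises, not to itself.
    q = k = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    v = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
    bias = [
        [0.0, 0.0, 0.0],
        [0.0, 0.0, 0.0],
        [0.0, 0.0, NEG_INF],
    ]
    outs, ws = structured_attention(q, k, v, bias)
    print(ws[2])  # prints [0.5, 0.5, 0.0]: the forbidden self-link gets zero weight
```

The appeal of an additive bias is that it leaves the attention computation itself unchanged: the structure lives entirely in the `bias` matrix, so the same forward pass can run with or without it.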

Technical Architecture Innovations

The attention-aware intervention technique addresses a fundamental challenge in AGI development: how to build models that can perform complex reasoning tasks while maintaining analyzability and generalization capabilities. By embedding structural reasoning patterns directly into the neural network architecture, researchers are creating systems that can handle logical operations more efficiently.

This result is particularly significant because it indicates that sophisticated reasoning capabilities can be achieved through architectural innovation rather than only by scaling model size or training data. It suggests that AGI development may benefit more from targeted improvements to model design than from brute-force increases in compute.

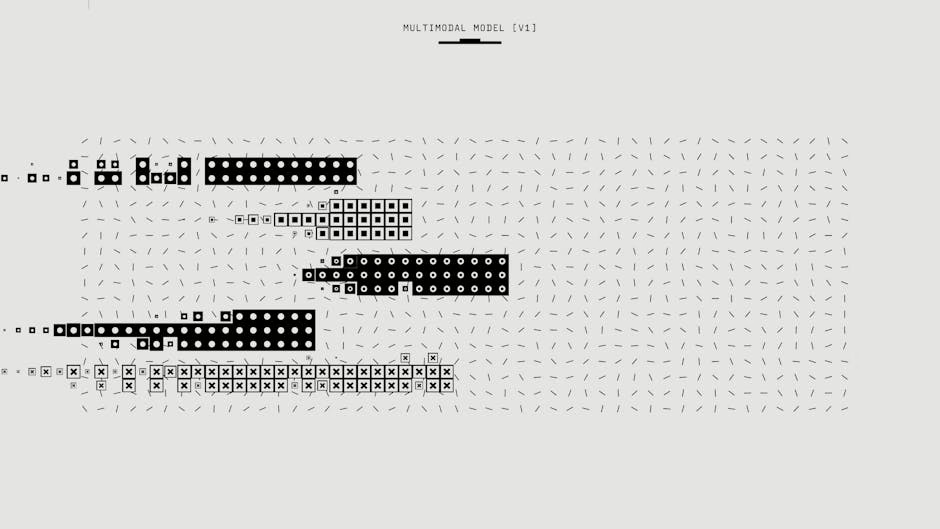

Multimodal Capabilities and Specialized Applications

While reasoning advances represent core AGI progress, specialized applications are also pushing the boundaries of AI capabilities. Recent developments in image generation models, such as more accurate rendering of complex text within images, show how domain-specific optimizations contribute to overall AI advancement.

These specialized models demonstrate increasingly sophisticated understanding of complex visual-textual relationships, a capability that will be essential for AGI systems that need to process and generate multimodal content with human-level proficiency.

Integration Challenges and Real-World Applications

As AI systems become more capable, integration into practical applications presents both opportunities and challenges. The emergence of AI-powered health analysis tools and coding assistants illustrates how advanced reasoning capabilities are being deployed in high-stakes domains.

However, the effectiveness of these applications depends heavily on the underlying reasoning architectures. Systems that can perform end-to-end logical reasoning without external dependencies are better positioned for reliable real-world deployment, particularly in applications where consistency and interpretability are crucial.

Implications for AGI Timeline

The development of non-interactive reasoning frameworks represents a significant milestone in AGI research because it addresses fundamental limitations in current AI architectures. By enabling models to perform complex logical operations internally, these advances reduce the gap between narrow AI applications and general intelligence capabilities.

The technical approach of embedding structural reasoning into attention mechanisms suggests that AGI development may accelerate through targeted architectural innovations rather than requiring entirely new computational paradigms. This could compress the timeline for achieving human-level reasoning across diverse domains.

Future Research Directions

The success of attention-aware intervention techniques opens several promising research avenues. Future work will likely focus on scaling these methods to handle increasingly complex reasoning tasks while maintaining computational efficiency.

Additionally, combining these reasoning advances with multimodal capabilities could lead to AI systems that can perform sophisticated analysis across text, images, and other data types within unified architectural frameworks. Such integration would represent a significant step toward the flexible, general-purpose intelligence that defines AGI.

Readers new to the underlying architecture may want to start with an introduction to how large language models actually work.