AI Safety Research Faces Funding Surge Amid Regulatory Pushback

AI safety research has reached a critical inflection point in 2026, with Silicon Valley investors spending millions to influence regulatory outcomes while governments worldwide implement new oversight frameworks. A super PAC funded by OpenAI’s Greg Brockman and Palantir cofounder Joe Lonsdale has launched aggressive campaigns against AI regulation advocates such as New York Assembly member Alex Bores. Meanwhile, the UK Department for Transport has reported up to 90% accuracy using Google Cloud’s Gemini models to analyze public consultations, saving up to £4 million annually.

According to Stanford’s 2026 AI Index, AI development continues to accelerate despite predictions that it would hit technical walls, with global AI data centers now consuming 29.6 gigawatts of power, enough to run New York state at peak demand. This rapid advancement has intensified debates over responsible AI deployment, algorithmic fairness, and the need for comprehensive safety protocols.

Industry Leaders Push Back Against Regulatory Frameworks

The tension between innovation and regulation has reached unprecedented levels, with tech industry leaders actively funding political campaigns to oppose AI oversight measures. According to WIRED, the Leading the Future super PAC has targeted politicians who support rigorous AI regulation, characterizing their proposals as “ideological and politically motivated legislation that would handcuff the country’s ability to lead on AI jobs and innovation.”

Key opposition tactics include:

- Financial lobbying: Multimillion-dollar campaigns targeting pro-regulation candidates

- Industry messaging: Framing safety measures as innovation barriers

- Political influence: Leveraging connections to shape policy discussions

Alex Bores, who worked at Palantir before entering politics, cosponsored New York’s RAISE Act, which became law in 2025. The legislation requires major AI firms to implement and publish safety protocols for their models, representing a significant shift toward mandatory transparency and accountability measures.

Government Adoption Demonstrates Practical AI Safety Implementation

Despite industry resistance, government agencies are successfully implementing responsible AI practices with measurable results. The UK Department for Transport’s Consultation Analysis Tool (CAT) exemplifies how safety-conscious AI deployment can deliver both efficiency gains and public benefit.

The CAT system’s achievements:

- Processing speed: Analyzes 100,000+ public responses in hours rather than months

- Accuracy rates: Achieves up to 90% accuracy in theme categorization

- Cost savings: Reduces analysis costs by up to £4 million annually

- Transparency: Maintains public consultation response timelines

Built on Google’s Vertex AI platform using Gemini models, the system demonstrates that responsible AI implementation doesn’t require sacrificing performance. The tool supported analysis of responses to the Integrated National Transport Strategy and driving test booking improvements, showing practical applications of ethical AI frameworks.
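To make the theme-categorization accuracy figure concrete, here is a minimal sketch of one common way such a number is computed: the share of responses where the model's theme matches a human-assigned theme. The source does not describe how the Department for Transport measured accuracy, so the method, the function names, and the sample labels below are all illustrative assumptions.

```python
from collections import Counter


def categorization_accuracy(predicted, reference):
    """Fraction of responses whose predicted theme matches the human label."""
    if len(predicted) != len(reference):
        raise ValueError("predicted and reference must have the same length")
    if not reference:
        raise ValueError("need at least one labeled response")
    matches = sum(p == r for p, r in zip(predicted, reference))
    return matches / len(reference)


def per_theme_accuracy(predicted, reference):
    """Accuracy broken down by human-assigned theme, to expose weak themes."""
    totals = Counter(reference)
    correct = Counter(r for p, r in zip(predicted, reference) if p == r)
    return {theme: correct[theme] / totals[theme] for theme in totals}


# Hypothetical sample: ten consultation responses coded by a human reviewer
# and by a model. These labels are invented for illustration only.
human_labels = ["cost", "safety", "access", "cost", "safety",
                "access", "cost", "safety", "cost", "access"]
model_labels = ["cost", "safety", "access", "cost", "safety",
                "cost", "cost", "safety", "cost", "access"]

overall = categorization_accuracy(model_labels, human_labels)
by_theme = per_theme_accuracy(model_labels, human_labels)
print(f"overall accuracy: {overall:.0%}")
for theme, acc in sorted(by_theme.items()):
    print(f"  {theme}: {acc:.0%}")
```

A per-theme breakdown matters because a high overall score can hide a theme that the model handles poorly, which is the kind of gap a human reviewer would want to spot-check.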

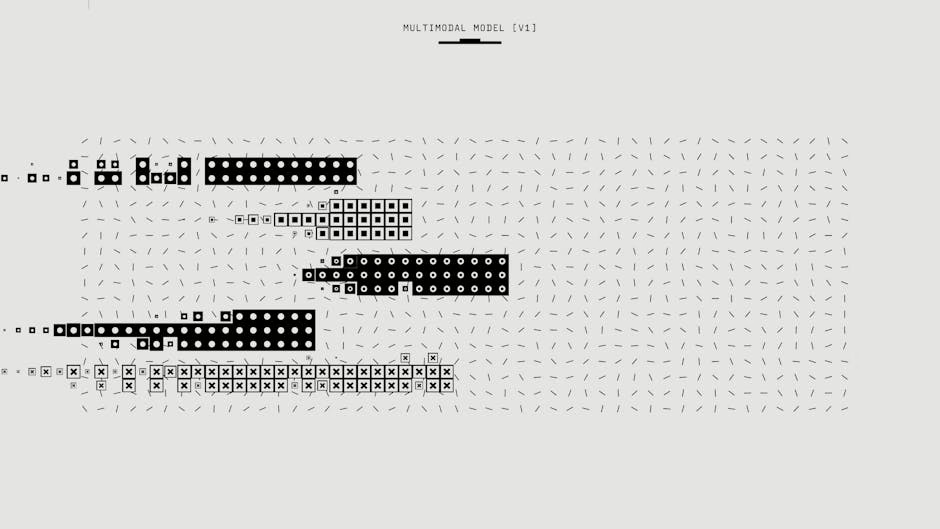

Enterprise AI Safety Requires Systematic Approach

As organizations move beyond pilot programs to production-scale AI deployment, safety considerations become paramount. According to VentureBeat, successful agentic AI implementation requires “clear goals, data-driven workflows, and an enterprise platform that balances autonomy, governance, observability, and flexibility with hard guardrails from day one.”

Critical safety implementation elements:

- Outcome-anchored design: Tying AI goals to measurable business KPIs

- Governance frameworks: Establishing clear oversight and accountability structures

- Bias monitoring: Continuous assessment of algorithmic fairness across diverse populations

- Risk assessment: Regular auditing of AI decision-making processes

The shift from experimental pilots to operational deployment in “grey zones” (areas where human handoffs and approvals traditionally occur) requires robust safety protocols. Organizations must translate high-level KPIs into specific agent objectives while maintaining human oversight and intervention capabilities.
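As a rough illustration of how a hard guardrail and human oversight can coexist in such a grey zone, the sketch below routes each action an agent proposes to one of three outcomes: blocked outright, escalated to a person, or approved automatically. The action names, the monetary threshold, and the `review` function are hypothetical and do not come from VentureBeat or any specific platform.

```python
from dataclasses import dataclass


@dataclass
class ProposedAction:
    name: str
    amount_gbp: float  # hypothetical measure of the action's impact


@dataclass
class Decision:
    action: ProposedAction
    status: str  # "auto_approved", "needs_human", or "blocked"
    reason: str


# Assumed policy values, chosen only for this example.
BLOCKED_ACTIONS = {"delete_records"}
AUTO_APPROVE_LIMIT_GBP = 500.0


def review(action):
    """Apply hard guardrails first, then decide whether a human must approve."""
    if action.name in BLOCKED_ACTIONS:
        return Decision(action, "blocked", "action is on the hard-block list")
    if action.amount_gbp > AUTO_APPROVE_LIMIT_GBP:
        return Decision(action, "needs_human", "impact exceeds auto-approve limit")
    return Decision(action, "auto_approved", "within auto-approve limit")


proposals = [
    ProposedAction("issue_refund", 120.0),
    ProposedAction("issue_refund", 2400.0),
    ProposedAction("delete_records", 0.0),
]

# Keeping every decision, including approvals, gives auditors a full trail.
audit_log = [review(p) for p in proposals]
for d in audit_log:
    print(f"{d.action.name} ({d.action.amount_gbp:.2f} GBP): {d.status} - {d.reason}")
```

Checking the block list before the threshold means no spending limit or approval path can override a prohibited action, which is the sense in which the guardrail is "hard".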

Global AI Competition Intensifies Safety Concerns

The geopolitical dimensions of AI development add complexity to safety research priorities. Stanford’s AI Index reveals that the US and China are nearly tied in AI model performance, with both nations investing heavily in capabilities that could have significant societal implications.

Key competitive dynamics:

- Performance parity: US and Chinese models show near-equivalent capabilities on standardized benchmarks

- Infrastructure concentration: Most AI data centers located in the US, with TSMC in Taiwan fabricating leading chips

- Resource consumption: Annual water use from GPT-4o alone may exceed the drinking water needs of 12 million people

- Supply chain vulnerabilities: Fragile chip manufacturing dependencies create systemic risks

This competitive pressure creates tension between rapid advancement and careful safety consideration. Nations face incentives to prioritize speed over comprehensive risk assessment, potentially compromising long-term safety outcomes for short-term competitive advantages.

Algorithmic Bias and Fairness Remain Critical Challenges

Despite technological advances, fundamental questions about algorithmic fairness and bias mitigation persist across AI applications. AI is being adopted faster than personal computers or the internet were, which means potentially biased systems are being deployed at unprecedented scale before comprehensive bias testing can occur.

Persistent bias challenges include:

- Training data representation: Ensuring diverse perspectives in model development

- Deployment context: Accounting for different demographic impacts across use cases

- Feedback loops: Preventing biased outputs from reinforcing discriminatory patterns

- Measurement standards: Developing consistent metrics for fairness assessment
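One widely used fairness metric is the demographic parity difference: the gap between the highest and lowest rates of favorable outcomes across groups. The sketch below is a minimal illustration of that single metric, not a complete fairness assessment; the group labels and outcomes are invented for the example.

```python
from collections import defaultdict


def selection_rates(outcomes, groups):
    """Share of favorable outcomes (1) within each group."""
    if len(outcomes) != len(groups):
        raise ValueError("outcomes and groups must have the same length")
    totals = defaultdict(int)
    positives = defaultdict(int)
    for outcome, group in zip(outcomes, groups):
        totals[group] += 1
        positives[group] += int(outcome)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(outcomes, groups):
    """Gap between the highest and lowest group selection rates (0 = parity)."""
    rates = selection_rates(outcomes, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical data: 1 = favorable decision, 0 = unfavorable.
outcomes = [1, 0, 1, 1, 0, 1, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = selection_rates(outcomes, groups)
gap = demographic_parity_difference(outcomes, groups)
print(f"selection rates: {rates}")
print(f"demographic parity difference: {gap:.2f}")
```

A single number like this is only a starting point. Different fairness metrics can conflict with one another, which is part of why consistent measurement standards remain an open problem.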

Government implementations like the UK’s CAT system provide valuable case studies for bias-aware AI deployment. Because they process public consultation responses that represent diverse citizen perspectives, these systems must account for varied communication styles, languages, and cultural contexts while maintaining analytical accuracy.

What This Means

The current state of AI safety research reflects a technology advancing faster than our ability to govern it effectively. While government agencies demonstrate successful responsible AI implementation with measurable benefits, industry resistance to regulatory frameworks creates concerning gaps in oversight.

The concentration of AI capabilities in a few companies and nations, combined with massive resource requirements and supply chain vulnerabilities, suggests that safety considerations extend beyond individual algorithms to systemic risks. The political battle over regulation indicates that technical solutions alone are insufficient: successful AI safety requires coordinated policy frameworks that balance innovation incentives with public protection.

Organizations implementing AI systems must prioritize systematic safety approaches from the beginning rather than retrofitting protections after deployment. The examples of successful government AI implementation provide blueprints for responsible deployment that maintains both performance and accountability.

FAQ

What are the main challenges in AI safety research today?

The primary challenges include managing rapid technological advancement that outpaces regulatory frameworks, addressing algorithmic bias across diverse populations, ensuring transparency in AI decision-making processes, and balancing innovation incentives with comprehensive risk assessment.

How are governments implementing responsible AI practices?

Governments are implementing mandatory safety protocols through legislation like New York’s RAISE Act, deploying AI systems with built-in transparency measures like the UK’s consultation analysis tool, and establishing oversight frameworks that require public disclosure of AI safety measures.

Why is industry resistance to AI regulation concerning?

Industry resistance through political lobbying and funding campaigns against regulation advocates creates gaps in oversight that could allow potentially harmful AI systems to be deployed without adequate safety measures, particularly given the rapid adoption rate and scale of current AI implementations.
