Four separate developments this week illustrate how quickly multimodal AI (systems that process video, audio, images, and text together) is moving from research labs into commercial products. From a startup founded by former OpenAI CTO Mira Murati previewing real-time voice-and-video models to a two-year-old company pricing video analysis at 80–90% below its rivals' rates, the field is seeing simultaneous pressure on both capability and cost.

Thinking Machines Previews Real-Time Interaction Models

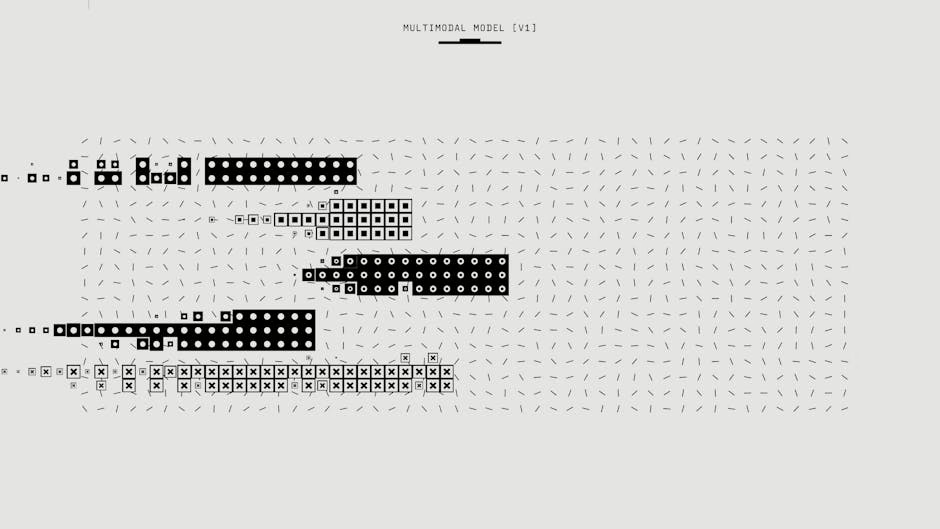

Thinking Machines Lab, founded by former OpenAI CTO Mira Murati and former OpenAI researcher John Schulman, announced a research preview of what it calls “interaction models” — a class of native multimodal systems that treats interactivity as a core architectural feature rather than a software wrapper bolted onto a language model.

According to Thinking Machines’ announcement blog post, the models communicate through a camera and microphone and are designed to understand continuous, messy human communication — pauses, interruptions, and tone shifts — rather than simply transcribing speech and feeding it to a chatbot backend. The company says this architecture reduces latency and improves benchmark performance compared to conventional pipeline designs.

The models are not yet publicly available. Thinking Machines said it will open a limited research preview in the coming months to collect feedback before a wider release. The company is positioning the work as a direct challenge to the dominant “turn-based” model of AI interaction, where a user submits input, waits, and receives output — a loop that breaks down in fluid, real-world conversation.

Murati framed the broader mission to Wired as keeping humans in the loop rather than automating them out: “At some point we will have super-intelligent machines. But we think that the best way to actually have many possible futures — good futures — is to keep humans in the loop.”

Perceptron Mk1 Undercuts Rivals on Video Analysis Pricing

Perceptron Inc., a two-year-old startup led by co-founder and CEO Armen Aghajanyan (formerly of Meta FAIR and Microsoft), released its flagship video analysis reasoning model, Mk1, at pricing that VentureBeat reported comes in roughly 80–90% below comparable offerings from Anthropic, OpenAI, and Google.

According to Perceptron’s announcement, API pricing is $0.15 per million input tokens and $1.50 per million output tokens, below the rates charged for Anthropic’s Claude Sonnet 4.5, OpenAI’s GPT-5, and Google’s Gemini 3.1 Pro.
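To make the quoted rates concrete, the sketch below estimates what a single job would cost at $0.15 and $1.50 per million tokens. The workload size is hypothetical, since the article does not say how many tokens a given length of video consumes, and the helper names are illustrative rather than part of any Perceptron API. The second helper simply inverts the reported 80–90% discount to show what the same job would cost at a comparator's price under that claim.

```python
from decimal import Decimal

# Mk1 API rates quoted in the article, in USD per one million tokens.
INPUT_USD_PER_MILLION = Decimal("0.15")
OUTPUT_USD_PER_MILLION = Decimal("1.50")
TOKENS_PER_MILLION = Decimal(1_000_000)


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate the API cost in USD for one job at the quoted rates."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    input_cost = Decimal(input_tokens) / TOKENS_PER_MILLION * INPUT_USD_PER_MILLION
    output_cost = Decimal(output_tokens) / TOKENS_PER_MILLION * OUTPUT_USD_PER_MILLION
    return input_cost + output_cost


def implied_comparator_cost_usd(cost: Decimal, discount: Decimal) -> Decimal:
    """Cost of the same job at a price that `cost` undercuts by `discount` (0 to 1)."""
    if not Decimal(0) <= discount < Decimal(1):
        raise ValueError("discount must be in [0, 1)")
    return cost / (Decimal(1) - discount)


# Hypothetical workload (an assumption, not a figure from the article):
# 20M input tokens of video and 1M output tokens of analysis.
job_cost = estimate_cost_usd(20_000_000, 1_000_000)
print(f"Mk1 estimate: ${job_cost:.2f}")

# Range implied by the reported 80-90% discount.
low = implied_comparator_cost_usd(job_cost, Decimal("0.80"))
high = implied_comparator_cost_usd(job_cost, Decimal("0.90"))
print(f"Implied comparator range: ${low:.2f} to ${high:.2f}")
```

For this assumed workload the estimate is $4.50, and the reported discount implies a comparator cost between $22.50 and $45.00. Actual bills depend on how each provider tokenizes video, which the article does not cover.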

The company spent 16 months developing what it describes as a “multi-modal recipe” built from scratch to handle physical-world complexity: cause-and-effect reasoning, object dynamics, and physics-based understanding. Benchmark results focus on spatial and video grounding tasks. A public demo is available for prospective enterprise customers.

The target use cases Perceptron highlights include:

- Security monitoring over live facility feeds

- Automated extraction of highlight clips from marketing video

- Identification of errors or continuity problems in recorded content

- Behavioral analysis in controlled research settings

Wirestock Raises $23M to Supply Multimodal Training Data

Wirestock, which pivoted from a stock photography distribution platform to an AI data supplier in 2023, closed a $23 million Series A round led by Nava Ventures, with participation from SBVP (co-founded by Sheryl Sandberg), Formula VC, and I2BF Ventures, TechCrunch reported.

The company now supplies datasets of images, videos, design assets, and gaming and 3D content to AI labs. Co-founder and CEO Mikayel Khachatryan told TechCrunch that Wirestock currently provides multimodal data to six of the largest foundation model makers, though he declined to name them. More than 700,000 artists and designers have signed up to the company’s platform to complete data collection tasks. The company has an annual run-rate revenue of $40 million and has paid out $15 million to contributors to date.

Khachatryan said the business shifted from selling existing library content to fulfilling custom data requests: “Initially, a lot of our deals were just selling what we had off the shelf, like our existing library. But then it turned into a lot of custom requests for content and data, and that created new opportunities for creators.”

Wirestock said it was transparent about the pivot and allowed photographers to opt out of the data supply business. Khachatryan said “the majority” of users stayed on as data providers, though he did not give a specific figure.

Auto-Rubric as Reward: A New Alignment Method for Multimodal Models

On the research side, a paper posted to arXiv introduces Auto-Rubric as Reward (ARR), a framework designed to improve how multimodal generative models are aligned with human preferences.

Current reinforcement learning from human feedback (RLHF) approaches typically reduce complex, multi-dimensional human judgments to scalar or pairwise labels — a compression that the paper’s authors argue collapses nuanced preferences into opaque proxies and creates vulnerabilities to reward hacking.

ARR takes a different approach: before any pairwise comparison, it externalizes a vision-language model’s internalized preference knowledge as prompt-specific rubrics — explicit, independently verifiable quality dimensions rather than a single score. The paper pairs ARR with a training method called Rubric Policy Optimization (RPO), which distills this structured evaluation into a binary reward signal to stabilize policy gradients during generative training.
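The general idea of scoring against explicit criteria and then collapsing the result into a binary reward can be sketched as follows. This is an illustration of the concept only, not the paper's algorithm: the rubric is hard-coded where a vision-language model would generate it, candidates are plain dictionaries of hypothetical pre-extracted attributes rather than images, and the count-and-compare rule with zero reward on ties is an assumption made for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# A candidate output, represented by hypothetical pre-extracted attributes.
Candidate = Dict[str, object]


@dataclass(frozen=True)
class Criterion:
    """One explicit, independently checkable quality dimension."""
    name: str
    check: Callable[[Candidate], bool]


def rubric_for_prompt(prompt: str) -> List[Criterion]:
    """Stand-in for rubric generation; hard-coded here for a single example prompt."""
    return [
        Criterion("contains a red bicycle", lambda c: c.get("object") == "red bicycle"),
        Criterion("set on a beach", lambda c: c.get("scene") == "beach"),
        Criterion("no visible artifacts", lambda c: not c.get("artifacts", False)),
    ]


def evaluate(candidate: Candidate, rubric: List[Criterion]) -> Dict[str, bool]:
    """Return a per-criterion verdict instead of a single opaque score."""
    return {criterion.name: criterion.check(candidate) for criterion in rubric}


def binary_rewards(a: Candidate, b: Candidate, rubric: List[Criterion]) -> Tuple[int, int]:
    """Collapse rubric verdicts into a 0/1 reward per candidate (assumed rule)."""
    passed_a = sum(evaluate(a, rubric).values())
    passed_b = sum(evaluate(b, rubric).values())
    if passed_a == passed_b:
        return (0, 0)  # Assumption: a tie gives neither candidate a reward.
    return (1, 0) if passed_a > passed_b else (0, 1)


prompt = "a red bicycle on a beach"
rubric = rubric_for_prompt(prompt)
candidate_a = {"object": "red bicycle", "scene": "beach", "artifacts": False}
candidate_b = {"object": "red bicycle", "scene": "street", "artifacts": True}

print(evaluate(candidate_b, rubric))
print(binary_rewards(candidate_a, candidate_b, rubric))
```

Here the first candidate satisfies all three criteria and the second satisfies one, so the rewards are (1, 0). The per-criterion dictionary is what distinguishes this from a scalar score: it records which dimension a candidate failed on, not only that it lost.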

On text-to-image generation and image editing benchmarks, ARR-RPO outperformed both pairwise reward models and VLM judges, according to the paper. The authors argue the key finding is that the bottleneck in multimodal alignment is not a lack of knowledge in existing models, but the absence of a structured interface for externalizing that knowledge.

The framework supports zero-shot deployment and few-shot conditioning on minimal labeled data — a practical advantage for teams without large preference datasets.

The Data Layer Becomes a Competitive Front

The Wirestock raise underscores a structural dynamic that has become increasingly visible across the multimodal space: the supply of high-quality, diverse training data is a distinct business, not just an input cost absorbed by model developers.

As vision-language models extend into video, 3D content, audio, and interactive settings, the data requirements grow more complex and harder to source at scale. Wirestock’s model, which pays a large network of human creators to produce and annotate custom content on demand, addresses a gap that web scraping cannot fill for specialized datasets or datasets that must be free of synthetic content.

At the same time, the pricing pressure Perceptron is applying to video analysis suggests that inference costs for multimodal models are following a similar trajectory to text-only LLMs: rapid commoditization as more entrants build specialized architectures optimized for specific modalities rather than general-purpose capability.

What This Means

The multimodal AI space is advancing along two distinct axes at once. On the capability axis, companies like Thinking Machines are pushing toward architectures that can handle the full messiness of real-time human interaction: not just processing video and audio, but responding to them fluidly while new inputs arrive. That is a harder problem than batch video analysis, and Thinking Machines has so far only announced a research preview, not shipped a product.

On the cost axis, Perceptron’s Mk1 pricing — if the benchmark performance holds up under enterprise workloads — represents the kind of displacement that tends to force incumbents to respond. An 80–90% price reduction is not a rounding error; it is the difference between a capability that is experimental and one that fits into a production budget.

The ARR paper adds a third dimension: alignment methods for multimodal models are still catching up to the sophistication of the models themselves. Scalar reward signals were always a crude proxy for human judgment; the move toward explicit, rubric-based evaluation is a more honest accounting of what human preferences actually look like across multiple dimensions.

Taken together, these four threads — real-time interaction, low-cost video reasoning, structured alignment, and a maturing data supply chain — suggest that multimodal AI is moving from a collection of impressive demos toward something closer to deployable infrastructure.

FAQ

What are interaction models, and how do they differ from standard voice AI?

Interaction models, as described by Thinking Machines Lab, are multimodal systems where real-time interactivity is built into the model architecture itself rather than handled by external software. Unlike standard voice interfaces that transcribe speech and pass text to a language model, interaction models natively process continuous audio and video, including pauses, interruptions, and tone changes, allowing them to respond while still receiving new input.

How does Perceptron Mk1’s pricing compare to major AI providers?

Perceptron prices Mk1 at $0.15 per million input tokens and $1.50 per million output tokens, according to its announcement. VentureBeat reported that this is roughly 80–90% below comparable offerings from Anthropic (Claude Sonnet 4.5), OpenAI (GPT-5), and Google (Gemini 3.1 Pro). The model is available via API and through a public demo.

What is Auto-Rubric as Reward (ARR) and why does it matter for multimodal AI?

ARR is a research framework that, instead of reducing human preferences directly to scalar or pairwise labels, first generates explicit, prompt-specific rubrics that break those preferences into independently verifiable quality dimensions. According to the arXiv paper, the approach reduces evaluation biases and works in zero-shot settings, and ARR paired with Rubric Policy Optimization outperformed both pairwise reward models and VLM judges on text-to-image generation and image editing benchmarks.

Sources

- Auto-Rubric as Reward: From Implicit Preferences to Explicit Multimodal Generative Criteria – arXiv AI

- Thinking Machines shows off preview of near-realtime AI voice and video conversation with new ‘interaction models’ – VentureBeat

- Perceptron Mk1 shocks with highly performant video analysis AI model 80-90% cheaper than Anthropic, OpenAI & Google – VentureBeat

- Wirestock raises $23M to supply creative multi-modal data to AI labs – TechCrunch

- Mira Murati Wants Her AI to ‘Keep Humans in the Loop’ – Wired