The artificial intelligence industry is openly divided over safety regulation, with major AI companies taking opposing stances on liability legislation. Anthropic has publicly opposed an Illinois bill backed by OpenAI that would shield AI laboratories from liability in cases of large-scale harm, exposing a rift in how leading companies approach responsible AI development.

Meanwhile, research suggesting that perfect AI alignment may be mathematically impossible continues to challenge safety researchers, while political candidates with tech backgrounds face industry opposition for supporting stricter regulations. These developments highlight the intersection of technical safety research, corporate interests, and democratic governance.

Corporate Divisions Over AI Liability Protection

The battle over Illinois Senate Bill 3444 has put two of America’s leading AI companies on opposite sides. OpenAI supports the legislation, which would protect AI laboratories from liability when their systems cause mass casualties or property damage exceeding $1 billion. Anthropic strongly opposes the measure, calling it a “get-out-of-jail-free card” that undermines accountability.

Cesar Fernandez, Anthropic’s head of US state and local government relations, emphasized the company’s position: “Good transparency legislation needs to ensure public safety and accountability for the companies developing this powerful technology, not provide a get-out-of-jail-free card against all liability.”

This disagreement reflects broader philosophical differences about how AI companies should balance innovation with responsibility. Key points of contention include:

- Liability thresholds: What level of harm should trigger corporate responsibility

- Transparency requirements: How much companies should disclose about their safety measures

- Regulatory oversight: The appropriate role of government in AI development

- Public accountability: Whether market forces or legal frameworks should drive safety improvements

The political implications extend beyond Illinois, as both companies are ramping up lobbying efforts nationwide, potentially creating a template for future regulatory battles.

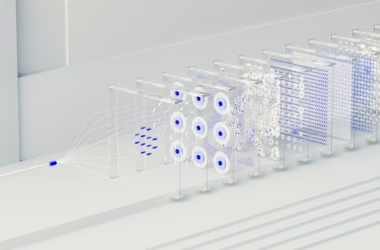

The Mathematical Limits of AI Alignment

Recent research argues that perfect AI alignment may be mathematically impossible, a finding with significant implications for safety research priorities. This theoretical limit does not make alignment research futile. It suggests instead that safety strategies must account for inherent uncertainties and trade-offs.

The mathematical constraints arise from several fundamental challenges:

- Value specification problems: Translating human values into mathematical objectives

- Optimization pressure: Advanced systems may find unexpected ways to satisfy stated goals

- Distributional shifts: AI behavior may change when deployed in new environments

- Emergent capabilities: Unexpected abilities that arise from scaling

These limitations have prompted researchers to focus on robust safety measures rather than perfect alignment. This includes developing systems that fail safely, implementing multiple layers of oversight, and creating mechanisms for human intervention when systems behave unexpectedly.
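To make the idea of failing safely under layered oversight concrete, here is a minimal sketch in Python. It is an illustration only, not a description of any company's system: the names `run_with_oversight`, `Decision`, and `SAFE_FALLBACK` are hypothetical, and each check stands in for whatever independent review layer a real deployment might use. A proposed action is released only if every layer approves it. A rejection, or a check that itself crashes, falls back to human review.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical fallback: hand the decision to a human reviewer.
SAFE_FALLBACK = "defer_to_human"


@dataclass
class Decision:
    action: str     # the action that will actually be taken
    approved: bool  # True only if every oversight layer approved
    reason: str     # short explanation for audit purposes


def run_with_oversight(
    proposed_action: str, checks: List[Callable[[str], bool]]
) -> Decision:
    """Pass a proposed action through each oversight layer in order.

    Any rejection, or any error inside a check, results in the safe
    fallback rather than the proposed action (fail-safe default).
    """
    for index, check in enumerate(checks):
        try:
            approved = check(proposed_action)
        except Exception as exc:
            # A broken check is treated as a rejection, never as approval.
            return Decision(
                SAFE_FALLBACK, False, f"check {index} raised {type(exc).__name__}"
            )
        if not approved:
            return Decision(SAFE_FALLBACK, False, f"check {index} rejected the action")
    return Decision(proposed_action, True, "all checks passed")
```

The design choice worth noting is the default: when anything is uncertain, the sketch withholds the action and escalates to a person instead of proceeding.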

The acknowledgment of mathematical limits has also influenced regulatory discussions, with policymakers increasingly recognizing that safety cannot be guaranteed through technical means alone.

Political Resistance to Tech Industry Veterans

The case of Alex Bores, a former Palantir employee running for Congress, illustrates how the tech industry is actively opposing political candidates who support stricter AI regulation. Despite his background in computer science and experience at a major tech company, Bores faces significant opposition from Silicon Valley leaders due to his regulatory stance.

According to Wired, a super PAC called Leading the Future—funded by OpenAI’s Greg Brockman, Palantir cofounder Joe Lonsdale, and Andreessen Horowitz—has launched an aggressive campaign against Bores’ congressional bid.

Bores cosponsored New York’s RAISE Act, which requires major AI firms to:

- Implement safety protocols for their models

- Publish transparency reports about their safety measures

- Submit to regulatory oversight for high-risk applications

- Maintain audit trails for system decisions

The industry’s opposition to candidates like Bores raises important questions about democratic governance of emerging technologies. When tech companies spend millions to influence elections based on candidates’ regulatory positions, it highlights the tension between corporate interests and public accountability.

Bias and Fairness in AI Safety Research

AI safety research increasingly focuses on addressing systemic bias and ensuring fairness across different populations. This work has revealed that safety and fairness are interconnected challenges that cannot be addressed separately.

Current research priorities include:

Algorithmic Audit Frameworks

- Bias detection methodologies for different types of AI systems

- Fairness metrics that account for intersectional identities

- Continuous monitoring systems for deployed AI applications

- Stakeholder engagement processes for affected communities

Risk Assessment Tools

- Harm prediction models that identify potential negative impacts

- Vulnerability assessments for different user groups

- Impact evaluation frameworks for measuring real-world effects

- Mitigation strategies for identified risks

The challenge lies in balancing multiple, sometimes competing objectives while maintaining system performance. Researchers are developing techniques that optimize for fairness constraints alongside traditional performance metrics, though this often requires accepting trade-offs in overall system accuracy.
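The trade-off described above can be illustrated with a small, self-contained Python sketch. It uses demographic parity, one common fairness metric among several, chosen here as an assumption rather than something the research discussed above prescribes. The data is a made-up toy example, and the function names are hypothetical.

```python
from typing import Dict, Sequence


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Fraction of predictions that match the true labels."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def selection_rates(y_pred: Sequence[int], groups: Sequence[str]) -> Dict[str, float]:
    """Share of positive predictions (label 1) within each group."""
    totals: Dict[str, int] = {}
    positives: Dict[str, int] = {}
    for pred, group in zip(y_pred, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if pred == 1 else 0)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(y_pred: Sequence[int], groups: Sequence[str]) -> float:
    """Largest difference in selection rate between any two groups (0 = parity)."""
    rates = selection_rates(y_pred, groups).values()
    return max(rates) - min(rates)


# Toy data (invented for illustration): eight people in two groups.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
y_true = [1, 1, 1, 0, 0, 0, 0, 1]

# Model A reproduces the labels exactly; Model B equalizes selection rates.
pred_a = [1, 1, 1, 0, 0, 0, 0, 1]
pred_b = [1, 1, 0, 0, 0, 0, 1, 1]

for name, pred in [("A", pred_a), ("B", pred_b)]:
    print(name, accuracy(y_true, pred), demographic_parity_gap(pred, groups))
# A 1.0 0.5
# B 0.75 0.0
```

In this toy case, Model A is perfectly accurate but selects group "a" three times as often as group "b", while Model B closes the gap at the cost of two misclassifications. Real audits would use larger samples and typically more than one metric.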

Regulatory Frameworks and Policy Implications

The patchwork of emerging AI regulations reflects the complexity of governing rapidly evolving technology. Different jurisdictions are taking varied approaches, creating challenges for companies operating across multiple markets.

Key regulatory trends include:

- Transparency requirements: Mandating disclosure of AI system capabilities and limitations

- Safety standards: Establishing minimum requirements for high-risk applications

- Liability frameworks: Determining responsibility for AI-caused harm

- Democratic oversight: Ensuring public input in AI governance decisions

The European Union’s AI Act provides one model, emphasizing risk-based regulation and fundamental rights protection. However, the US approach remains fragmented, with state-level initiatives like New York’s RAISE Act filling gaps in federal policy.

Industry resistance to regulation often centers on competitiveness and compliance costs. Companies argue that overly restrictive rules could disadvantage domestic firms relative to international competitors operating under more permissive rules.

What This Means

The current landscape of AI safety research reveals fundamental tensions between technical possibilities, corporate interests, and democratic governance. If perfect alignment is mathematically out of reach, as recent research suggests, society must develop robust institutions for managing AI risks rather than relying solely on technical solutions.

Corporate divisions over liability protection indicate that the AI industry lacks consensus on basic questions of responsibility and accountability. The dispute also plays out in elections, where industry-funded groups oppose candidates who support stricter regulation, raising concerns about corporate influence on democratic processes.

The focus on bias and fairness in safety research represents progress toward more inclusive AI development, but implementation remains challenging. Regulatory frameworks are emerging but remain fragmented and sometimes contradictory.

Moving forward, effective AI governance will likely require:

- Multi-stakeholder collaboration involving companies, researchers, policymakers, and affected communities

- Adaptive regulatory frameworks that can evolve with technological capabilities

- International coordination to prevent regulatory arbitrage

- Democratic oversight mechanisms that resist corporate capture

FAQ

Q: Why might perfect AI alignment be mathematically impossible?

A: Perfect alignment faces fundamental constraints, including the difficulty of specifying human values as mathematical objectives, optimization pressure that leads to unexpected solutions, and the challenge of accounting for every scenario an AI system might encounter.

Q: How do AI companies differ on liability for AI-caused harm?

A: OpenAI supports Illinois Senate Bill 3444, which would shield AI laboratories from liability for catastrophic harm, while Anthropic opposes the bill, arguing that it undermines accountability. This reflects broader disagreements about balancing innovation with responsibility.

Q: What role should former tech employees play in regulating AI?

A: Former tech employees bring valuable technical expertise to policymaking, but face industry opposition when supporting stricter regulations. Their insider knowledge can inform better policy, though potential conflicts of interest require careful management.
