Startups are pivoting from trillion-parameter models to efficient architectures as compute costs force a rethink of AI scaling strategies. This week saw three major developments: Zyphra’s 8-billion-parameter ZAYA1-8B reportedly competing with GPT-5-High, Subquadratic claiming 1,000x efficiency gains, and Google releasing multi-token prediction drafters that can triple inference speed.

Smaller Models Challenge the Parameter Race

Zyphra released ZAYA1-8B, a mixture-of-experts model with 8 billion parameters but only 760 million active during inference. According to VentureBeat, the model achieves competitive performance against GPT-5-High and DeepSeek-V3.2 despite being orders of magnitude smaller.

The model uses a sparse architecture in which only a few expert networks activate for each token, sharply reducing the computation spent per token. Zyphra trained ZAYA1-8B entirely on AMD Instinct MI300 GPUs, demonstrating that alternatives to NVIDIA’s hardware can produce competitive results.

Key specifications:

- Total parameters: 8 billion

- Active parameters: 760 million

- License: Apache 2.0

- Availability: Open source on Hugging Face

The model’s “intelligence density” stems from innovations spanning tokenization, attention mechanisms, and training methodologies. Unlike dense models, which use every parameter for every token, ZAYA1-8B’s mixture-of-experts design routes each token through a small subset of specialized sub-networks.
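To make the routing idea concrete, here is a minimal sketch of generic softmax-gated top-k routing for a single token. It is not ZAYA1-8B’s actual router: the expert count, `k=2`, the toy scaling “experts”, and the router weights are all hypothetical stand-ins chosen so the selection is easy to follow.

```python
import math
from typing import Callable, List, Tuple

Vector = List[float]

def softmax(xs: List[float]) -> List[float]:
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def make_expert(scale: float) -> Callable[[Vector], Vector]:
    # Hypothetical toy expert: a real expert is a learned feed-forward network.
    return lambda vec: [scale * v for v in vec]

def moe_layer(token: Vector, router_weights: List[Vector],
              experts: List[Callable[[Vector], Vector]], k: int = 2) -> Tuple[Vector, List[int]]:
    """Route one token to its top-k experts and return the gate-weighted mix of their outputs."""
    # One router logit per expert: dot product of the token with that expert's router row.
    logits = [sum(w * t for w, t in zip(row, token)) for row in router_weights]
    top_k = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:k]
    # Softmax over the selected experts only, so their gates sum to 1.
    gates = softmax([logits[i] for i in top_k])
    out = [0.0] * len(token)
    for gate, i in zip(gates, top_k):
        expert_out = experts[i](token)  # only these k experts run; the rest stay idle
        out = [o + gate * e for o, e in zip(out, expert_out)]
    return out, top_k

# Usage with hypothetical values: 8 toy experts, a 4-dimensional token,
# and a router that strongly prefers experts 3 and 5.
experts = [make_expert(i + 1) for i in range(8)]
router = [[0.0] * 4 for _ in range(8)]
router[3] = [1.0] * 4
router[5] = [0.5] * 4
out, chosen = moe_layer([1.0, 1.0, 1.0, 1.0], router, experts, k=2)
print(chosen)  # [3, 5]
```

Only two of the eight expert functions execute for this token, which is the source of the savings. By the article’s figures, ZAYA1-8B’s active share is 760 million of 8 billion parameters, roughly 9.5%.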

Subquadratic Claims Breakthrough Architecture

Miami startup Subquadratic emerged from stealth claiming to solve the quadratic scaling problem that has limited transformer architectures since 2017. The company’s SubQ 1M-Preview model allegedly processes 12 million tokens with 1,000x less attention compute than frontier models.
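Subquadratic has not published its method, so the arithmetic below says nothing about how SubQ works. It only shows, assuming a dense self-attention baseline (an assumption; the company compares against “frontier models” without specifying their attention variant), why quadratic cost dominates at long context and what a 1,000x reduction would amount to.

```python
import math

def dense_attention_scores(n_tokens: int) -> int:
    """Query-key scores one dense self-attention head computes per layer: n * n."""
    return n_tokens * n_tokens

def equivalent_dense_context(n_tokens: int, reduction: float) -> float:
    """Context length whose dense attention costs as much as n_tokens after a given reduction."""
    return math.sqrt(dense_attention_scores(n_tokens) / reduction)

for n in (128_000, 1_000_000, 12_000_000):
    print(f"{n:>12,} tokens -> {dense_attention_scores(n):.2e} scores per head per layer")

# Against a dense baseline, a 1,000x cut at 12M tokens still leaves the score
# budget of dense attention over roughly 379,000 tokens.
print(f"{equivalent_dense_context(12_000_000, 1_000):,.0f}")
```

Going from 1 million to 12 million tokens multiplies dense attention work by 144, which is why long-context claims hinge on escaping the quadratic term.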

According to VentureBeat, the company raised $29 million in seed funding at a $500 million valuation from investors including Tinder co-founder Justin Mateen and former SoftBank Vision Fund partner Javier Villamizar.

The AI research community has responded with skepticism. Researchers questioned why a model claiming such dramatic efficiency improvements requires an early-access program rather than open deployment. Some called the benchmarks “suspiciously perfect” and demanded independent verification.

Subquadratic’s claims:

- Context length: 12 million tokens

- Efficiency gain: 1,000x reduction in attention compute

- Architecture: Fully subquadratic scaling

- Products: API, coding agent, search tool

The company has not published technical papers or allowed independent researchers to validate its architecture. This opacity has drawn comparisons to previous AI overhype cycles, though some researchers argue the work deserves serious evaluation.

Google Accelerates Gemma 4 with Speculative Decoding

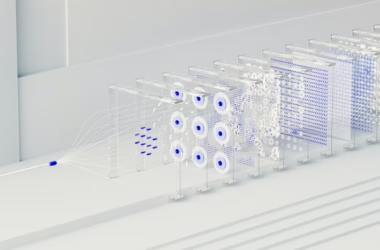

Google released multi-token prediction (MTP) drafters for its Gemma 4 model family, achieving up to 3x inference speedup without quality degradation. According to Google’s blog post, the technique addresses memory bandwidth bottlenecks that limit standard LLM inference.

Speculative decoding pairs a lightweight drafter model with the full Gemma 4 model. The drafter cheaply proposes several future tokens, and the main model then checks all of them in a single forward pass, using compute that would otherwise sit idle while it waits on memory. The main model accepts the drafted tokens it agrees with and corrects the first one it rejects.
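The draft-then-verify loop can be sketched with toy stand-in models. This assumes greedy (argmax) decoding, where accepting only tokens the main model would have chosen anyway makes the output identical to ordinary decoding; Google’s drafters and their exact acceptance rule for sampled outputs are not reproduced here, and `toy_target`, `toy_drafter`, and `k` are hypothetical.

```python
from typing import Callable, List

# A "model" here is any function mapping a token prefix to its greedy (argmax) next token.
NextToken = Callable[[List[int]], int]

def greedy_decode(target: NextToken, prompt: List[int], n_new: int) -> List[int]:
    """Baseline: one target-model step per generated token."""
    seq = list(prompt)
    for _ in range(n_new):
        seq.append(target(seq))
    return seq

def speculative_decode(target: NextToken, drafter: NextToken, prompt: List[int],
                       n_new: int, k: int = 4) -> List[int]:
    """Greedy speculative decoding: the drafter proposes k tokens, the target verifies them."""
    seq = list(prompt)
    goal = len(prompt) + n_new
    while len(seq) < goal:
        # 1. The cheap drafter proposes k tokens autoregressively.
        draft: List[int] = []
        for _ in range(k):
            draft.append(drafter(seq + draft))
        # 2. The target checks each drafted position. A real system scores all k
        #    positions in one batched forward pass; this loop computes the same predictions.
        accepted: List[int] = []
        for tok in draft:
            expected = target(seq + accepted)
            if tok != expected:
                accepted.append(expected)  # first mismatch: keep the target's token, drop the rest
                break
            accepted.append(tok)
        else:
            accepted.append(target(seq + accepted))  # all k accepted: one bonus token from the target
        seq.extend(accepted)
    return seq[:goal]

# Hypothetical toy models: the drafter agrees with the target except after multiples of 5.
def toy_target(prefix: List[int]) -> int:
    return (prefix[-1] * 3 + 1) % 17

def toy_drafter(prefix: List[int]) -> int:
    guess = toy_target(prefix)
    return guess if prefix[-1] % 5 else (guess + 1) % 17

print(speculative_decode(toy_target, toy_drafter, [2], 12) == greedy_decode(toy_target, [2], 12))  # True
```

Every token that reaches the output is one the target itself predicted, so a bad drafter can only slow things down, never change the result. That is the sense in which speculative decoding is lossless.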

Performance improvements:

- Speed increase: Up to 3x tokens per second

- Quality impact: No degradation in output

- Framework support: LiteRT-LM, MLX, Hugging Face Transformers, vLLM

- Model compatibility: Entire Gemma 4 family

The approach targets a fundamental inefficiency in transformer inference: during token-by-token generation, processors spend most of their time moving parameters from memory rather than computing. Because the main model can verify several drafted tokens in one pass over its weights, speculative decoding yields more tokens per memory transfer and makes better use of available compute.
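A back-of-the-envelope sketch shows both halves of this argument. The hardware figures (8 billion parameters, 16-bit weights, 1 TB/s memory bandwidth) are hypothetical, the bound ignores KV-cache traffic, and the tokens-per-pass formula rests on the simplifying assumption, common in the speculative decoding literature, that each drafted token is accepted independently with probability `alpha`. Drafter cost is also ignored.

```python
def memory_bound_tokens_per_sec(n_params: float, bytes_per_param: float,
                                bandwidth_bytes_per_sec: float) -> float:
    """Upper bound for batch-1 decoding if every step streams all weights from memory once."""
    return bandwidth_bytes_per_sec / (n_params * bytes_per_param)

def expected_tokens_per_verify(alpha: float, k: int) -> float:
    """Expected tokens per target pass with k drafted tokens, each accepted
    independently with probability alpha, plus the target's own correction or bonus token."""
    if alpha == 1.0:
        return k + 1.0
    return (1 - alpha ** (k + 1)) / (1 - alpha)

# Hypothetical numbers: 8B parameters, 2 bytes each, 1 TB/s of memory bandwidth.
baseline = memory_bound_tokens_per_sec(8e9, 2, 1e12)
print(f"baseline bound: {baseline:.1f} tokens/s")

# Hypothetical drafter quality: 80% per-token acceptance, 4 drafted tokens per pass.
per_pass = expected_tokens_per_verify(alpha=0.8, k=4)
print(f"tokens per target pass: {per_pass:.2f}")
```

Since each verification pass costs roughly one weight transfer, producing about 3.4 tokens per pass instead of one is how a memory-bound model can approach the multi-x speedups Google reports, before subtracting the drafter’s own cost.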

Training Costs Drive Architectural Innovation

The shift toward efficiency reflects mounting economic pressure in AI development. An analysis in Towards Data Science shows that reasoning models like GPT-5.5 and OpenAI’s o1 series generate hidden reasoning tokens that dramatically increase compute costs without appearing in user responses.

Inference scaling allows models to spend more compute on each response, checking logic and iterating toward better answers. However, this creates a cost-quality-latency triangle where teams must balance competing priorities:

- Finance teams monitor shrinking margins from high token costs

- Infrastructure engineers manage latency to prevent timeouts

- Product managers weigh answer quality against response delays
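The finance-side pressure is easy to see with a rough per-request calculation. This sketch assumes reasoning tokens are billed at the output-token rate, and the prices and token counts are hypothetical, not any provider’s actual figures.

```python
from dataclasses import dataclass

@dataclass
class Usage:
    input_tokens: int
    reasoning_tokens: int  # hidden from the user, but still generated and billed
    output_tokens: int

def request_cost(u: Usage, input_price_per_m: float, output_price_per_m: float) -> float:
    """Dollar cost of one request, assuming reasoning tokens are billed as output tokens."""
    billed_output = u.reasoning_tokens + u.output_tokens
    return (u.input_tokens * input_price_per_m + billed_output * output_price_per_m) / 1_000_000

# Hypothetical prices ($ per million tokens) and token counts for the same visible answer.
plain = Usage(input_tokens=2_000, reasoning_tokens=0, output_tokens=500)
reasoning = Usage(input_tokens=2_000, reasoning_tokens=8_000, output_tokens=500)
for name, u in (("plain", plain), ("reasoning", reasoning)):
    print(name, f"${request_cost(u, 1.25, 10.0):.4f}")
```

With these assumed numbers the user sees the same 500-token answer, but the request with 8,000 hidden reasoning tokens costs more than eleven times as much.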

Organizations are developing task taxonomies to route simple queries to efficient models while reserving compute budgets for high-stakes reasoning. This keeps reasoning models from spending compute on routine tasks.
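A task-taxonomy router can be as simple as a lookup table with an escalation rule. The sketch below is illustrative only: the task labels, tier names, and the `high_stakes` flag are hypothetical, and real deployments often combine such rules with learned classifiers.

```python
from enum import Enum

class Tier(Enum):
    SMALL = "efficient-model"      # hypothetical model identifiers
    REASONING = "reasoning-model"

# Hypothetical taxonomy mapping task labels to the cheapest tier allowed to serve them.
TAXONOMY = {
    "faq": Tier.SMALL,
    "classification": Tier.SMALL,
    "summarization": Tier.SMALL,
    "code_review": Tier.REASONING,
    "financial_analysis": Tier.REASONING,
}

def route(task_type: str, high_stakes: bool = False) -> Tier:
    """Send routine tasks to the efficient tier; escalate high-stakes or unrecognized tasks."""
    if high_stakes:
        return Tier.REASONING
    return TAXONOMY.get(task_type, Tier.REASONING)

print(route("faq"), route("faq", high_stakes=True), route("contract_redlining"))
```

Defaulting unrecognized tasks to the reasoning tier trades some cost for safety; a team more worried about margins could flip that default and escalate only on explicit signals.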

Architecture Fundamentals Drive Performance

Engineering guidance from Towards Data Science emphasizes that modern LLM performance depends on understanding the full pipeline from tokenization to evaluation. Key architectural components include:

Tokenization strategies that convert text into sequences of integer token IDs the model can process. Subword tokenization methods like Byte-Pair Encoding balance vocabulary size against the number of tokens needed to represent a given text.
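Classic BPE training fits in a few lines: start from characters, repeatedly merge the most frequent adjacent pair, and record the merges. This minimal sketch assumes character-level starting symbols, no end-of-word marker, and first-seen tie-breaking; production tokenizers (byte-level BPE, pre-tokenization, special tokens) differ in many details.

```python
from collections import Counter
from typing import List, Tuple

def get_pair_counts(words: List[List[str]]) -> Counter:
    """Count adjacent symbol pairs across all words."""
    pairs: Counter = Counter()
    for symbols in words:
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += 1
    return pairs

def merge_pair(symbols: List[str], pair: Tuple[str, str]) -> List[str]:
    """Replace every occurrence of the pair with a single merged symbol."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return out

def train_bpe(corpus: List[str], num_merges: int) -> List[Tuple[str, str]]:
    """Learn up to num_merges merges; ties go to the pair seen first."""
    words = [list(w) for w in corpus]
    merges: List[Tuple[str, str]] = []
    for _ in range(num_merges):
        pairs = get_pair_counts(words)
        if not pairs:
            break
        best = max(pairs, key=pairs.get)
        merges.append(best)
        words = [merge_pair(w, best) for w in words]
    return merges

def apply_merges(word: str, merges: List[Tuple[str, str]]) -> List[str]:
    """Tokenize a word by replaying the learned merges in order."""
    symbols = list(word)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return symbols

merges = train_bpe(["low", "low", "lower", "lowest"], 3)
print(merges)                          # [('l', 'o'), ('lo', 'w'), ('low', 'e')]
print(apply_merges("lowest", merges))  # ['lowe', 's', 't']
```

More merges mean a larger vocabulary but shorter token sequences, which is the trade-off the paragraph above describes. A final step (not shown) maps each symbol to an integer ID.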

Attention mechanisms that let models weight the most relevant parts of the input. Because attention computation grows quadratically with sequence length, it drives much of the current efficiency research.
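Where the quadratic term comes from is visible in a bare-bones implementation of scaled dot-product attention. This sketch assumes a single head with no masking, and `Q`, `K`, `V` already projected; it uses pure Python so the score matrix is explicit.

```python
import math
from typing import List

Matrix = List[List[float]]

def softmax(row: List[float]) -> List[float]:
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    s = sum(exps)
    return [e / s for e in exps]

def attention(Q: Matrix, K: Matrix, V: Matrix) -> Matrix:
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d)) V, one head, no mask."""
    d = len(K[0])
    # Score matrix is n_queries x n_keys. For self-attention over n tokens that is
    # n * n dot products of length d: the O(n^2 * d) cost that grows quadratically.
    scores = [[sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in K] for q in Q]
    weights = [softmax(row) for row in scores]
    # Each output row is a weighted average of the value vectors.
    return [[sum(w * v[j] for w, v in zip(w_row, V)) for j in range(len(V[0]))]
            for w_row in weights]

# Two identical keys get equal weight, so the output is the average of the values.
print(attention([[1.0, 0.0]], [[1.0, 1.0], [1.0, 1.0]], [[2.0, 0.0], [4.0, 2.0]]))  # [[3.0, 1.0]]
```

Doubling the number of tokens doubles both the rows and the columns of `scores`, quadrupling the work; that is the scaling subquadratic approaches try to avoid.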

Training methodologies, including reinforcement learning from human feedback (RLHF) and direct preference optimization (DPO), that align model outputs with human preferences.
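DPO replaces RLHF’s separate reward model with a loss computed directly on preference pairs. Below is a scalar sketch of that loss for one pair; it assumes the summed log-probabilities of each response under the policy and a frozen reference model are already computed, and `beta=0.1` is an illustrative default, not a recommended setting.

```python
import math

def neg_log_sigmoid(z: float) -> float:
    """Numerically stable -log(sigmoid(z))."""
    if z >= 0:
        return math.log1p(math.exp(-z))
    return -z + math.log1p(math.exp(z))

def dpo_loss(policy_logp_chosen: float, policy_logp_rejected: float,
             ref_logp_chosen: float, ref_logp_rejected: float, beta: float = 0.1) -> float:
    """DPO loss for one preference pair from summed per-response log-probabilities."""
    # How much more (in log space) the policy favors each response than the reference does.
    chosen_shift = policy_logp_chosen - ref_logp_chosen
    rejected_shift = policy_logp_rejected - ref_logp_rejected
    return neg_log_sigmoid(beta * (chosen_shift - rejected_shift))

print(dpo_loss(-10.0, -10.0, -10.0, -10.0))  # log(2) ≈ 0.693: policy still matches the reference
print(dpo_loss(-5.0, -15.0, -10.0, -10.0))   # smaller: policy now favors the preferred response
```

Minimizing this loss pushes the policy to raise the preferred response’s probability relative to the reference more than the rejected one’s, while `beta` controls how far it may drift from the reference.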

Successful LLM engineering requires understanding these components as an integrated system rather than isolated techniques. The current efficiency focus reflects maturation from pure scaling toward architectural sophistication.

What This Means

The AI industry is entering a post-scaling era where architectural innovation matters more than parameter count. Zyphra’s competitive performance with 760 million active parameters suggests that clever design can match brute-force approaches at a fraction of the cost.

Subquadratic’s dramatic claims, if validated, could reshape how AI systems scale with context length. However, the research community’s skeptical response highlights the need for transparent, reproducible results in an industry prone to overhype.

Google’s speculative decoding represents a more conservative but proven approach to efficiency gains. By addressing inference bottlenecks rather than claiming architectural breakthroughs, the technique offers immediate practical benefits.

For enterprises, these developments suggest a future where model selection involves sophisticated cost-benefit analysis rather than simply choosing the largest available model. Organizations will need frameworks for matching tasks to appropriate compute levels while managing infrastructure costs.

The efficiency focus also democratizes AI development by reducing hardware requirements. Smaller organizations can compete using efficient architectures instead of relying on massive GPU clusters for training and inference.

FAQ

What makes mixture-of-experts models more efficient than dense models?

Mixture-of-experts architectures activate only a few specific sub-networks for each token rather than the entire model. This reduces computation per token while preserving total model capacity, allowing ZAYA1-8B to use only 760 million of its 8 billion parameters during inference.

Why are researchers skeptical of Subquadratic’s efficiency claims?

The company claims 1,000x efficiency improvements without publishing technical details or allowing independent verification. The AI community has seen overhyped claims before, and extraordinary claims require extraordinary evidence in the form of peer review and reproducible results.

How does speculative decoding improve inference speed?

Speculative decoding uses a lightweight drafter model to propose several future tokens, which the main model then verifies in a single forward pass. Because standard decoding is limited by memory bandwidth rather than compute, that verification pass puts otherwise idle compute to work, yielding speedups of up to 3x by checking multiple candidates at once instead of generating them one at a time.